Abstract

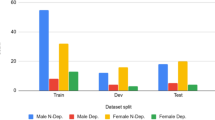

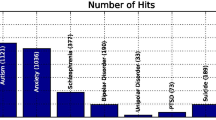

Early detection of depression remains a challenge. Current research on automatic depression detection mainly takes low-level features from the audio, text, or video of interview dialogue as input, ignoring the high-level features contained in the dialogue. We propose a multimodal depression detection method that extracts emotional and behavioral features from dialogue to detect early depression. Specifically, we design an emotional feature extraction module and a behavioral feature extraction module, whose outputs are fed into the depression detection network as high-level features. A weighted attention fusion module guides the learning of the text and audio modalities and predicts the final result. Experimental results on the public DAIC-WOZ dataset show that the extracted emotional and behavioral features effectively supply the high-level semantics missing from the network. Our proposed method improves the F1-score by 6% compared to traditional approaches. The experimental data also indicate the importance of the model's detection results in early depression detection. This technology has application value in professional fields such as caregiving, emotional interaction, psychological diagnosis, and treatment.
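The abstract does not specify how the weighted attention fusion module is built, so the following is only a minimal sketch of the general idea, not the authors' architecture. It assumes each modality has already been encoded into a fixed-length vector, and it scores each vector against a hypothetical learned `query` vector before combining them with softmax weights. The names `weighted_attention_fusion`, `text_feat`, `audio_feat`, and `query` are illustrative assumptions.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def weighted_attention_fusion(text_feat, audio_feat, query):
    """Fuse two equal-length feature vectors with softmax attention weights.

    `query` stands in for a learned parameter vector (an assumption made
    for this sketch); in a trained network it would be optimized jointly
    with the rest of the model.
    """
    if not (len(text_feat) == len(audio_feat) == len(query)):
        raise ValueError("all vectors must have the same length")

    # One scalar relevance score per modality: dot product with the query.
    scores = [
        sum(q * x for q, x in zip(query, feat))
        for feat in (text_feat, audio_feat)
    ]
    weights = softmax(scores)  # weights are positive and sum to 1

    # Weighted sum of the two modality vectors, element by element.
    fused = [
        weights[0] * t + weights[1] * a
        for t, a in zip(text_feat, audio_feat)
    ]
    return fused, weights


# Toy inputs, chosen only to exercise the function.
text_feat = [0.2, 0.8, -0.1]
audio_feat = [0.5, -0.3, 0.4]
query = [1.0, 0.5, 0.0]
fused, weights = weighted_attention_fusion(text_feat, audio_feat, query)
```

In this toy setup the text vector scores higher against `query` than the audio vector, so it receives the larger weight. A downstream classifier would then consume `fused` in place of either single-modality vector.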

P. Wang and B. Yang contributed equally to this work.

Acknowledgements

This work was supported by the Jiangsu Province Graduate Research and Practice Innovation Program (No. KYH21020530).

Copyright information

© 2024 The Author(s), under exclusive license to Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Wang, P., Yang, B., Wang, S., Zhu, X., Ni, R., Yang, C. (2024). Multimodal Depression Detection Network Based on Emotional and Behavioral Features in Conversations. In: Lu, H., Cai, J. (eds) Artificial Intelligence and Robotics. ISAIR 2023. Communications in Computer and Information Science, vol 1998. Springer, Singapore. https://doi.org/10.1007/978-981-99-9109-9_44

DOI: https://doi.org/10.1007/978-981-99-9109-9_44

Publisher Name: Springer, Singapore

Print ISBN: 978-981-99-9108-2

Online ISBN: 978-981-99-9109-9

eBook Packages: Computer Science (R0)