Abstract

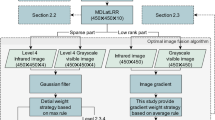

With the development of multi-source detectors, the fusion of infrared and visible images has attracted considerable research attention. Infrared imaging works around the clock and can clearly capture temperature-sensitive targets in low-light or no-light conditions, while visible imaging captures fine target detail under good lighting. Fusing the two combines the advantages of both imaging modalities. To retain more valuable scene information in the fused image, this paper proposes an infrared and visible image fusion method based on multi-scale Gaussian rolling guidance filter (MLRGF) decomposition. First, the MLRGF decomposes the infrared and visible images into three scale layers: a detail preservation layer, an edge preservation layer, and an energy base layer. Then, each layer is fused with a strategy suited to its properties: spatial-frequency-based, gradient-based, and energy-based fusion, respectively. Finally, the fused image is obtained by summing the three fused layers. Experimental results show that the proposed method achieves excellent results in both subjective and objective evaluation.
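The decompose, fuse, and sum pipeline described in the abstract can be illustrated with a minimal NumPy sketch. This is not the authors' implementation: the abstract does not give the decomposition formulas or the exact fusion rules, so the layer split (source minus Gaussian blur, Gaussian blur minus rolling guidance output, rolling guidance output), the choose-max and energy-weighted rules, and every function name and parameter value below are assumptions chosen for illustration. Inputs are assumed to be registered grayscale images stored as floats in [0, 1].

```python
import numpy as np

def box_mean(img, radius):
    """Local mean over a (2r+1) x (2r+1) window with edge padding."""
    h, w = img.shape
    padded = np.pad(img, radius, mode="edge")
    out = np.zeros((h, w), dtype=np.float64)
    for dy in range(2 * radius + 1):
        for dx in range(2 * radius + 1):
            out += padded[dy:dy + h, dx:dx + w]
    return out / (2 * radius + 1) ** 2

def gaussian_blur(img, sigma):
    """Separable Gaussian blur with edge padding."""
    radius = max(1, int(3 * sigma))
    x = np.arange(-radius, radius + 1)
    kernel = np.exp(-x ** 2 / (2 * sigma ** 2))
    kernel /= kernel.sum()
    padded = np.pad(img, radius, mode="edge")
    rows = np.apply_along_axis(np.convolve, 1, padded, kernel, mode="valid")
    return np.apply_along_axis(np.convolve, 0, rows, kernel, mode="valid")

def rolling_guidance(img, sigma_s=2.0, sigma_r=0.1, iterations=4):
    """Rolling guidance filter: Gaussian start, then joint bilateral passes
    that always filter the source image, guided by the previous output."""
    h, w = img.shape
    radius = max(1, int(2 * sigma_s))
    src = np.pad(img, radius, mode="edge")
    guide = gaussian_blur(img, sigma_s)
    for _ in range(iterations):
        g = np.pad(guide, radius, mode="edge")
        num = np.zeros((h, w))
        den = np.zeros((h, w))
        for dy in range(-radius, radius + 1):
            for dx in range(-radius, radius + 1):
                ys = slice(radius + dy, radius + dy + h)
                xs = slice(radius + dx, radius + dx + w)
                weight = np.exp(-(dy * dy + dx * dx) / (2 * sigma_s ** 2)
                                - (g[ys, xs] - guide) ** 2 / (2 * sigma_r ** 2))
                num += weight * src[ys, xs]
                den += weight
        guide = num / den  # den >= 1 because the centre weight is 1
    return guide

def decompose(img, sigma=2.0):
    """Assumed three-layer split; the layers sum back to the input."""
    blurred = gaussian_blur(img, sigma)
    base = rolling_guidance(img, sigma_s=sigma)
    return img - blurred, blurred - base, base  # detail, edge, base

def spatial_frequency_map(layer, radius=2):
    """Local spatial frequency from row and column first differences."""
    row_diff = np.diff(layer, axis=1, prepend=layer[:, :1])
    col_diff = np.diff(layer, axis=0, prepend=layer[:1, :])
    return np.sqrt(box_mean(row_diff ** 2 + col_diff ** 2, radius))

def gradient_map(layer, radius=2):
    """Local mean of the gradient magnitude."""
    gy, gx = np.gradient(layer)
    return box_mean(np.hypot(gx, gy), radius)

def fuse(ir, vis):
    """Fuse each layer with its own rule, then sum the fused layers."""
    d_ir, e_ir, b_ir = decompose(ir)
    d_vis, e_vis, b_vis = decompose(vis)
    # Detail layer: keep the pixel with the higher local spatial frequency.
    detail = np.where(spatial_frequency_map(d_ir) >= spatial_frequency_map(d_vis),
                      d_ir, d_vis)
    # Edge layer: keep the pixel with the stronger local gradient.
    edge = np.where(gradient_map(e_ir) >= gradient_map(e_vis), e_ir, e_vis)
    # Base layer: average weighted by local energy.
    en_ir, en_vis = box_mean(b_ir ** 2, 2), box_mean(b_vis ** 2, 2)
    w_ir = en_ir / (en_ir + en_vis + 1e-12)
    base = w_ir * b_ir + (1.0 - w_ir) * b_vis
    return detail + edge + base
```

Calling `fuse(ir, vis)` on two arrays of the same shape returns the fused image. Because the three assumed layers sum exactly to the source, fusing an image with itself reproduces it, which is a convenient sanity check when swapping in different fusion rules.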

Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Zhang, J., Xiang, P., Teng, X., Zhang, X., Zhou, H. (2022). Infrared and Visible Image Fusion Based on Multi-scale Gaussian Rolling Guidance Filter Decomposition. In: Berretti, S., Su, GM. (eds) Smart Multimedia. ICSM 2022. Lecture Notes in Computer Science, vol 13497. Springer, Cham. https://doi.org/10.1007/978-3-031-22061-6_6

DOI: https://doi.org/10.1007/978-3-031-22061-6_6

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-22060-9

Online ISBN: 978-3-031-22061-6

eBook Packages: Computer Science, Computer Science (R0)