Abstract

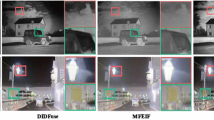

Image fusion technology has been widely used, and its effects have been analyzed under various settings. This paper proposes an image fusion method suitable for both infrared and grayscale visible images. First, the base and detail layers of each image are obtained through a multilayer image decomposition. For the base layer, we adopt a fusion method based on a gradient weight map to address the loss of feature details inherent in the average fusion strategy. For the detail layers, we use a fusion strategy based on weighted least squares to mitigate the impact of noise. A database covering various scenes is used to verify the robustness of the method, and the results are compared with those of other fusion methods using both subjective visual assessment and objective image quality metrics. The fusion results indicate that the proposed method not only reduces noise in the infrared images but also maintains the desired global contrast. As a result, the fusion process retrieves more feature details while preserving the structure of the feature areas.
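To make the pipeline described in the abstract concrete, here is a minimal NumPy sketch under stated assumptions. It is not the authors' implementation (their code is linked under Data availability), and the filters and weights are illustrative choices. Assumptions: the multilayer decomposition uses box filters whose radius doubles per level; the base-layer weight map is each source image's share of the smoothed gradient magnitude; and the detail rule is a per-pixel weighted least-squares problem that minimises, at every pixel p, (D_p − M_p)² + λ·a_p·(D_p − V_p)², where M is the max-absolute fusion of the two detail layers, V is the visible detail layer, and a_p = 1/(local mean of |IR detail| + ε). Setting the derivative to zero gives D = (M + λaV)/(1 + λa), which pulls the result toward the visible detail wherever the infrared detail is weak and likely noise. All function names and parameters (`box_blur`, `decompose`, `gradient_weight`, `fuse_detail`, `fuse`, `lam`, `r`, `eps`) are hypothetical.

```python
import numpy as np


def box_blur(img, r):
    """Mean filter over a (2r+1) x (2r+1) window with edge padding (integral image)."""
    k = 2 * r + 1
    p = np.pad(img, r, mode="edge")
    s = np.pad(p.cumsum(0).cumsum(1), ((1, 0), (1, 0)))  # prepend a zero row and column
    h, w = img.shape
    total = s[k:k + h, k:k + w] - s[:h, k:k + w] - s[k:k + h, :w] + s[:h, :w]
    return total / (k * k)


def decompose(img, levels=3, r=2):
    """Split img into one coarse base layer and `levels` detail layers.

    Assumption: each level smooths the previous base with a box filter whose
    radius doubles, so base + sum(details) reproduces img exactly.
    """
    details, base = [], img
    for i in range(levels):
        smooth = box_blur(base, r * 2 ** i)
        details.append(base - smooth)
        base = smooth
    return base, details


def gradient_weight(a, b, r=3, eps=1e-12):
    """Per-pixel weight for image a: its share of the smoothed gradient magnitude."""
    ga = box_blur(np.hypot(*np.gradient(a)), r)
    gb = box_blur(np.hypot(*np.gradient(b)), r)
    return (ga + eps) / (ga + gb + 2 * eps)


def fuse_detail(d_ir, d_vis, lam=0.01, r=3, eps=1e-3):
    """Closed-form per-pixel minimiser of (D - M)^2 + lam * a * (D - d_vis)^2."""
    m = np.where(np.abs(d_ir) >= np.abs(d_vis), d_ir, d_vis)  # max-absolute initial fusion
    a = 1.0 / (box_blur(np.abs(d_ir), r) + eps)  # large where IR detail is weak (noise-like)
    return (m + lam * a * d_vis) / (1.0 + lam * a)


def fuse(ir, vis, levels=3):
    """Fuse two registered grayscale images with values in [0, 1]."""
    ir, vis = np.asarray(ir, dtype=float), np.asarray(vis, dtype=float)
    b_ir, ds_ir = decompose(ir, levels)
    b_vis, ds_vis = decompose(vis, levels)
    w = gradient_weight(ir, vis)  # assumption: weights computed from the source images
    fused = w * b_ir + (1.0 - w) * b_vis
    for d_ir, d_vis in zip(ds_ir, ds_vis):
        fused = fused + fuse_detail(d_ir, d_vis)
    return np.clip(fused, 0.0, 1.0)


rng = np.random.default_rng(0)
ir, vis = rng.random((64, 64)), rng.random((64, 64))
out = fuse(ir, vis)
print(out.shape)  # (64, 64)
```

Because the decomposition telescopes (the base plus all detail layers equals the input), fusing an image with itself returns it unchanged, which is a quick sanity check for any variant of the weight or detail rules.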


Data availability

The TNO Image Fusion Dataset that supports the findings of this study is available at https://figshare.com/articles/TNO_Image_Fusion_Dataset/1008029. This paper's data and code are available online at https://github.com/ChingCheTu/A-Multi-level-Optimal-Fusion-Algorithm-for-Infrared-and-Visible-Image.

Acknowledgements

This work was supported by the National Science and Technology Council, Taiwan, under Grant NSTC 110-2221-E-167-017.

Author information

Contributions

B-LJ provided the overall concept of the article and was responsible for most of the writing and structuring. C-CT participated in writing, reviewing, and editing the article, and was responsible for collecting and analyzing the data. B-LJ and C-CT provided technical support and resolved issues encountered during the experiments. B-LJ edited and proofread the entire article.

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Jian, BL., Tu, CC. Multi-level optimal fusion algorithm for infrared and visible image. SIViP 17, 4209–4217 (2023). https://doi.org/10.1007/s11760-023-02653-5
