Abstract

Background

Statistical tests of mediation are important for advancing implementation science; however, little research has examined the sample sizes needed to detect mediation in 3-level designs (e.g., organization, provider, patient) that are common in implementation research. Using a generalizable Monte Carlo simulation method, this paper examines the sample sizes required to detect mediation in 3-level designs under a range of conditions plausible for implementation studies.

Method

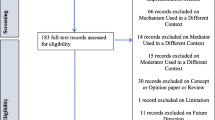

Statistical power was estimated for 17,496 3-level mediation designs in which the independent variable (X) resided at the highest cluster level (e.g., organization), the mediator (M) resided at the intermediate nested level (e.g., provider), and the outcome (Y) resided at the lowest nested level (e.g., patient). Designs varied by sample size per level, intraclass correlation coefficients of M and Y, effect sizes of the two paths constituting the indirect (mediation) effect (i.e., X→M and M→Y), and size of the direct effect. Power estimates were generated for all designs using two statistical models—conventional linear multilevel modeling of manifest variables (MVM) and multilevel structural equation modeling (MSEM)—for both 1- and 2-sided hypothesis tests.

Results

For 2-sided tests, statistical power to detect mediation was sufficient (≥0.8) in only 463 designs (2.6%) estimated using MVM and 228 designs (1.3%) estimated using MSEM; the minimum number of highest-level units needed to achieve adequate power was 40; the minimum total sample size was 900 observations. For 1-sided tests, 808 designs (4.6%) estimated using MVM and 369 designs (2.1%) estimated using MSEM had adequate power; the minimum number of highest-level units was 20; the minimum total sample was 600. At least one large effect size for either the X→M or M→Y path was necessary to achieve adequate power across all conditions.

Conclusions

While our analysis has important limitations, results suggest many of the 3-level mediation designs that can realistically be conducted in implementation research lack statistical power to detect mediation of highest-level independent variables unless effect sizes are large and 40 or more highest-level units are enrolled. We suggest strategies to increase statistical power for multilevel mediation designs and innovations to improve the feasibility of mediation tests in implementation research.

Background

The goal of implementation science is to improve the quality and effectiveness of health services by developing strategies that promote the adoption, implementation, and sustainment of empirically supported interventions in routine care [1]. Understanding the causal processes that influence healthcare professionals’ and participants’ behavior greatly facilitates this aim [2, 3]; however, knowledge regarding these processes is in its infancy [4, 5]. One popular approach to understanding causal processes is to conduct mediation studies in which the relationship between an independent variable (X) and a dependent variable (Y) is decomposed into two relationships: an indirect effect that occurs through an intervening or mediator variable (M) and a direct effect that does not occur through an intervening variable [6, 7]. Figure 1 shows a mediation model in which the effect of X on Y is decomposed into direct (c’) and indirect effects (the product of the a and b paths). Estimates of the a, b, and c’ paths shown in Fig. 1 can be obtained from regression analyses or structural equation modeling. Under certain assumptions, these estimates allow for inference regarding the extent to which the effect of X on Y is mediated, or transmitted, through the intervening variable M [8,9,10]. Interpreted appropriately, mediation analysis enables investigators to test hypotheses about how X contributes to change in Y and thereby to elucidate the mechanisms of change that influence implementation [5, 9, 10]. Recently, several major research funders, including the National Institutes of Health in the USA, have emphasized the importance of an experimental therapeutics approach to translational and implementation research in which mechanisms of action are clearly specified and tested [11,12,13]. Mediation analysis offers an important method for such tests.
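For readers who prefer equations, the decomposition described above is conventionally written as two linear regressions. The block below is the standard single-level textbook form, given only as an illustration: the intercepts $i_M$, $i_Y$ and residuals $e_M$, $e_Y$ are notation introduced here, and the 3-level models examined in this paper add cluster-level terms that are omitted.

```latex
\begin{aligned}
M &= i_M + aX + e_M \\
Y &= i_Y + c'X + bM + e_Y \\
\text{indirect effect} &= ab, \qquad \text{direct effect} = c'
\end{aligned}
```

In this single-level linear case estimated by ordinary least squares, the total effect of X on Y equals $c' + ab$.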

Mediation analysis has long been of importance in implementation science, with recent studies emphasizing the need to increase the frequency and rigor with which this method is used [5, 14]. Guided by theoretical work on implementation mechanisms [15, 16], emerging methods-focused guidance for implementation research calls for the use of mediation analyses in randomized implementation trials to better understand how implementation strategies influence healthcare processes and outcomes [5, 17]. A systematic review of studies examining implementation mechanisms indicated mediation analysis was the dominant method for testing mechanisms in the field, used by 30 of 46 studies [4]. Other systematic reviews highlight deficits in the quality of published mediation analyses in implementation science to date and have called for increased and improved use of the method [5, 18]. Reflecting its growing importance within the field, mediation analyses feature prominently in several implementation research protocols published in the field’s leading journal, Implementation Science, during the last year [19,20,21,22]. Cashin et al. [23] recently published guidance for reporting mediation analyses in implementation studies, including the importance of determining required sample sizes for mediation tests a priori.

Designing mediation studies requires estimates of the sample size needed to detect the indirect effect. This seemingly simple issue takes on special nuance and heightened importance in implementation research because of the complexity of statistical power analysis for multilevel research designs—which are the norm in implementation research [17, 24]—and the constraints on sample size posed by the practical realities of conducting implementation research in healthcare systems. While statistical power analysis methods and tools for single-level mediation are well-developed and widely available [8, 25,26,27,28,29], these approaches are inappropriate for testing mediation in studies with two or more hierarchical levels, such as patients nested within providers nested within organizations [9, 30, 31]. Generating correct inferences about mediation from multilevel research designs requires multilevel analytic approaches and associated power analyses to determine the required sample size [32,33,34,35,36].

While some tools have begun to emerge to estimate required sample sizes for 2- and 3-level mediation designs [37, 60] or the Monte Carlo confidence interval approach [26, 61].

Cells C and D in Fig. 3 represent statistical power estimates for MVM and MSEM using a 1-sided hypothesis test. Many mediation hypotheses could reasonably be specified as directional (i.e., 1-sided) because the implementation strategy is anticipated to have a positive (or negative) effect on the mediator and outcome. The use of a 1-sided test should reduce the sample size needed to detect mediation. Estimates of statistical power for 1-sided tests were generated using an algebraic transformation of the results from the 2-sided simulations and thus did not require additional computational time (details available upon request).
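To make the difference between 1- and 2-sided tests of an indirect effect concrete, the following is a minimal Python sketch of a Monte Carlo confidence interval for the product of the a and b paths. It is not the authors' code and does not reproduce their algebraic transformation. The function name, its arguments, the assumption that the two path estimates are independent and normally distributed, and the example estimates are all hypothetical.

```python
import numpy as np

def monte_carlo_indirect_test(a, se_a, b, se_b, alpha=0.05, sided=2,
                              draws=20000, seed=0):
    """Monte Carlo interval for the indirect effect a*b.

    Assumes (for illustration only) that the a and b estimates are
    independent and normally distributed around their point estimates.
    """
    rng = np.random.default_rng(seed)
    ab = rng.normal(a, se_a, draws) * rng.normal(b, se_b, draws)
    if sided == 2:
        # Two-sided: reject when the central interval excludes zero.
        lower, upper = np.quantile(ab, [alpha / 2, 1 - alpha / 2])
        reject = lower > 0 or upper < 0
    else:
        # One-sided, directional hypothesis that the indirect effect is > 0:
        # all of alpha is placed in the lower tail.
        lower, upper = np.quantile(ab, alpha), np.inf
        reject = lower > 0
    return (float(lower), float(upper)), bool(reject)

# Hypothetical estimates: a weakly supported a path and a strong b path.
ci_two, reject_two = monte_carlo_indirect_test(0.18, 0.10, 0.50, 0.05, sided=2)
ci_one, reject_one = monte_carlo_indirect_test(0.18, 0.10, 0.50, 0.05, sided=1)
```

Because the 1-sided test places the full alpha in one tail, its lower bound sits closer to the point estimate, which is why a directional hypothesis can reach significance with a smaller sample.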

Results

Completion of the simulations required 591 days of computational time. Completion rates, defined as the number of replications within a simulation that successfully converged (e.g., 500 out of 500), were high: 97.8% (n=17,114) of the MVM simulations exhibited complete convergence (i.e., 500 of 500 replications were successfully estimated) and 79.4% (n=13,889) of the MSEM simulations exhibited complete convergence. The lowest number of completed replications for any design was 493 (out of 500). The high rate at which the replications were completed increases confidence in the resulting simulation-based estimates of statistical power.
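The replication logic behind a simulation-based power estimate can be sketched in a few lines of Python. This is a deliberately crude illustration and not the simulation code used in this study: it generates balanced 3-level data, collapses them to level-3 cluster means, fits ordinary least squares instead of MVM or MSEM, and uses a joint-significance test with a normal critical value. The function names, the data-generating model, and every default value are assumptions introduced here.

```python
from statistics import NormalDist
import numpy as np

def ols_t(design, y):
    """Return t statistics for an OLS fit of y on the design matrix."""
    beta, *_ = np.linalg.lstsq(design, y, rcond=None)
    resid = y - design @ beta
    dof = design.shape[0] - design.shape[1]
    cov = (resid @ resid / dof) * np.linalg.inv(design.T @ design)
    return beta / np.sqrt(np.diag(cov))

def simulate_power(n3, n2, n1, a, b, c_prime=0.0, icc_m=0.2, icc_y=0.2,
                   alpha=0.05, reps=500, seed=1):
    """Share of replications in which both the a and b paths are significant."""
    rng = np.random.default_rng(seed)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)   # normal approximation
    # X is a level-3 indicator: half of the clusters in each arm.
    x = np.repeat([0.0, 1.0], [n3 // 2, n3 - n3 // 2])
    ones = np.ones(n3)
    hits = 0
    for _ in range(reps):
        # Mediator at level 2: cluster effect plus level-2 residual.
        u = rng.normal(0, np.sqrt(icc_m), n3)
        m = (a * x + u)[:, None] + rng.normal(0, np.sqrt(1 - icc_m), (n3, n2))
        # Outcome at level 1: cluster effect plus level-1 residual.
        v = rng.normal(0, np.sqrt(icc_y), n3)
        y = ((c_prime * x + v)[:, None, None] + b * m[:, :, None]
             + rng.normal(0, np.sqrt(1 - icc_y), (n3, n2, n1)))
        # Collapse to cluster means (valid here only because data are balanced).
        m_bar, y_bar = m.mean(axis=1), y.mean(axis=(1, 2))
        t_a = ols_t(np.column_stack([ones, x]), m_bar)[1]
        t_b = ols_t(np.column_stack([ones, x, m_bar]), y_bar)[2]
        hits += int(abs(t_a) > z_crit and abs(t_b) > z_crit)
    return hits / reps

# Hypothetical inputs: a small null design and a larger design with big effects.
power_null = simulate_power(20, 5, 5, a=0.0, b=0.0, reps=200)
power_large = simulate_power(80, 5, 5, a=0.8, b=0.5, reps=200)
```

Each call corresponds to one design; the returned proportion is the estimated power for that design, and its precision depends on the number of replications that complete.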

How many of the designs studied had adequate statistical power to detect mediation?

Table 1 shows the frequency and percent of designs studied that had adequate statistical power (≥ 0.8) to detect mediation by study characteristic based on a conventional MVM model, using a 2-sided test (cell A in Fig. 3). Only 463 of the 17,496 (2.6%) designs had adequate statistical power to detect mediation. As expected, statistical power was higher for the designs in cell C of Fig. 3 which were estimated using MVM and a 1-sided hypothesis test: 808 of these designs (4.6%) had adequate power to detect mediation.

As an alternative to MVM, investigators may use MSEM. Focusing on cell B of Fig. 3 (MSEM, 2-sided test), results indicated that 228 of the 17,496 designs (1.3%) studied had adequate statistical power to detect mediation. Shifting to cell D of Fig. 3 (MSEM, 1-sided test): 369 of the designs (2.1%) had adequate statistical power.

In summary, fewer than 5% of the 3-level mediation designs studied had adequate statistical power to detect mediation regardless of the statistical model employed (i.e., MVM vs. MSEM) or whether tests were 1- vs. 2-sided.

What study characteristics were associated with increased statistical power to detect mediation?

Table 1 presents the frequency and percent of designs with adequate statistical power to detect mediation by study characteristic for the 17,496 designs in cell A of Fig. 3 (MVM, 2-sided test). Because results were similar for all four cells in Fig. 3, we focus on the results from cell A and describe variations for the other cells as appropriate. Additional file 2 presents the frequency and percent of study designs with adequate statistical power to test mediation by study characteristic for all four cells shown in Fig. 3.

First, consistent with expectations, statistical power to detect mediation increased as the magnitude of effect sizes increased for the two paths that constitute the indirect effect (i.e., a3 and b3). Notably, none of the designs in Table 1 had adequate power when either the a3 or b3 paths were small; less than 1% of designs had adequate power when the a3 or b3 paths were medium.

Second, the number of adequately powered designs increased as sample sizes increased at each level, with the level-3 sample size having the largest effect on power. In Table 1, no designs with fewer than 40 level-3 clusters (e.g., organizations) had adequate power to detect mediation. This finding also held for the MSEM designs (cells B and D in Fig. 3; see Additional file 2). However, for cell C in Fig. 3 (MVM, 1-sided test), 11 designs (0.1%) had adequate power to detect mediation with level-3 sample sizes of 20 (see Additional file 2).

Third, larger total sample sizes were associated with increased power, although this relationship was not monotonic because the total sample size consisted of the product of the sample sizes at each level. In Table 1, the minimum total required sample size to detect mediation was N=900 level-1 units. The minimum total sample for cell C in Fig. 3 (MVM, 1-sided test) was N=600. The minimum total sample for cell B in Fig. 3 (MSEM, 2-sided test) was N=1800, and the minimum total sample for cell D in Fig. 3 (MSEM, 1-sided test) was N=1200.

What was the range of minimum sample sizes required to detect mediation?

Table 2 presents the minimum sample sizes required to achieve statistical power ≥ 0.8 to detect mediation by values of effect size for the a3 and b3 paths that constitute the indirect effect, the size of the direct effect, and the level-3 ICCs of the mediator and outcome. Results in Table 2 are based on cell A of Fig. 3 (MVM, 2-sided). In each cell of Table 2, two sample sizes are provided, one assuming a small direct effect (cs) and the other assuming a medium direct effect (cm). Sample sizes are presented as N3 [N2 [N1]] where N3 = number of level-3 units (e.g., organizations), N2 = number of level-2 units (e.g., providers) per cluster, and N1 = number of level-1 units (e.g., patients) per level-2 unit. Because the N3 sample size is typically the most resource intensive to recruit in implementation studies, and because multiple combinations of N1, N2, and N3 can achieve the same total sample size in a given cell, the minimum sample sizes shown in Table 2 were selected based on the sample combination with adequate power and the smallest N3, followed by the smallest N2, followed by the smallest N1. Blank cells (-) are informative in that they indicate there were no sample sizes that achieved adequate statistical power to detect mediation for that design; for these cells, it is not possible to design a study with adequate statistical power to test mediation within the range of sample sizes and input values we tested. Additional file 3 provides a similar table for cell C of Figure 3 (MVM, 1-sided test).
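The selection rule described above (smallest N3, then smallest N2, then smallest N1 among adequately powered designs) amounts to a lexicographic minimum. The Python sketch below illustrates it; the `Design` type, the function names, and all power values are hypothetical and are not taken from Table 2.

```python
from typing import NamedTuple, Optional, Sequence

class Design(NamedTuple):
    n3: int       # level-3 units (e.g., organizations)
    n2: int       # level-2 units per level-3 unit (e.g., providers)
    n1: int       # level-1 units per level-2 unit (e.g., patients)
    power: float  # estimated power for this design

def total_n(d: Design) -> int:
    """Total number of level-1 observations in a balanced design."""
    return d.n3 * d.n2 * d.n1

def minimum_design(designs: Sequence[Design],
                   threshold: float = 0.8) -> Optional[Design]:
    """Pick the adequately powered design with the smallest N3, then N2, then N1."""
    adequate = [d for d in designs if d.power >= threshold]
    if not adequate:
        return None  # corresponds to a blank cell in the table
    return min(adequate, key=lambda d: (d.n3, d.n2, d.n1))

# Hypothetical candidate designs for one combination of effect sizes and ICCs.
designs = [
    Design(60, 2, 4, 0.82),
    Design(40, 4, 5, 0.81),
    Design(40, 6, 3, 0.86),
    Design(20, 6, 5, 0.41),
]
chosen = minimum_design(designs)
chosen_total = total_n(chosen)
smallest_total = min(total_n(d) for d in designs if d.power >= 0.8)
```

In this made-up example the rule selects the 40-cluster design with 4 providers per cluster even though a 60-cluster design has a smaller total N, which shows why the design with the fewest highest-level units is not necessarily the one with the fewest observations.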

Table 2 provides additional insights into the design features necessary to test mediation in 3-level designs under conditions that are plausible for implementation research. First, most of the cells in Table 2 are empty, indicating no design in that cell had adequate power to detect mediation. This underscores the limited circumstances under which one can obtain a sample large enough to test mediation in 3-level implementation designs. Second, no designs with combinations of small or medium effects for the a3 and b3 paths had adequate statistical power. This indicates at least one large effect size for either the a3 or b3 path is needed to achieve adequate statistical power to test mediation. Third, the size of the level-3 ICC of the mediator (ICCm3) is extremely important. When ICCm3 is small, there are no designs with adequate power except those that have large effect sizes for both a3 and b3 paths.

Discussion

Thought leaders and funders in the field of implementation science have increasingly called for a stronger focus on understanding implementation mechanisms [13,14,15,16], with methodologists pointing to mediation analysis as a recommended tool in this effort [5, 17]. Because statistical power to test mediation in multilevel designs depends on the specific range of input values that are feasible within a given research area, we estimated what sample sizes, effect sizes, and ICCs are required to detect mediation in 3-level implementation research designs. We estimated statistical power and sample size required to detect mediation using a range of input values feasible for implementation research. Designs were tested under four different conditions representing two statistical models (MVM vs. MSEM) and 1- versus 2-sided hypothesis tests (see Fig. 3). Fewer than 5% of the designs studied had adequate statistical power to detect mediation. In almost all cases, the smallest number of level-3 clusters necessary to achieve adequate power was 40, the upper limit of what is possible in many implementation studies. This raises important questions about the feasibility of mediation analyses in implementation research as it is currently practiced. Enrolling 40 organizations usually requires substantial resources and may not be feasible within a limited geographic area or timeframe [24, 55]. In many settings, it also may not be possible to enroll enough level-2 units per setting (e.g., nurses on a ward, primary care physicians in a practice, specialty mental health clinicians in a clinic) or level-1 units (e.g., patients per provider). Below, we discuss the implications of these findings for researchers, funders of research, and the field.

Implications for researchers

Implementation research commonly randomizes highest-level units to implementation strategies and measures characteristics of these units that may predict implementation, such as organizational climate or culture, organizational or team leadership, or prevailing policies or norms within geopolitical units. If researchers wish to study multilevel mediation, they must either obtain a large number of highest-level units or choose potential mediating variables that are likely to have large effects. While it is not known how often such level-3 independent variables have large effects on putative lower-level mediators, there are some encouraging data on the potential for large associations between lower-level mediators and lowest-level outcomes. For example, in a meta-analysis of 79 studies, Godin et al. found variables from social cognitive theories explained up to 81% of the variance in providers’ intention to execute healthcare behaviors and 28% of the variance in physicians’ behaviors, 24% of the variance in nurses’ behavior, and 55% of the variance in other healthcare professionals’ behavior [62]. These effect sizes are comparable to or larger than the effect size for the b3 path used in this study, suggesting that the variables proposed as antecedents to behavior in these theoretical models may serve as effective mediators linking level-3 independent variables to level-1 implementation outcomes.

Researchers can take steps to increase statistical power. One approach is to include a baseline covariate that is highly correlated with the outcome, ideally a pretest measure of the outcome itself. Such a covariate can substantially increase statistical power, in some cases reducing the required sample size by 50% [30, 38]. Future research is needed to characterize the types of pretest covariates that are available in implementation research and the strength of the relationship between these covariates and pertinent implementation and clinical outcomes, because both will be important for study planning. Future research should also examine how unbalanced clusters influence power in multilevel mediation.
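As a rough guide to how strong a pretest covariate must be to yield a 50% reduction, the short Python sketch below uses the familiar single-level approximation in which covariate adjustment shrinks the residual variance, and hence the required sample size, by a factor of about 1 − r². This is an assumption made for illustration; it is not the multilevel result from the cited sources, where the gain also depends on the level at which the covariate explains variance. The function name is hypothetical.

```python
import math

def sample_size_multiplier(r: float) -> float:
    """Approximate share of the unadjusted sample size still required after
    adjusting for a covariate correlated r with the outcome (single-level case)."""
    if not -1.0 < r < 1.0:
        raise ValueError("r must lie strictly between -1 and 1")
    return 1.0 - r ** 2

def correlation_for_reduction(reduction: float) -> float:
    """Correlation needed for a given proportional reduction in sample size."""
    if not 0.0 <= reduction < 1.0:
        raise ValueError("reduction must be in [0, 1)")
    return math.sqrt(reduction)

# A 50% reduction requires a pretest-outcome correlation of about 0.71.
r_needed = correlation_for_reduction(0.5)
remaining = sample_size_multiplier(r_needed)
```

Under this approximation a covariate correlated 0.5 with the outcome would reduce the required sample by only about a quarter.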

Conclusions

This study assesses the sample sizes needed to test mediation in 3-level designs that are typical and plausible in implementation science in healthcare. Results suggest large effect sizes coupled with 40 or more highest-level units are needed to test mediation. Innovations in research design are likely needed to increase the feasibility of studying mediation within the multilevel contexts common to implementation science.

Notes

Percentages in parentheses are approximate for the b3 and c’3 paths because they are partial coefficients.

Abbreviations

- EBP: Evidence-based practice
- MSEM: Multilevel structural equation modeling
- MVM: Conventional linear multilevel regression analysis using manifest (observed) variables

References

Eccles MP, Mittman BS. Welcome to implementation science. Implement Sci. 2006;1:1. https://doi.org/10.1186/1748-5908-1-1.

Nilsen P. Making sense of implementation theories, models and frameworks. Implement Sci. 2015;10:53. https://doi.org/10.1186/s13012-015-0242-0.

Williams NJ, Beidas RS. Annual research review: the state of implementation science in child psychology and psychiatry: a review and suggestions to advance the field. J Child Psychol Psychiatry. 2019;60:430–50. https://doi.org/10.1111/jcpp.12960.

Lewis CC, Boyd MR, Walsh-Bailey C, Lyon AR, Beidas R, Mittman B, et al. A systematic review of empirical studies examining mechanisms of implementation in health. Implement Sci. 2020;15:21. https://doi.org/10.1186/s13012-020-00983-3.

Williams NJ. Multilevel mechanisms of implementation strategies in mental health: integrating theory, research, and practice. Admin Pol Ment Health. 2016;43:783–98. https://doi.org/10.1007/s10488-015-0693-2.

Baron RM, Kenny DA. The moderator–mediator variable distinction in social psychological research: conceptual, strategic, and statistical considerations. J Pers Soc Psychol. 1986;51:1173–82. https://doi.org/10.1037/0022-3514.51.6.1173.

MacKinnon DP. Introduction to statistical mediation analysis: Routledge; 2007.

Hayes AF. Introduction to mediation, moderation, and conditional process analysis: a regression-based approach. 1st ed: Guilford Publications; 2017.

Preacher KJ. Advances in mediation analysis: a survey and synthesis of new developments. Annu Rev Psychol. 2015;66:825–52. https://doi.org/10.1146/annurev-psych-010814-015258.

VanderWeele T. Explanation in causal inference: methods for mediation and interaction: Oxford University Press; 2015.

Insel TR. The NIMH experimental medicine initiative. World Psychiatry. 2015;14:151.

Lewandowski KE, Ongur D, Keshavan MS. Development of novel behavioral interventions in an experimental therapeutics world: challenges, and directions for the future. Schizophr Res. 2018;192:6–8.

Nielsen L, Riddle M, King JW, Aklin WM, Chen W, Clark D, et al. The NIH science of behavior change program: transforming the science through a focus on mechanisms of change. Behav Res Ther. 2018;101:3–11.

Lewis CC, Powell BJ, Brewer SK, Nguyen AM, Schriger SH, Vejnoska SF, et al. Advancing mechanisms of implementation to accelerate sustainable evidence-based practice integration: protocol for generating a research agenda. BMJ Open. 2021;11(10):e053474.

Weiner BJ, Lewis MA, Clauser SB, Stitzenberg KB. In search of synergy: strategies for combining interventions at multiple levels. J Natl Cancer Inst Monogr. 2012;44:34–41.

Grol RP, Bosch MC, Hulscher ME, Eccles MP, Wensing M. Planning and studying improvement in patient care: the use of theoretical perspectives. Milbank Quart. 2007;85(1):93–138.

Wolfenden L, Foy R, Presseau J, Grimshaw JM, Ivers NM, Powell BJ, et al. Designing and undertaking randomised implementation trials: guide for researchers. BMJ. 2021;372:m3721. https://doi.org/10.1136/bmj.m3721.

McIntyre SA, Francis JJ, Gould NJ, Lorencatto F. The use of theory in process evaluations conducted alongside randomized trials of implementation interventions: a systematic review. Transl Behav Med. 2020;10:168–78.

Beidas RS, Ahmedani B, Linn KA, et al. Study protocol for a type III hybrid effectiveness-implementation trial of strategies to implement firearm safety promotion as a universal suicide prevention strategy in pediatric primary care. Implement Sci. 2021;16(89). https://doi.org/10.1186/s13012-021-01154-8.

Kohrt BA, Turner EL, Gurung D, et al. Implementation strategy in collaboration with people with lived experience of mental illness to reduce stigma among primary care providers in Nepal (RESHAPE): protocol for a type 3 hybrid implementation effectiveness cluster randomized controlled trial. Implement Sci. 2022;17(39). https://doi.org/10.1186/s13012-022-01202-x.

Cumbe VFJ, Muanido AG, Turner M, et al. Systems analysis and improvement approach to optimize outpatient mental health treatment cascades in Mozambique (SAIA-MH): study protocol for a cluster randomized trial. Implement Sci. 2022;17(37). https://doi.org/10.1186/s13012-022-01213-8.

Swindle T, Rutledge JM, Selig JP, et al. Obesity prevention practices in early care and education settings: an adaptive implementation trial. Implement Sci. 2022;17(25). https://doi.org/10.1186/s13012-021-01185-1.

Cashin AG, McAuley JH, Lee H. Advancing the reporting of mechanisms in implementation science: a guideline for reporting mediation analyses (AGReMA). Implement Res Pract. 2022;3:26334895221105568.

Mazzucca S, Tabak RG, Pilar M, Ramsey AT, Baumann AA, Kryzer E, et al. Variation in research designs used to test the effectiveness of dissemination and implementation strategies: a review. Front Public Health. 2018;6:32. https://doi.org/10.3389/fpubh.2018.00032.

Fritz MS, Mackinnon DP. Required sample size to detect the mediated effect. Psychol Sci. 2007;18:233–9. https://doi.org/10.1111/j.1467-9280.2007.01882.x.

Hayes AF, Scharkow M. The relative trustworthiness of inferential tests of the indirect effect in statistical mediation analysis: does method really matter? Psychol Sci. 2013;24:1918–27.

MacKinnon DP, Lockwood CM, Hoffman JM, West SG, Sheets V. A comparison of methods to test mediation and other intervening variable effects. Psychol Methods. 2002;7:83–104. https://doi.org/10.1037/1082-989X.7.1.83.

Schoemann AM, Boulton AJ, Short SD. Determining power and sample size for simple and complex mediation models. Soc Psychol Personal Sci. 2017;8:379–86. https://doi.org/10.1177/1948550617715068.

Thoemmes F, Mackinnon DP, Reiser MR. Power analysis for complex mediational designs using Monte Carlo methods. Struct Equ Model. 2010;17:510–34. https://doi.org/10.1080/10705511.2010.489379.

Raudenbush SW, Bryk AS. Hierarchical linear models: applications and data analysis methods. Thousand Oaks: Sage; 2002. p. 1.

Snijders TA, Bosker RJ. Multilevel analysis: an introduction to basic and advanced multilevel modeling. Thousand Oaks: Sage; 2011.

Zhang Z, Zyphur MJ, Preacher KJ. Testing multilevel mediation using hierarchical linear models: problems and solutions. Organ Res Methods. 2009;12:695–719.

Krull JL, MacKinnon DP. Multilevel modeling of individual and group level mediated effects. Multivar Behav Res. 2001;36:249–77. https://doi.org/10.1207/S15327906MBR3602_06.

Pituch KA, Murphy DL, Tate RL. Three-level models for indirect effects in school-and class-randomized experiments in education. J Exp Educ. 2009;78:60–95.

Preacher KJ. Multilevel SEM strategies for evaluating mediation in three-level data. Multivar Behav Res. 2011;46:691–731. https://doi.org/10.1080/00273171.2011.589280.

Preacher KJ, Zyphur MJ, Zhang Z. A general multilevel SEM framework for assessing multilevel mediation. Psychol Methods. 2010;15:209. https://doi.org/10.1037/a0020141.

Kelcey B, Spybrook J, Dong N. Sample size planning for cluster-randomized interventions probing multilevel mediation. Prev Sci. 2019;20:407–18. https://doi.org/10.1007/s11121-018-0921-6.

Kelcey B, Xie Y, Spybrook J, Dong N. Power and sample size determination for multilevel mediation in three-level cluster-randomized trials. Multivar Behav Res. 2021;56:496–513. https://doi.org/10.1080/00273171.2020.1738910.

Aarons GA, Ehrhart MG, Moullin JC, et al. Testing the leadership and organizational change for implementation (LOCI) intervention in substance abuse treatment: a cluster randomized trial study protocol. Implement Sci. 2017;12(29). https://doi.org/10.1186/s13012-017-0562-3.

Wang X, Turner EL, Preisser JS, Li F. Power considerations for generalized estimating equations analyses of four-level cluster randomized trials. Biom J. 2022;64(4):663–80.

Bollen KA. Structural equations with latent variables: Wiley; 1989.

Gonzalez-Roma V, Hernandez A. Conducting and evaluating multilevel studies: recommendations, resources, and a checklist. Organ Res Methods. 2022. https://doi.org/10.1177/10944281211060712.

Preacher KJ, Zhang Z, Zyphur MJ. Alternative methods for assessing mediation in multilevel data: the advantages of multilevel SEM. Struct Equ Model. 2011;18:161–82. https://doi.org/10.1080/10705511.2011.557329.

Lüdtke O, Marsh HW, Robitzsch A, Trautwein U, Asparouhov T, Muthén B. The multilevel latent covariate model: a new, more reliable approach to group-level effects in contextual studies. Psychol Methods. 2008;13:203–29.

Muthén BO, Muthén LK, Asparouhov T. Regression and mediation analysis using Mplus. Los Angeles: Muthén & Muthén; 2017.

Muthén LK, Muthén BO. How to use a Monte Carlo study to decide on sample size and determine power. Struct Equ Model. 2002;9:599–620. https://doi.org/10.1207/S15328007SEM0904_8.

Skrondal A. Design and analysis of Monte Carlo experiments: attacking the conventional wisdom. Multivar Behav Res. 2000;35:137–67. https://doi.org/10.1207/s15327906mbr3502_1.

Boomsma A. Reporting Monte Carlo simulation studies in structural equation modeling. Struct Equ Model. 2013;20:518–40. https://doi.org/10.1080/10705511.2013.797839.

Zhang Z. Monte Carlo based statistical power analysis for mediation models: methods and software. Behav Res Methods. 2014;46:1184–98.

Cohen J. A power primer. Psychol Bull. 1992;112:155–9. https://doi.org/10.1037//0033-2909.112.1.155.

Ben Charif A, Croteau J, Adekpedjou R, Zomahoun HTV, Adisso EL, Légaré F. Implementation research on shared decision making in primary care: inventory of intracluster correlation coefficients. Med Decis Mak. 2019;39:661–72. https://doi.org/10.1177/0272989x19866296.

Campbell MK, Fayers PM, Grimshaw JM. Determinants of the intracluster correlation coefficient in cluster randomized trials: the case of implementation research. Clin Trials. 2005;2:99–107. https://doi.org/10.1191/1740774505cn071oa.

Murray DM, Blitstein JL. Methods to reduce the impact of intraclass correlation in group-randomized trials. Eval Rev. 2003;27(1):79–103.

Forman-Hoffman VL, Middleton JC, McKeeman JL, Stambaugh LF, Christian RB, Gaynes BN, et al. Quality improvement, implementation, and dissemination strategies to improve mental health care for children and adolescents: a systematic review. Implement Sci. 2017;12:93. https://doi.org/10.1186/s13012-017-0626-4.

Novins DK, Green AE, Legha RK, Aarons GA. Dissemination and implementation of evidence-based practices for child and adolescent mental health: a systematic review. J Am Acad Child Adolesc Psychiatry. 2013;52:1009–1025.e18. https://doi.org/10.1016/j.jaac.2013.07.012.

Powell BJ, Proctor EK, Glass JE. A systematic review of strategies for implementing empirically supported mental health interventions. Res Soc Work Pract. 2014;24:192–212. https://doi.org/10.1177/1049731513505778.

Rabin BA, Glasgow RE, Kerner JF, Klump MP, Brownson RC. Dissemination and implementation research on community-based cancer prevention: a systematic review. Am J Prev Med. 2010;38:443–56. https://doi.org/10.1016/j.amepre.2009.12.035.

Muthén LK, Muthén BO. Mplus user’s guide: statistical analysis with latent variables. 8th ed: Muthén & Muthén; 2017.

Sobel ME. Asymptotic confidence intervals for indirect effects in structural equation models. Sociol Methodol. 1982;13:290–312. https://doi.org/10.2307/270723.

Efron B, Tibshirani RJ. An introduction to the bootstrap: Chapman & Hall; 1993.

Preacher KJ, Selig JP. Advantages of Monte Carlo confidence intervals for indirect effects. Commun Methods Meas. 2012;6:77–98.

Godin G, Bélanger-Gravel A, Eccles M, Grimshaw J. Healthcare professionals’ intentions and behaviours: a systematic review of studies based on social cognitive theories. Implement Sci. 2008;3:36. https://doi.org/10.1186/1748-5908-3-36.

Bloom HS, Richburg-Hayes L, Black AR. Using covariates to improve precision for studies that randomize schools to evaluate educational interventions. Educ Eval Policy Anal. 2007;29:30–59. https://doi.org/10.3102/0162373707299550.

Konstantopoulos S. The impact of covariates on statistical power in cluster randomized designs: which level matters more? Multivar Behav Res. 2012;47:392–420. https://doi.org/10.1080/00273171.2012.673898.

Imai K, Keele L, Tingley D. A general approach to causal mediation analysis. Psychol Methods. 2010;15:309–34. https://doi.org/10.1037/a0020761.

VanderWeele TJ. Mediation analysis: a practitioner’s guide. Annu Rev Public Health. 2016;37:17–32.

Insel T. NIMH’s new focus in clinical trials. www.nimh.nih.gov/funding/grant-writing-and-application-process/concept-clearances/2013/nimhs-new-focus-in-clinical-trials. Accessed 6 June 2022.

US National Institute of Mental Health. Consideration of sex as a biological variable in NIH-funded research. https://grants.nih.gov/grants/guide/notice-files/not-od-15-102.html. Accessed 6 June 2022.

US National Institute of Mental Health. Dissemination and implementation research in health (R01 clinical trial optional) PAR-22-105. https://grants.nih.gov/grants/guide/pa-files/PAR-22-105.html. Accessed 6 June 2022.

Fishman J, Yang C, Mandell D. Attitude theory and measurement in implementation science: a secondary review of empirical studies and opportunities for advancement. Implement Sci. 2021;16(1):87. https://doi.org/10.1186/s13012-021-01153-9.

Cidav Z, Mandell D, Pyne J, Beidas R, Curran G, Marcus S. A pragmatic method for costing implementation strategies using time-driven activity-based costing. Implement Sci. 2020;15(1):28. https://doi.org/10.1186/s13012-020-00993-1.

Saldana L, Ritzwoller DP, Campbell M, Block EP. Using economic evaluations in implementation science to increase transparency in costs and outcomes for organizational decision-makers. Implement Sci Commun. 2022;3(1):40. https://doi.org/10.1186/s43058-022-00295-1.

Dopp AR, Kerns SEU, Panattoni L, Ringel JS, Eisenberg D, Powell BJ, et al. Translating economic evaluations into financing strategies for implementing evidence-based practices. Implement Sci. 2021;16(1):66. https://doi.org/10.1186/s13012-021-01137-9.

Treweek S, Zwarenstein M. Making trials matter: pragmatic and explanatory trials and the problem of applicability. Trials. 2009;10:37. https://doi.org/10.1186/1745-6215-10-37.

Norton WE, Loudon K, Chambers DA, et al. Designing provider-focused implementation trials with purpose and intent: introducing the PRECIS-2-PS tool. Implement Sci. 2021;16:7. https://doi.org/10.1186/s13012-020-01075-y.

Cronbach LJ. Research on classrooms and schools: formulation of questions, design, and analysis. Stanford University Evaluation Consortium; 1976.

Acknowledgements

The authors would like to thank Eliza Macneal for her assistance in programming the simulations for this study.

Funding

This work was funded in part through a grant from the US National Institute of Mental Health (P50MH113840) to the University of Pennsylvania P50 ALACRITY Center for Transforming Mental Health Care Delivery through Behavioral Economics and Implementation Science (PIs: Mandell, Beidas, Volpp). The views expressed herein are those of the authors and do not necessarily reflect those of NIMH.

Author information

Contributions

All authors (NJW, KJP, PDA, DM, SCM) contributed to the conceptualization of the research questions and design of the study. KJP generated the simulation code for all analyses. SCM supervised the completion of the simulations and tabulation of the results. NJW, KJP, PDA, DM, and SCM aided in the interpretation of the simulation data. NJW drafted the initial manuscript. All authors (NJW, KJP, PDA, DM, SCM) revised the manuscript for essential intellectual content and approved the final manuscript.

Authors’ information

- Nathaniel J. Williams, Ph.D. is an Associate Professor in the School of Social Work and Research and Evaluation Coordinator in the Institute for the Study of Behavioral Health and Addiction at Boise State University, Boise, ID, natewilliams@boisestate.edu
- Kristopher J. Preacher, Ph.D. is a Professor of Quantitative Methods and Associate Chair of the Department of Psychology & Human Development at Vanderbilt University, Nashville, TN, kris.preacher@vanderbilt.edu
- Paul D. Allison, Ph.D. is the President of Statistical Horizons LLC, Ardmore, PA, allison@statisticalhorizons.com
- David Mandell, Sc.D. is the Kenneth E. Appel Professor of Psychiatry and the Director of the Penn Center for Mental Health at the University of Pennsylvania Perelman School of Medicine, Philadelphia, PA, mandell@upenn.edu
- Steven C. Marcus, Ph.D. is a Research Associate Professor in the School of Social Policy & Practice at the University of Pennsylvania, Philadelphia, PA, marcuss@upenn.edu

Ethics declarations

Ethics approval and consent to participate

Not applicable.

Consent for publication

Not applicable.

Competing interests

The authors declare no competing interests.

Additional information

Publisher’s Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Supplementary Information

Additional file 1. Mplus code for power analyses.

Additional file 2. Frequency of designs with adequate statistical power by method and test.

Additional file 3. Sample size crosstabulation.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/. The Creative Commons Public Domain Dedication waiver (http://creativecommons.org/publicdomain/zero/1.0/) applies to the data made available in this article, unless otherwise stated in a credit line to the data.

About this article

Cite this article

Williams, N.J., Preacher, K.J., Allison, P.D. et al. Required sample size to detect mediation in 3-level implementation studies. Implementation Sci 17, 66 (2022). https://doi.org/10.1186/s13012-022-01235-2

DOI: https://doi.org/10.1186/s13012-022-01235-2