Abstract

There are numerous barriers in robotic surgical training, including reliance on observational learning, low-quality feedback, and inconsistent assessment. Artificial intelligence (AI) offers potential solutions to these central problems in robotic surgical education and may allow for more efficient and efficacious training. Three key areas in which AI has particular relevance to robotic surgical education are video labeling, feedback, and assessment. Video labeling refers to the automated designation of prespecified categories to operative videos. Numerous prior studies have applied AI for video labeling, particularly for retrospective educational review after an operation. Video labeling allows learners and their instructors to rapidly identify critical parts of an operative video. We recommend incorporating AI-based video labeling into robotic surgical education where available. AI also offers a mechanism by which reliable feedback can be provided in robotic surgery. Feedback through AI harnesses automated performance metrics (APMs) and natural language processing (NLP) to provide actionable and descriptive plans to learners while reducing faculty assessment burden. We recommend combining supervised AI-generated, APM-based feedback with expert-based feedback to allow surgeons and trainees to reflect on metrics like bimanual dexterity and efficiency. Finally, summative assessment by AI could allow for automated appraisal of surgeons or surgical trainees. However, AI-based assessment remains limited by concerns around bias and opaque processes. Several studies have applied computer vision to compare AI-based assessment with expert-completed rating scales, though such work remains investigational. At this time, we recommend against the use of AI for summative assessment pending additional validity evidence. Overall, AI offers solutions and promising future directions by which to address multiple educational challenges in robotic surgery. 
Through advances in video labeling, feedback, and assessment, AI has demonstrated ways by which to increase the efficiency and efficacy of robotic surgical education.

Introduction

Numerous barriers prevent efficient and effective robotic surgical training, including reliance on observational learning, low-quality feedback, and inconsistent assessment [1,2,3,4]. As surgeons apply the robotic platform to more and increasingly complex procedures, these problems stand to intensify [5]. Accepted approaches to addressing these issues have been unevenly adopted by different training programs; indeed, while the Society of American Gastrointestinal and Endoscopic Surgeons (SAGES) recommends structured curricula for robotic surgical education, training varies substantially by specialty, program, and stage [6,7,8,9]. Such variation reflects heterogeneous uptake of robotic surgery as a whole and insufficient faculty development highlighting the specifics of robotic surgical instruction [5, 10, 11].

At a basic level, programs’ use of simulation differs considerably, with some requiring simulation experience prior to operative involvement and others struggling with adequate access to simulation [12, 13]. Among those incorporating simulation in training, simulation types range from low-cost home simulation to virtual reality to complex tissue- and cadaver-based models [14,15,16]. Feedback during simulation also varies, with some programs emphasizing real-time, in-person expert feedback and others relying on self-monitored progression [2, 17, 18]. In the operating room (OR), learners’ ability to participate in and observe robotic surgery diverges dramatically by setting [11, 19, 20]. Feedback and assessment in the OR also differ across sites; some instructors provide adept and actionable advice, while others struggle to offer useful guidance or reliable assessment [4, 21, 22]. Finally, after an operation, some surgeons and learners have the opportunity to review intra-operative videos to learn from their performance, while others experience barriers to video review [23].

To some extent, this heterogeneity in robotic surgical education reflects differing uptake and enthusiasm for robotic surgery. However, divergent physical, administrative, and instructional resources also likely contribute to the diverse educational experiences of those learning robotic surgery. Artificial intelligence (AI) offers potential solutions to these central problems in robotic surgical education. Properly applied, AI may provide for a more accessible and standardized experience for tomorrow’s robotic surgeons.

Rationale for the use of artificial intelligence

AI encompasses a range of subfields, including machine learning, artificial neural networks, natural language processing, and computer vision [24]. Each of these subfields has the potential to impact and improve robotic surgical education in different ways. One significant way in which AI can improve robotic surgical education is by increasing training efficiency. While many surgeons and trainees have the ability to record intra-operative videos, manually reviewing them in a meaningful way takes a substantial amount of time. Automated video labeling allows learners and their instructors to rapidly identify critical parts of an operative video, including key operative steps and near misses, and home in on important areas for future improvement [25]. Through natural language processing, AI can also enable basic automated feedback, reducing assessment burden and saving time for expert faculty [26,27,28]. A second key way by which AI can advance robotic surgical education is by improving the efficacy of simulation, feedback, and assessment. Many learners perform standard simulation exercises that are general to all robotic surgical learners. An AI-informed simulation curriculum, if created and optimized, could harness past performance to customize simulation based on a learner’s needs [29]. Furthermore, AI can allow for more efficacious feedback and assessment by harnessing new inputs and automated performance metrics (APMs) that reflect operative events more accurately than a human rater can [30]. For example, AI-detected instrument movement could offer a more accurate interpretation of a surgeon’s economy of motion than an instructor’s perceptions [31]. AI can then incorporate these and other inputs to provide descriptive action plans to learners. Furthermore, AI-based assessment can offer a standardized way by which to increase assessment reliability, eliminate the unpredictability of human raters, identify struggling learners, and promote evidence-based graduated autonomy [3]. Altogether, AI offers surgical educators opportunities to greatly improve the efficiency and efficacy of robotic training.

Available evidence

Work to incorporate AI into robotic surgical education has made significant strides in recent years. We will focus here on the applications of AI to video labeling, feedback, and assessment. Of note, there are numerous additional areas of promise for AI in robotic surgery beyond the scope of this paper, including in intra-operative decision support and autonomous task performance [24, 32].

Video labeling

Video labeling refers to the automated designation of prespecified categories to operative videos [25]. Numerous prior studies have reported on methods to apply AI for video labeling [33]. Accordingly, SAGES has developed consensus guidelines around video labeling to promote a shared vocabulary among educators, researchers, and engineers [34]. Labels can be applied to surgical activities at several levels of granularity. At the most basic level, videos can be labeled by their short and discrete gestures, alternatively called surgemes in some prior work [35]. Gestures may include things like moving to a target or grabbing a suture [35, 36]. Gesture labeling may be too granular for training and is not included in the SAGES guidelines around annotation. However, others have reported on gesture review for assigning meaningful stylistic descriptors from fluid and smooth to viscous and rough [37]. A set of gestures performed together can be labeled as an action, alternatively called a maneuver. Actions are composite gestures like knot tying and suture throwing [38]. Action labeling can also be useful as certain actions may indicate complications or points of interest in a video [38]. Multiple actions come together to make up a task, such as closing a wound or dissecting the hepatocystic triangle [34, 36]. Task labeling can be particularly helpful in identifying and reviewing specific components of a procedure. Finally, the tasks of a procedure together make up its phases, which include access, execution of the surgical objectives, and closure [34].
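
The nested levels described above can be pictured as time-stamped labels on a single video. The following is a minimal sketch only, not the SAGES annotation format or any published tool: the `Label` class, the `labels_at` helper, and the example timings are all hypothetical and chosen purely for illustration.

```python
from dataclasses import dataclass

# Hypothetical label levels, ordered from finest to coarsest, following the
# gesture -> action -> task -> phase description in the text.
LEVELS = ("gesture", "action", "task", "phase")


@dataclass(frozen=True)
class Label:
    level: str      # one of LEVELS
    name: str       # e.g. "grab suture" or "knot tying"
    start_s: float  # start time in seconds
    end_s: float    # end time in seconds (exclusive)


def labels_at(labels, t):
    """Return the labels active at time t, ordered coarsest to finest."""
    active = [lb for lb in labels if lb.start_s <= t < lb.end_s]
    return sorted(active, key=lambda lb: LEVELS.index(lb.level), reverse=True)


# Made-up annotations for a short clip; times are illustrative only.
video = [
    Label("phase", "closure", 0.0, 120.0),
    Label("task", "closing a wound", 0.0, 120.0),
    Label("action", "suture throwing", 10.0, 25.0),
    Label("action", "knot tying", 25.0, 40.0),
    Label("gesture", "grab suture", 26.0, 28.0),
]

names = [lb.name for lb in labels_at(video, 27.0)]
print(names)
```

A reviewer could use a lookup of this kind to jump from a timestamp of interest to the task or action in which it occurred.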

In addition to surgical activities, video labeling can also be applied to specific events, anatomy, and instruments. For example, in cholecystectomies, AI video labeling has been used to identify the critical view of safety [39]. AI has also been applied to label other anatomic structures. One study assessed the ability of a deep-learning model to accurately identify the uterus, ovaries, and surgical tools [40]. Another study attempted to overcome limitations related to visual noise and obscured structures to apply labels in partial nephrectomies [41]. Finally, AI-assisted video blurring is an automated method by which to protect patient privacy. AI allows for non-operative moments, such as out-of-body camera cleaning, to be blurred; this can protect identities without compromising the educational quality of videos [42].

Feedback

Feedback is a second major area in which AI has been studied in robotic surgical education. Because surgeons’ formative feedback is inconsistent and may not be actionable for learners, AI offers a mechanism by which reliable, high-quality feedback could be provided in robotic surgery [43]. The first way by which AI could improve feedback is by making meaning of APMs. Though APMs do not require AI for collection, they sometimes represent vast amounts of data that are difficult to interpret in raw form. Thus, AI can facilitate the interpretation of APMs and provide actionable, specific feedback tailored to individual surgeons. Such feedback is already incorporated into virtual reality robotic simulation [35]. APM-based feedback has also been evaluated in real-world and tissue model settings. Hung and colleagues used machine learning to train a model based on APMs from 78 robotic-assisted radical prostatectomies [30]. Similarly, Lazar et al. created a model using APMs from 42 simulated lung lobectomies [44]. In these studies, APMs correlated with actual outcomes of interest, including postoperative complications. APM-based models have highlighted substantial variance in surgeon and trainee performance in certain metrics, such as idle time and wrist articulation, which could be fed back to surgeons and trainees to help them reflect on their motions during an operation or simulation.
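
To make the idea of an APM concrete, the sketch below derives two simple metrics from a sampled instrument-tip trajectory: total path length, often used as a proxy for economy of motion, and idle time. It is an illustration under stated assumptions rather than the method of any cited study: the function names, the fixed sampling interval, and the speed threshold used to define "idle" are all hypothetical.

```python
import math


def path_length(positions):
    """Total distance travelled by the instrument tip (same units as input)."""
    return sum(math.dist(a, b) for a, b in zip(positions, positions[1:]))


def idle_time(positions, dt, speed_threshold):
    """Seconds during which tip speed stays below speed_threshold.

    positions are sampled every dt seconds; the threshold is an assumed,
    tunable parameter, not a published definition of idle time.
    """
    idle = 0.0
    for a, b in zip(positions, positions[1:]):
        if math.dist(a, b) / dt < speed_threshold:
            idle += dt
    return idle


# Toy trajectory sampled every 0.5 s, positions in millimetres.
tip = [(0, 0, 0), (3, 4, 0), (3, 4, 0), (3, 4, 0), (3, 4, 12)]

print(path_length(tip))          # 5 mm + 0 + 0 + 12 mm
print(idle_time(tip, 0.5, 1.0))  # two stationary intervals of 0.5 s each
```

Raw values like these carry little meaning for a learner on their own, which is where model-based interpretation and expert context come in.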

Another way in which AI could enhance feedback is through language processing. Surgeons often provide poor-quality feedback [21]. Prior authors have harnessed natural language processing to assess feedback quality with high accuracy and specificity [45, 46]. These studies demonstrated the ability of natural language processing to recognize when surgeons’ narrative feedback did not include the relevant, specific, and corrective components necessary for learners to improve. This allows low-quality feedback to be identified and improved to help surgeon reviewers. Of note, AI-generated suggestions have been shown in other fields to be of most use to novice learners [47, 48]. This may reflect limitations in AI to detect and replicate the nuances of feedback for advanced surgical techniques. Interestingly, however, in an AMEE guide, Tolsgaard and colleagues note, “While novice learners may gain the most from good AI feedback, they may be more susceptible than experts to incorrect advice provided by AI systems” [48]. Indeed, while AI could be quite useful for providing feedback to novices, such novices may have less context to understand errors in the AI-based feedback.

Assessment

Summative assessment is closely related to feedback, though it differs in its goal of predicting future performance and determining readiness for additional autonomy or practice. Additionally, unlike feedback, summative assessment may not always be conducted to improve performance [49]. Multiple authors have investigated the use of AI for assessment in robotic surgery. Wang and colleagues used a deep convolutional neural network based on a publicly available robotic surgery dataset [50]. They created a model to assess performance on suturing, needle passing, and knot tying and found that AI-based assessment was 91.3% to 95.4% accurate in skill rating when compared with a global rating scale. Another group used computer vision to similarly compare AI-based assessment with an expert-completed rating scale [51]. They found correlations between AI-determined metrics, like bimanual dexterity and efficiency, and expert ratings. Additionally, AI has been applied to determine disease severity in cholecystectomies [52]. Disease severity or procedure difficulty assessment can provide context for manual expert assessment. More specifically, an expert could contextualize a rubric-based assessment with an AI-generated difficulty recommendation. No prior identified work has used AI for actual summative assessment—with resultant learner consequences—in robotic surgery.
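
As an illustration of what an accuracy figure of this kind means, the sketch below computes the share of cases in which a model's skill label matches the expert-assigned label. The labels and the `accuracy` helper are hypothetical and are not drawn from the cited dataset or studies.

```python
def accuracy(ai_labels, expert_labels):
    """Fraction of cases where the AI skill label equals the expert label."""
    if len(ai_labels) != len(expert_labels):
        raise ValueError("label lists must be the same length")
    matches = sum(a == e for a, e in zip(ai_labels, expert_labels))
    return matches / len(expert_labels)


# Made-up ratings for five trials; the expert rating is treated as reference.
expert = ["novice", "novice", "intermediate", "expert", "expert"]
ai = ["novice", "intermediate", "intermediate", "expert", "expert"]

acc = accuracy(ai, expert)
print(acc)  # 4 of 5 labels agree
```

Agreement of this sort is only as meaningful as the expert ratings it is measured against.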

Recommendations

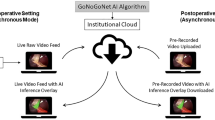

The potential uses of AI in robotic surgical education are exceptionally promising (Fig. 1). Nonetheless, we acknowledge that further work must determine the ways in which AI affects robotic surgical education in real-life settings, from trainees’ reactions and learning to actual behavior and results. Based on the available evidence, we recommend incorporating AI-based video labeling into robotic surgical education for learners and surgeons to replay and review robotic operations. This may require addressing medicolegal and technological barriers to intra-operative video recording and easing the process by which those videos can later be viewed [23]. Future work should focus on how surgeons use and apply video labeling to ensure that the technology meets educational needs. Video labeling has been chiefly used for retrospective educational review after an operation [33]. A next step for video labeling could be incorporating real-time video labeling on an intra-operative observer screen. Though research must address the effects of this on workflow, such an intervention could allow for more efficacious learning for trainees who are relegated to observation for part or all of an operation [53, 54]. Other work could investigate automated case logging based on video labeling to allow surgeons and trainees to track their progression over time and divide joint case metrics by operator [42]. Future studies that apply and assess video labeling should use the SAGES consensus vocabulary [34].

We also recommend combining AI-generated, APM-based feedback with expert-based feedback to allow surgeons and trainees to reflect on metrics like bimanual dexterity and efficiency. AI-generated, APM-based feedback may represent a more accurate measure of certain activities in robotic surgery, which could help surgeons and trainees better track their performance and monitor improvement over time. We also suggest that further work identify how APMs could be used to tailor simulation efforts. This could allow for more efficient use of simulation time. Future work in AI-based feedback in robotic surgery could focus on better combining language processing with computer vision. High-quality automated feedback could be provided based on videos of robotic operations or simulations. This could overcome current hurdles associated with manual feedback, including delays in feedback and poorly written or unactionable feedback [51]. Until further work demonstrates the accuracy of AI-based feedback in additional and novel settings, we recommend that AI-based feedback generally be supervised and approved by an expert.

Finally, we recommend against the use of AI without expert supervision for summative assessment at this time. While the aforementioned and other studies have shown substantial strides in the ability of AI to detect a number of important metrics in robotic surgery, it remains insufficiently tested to use summatively. We recommend that further work develop additional evidence in all aspects of validity and in users’ experience prior to considering AI-based summative assessment [55].

Limitations of artificial intelligence

There are important limitations to note with the application of AI to robotic surgical education. First, much of the existing work has applied models to ideal cases or basic procedures that ‘follow the script’ through a single camera view and using a single robotic system. Applying computer vision when there is deviation from the script and improving algorithmic performance in new settings will be of utmost importance to the broader use of AI in robotic surgical education. Similarly, many studies have been limited by small datasets, a lack of external validation, and opaque processes [33]. As additional authors apply and investigate AI in robotic surgical education, they should continue to emphasize the validity and transparency of their processes. Doing so may increase learner and surgeon receptivity to AI-based feedback. Furthermore, validity and transparency will be particularly important prior to the use of AI for assessment in robotic surgical education. Educators simply cannot adopt AI-based assessment in the setting of opaque processes. While a goal of AI-based feedback and assessment is standardization and bias reduction, algorithmic bias remains a troubling barrier [56]. Underskilling is the inaccurate downgrading of a surgeon’s performance by AI, while overskilling is the inaccurate upgrading of a surgeon’s performance by AI; both underskilling and overskilling present obstacles to the summative use of AI-generated assessment [57].
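
The definitions of underskilling and overskilling can be made concrete by comparing AI scores against reference scores within different surgeon groups. The sketch below is illustrative only: the group names and scores are invented, the `skilling_rates` helper is hypothetical, and treating the expert score as the reference is itself an assumption.

```python
def skilling_rates(ai_scores, expert_scores):
    """Return (underskilling rate, overskilling rate) for one group.

    Underskilling: AI score below the reference score.
    Overskilling: AI score above the reference score.
    """
    n = len(expert_scores)
    under = sum(a < e for a, e in zip(ai_scores, expert_scores)) / n
    over = sum(a > e for a, e in zip(ai_scores, expert_scores)) / n
    return under, over


# Hypothetical scores on a 1-5 scale, stored as (AI scores, expert scores).
groups = {
    "group_a": ([3, 4, 5, 2], [3, 4, 5, 2]),
    "group_b": ([2, 3, 5, 2], [3, 4, 4, 2]),
}

rates = {g: skilling_rates(ai, ex) for g, (ai, ex) in groups.items()}
print(rates)
```

A marked difference in these rates between groups, as in the invented data here, is the kind of pattern that would raise concern about algorithmic bias.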

Finally, we caution against the widespread view of AI-based feedback and assessment as “objective.” While many authors in the works cited in this paper emphasize the importance of objective metrics such as those possible through AI, it is important to note that humans decide which metrics to include in an algorithm or AI-based rubric. Whether a particular gesture correlates with an outcome that is important to patients is mostly unknown [30, 58]. Similarly, many AI-based assessments use expert feedback as a gold standard; however, not all expert feedback is created equal. As such, while AI certainly may produce highly reliable or consistent results, portraying AI-based metrics as an objective and ultimate reflection of truth may do a disservice to learners, educators, and patients.

Conclusions

AI offers potential solutions to multiple educational challenges in robotic surgery. Through advances in video labeling, feedback, and assessment, AI has demonstrated ways by which to increase the efficiency and efficacy of robotic surgical education. Barriers to the widespread use of AI remain, particularly with regard to the use of AI for assessment of robotic surgical skill. Both AI-based technologies and robotic surgery have changed rapidly, and will likely continue to evolve quickly in coming weeks, months, and years. As such, recommendations and limitations in this area will also change. To facilitate impactful AI-based innovations in robotic surgical education, future work should focus on further developing validity and transparency.

Summary box

- Robotic surgical education has been challenged by a reliance on observational learning, low-quality feedback, and inconsistent assessment
- AI offers solutions to these and other challenges in robotic surgical education
- Strengths of AI in this field center around video labeling and metric-based feedback
- Ongoing work must focus on developing validity and ensuring transparency

Where to find more information

We suggest reading “Artificial Intelligence Methods and Artificial Intelligence-Enabled Metrics for Surgical Education: A Multidisciplinary Consensus” and “Artificial Intelligence and Surgical Education: A Systematic Scoping Review of Interventions” for recommendations and review regarding the use of AI in surgical education more broadly [29, 59]. For additional resources on the use of AI in robotic surgery, consider “A systematic review on artificial intelligence in robot-assisted surgery” [33]. Finally, for information on consensus video annotation, we advise reviewing “SAGES consensus recommendations on an annotation framework for surgical video” by Meireles and colleagues [34].

References

Shaw RD, Eid MA, Bleicher J, Broecker J, Caesar B, Chin R, et al. Current barriers in robotic surgery training for general surgery residents. J Surg Educ. 2022;79(3):606–13.

SAGES Robotic Task Force, Chen R, Rodrigues Armijo P, Krause C, Siu KC, Oleynikov D. A comprehensive review of robotic surgery curriculum and training for residents, fellows, and postgraduate surgical education. Surg Endosc. 2020;34(1):361–7.

Chen J, Cheng N, Cacciamani G, Oh P, Lin-Brande M, Remulla D, et al. Objective assessment of robotic surgical technical skill: a systematic review. J Urol. 2019;201(3):461–9.

Beane M. Shadow learning: building robotic surgical skill when approved means fail. Adm Sci Q. 2019;64(1):87–123.

Sheetz KH, Claflin J, Dimick JB. Trends in the adoption of robotic surgery for common surgical procedures. JAMA Netw Open. 2020;3(1): e1918911.

Green CA, Chern H, O’Sullivan PS. Current robotic curricula for surgery residents: a need for additional cognitive and psychomotor focus. Am J Surg. 2018;215(2):277–81.

The SAGES-MIRA Robotic Surgery Consensus Group, Herron DM, Marohn M. A consensus document on robotic surgery. Surg Endosc. 2008;22(2):313–25.

Winder JS, Juza RM, Sasaki J, Rogers AM, Pauli EM, Haluck RS, et al. Implementing a robotics curriculum at an academic general surgery training program: our initial experience. J Robot Surg. 2016;10(3):209–13.

Ramirez Barriga M, Rojas A, Roggin KK, Talamonti MS, Hogg ME. Development of a two-week dedicated robotic surgery curriculum for general surgery residents. J Surg Educ. 2022;79(4):861–6.

Harrison W, Munien K, Desai D. Robotic surgery education in Australia and New Zealand: primetime for a curriculum. ANZ J Surg. 2024;94(1–2):30–6.

Green CA, Mahuron KM, Harris HW, O’Sullivan PS. Integrating robotic technology into resident training: challenges and recommendations from the front lines. Acad Med. 2019;94(10):1532–8.

Stewart CL, Green C, Meara MP, Awad MM, Nelson M, Coker AM, et al. Common components of general surgery robotic educational programs. J Surg Educ. 2023;80:1717–22.

MacCraith E, Forde JC, Davis NF. Robotic simulation training for urological trainees: a comprehensive review on cost, merits and challenges. J Robot Surg. 2019;13(3):371–7.

Witthaus MW, Farooq S, Melnyk R, Campbell T, Saba P, Mathews E, et al. Incorporation and validation of clinically relevant performance metrics of simulation (CRPMS) into a novel full-immersion simulation platform for nerve-sparing robot-assisted radical prostatectomy (NS-RARP) utilizing three-dimensional printing and hydroge: Incorporating clinical metrics in a RARP model. BJU Int. 2020;125(2):322–32.

Wile RK, Brian R, Rodriguez N, Chern H, Cruff J, O’Sullivan PS. Home practice for robotic surgery: a randomized controlled trial of a low-cost simulation model. J Robot Surg. 2023;17:2527–36.

Sridhar AN, Briggs TP, Kelly JD, Nathan S. Training in robotic surgery—an overview. Curr Urol Rep. 2017;18(8):58.

Thornblade LW, Fong Y. Simulation-based training in robotic surgery: contemporary and future methods. J Laparoendosc Adv Surg Tech. 2021;31(5):556–60.

Kumar A, Smith R, Patel VR. Current status of robotic simulators in acquisition of robotic surgical skills. Curr Opin Urol. 2015;25(2):168–74.

Fleming CA, Ali O, Clements JM, Hirniak J, King M, Mohan HM, et al. Surgical trainee experience and opinion of robotic surgery in surgical training and vision for the future: a snapshot study of pan-specialty surgical trainees. J Robot Surg. 2022;16(5):1073–82.

Zhao B, Hollandsworth HM, Lee AM, Lam J, Lopez NE, Abbadessa B, et al. Making the jump: a qualitative analysis on the transition from bedside assistant to console surgeon in robotic surgery training. J Surg Educ. 2020;77(2):461–71.

Green CA, Chu SN, Huang E, Chern H, O’Sullivan P. Teaching in the robotic environment: use of alternative approaches to guide operative instruction. Am J Surg. 2020;219(1):191–6.

Green CA, Lin J, Higgins R, O’Sullivan PS, Huang E. Expertise in perception during robotic surgery (ExPeRtS): what we see and what we say. Am J Surg. 2022;224(3):908–13.

Pangal DJ, Donoho DA. “Are we recording this?” Surgeons deserve next-generation analytics. J Neurosurg. 2023;1:1–4.

Hashimoto DA, Rosman G, Rus D, Meireles OR. Artificial intelligence in surgery: promises and perils. Ann Surg. 2018;268(1):70–6.

Cheikh Youssef S, Hachach-Haram N, Aydin A, Shah TT, Sapre N, Nair R, et al. Video labelling robot-assisted radical prostatectomy and the role of artificial intelligence (AI): training a novice. J Robot Surg. 2022;17(2):695–701.

Fard MJ, Ameri S, Darin Ellis R, Chinnam RB, Pandya AK, Klein MD. Automated robot-assisted surgical skill evaluation: predictive analytics approach. Int J Med Robot Comput Assist Surg. 2018;14(1): e1850.

Acton RD, Chipman JG, Lunden M, Schmitz CC. Unanticipated teaching demands rise with simulation training: strategies for managing faculty workload. J Surg Educ. 2015;72(3):522–9.

Shkolyar E, Pugh C, Liao JC. Laying the groundwork for optimized surgical feedback. JAMA Netw Open. 2023;6(6): e2320465.

Vedula SS, Ghazi A, Collins JW, Pugh C, Stefanidis D, Meireles O, et al. Artificial intelligence methods and artificial intelligence-enabled metrics for surgical education: a multidisciplinary consensus. J Am Coll Surg. 2022;234(6):1181–92.

Hung AJ, Chen J, Ghodoussipour S, Oh PJ, Liu Z, Nguyen J, et al. A deep-learning model using automated performance metrics and clinical features to predict urinary continence recovery after robot-assisted radical prostatectomy. BJU Int. 2019;124(3):487–95.

Kutana S, Bitner DP, Addison P, Chung PJ, Talamini MA, Filicori F. Objective assessment of robotic surgical skills: review of literature and future directions. Surg Endosc. 2022;36(6):3698–707.

Vasey B, Lippert KAN, Khan DZ, Ibrahim M, Koh CH, Layard Horsfall H, et al. Intraoperative applications of artificial intelligence in robotic surgery: a scoping review of current development stages and levels of autonomy. Ann Surg. 2022;278:896–903.

Moglia A, Georgiou K, Georgiou E, Satava RM, Cuschieri A. A systematic review on artificial intelligence in robot-assisted surgery. Int J Surg. 2021;95: 106151.

Meireles OR, Rosman G, Altieri MS, Carin L, Hager G, Madani A, et al. SAGES consensus recommendations on an annotation framework for surgical video. Surg Endosc. 2021;35(9):4918–29.

Despinoy F, Bouget D, Forestier G, Penet C, Zemiti N, Poignet P, et al. Unsupervised trajectory segmentation for surgical gesture recognition in robotic training. IEEE Trans Biomed Eng. 2016;63(6):1280–91.

DiPietro R, Ahmidi N, Malpani A, Waldram M, Lee GI, Lee MR, et al. Segmenting and classifying activities in robot-assisted surgery with recurrent neural networks. Int J CARS. 2019;14(11):2005–20.

Ershad M, Rege R, Majewicz FA. Automatic and near real-time stylistic behavior assessment in robotic surgery. Int J CARS. 2019;14(4):635–43.

Perumalla C, Kearse L, Peven M, Laufer S, Goll C, Wise B, et al. AI-based video segmentation: procedural steps or basic maneuvers? J Surg Res. 2023;283:500–6.

Mascagni P, Vardazaryan A, Alapatt D, Urade T, Emre T, Fiorillo C, et al. Artificial intelligence for surgical safety: automatic assessment of the critical view of safety in laparoscopic cholecystectomy using deep learning. Ann Surg. 2022;275(5):955–61.

Madad Zadeh S, Francois T, Calvet L, Chauvet P, Canis M, Bartoli A, et al. SurgAI: deep learning for computerized laparoscopic image understanding in gynaecology. Surg Endosc. 2020;34(12):5377–83.

Nosrati MS, Amir-Khalili A, Peyrat JM, Abinahed J, Al-Alao O, Al-Ansari A, et al. Endoscopic scene labelling and augmentation using intra-operative pulsatile motion and colour appearance cues with preoperative anatomical priors. Int J CARS. 2016;11(8):1409–18.

Filicori F, Bitner DP, Fuchs HF, Anvari M, Sankaranaraynan G, Bloom MB, et al. SAGES video acquisition framework-analysis of available OR recording technologies by the SAGES AI task force. Surg Endosc. 2023;37(6):4321–7.

Pugh CM. The quantified surgeon: a glimpse into the future of surgical metrics and outcomes. Am Surg. 2023;31:31348231168315.

Lazar JF, Brown K, Yousaf S, Jarc A, Metchik A, Henderson H, et al. Objective performance indicators of cardiothoracic residents are associated with vascular injury during robotic-assisted lobectomy on porcine models. J Robot Surg. 2022;17(2):669–76.

Solano QP, Hayward L, Chopra Z, Quanstrom K, Kendrick D, Abbott KL, et al. Natural language processing and assessment of resident feedback quality. J Surg Educ. 2021;78(6):e72–7.

Ötleş E, Kendrick DE, Solano QP, Schuller M, Ahle SL, Eskender MH, et al. Using natural language processing to automatically assess feedback quality: findings from 3 surgical residencies. Acad Med. 2021;96(10):1457–60.

Tschandl P, Rinner C, Apalla Z, Argenziano G, Codella N, Halpern A, et al. Human–computer collaboration for skin cancer recognition. Nat Med. 2020;26(8):1229–34.

Tolsgaard MG, Pusic MV, Sebok-Syer SS, Gin B, Svendsen MB, Syer MD, et al. The fundamentals of artificial intelligence in medical education research: AMEE Guide No. 156. Med Teach. 2023;45(6):565–73.

Kornegay JG, Kraut A, Manthey D, Omron R, Caretta-Weyer H, Kuhn G, et al. Feedback in medical education: a critical appraisal. AEM Educ Train. 2017;1(2):98–109.

Wang Z, Majewicz FA. Deep learning with convolutional neural network for objective skill evaluation in robot-assisted surgery. Int J CARS. 2018;13(12):1959–70.

Yang JH, Goodman ED, Dawes AJ, Gahagan JV, Esquivel MM, Liebert CA, et al. Using AI and computer vision to analyze technical proficiency in robotic surgery. Surg Endosc. 2023;37(4):3010–7.

Korndorffer JR, Hawn MT, Spain DA, Knowlton LM, Azagury DE, Nassar AK, et al. Situating artificial intelligence in surgery: a focus on disease severity. Ann Surg. 2020;272(3):523–8.

Barnes KE, Brian R, Greenberg AL, Watanaskul S, Kim EK, O’Sullivan PS, et al. Beyond watching: harnessing laparoscopy to increase medical students’ engagement with robotic procedures. Am J Surg. 2023;S0002–9610(23):00092–102.

Turner SR, Mormando J, Park BJ, Huang J. Attitudes of robotic surgery educators and learners: challenges, advantages, tips and tricks of teaching and learning robotic surgery. J Robot Surg. 2020;14(3):455–61.

Messick S. Validity of psychological assessment: validation of inferences from persons’ responses and performances as scientific inquiry into score meaning. Am Psychol. 1995;50(9):741–9.

Mittermaier M, Raza MM, Kvedar JC. Bias in AI-based models for medical applications: challenges and mitigation strategies. NPJ Digit Med. 2023;6(1):113.

Kiyasseh D, Laca J, Haque TF, Otiato M, Miles BJ, Wagner C, et al. Human visual explanations mitigate bias in AI-based assessment of surgeon skills. NPJ Digit Med. 2023;6(1):54.

Hung AJ, Chen J, Shah A, Gill IS. Telementoring and telesurgery for minimally invasive procedures. J Urol. 2018;199(2):355–69.

Kirubarajan A, Young D, Khan S, Crasto N, Sobel M, Sussman D. Artificial intelligence and surgical education: a systematic scoping review of interventions. J Surg Educ. 2022;79(2):500–15.

Funding

Riley Brian and Alyssa Murillo received funding for their research through the Intuitive-UCSF Simulation-Based Surgical Education Research Fellowship. Camilla Gomes received funding for her research through Johnson & Johnson.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Brian, R., Murillo, A., Gomes, C. et al. Artificial intelligence and robotic surgical education. Global Surg Educ 3, 60 (2024). https://doi.org/10.1007/s44186-024-00262-5

DOI: https://doi.org/10.1007/s44186-024-00262-5