Abstract

Owing to its short training time and high information throughput, the steady-state visual evoked potential (SSVEP) is widely used in brain–computer interface (BCI) research. Recently, collecting EEG signals from the ear area (ear-EEG) has gained increasing attention because it is more comfortable and convenient than using scalp electrodes. However, an ear-EEG-based BCI system has weaker signals and more noise components because the electrodes are located far from the top of the head. In this study, the RandOm Convolutional KErnel Transform (ROCKET) algorithm integrated with the Morlet wavelet transform (Morlet-ROCKET) is proposed to address this issue. The performance of Morlet-ROCKET was compared with that of two established methods: filter bank canonical correlation analysis (FBCCA) and a Transformer model. The proposed Morlet-ROCKET model showed superior performance across multiple measures, including higher classification accuracy in the 1 s, 3 s, and 4 s time windows and higher area under the curve (AUC) values in receiver operating characteristic (ROC) analysis. The results indicate that, with efficient data processing algorithms, ear-EEG-based BCI systems can also perform well, which supports the wider adoption of BCI.

Highlights

- This paper employs ear-EEG to record SSVEP signals, showcasing a new approach in signal acquisition.

- It introduces Morlet-ROCKET, an innovative method characterized by low computational complexity.

- The proposed method demonstrated better performance than conventional methods such as FBCCA and the Transformer, indicating its effectiveness in EEG signal analysis.


1 Introduction

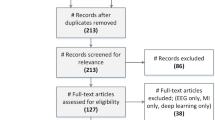

The brain–computer interface (BCI) is a technology that enables direct communication between the brain and computers or external devices. It has considerable potential to improve the interaction capabilities of individuals with disabilities, and it also has applications in industrial production and aerospace engineering [1, 2]. Traditional BCI systems record brain signals with scalp electrodes placed according to the international 10–20 system. However, on-scalp EEG recording requires stable attachment using caps, headsets, or adhesives, which makes it uncomfortable and obtrusive for users. To address these issues, ‘ear-EEG’ was proposed: electrodes are placed around the ear, which makes the device less visible, more mobile, and more comfortable for the wearer, and less intrusive than full-scalp EEG. In BCI research, steady-state visual evoked potentials (SSVEP) have received increasing attention because of their high signal-to-noise ratio (SNR) and because they do not require extensive training [3,4,5,6, …]

3 Methodology

3.1 FBCCA

The standard CCA method recognizes the target frequency from canonical correlation values. Consider \(\textbf{X}\in \mathbb {R}^{N\times d_1}, \textbf{Y}\in \mathbb {R}^{N\times d_2}\), where N is the number of observations and \(d_1\) and \(d_2\) are the dimensions of each observation. Given these two multidimensional variables, CCA finds a pair of linear combinations \({\omega }_x\in \mathbb {R}^{1\times d_1}, {\omega }_y\in \mathbb {R}^{1\times d_2}\) that maximizes the correlation between \({\omega }_x \textbf{X}^T\) and \({\omega }_y \textbf{Y}^T\), called the canonical variates. Mathematically, this relationship can be expressed in its standard form as follows:

\[ \rho = \max _{{\omega }_x, {\omega }_y} \frac{{\omega }_x \textbf{X}^T \textbf{Y} {\omega }_y^T}{\sqrt{\left( {\omega }_x \textbf{X}^T \textbf{X} {\omega }_x^T\right) \left( {\omega }_y \textbf{Y}^T \textbf{Y} {\omega }_y^T\right) }} \tag{1} \]

In this context, the EEG data typically constitute \(\textbf{X}\), while \(\textbf{Y}\) consists of reference sinusoidal signals and their harmonics.
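The CCA-based frequency recognition described above can be sketched in a few lines of NumPy. This is a minimal illustration rather than the authors' implementation: the sampling rate, window length, number of harmonics, synthetic test signal, and all function names (`reference_signals`, `max_canonical_corr`, `cca_classify`) are assumptions made for the example. The largest canonical correlation is computed from the QR decompositions of the centered data, which is one standard way to evaluate Eq. (1).

```python
import numpy as np

def reference_signals(freq, n_samples, fs, n_harmonics=3):
    """Sine/cosine references at freq and its harmonics: (n_samples, 2 * n_harmonics)."""
    t = np.arange(n_samples) / fs
    cols = []
    for k in range(1, n_harmonics + 1):
        cols.append(np.sin(2 * np.pi * k * freq * t))
        cols.append(np.cos(2 * np.pi * k * freq * t))
    return np.column_stack(cols)

def max_canonical_corr(X, Y):
    """Largest canonical correlation between X and Y (rows are observations)."""
    Xc = X - X.mean(axis=0)
    Yc = Y - Y.mean(axis=0)
    # Orthonormal bases of the two column spaces; the singular values of
    # Qx^T Qy are the canonical correlations (assumes full column rank).
    Qx, _ = np.linalg.qr(Xc)
    Qy, _ = np.linalg.qr(Yc)
    s = np.linalg.svd(Qx.T @ Qy, compute_uv=False)
    return float(min(s[0], 1.0))  # clip tiny numerical overshoot

def cca_classify(X, candidate_freqs, fs):
    """Pick the candidate frequency whose references correlate best with X."""
    scores = [
        max_canonical_corr(X, reference_signals(f, X.shape[0], fs))
        for f in candidate_freqs
    ]
    return candidate_freqs[int(np.argmax(scores))], scores

# Synthetic 8-channel example (hypothetical data, not the study's recordings).
rng = np.random.default_rng(0)
fs, seconds = 250, 2.0            # assumed sampling rate and window length
n = int(fs * seconds)
t = np.arange(n) / fs
phases = rng.uniform(0, 2 * np.pi, size=8)
X_demo = np.sin(2 * np.pi * 7.0 * t[:, None] + phases) + rng.normal(size=(n, 8))
predicted, scores = cca_classify(X_demo, [5.0, 7.0, 9.0, 11.0], fs)
print(predicted, np.round(scores, 3))
```

FBCCA would repeat the same scoring on several band-pass-filtered copies of `X` and combine the sub-band correlations; that step is omitted here to keep the sketch short.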
For example, for a stimulus frequency of 4 Hz, the reference signals would include 4 Hz and its harmonics (8 Hz, 12 Hz, ...) up to the Nyquist frequency. FBCCA is a CCA variant designed for SSVEP classification tasks. It uses a series of band-pass filters to decompose the EEG signal into several sub-band components, and CCA is applied to each sub-band component [26]. This improves the extraction and use of harmonics within the EEG signal and therefore offers better performance than standard CCA. In this study, four filter banks were implemented, each defined by a distinct band-pass filter with a common upper frequency limit of 80 Hz and evenly distributed lower limits that cover the full range of SSVEP frequency bands.

3.2 Morlet wavelet

The Morlet wavelet is a specialized time-frequency analysis method that originated in the early 1980s. It was designed for the analysis of non-stationary signals and has become a pivotal tool in signal processing [27]. The Morlet wavelet is mathematically defined as the element-wise product of a sinusoidal wave and a Gaussian function. The key parameter is the full width at half maximum (FWHM) of the Gaussian function. The following formulation can be used to control smoothing in both the temporal and spectral domains [28]:

\[ w(t, f) = e^{i 2\pi f t}\, e^{-4\ln (2)\, t^2 / h^2} \tag{2} \]

where t corresponds to time, f denotes frequency, and h represents the FWHM in seconds. We considered 2 Hz\(\sim\)60 Hz as the frequency range for the Morlet wavelet. The Morlet wavelet transform was applied to a specific time window of eight-channel ear-EEG data in order to convert the shape of the data from (samples, channels, times) to (samples, frequencies, times). Finally, the output of the Morlet wavelet transform was used as the input for the ROCKET model.
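The shape change described above, from (samples, channels, times) to (samples, frequencies, times), can be illustrated with a small NumPy sketch of the FWHM-parameterized wavelet in Eq. (2). Several details are assumptions made for the example and are not stated in the text: the 250 Hz sampling rate, the wavelet support of ±1.5 FWHM, the amplitude normalization, and in particular the choice to collapse the channel axis by averaging power across channels. The function names are hypothetical.

```python
import numpy as np

def morlet_wavelet(freq, fwhm, fs):
    """Complex Morlet wavelet with Gaussian FWHM given in seconds (Eq. 2)."""
    half = int(np.ceil(1.5 * fwhm * fs))       # assumed support: +/- 1.5 FWHM
    t = np.arange(-half, half + 1) / fs
    wavelet = np.exp(2j * np.pi * freq * t) * np.exp(-4 * np.log(2) * t**2 / fwhm**2)
    return wavelet / np.abs(wavelet).sum(), half

def morlet_power(data, freqs, fwhm, fs):
    """Map (samples, channels, times) to (samples, frequencies, times)."""
    n_samples, n_channels, n_times = data.shape
    out = np.zeros((n_samples, len(freqs), n_times))
    for fi, f in enumerate(freqs):
        wavelet, half = morlet_wavelet(f, fwhm, fs)
        for s in range(n_samples):
            for c in range(n_channels):
                # Full convolution, then keep the n_times samples aligned with
                # the input; this also works when the wavelet is longer than it.
                full = np.convolve(data[s, c], wavelet, mode="full")
                analytic = full[half:half + n_times]
                out[s, fi] += np.abs(analytic) ** 2
    return out / n_channels                     # assumption: average over channels

# Synthetic 1 s, 8-channel example with a 9 Hz component (hypothetical data).
rng = np.random.default_rng(0)
fs = 250                                        # assumed sampling rate
t = np.arange(fs) / fs
data = np.sin(2 * np.pi * 9.0 * t) + 0.5 * rng.normal(size=(2, 8, fs))
freqs = np.arange(2, 61)                        # 2 Hz to 60 Hz in 1 Hz steps
tf = morlet_power(data, freqs, fwhm=0.75, fs=fs)
peak_freq = int(freqs[tf.mean(axis=(0, 2)).argmax()])
print(tf.shape, peak_freq)
```

A larger FWHM narrows the wavelet in frequency at the cost of temporal precision, which is the trade-off controlled by h in Eq. (2).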
3.3 Transformer architecture

The Transformer model is known for its efficiency in sequence-to-sequence tasks and is built on an architecture that combines an encoder with a decoder. However, only the encoder component is used in time-series classification tasks such as SSVEP signal categorization. Each encoder block transforms the input data through a series of operations that capture the structure of sequential information. The core of the Transformer encoder is the multi-head attention mechanism, which allows the model to focus on different parts of the input sequence when processing a particular element. Mathematically, the attention operation can be described in its standard form by the following equation:

\[ \text{Attention}(\textbf{Q}, \textbf{K}, \textbf{V}) = \text{softmax}\left( \frac{\textbf{Q}\textbf{K}^T}{\sqrt{D_k}}\right) \textbf{V} \]

where the queries are \(\textbf{Q}\in \mathbb {R}^{N\times D_k}\), the keys are \(\textbf{K}\in \mathbb {R}^{M\times D_k}\), and the values are \(\textbf{V}\in \mathbb {R}^{M\times D_v}\). N and M are the lengths of the queries and keys, and \(D_k\) and \(D_v\) are the dimensions of the keys and values, respectively. Multi-head attention applies this operation in H parallel heads. Our Transformer encoder stack comprises three such encoders in series. The output of the final encoder is passed through a fully connected layer with 256 units, followed by a dropout layer with a rate of 0.5 to prevent overfitting. The dimensionality of all model components was set to 256 for consistency. Additional batch normalization and a dropout layer with a rate of 0.2 were used for regularization (Fig. 3).

3.4 Multi-channel ROCKET

ROCKET is a feature extraction method that uses random convolutional kernels to transform time-series data into derived features, including the maximum value and the proportion of positive values (PPV).
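The two pooled features named above, the maximum value and the PPV, can be illustrated with a simplified single-channel sketch. The kernel sampling follows the scheme of the original ROCKET paper [24] (lengths 7, 9, or 11, mean-centered Gaussian weights, uniform bias, exponentially sampled dilation, random padding); it is not the multi-channel variant proposed in this study, and the function names and demo data are hypothetical.

```python
import numpy as np

def random_kernel(rng, input_length):
    """Sample one random kernel in the style of the original ROCKET paper."""
    length = int(rng.choice([7, 9, 11]))
    weights = rng.normal(size=length)
    weights -= weights.mean()                       # mean-centered weights
    bias = rng.uniform(-1.0, 1.0)
    max_exp = np.log2((input_length - 1) / (length - 1))
    dilation = int(2 ** rng.uniform(0, max_exp))    # exponentially sampled dilation
    padding = ((length - 1) * dilation) // 2 if rng.integers(2) == 1 else 0
    return weights, bias, dilation, padding

def apply_kernel(x, weights, bias, dilation, padding):
    """Dilated convolution of one series, pooled to (max, PPV)."""
    if padding > 0:
        x = np.pad(x, padding)
    span = (len(weights) - 1) * dilation
    n_out = len(x) - span
    out = np.full(n_out, bias)
    for j, w in enumerate(weights):
        out += w * x[j * dilation : j * dilation + n_out]
    return out.max(), float((out > 0).mean())

def rocket_features(X, n_kernels=50, seed=0):
    """Transform (n_series, length) into (n_series, 2 * n_kernels) features."""
    rng = np.random.default_rng(seed)
    kernels = [random_kernel(rng, X.shape[1]) for _ in range(n_kernels)]
    feats = np.empty((X.shape[0], 2 * n_kernels))
    for i, x in enumerate(X):
        for k, kernel in enumerate(kernels):
            # Even columns hold the max, odd columns hold the PPV.
            feats[i, 2 * k], feats[i, 2 * k + 1] = apply_kernel(x, *kernel)
    return feats

# Hypothetical demo: 5 random series of length 100, 50 kernels -> 100 features.
X_demo = np.random.default_rng(1).normal(size=(5, 100))
feats = rocket_features(X_demo, n_kernels=50)
print(feats.shape)
```

Because each kernel contributes exactly two features, the number of features is always twice the number of kernels, and the kernels are never trained: only the linear classifier fitted on these features has learned parameters.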
Unlike typical deep neural networks (DNNs), which require back-propagation to adjust the weights of their layers, the ROCKET model uses a very large number of random convolutional kernels to capture features that are relevant to time-series classification, which substantially reduces training time. A further benefit of ROCKET is that it has far fewer hyper-parameters than a DNN, which not only reduces the time-consuming and laborious task of fine-tuning but also makes the model more accessible and robust. ROCKET was originally designed for single-channel time-series data and is therefore limited when applied to multichannel datasets. To address this, we re-engineered the generation of the random CNN kernels. First, random CNN kernels are generated for a single channel. These kernels are then broadcast to all channels. Finally, the channel axis is averaged to accommodate multichannel data. The detailed algorithmic structure of this multi-channel ROCKET model is outlined in Algorithm 1.

We employed the ridge classifier, an extension of ridge regression, because of its efficacy in handling multicollinearity and high-dimensional feature spaces. The classifier was trained on ROCKET-transformed SSVEP signals to perform 4-class classification of ear-EEG data. Ridge regression is a widely adopted approach to parameter estimation in multiple linear regression because it mitigates the collinearity that often affects this type of analysis. It does so by adding a penalty on the size of the weights to the least-squares loss (see Eq. (7)) [29]:

\[ \min _{\textbf{w}} \Vert X\textbf{w} - y \Vert _2^2 + \alpha \Vert \textbf{w} \Vert _2^2 \tag{7} \]

where X is the design matrix, y is the target, \(\textbf{w}\) is the coefficient vector, and \(\alpha \ge 0\) controls the strength of the penalty. For binary classification, the classifier follows a two-step process. First, it transforms the binary targets into the set \(\{-1, 1\}\).
Then, it treats the classification problem as a regression task with the optimization objective given above. The predicted class is determined by the sign of the regressor’s prediction. Multi-class classification is treated as a multi-output regression task, and the classifier selects the class whose output has the highest value. This study transformed SSVEP signals using ROCKET with 10,000 convolutional kernels to extract 20,000 features. The ridge classifier [30] is well suited to situations in which the number of features is larger than the number of samples. It was used to classify the transformed SSVEP signals of ear-EEG.

In summary, this study carried out a comparative analysis of three computational approaches applied to the same ear-EEG-based SSVEP data. The methods evaluated were the established CCA-based technique, the deep learning-based Transformer, and the ’semi-machine’ learning method known as ROCKET. Each method was applied to preprocessed ear-EEG recordings that had been filtered and standardized. Specifically, the FBCCA method used 8-channel SSVEP data subjected to these preprocessing steps. In contrast, the data for the Transformer and ROCKET methods were further transformed through Morlet wavelet decomposition to enhance feature extraction (Fig. 4).

4 Results

4.1 SSVEP based on ear-EEG

Figure 5 shows the ear-EEG-based SSVEP components elicited by stimuli at different frequencies and analyzed via the Morlet wavelet transform. Notably, for the 5 Hz stimulus, the harmonic response at 15 Hz is more prominent than the 5 Hz fundamental. For the 7 Hz stimulus, the fundamental and the 21 Hz harmonic have comparable intensity. For the 9 Hz and 11 Hz stimuli, the fundamental components at 9 Hz and 11 Hz are more prominent than the harmonics.
When ROCKET is used to analyze ear-EEG, the wavelet transform is an indispensable processing step. The value of the FWHM (denoted as h in Eq. (2)) strongly affects the result of the Morlet wavelet transform, and its best value depends on the specific task. Figure 6 shows the distribution of the accuracy of the ROCKET model for different FWHMs. We found that the model performed best when \(FWHM = 0.75\cdot \text{ length }\_\text{ of }\_\text{ timewindow }\). In all time windows, the accuracy of target frequency classification based on ear-EEG did not increase further beyond h = 0.75. The time-frequency analysis based on ear-EEG revealed both the target frequency components and their harmonic components. Nonetheless, noticeable noise components were also present in the data. To verify the necessity of the Morlet wavelet transform, we applied the original data to the ROCKET model (Fig. 7). In Fig. 7, EEG data without the Morlet wavelet transform yield very low classification accuracy (orange) in the ROCKET model. The substantial discrepancy between the original and transformed data suggests that the Morlet wavelet transform is a critical preprocessing step in the Morlet-ROCKET model. The accuracies of target frequency classification based on the transformed data were higher than those based on the original data in all time windows, and statistical analysis showed significant differences between them.

4.2 Classification results of FBCCA, Transformer and Morlet-ROCKET

This study used ear-EEG-based time-frequency data as input for the Morlet-ROCKET models, and leave-one-out cross-validation was conducted (see Fig. 8). Additionally, the results of a Games-Howell test, conducted to compare the methods within the same time window, are presented in Fig. 8. They indicate that Morlet-ROCKET outperforms FBCCA and the Transformer in specific time windows, with significant accuracy improvements in the 1 s, 3 s, and 4 s windows.

4.3 Performance of Morlet-ROCKET model

The confusion matrices in Fig. 9 show that classification accuracy increases with the length of the time window. This trend is further supported by the ROC curves in Fig. 10, which compare the true positive rate against the false positive rate for the Morlet-ROCKET and Transformer models across different time windows. The Morlet-ROCKET model consistently performs better, as shown by the area under the curve (AUC) values, which reinforces its efficacy in SSVEP signal classification. This evaluation not only supports the superiority of the Morlet-ROCKET model over the traditional FBCCA and the more recent Transformer methods, but also highlights the crucial role of wavelet preprocessing in SSVEP signal classification. The methodological advances introduced by this study could pave the way for more robust and accurate BCI systems.

5 Discussion

The results in Fig. 5 show that visual stimulation frequencies such as 5 Hz, 7 Hz, 9 Hz, and 11 Hz, which are commonly used in traditional SSVEP experiments with head electrodes, can still be detected from electrodes around the ears. This supports the feasibility of simplifying BCI systems by relocating the electrodes to the periphery of the ears, which could improve user comfort and system practicality. As indicated in Fig. 6, model accuracy improves with increasing FWHM, which highlights that SSVEP classification depends more on frequency resolution than on temporal precision. This observation is consistent with the frequency-domain characteristics of SSVEP signals and merits further investigation into the optimal balance between frequency resolution and temporal accuracy in SSVEP-based BCIs.

The comparison in Fig. 7 shows a significant improvement in performance when the ROCKET framework uses data transformed via the Morlet wavelet rather than the original, untransformed data. EEG data are inherently noisy and non-stationary, which leads to a decrease in ROCKET’s performance as the time window lengthens. The wavelet transform is an effective strategy for reducing noise and emphasizing signal characteristics that are more stable over time. It helps preserve crucial information that might otherwise be lost when the analysis is conducted on raw data alone. Such information loss is particularly evident in longer time windows because signal variability increases. Integrating the wavelet transform mitigates these issues and improves the ROCKET model’s ability to analyze EEG data across different time spans.

Figure 8 shows that the Morlet-ROCKET model is more accurate than the conventional FBCCA and Transformer approaches, particularly in the 1 s, 3 s, and 4 s time windows. This accuracy depends on preprocessing the EEG data via the Morlet wavelet transform, which suggests that raw EEG data may be ill-suited to ROCKET-based classification without such preprocessing. The classification accuracies for the different stimulus frequencies, detailed in the confusion matrices in Fig. 9, indicate a notable discrepancy in the ROCKET model’s performance across time windows. The improved accuracy for the 5 Hz stimulus in the 4 s window suggests that a longer time window benefits the classification of lower-frequency stimuli, potentially because more data are available for pattern recognition. Lastly, the ROC curves in Fig. 10 further support the efficacy of the Morlet-ROCKET model.
The AUC values for both the ROCKET and Transformer models increase with the length of the time window, which reinforces the notion that longer time windows may allow more accurate SSVEP signal classification. These results emphasize the importance of preprocessing in SSVEP-based BCIs and suggest that the Morlet-ROCKET model, which accommodates different time windows and stimulus frequencies, could provide a robust framework for future BCI applications. Future work should aim to validate these findings in larger participant cohorts and explore the integration of this approach into real-world BCI systems.

6 Conclusion

This study introduced a hybrid model that combines the Morlet wavelet transform and the ROCKET algorithm for classifying SSVEP signals from ear-EEG data. The model outperformed FBCCA and the Transformer in detecting frequencies of 5 Hz, 7 Hz, 9 Hz, and 11 Hz. Its accuracy of \(75.5\pm 6.7\%\) exceeds the \(40\sim 70\%\) range reported in prior ear-EEG SSVEP studies [31]. These findings support the model’s efficacy and highlight its potential for practical BCI applications, pointing towards non-invasive and user-friendly BCI systems. Further research is necessary to explore the full potential of this approach in diverse settings and across a wider frequency range. However, the ROCKET algorithm’s tendency to saturate with large datasets leaves scope for further optimization. Future research should focus on improving the model’s efficiency across a broader frequency range and in diverse settings, paving the way for more versatile and effective BCI applications.


Availability of data and materials

The datasets generated during and/or analyzed during the current study are available from the corresponding author on reasonable request.

References

Douibi K, Le Bars S, Lemontey A, Nag L, Balp R, Breda G. Toward EEG-based BCI applications for industry 4.0: challenges and possible applications. Front Hum Neurosci. 2021;456.

Wang X, Gong G, Li N, Ma Y. A survey of the BCI and its application prospect. Springer; 2016. p. 102–11.

Veena N, Anitha N. A review of non-invasive BCI devices. Int J Biomed Eng Technol. 2020;34(3):205–33.

Xu M, Han J, Wang Y, Jung T-P, Ming D. Implementing over 100 command codes for a high-speed hybrid brain–computer interface using concurrent p300 and SSVEP features. IEEE Trans Biomed Eng. 2020;67(11):3073–82.

Zhang Y, Guo D, Li F, Yin E, Zhang Y, Li P, Zhao Q, Tanaka T, Yao D, Xu P. Correlated component analysis for enhancing the performance of SSVEP-based brain-computer interface. IEEE Trans Neural Syst Rehabil Eng. 2018;26(5):948–56.

Wang Y, Chen X, Gao X, Gao S. A benchmark dataset for SSVEP-based brain-computer interfaces. IEEE Trans Neural Syst Rehabil Eng. 2016;25(10):1746–52.

Li Y, Xiang J, Kesavadas T. Convolutional correlation analysis for enhancing the performance of SSVEP-based brain-computer interface. IEEE Trans Neural Syst Rehabil Eng. 2020;28(12):2681–90.

Guney OB, Oblokulov M, Ozkan H. A deep neural network for SSVEP-based brain-computer interfaces. IEEE Trans Biomed Eng. 2021;69(2):932–44.

Yao H, Liu K, Deng X, Tang X, Yu H. FB-EEGNet: a fusion neural network across multi-stimulus for SSVEP target detection. J Neurosci Methods. 2022;379:109674.

Friman O, Volosyak I, Graser A. Multiple channel detection of steady-state visual evoked potentials for brain–computer interfaces. IEEE Trans Biomed Eng. 2007;54(4):742–50.

Zhang Y, Zhou G, Zhao Q, Onishi A, Jin J, Wang X, Cichocki A. Multiway canonical correlation analysis for frequency components recognition in SSVEP-based BCIs. In: Proceedings of the 18th international conference on neural information processing, ICONIP 2011, Shanghai, China, November 13-17, 2011, Part I 18, Springer; 2011. p. 287–295.

Wang Y, Wang R, Gao X, Hong B, Gao S. A practical VEP-based brain–computer interface. IEEE Trans Neural Syst Rehabil Eng. 2006;14(2):234–40.

Chen X, Chen Z, Gao S, Gao X. A high-ITR SSVEP-based BCI speller. Brain Comput Interfaces. 2014;1(3–4):181–91.

Nakanishi M, Wang Y, Wang Y-T, Jung T-P. A comparison study of canonical correlation analysis based methods for detecting steady-state visual evoked potentials. PLoS ONE. 2015;10(10):0140703.

Zhang Y, Zhou G, Jin J, Wang M, Wang X, Cichocki A. L1-regularized multiway canonical correlation analysis for SSVEP-based BCI. IEEE Trans Neural Syst Rehabil Eng. 2013;21(6):887–96.

Zhang Y, Zhou G, Jin J, Wang X, Cichocki A. Frequency recognition in SSVEP-based BCI using multiset canonical correlation analysis. Int J Neural Syst. 2014;24(04):1450013.

Kwak N-S, Lee S-W. Error correction regression framework for enhancing the decoding accuracies of ear-EEG brain–computer interfaces. IEEE Trans Cybern. 2019;50(8):3654–67.

Floriano A, Diez PF, Freire Bastos-Filho T. Evaluating the influence of chromatic and luminance stimuli on SSVEPs from behind-the-ears and occipital areas. Sensors. 2018;18(2):615.

Vaswani A, Shazeer N, Parmar N, Uszkoreit J, Jones L, Gomez AN, Kaiser Ł, Polosukhin I. Attention is all you need. In: Advances in neural information processing systems. 2017;30.

Devlin J, Chang M-W, Lee K, Toutanova K. BERT: pre-training of deep bidirectional transformers for language understanding. arXiv:1810.04805; 2018.

Zerveas G, Jayaraman S, Patel D, Bhamidipaty A, Eickhoff C. A transformer-based framework for multivariate time series representation learning. In: Proceedings of the 27th ACM SIGKDD conference on knowledge discovery and data mining; 2021. pp. 2114–2124.

Lin T, Wang Y, Liu X, Qiu X. A survey of transformers. AI Open; 2022.

Pan Y, Chen J, Zhang Y, Zhang Y. An efficient CNN-LSTM network with spectral normalization and label smoothing technologies for SSVEP frequency recognition. J Neural Eng. 2022;19(5):056014.

Dempster A, Petitjean F, Webb GI. Rocket: exceptionally fast and accurate time series classification using random convolutional kernels. Data Min Knowl Discov. 2020;34(5):1454–95.

Mu J, Grayden DB, Tan Y, Oetomo D. Comparison of steady-state visual evoked potential (SSVEP) with lcd vs. led stimulation. In: 2020 42nd annual international conference of the IEEE engineering in medicine and biology society (EMBC), IEEE; 2020. p. 2946–2949

Chen X, Wang Y, Gao S, Jung T-P, Gao X. Filter bank canonical correlation analysis for implementing a high-speed SSVEP-based brain–computer interface. J Neural Eng. 2015;12(4):046008.

Goupillaud P, Grossmann A, Morlet J. Cycle-octave and related transforms in seismic signal analysis. Geoexploration. 1984;23(1):85–102.

Cohen MX. A better way to define and describe Morlet wavelets for time-frequency analysis. NeuroImage. 2019;199:81–6.

McDonald GC. Ridge regression. Wiley Interdiscip Rev Comput Stat. 2009;1(1):93–100.

Peng C, Cheng Q. Discriminative ridge machine: a classifier for high-dimensional data or imbalanced data. IEEE Trans Neural Netw Learn Syst. 2020;32(6):2595–609.

Sterr A, Ebajemito JK, Mikkelsen KB, Bonmati-Carrion MA, Santhi N, Della Monica C, Grainger L, Atzori G, Revell V, Debener S, et al. Sleep EEG derived from behind-the-ear electrodes (cEEGrid) compared to standard polysomnography: a proof of concept study. Front Hum Neurosci. 2018;12:452.

Funding

The authors have not disclosed any funding.

Author information


Contributions

XL and GC wrote the main manuscript. TH and FK recorded data. HT revised manuscript.


Ethics declarations

Competing interests

There are no conflicts of interest.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Li, X., Haba, T., Cui, G. et al. The classification of SSVEP-BCI based on ear-EEG via RandOm Convolutional KErnel Transform with Morlet wavelet. Discov Appl Sci 6, 149 (2024). https://doi.org/10.1007/s42452-024-05816-2
