Abstract

Logistic reduced rank regression is a useful data analysis tool when we have multiple binary response variables and a set of predictors. In this paper, we describe logistic reduced rank regression and present a new majorization minimization algorithm for the estimation of model parameters. Furthermore, we discuss Type I and Type D triplots for visualizing the results of a logistic reduced rank regression model, compare them, and then develop a hybrid triplot using elements of both types. Two empirical data sets are analyzed. This analysis is used to (1) compare the new algorithm to an existing one in terms of speed; and (2) show the hybrid triplot and its interpretation.


1 Introduction

In many scientific disciplines, quantitative information is collected on a set of variables for a number of objects or participants. When the set of variables can be divided into a set of P predictors and a set of R responses, we need regression-type models. Researchers often neglect the multivariate nature of the response set and consequently fit univariate multiple regression models, one for each response variable separately. Such an approach does not take into account that the response variables might be correlated and does not provide insight into the relationships between the response variables. In this paper, the interest lies in the case where the R response variables are binary.

For binary response variables, the typical regression model is a logistic regression (Agresti 2013), that is, a generalized linear model (McCullagh and Nelder 1989) with logit link function and a Bernoulli or binomial distribution. In logistic regression models, the probability that person \(i\) answers yes (or 1) on the response variable Y is defined as \(\pi _{i} = P(Y_{i} = 1)\). These probabilities (or estimated values) are commonly defined in terms of the log-odds form (in GLMs the "linear predictor"), denoted by \(\theta _{i}\), that is,

\[\pi _{i} = \frac{\exp (\theta _{i})}{1 + \exp (\theta _{i})},\]

and similarly

\[1 - \pi _{i} = \frac{1}{1 + \exp (\theta _{i})}.\]

Finally, the log-odds form is a (linear) function of the P predictor variables, that is

\[\theta _{i} = m + \sum _{p=1}^{P} x_{ip} a_{p},\]

where \(x_{ip}\) is the observed value for person i on predictor variable p and m and \(a_p\) are the parameters which need to be estimated. The intercept m is the expected log-odds when all predictor variables equal zero, and the regression weights \(a_p\) indicate the difference in log-odds for two observations that differ by one unit in predictor variable p and have equal values for all other predictor variables.
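The relation between the log-odds form and the probability can be checked numerically. The following is a minimal sketch using only the Python standard library; the intercept, the weights, and the predictor values are hypothetical numbers chosen for illustration, and the function names `inv_logit` and `log_odds` are ours.

```python
import math

def inv_logit(theta):
    """Map a log-odds value theta to the probability exp(theta) / (1 + exp(theta))."""
    return 1.0 / (1.0 + math.exp(-theta))

def log_odds(x, m, a):
    """Linear predictor: theta = m + sum_p x_p * a_p."""
    return m + sum(xp * ap for xp, ap in zip(x, a))

# Hypothetical parameter values, chosen only for illustration.
m = -0.5
a = [0.8, -0.3]

x_low = [1.0, 2.0]
x_high = [2.0, 2.0]   # differs from x_low by one unit on the first predictor

theta_low = log_odds(x_low, m, a)
theta_high = log_odds(x_high, m, a)
pi_low = inv_logit(theta_low)
pi_high = inv_logit(theta_high)

# The difference in log-odds equals the regression weight of the first predictor.
difference = theta_high - theta_low
```

The difference in log-odds between the two hypothetical observations equals the first regression weight, which is the interpretation of \(a_p\) given above.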

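Having R outcome variables (\(Y_r\), \(r = 1,\ldots , R\)), a multivariate model can be defined with probabilities \(\pi _{ir} = P(Y_{ir} = 1)\) that are parameterized as

\[\pi _{ir} = \frac{\exp (\theta _{ir})}{1 + \exp (\theta _{ir})},\]

and the log-odds form is

\[\theta _{ir} = m_r + \sum _{p=1}^{P} x_{ip} a_{pr}.\]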

The intercepts can be collected in a vector \({\varvec{m}}\) and the regression weights can be collected in a matrix \({\varvec{A}}\) of size \(P \times R\).

For multivariate outcomes, Yee and Hastie (2003) proposed reduced rank vector generalized linear models, which are multivariate models with a rank constraint on the matrix with regression weights, that is,

\[{\varvec{A}} = {\varvec{B}}{\varvec{V}}',\]

where \({\varvec{B}}\) is a matrix of size \(P \times S\) and \({\varvec{V}}\) a matrix of size \(R \times S\). The rank of the matrix \({\varvec{A}}\) is S, a number in the range 1 to \(\min (P, R)\), and when \(S < \min (P, R)\) the rank is reduced, hence the name reduced rank regression. The matrix \({\varvec{B}}\) has elements \(b_{ps}\) for \(s = 1,\ldots , S\) and \({\varvec{V}}\) has elements \(v_{rs}\). The S elements for the predictor variable p (i.e., the p-th row of \({\varvec{B}}\)), are collected in the column vector \({\varvec{b}}_p\); similarly, the S elements for response variable r are collected in the column vector \({\varvec{v}}_r\).

At this point, it is instructive to take a step back to models for continuous outcomes. Reduced rank regression (Anderson 1951; Izenman 1975; Tso 1981; Davies and Tso 1982), also called redundancy analysis (Van den Wollenberg 1977), has been proposed as a multivariate tool for simultaneously predicting the responses from the set of predictors. Reduced rank regression can be motivated from a regression point of view, but also from a principal component point of view.

From the regression point of view of reduced rank regression, the goal is to predict the response variables using the set of predictor variables. We, therefore, set up a multivariate regression model

\[{\varvec{Y}} = {\varvec{1}}{\varvec{m}}' + {\varvec{X}}{\varvec{A}} + {\varvec{E}},\]

where \({\varvec{m}}\) denotes the vector with the R intercepts, \({\varvec{A}}\) is a \(P \times R\) matrix with regression weights, and \({\varvec{E}}\) is a matrix with residuals. In the usual multivariate regression model the matrix of regression coefficients is unrestricted. In reduced rank regression, the restriction \({\varvec{A}} = {\varvec{B}}{\varvec{V}}'\) is imposed.

Reduced rank regression can also be cast as a constrained principal component analysis (PCA; Takane 2013). In PCA, the matrix with multivariate responses (\({\varvec{Y}}\)) of size \(N \times R\) is decomposed into a matrix with object scores (\({\varvec{U}}\)) of dimension \(N \times S\), and a matrix with variable loadings (\({\varvec{V}}\)) of size \(R \times S\). The rank, or dimensionality, S should be smaller than or equal to \(\min (N, R)\). We can write PCA as the following expression

\[{\varvec{Y}} = {\varvec{1}}{\varvec{m}}' + {\varvec{U}}{\varvec{V}}' + {\varvec{E}},\]

where usually, identifiability constraints are imposed such as \({\varvec{V}}'{\varvec{V}} = {\varvec{I}}\) or \({\varvec{U}}'{\varvec{U}} = N{\varvec{I}}\). To estimate the PCA parameters, usually the means of the responses are computed, that is \(\hat{{\varvec{m}}} = N^{-1} {\varvec{Y}}'{\varvec{1}}\). Then, the centered response matrix \({\varvec{Y}}_c = {\varvec{Y}} - {\varvec{1}}\hat{{\varvec{m}}}'\) is computed, and subsequently \({\varvec{U}}\) and \({\varvec{V}}\) are estimated by minimizing the least squares loss function

\[{\mathcal {L}}({\varvec{U}}, {\varvec{V}}) = \Vert {\varvec{Y}}_c - {\varvec{U}}{\varvec{V}}'\Vert ^2,\]

where \(\Vert \cdot \Vert ^2\) denotes the squared Frobenius norm of a matrix. Eckart and Young (1936) show that this can be achieved by a singular value decomposition. With predictor variables, the scores (\({\varvec{U}}\)) can be restricted to be a linear combination of these predictor variables, sometimes called external variables (i.e., \({\varvec{X}}\)), that is, \({\varvec{U}} = {\varvec{XB}}\), with \({\varvec{B}}\) a \(P \times S\) matrix, to be estimated. Ten Berge (1993) shows that \({\varvec{B}}\) and \({\varvec{V}}\) can be estimated using a generalized singular value decomposition in the metrics \({\varvec{X}}'{\varvec{X}}\) and \({\varvec{I}}\) (see Appendix A for details), that is, we decompose \(({\varvec{X}}'{\varvec{X}})^{-\frac{1}{2}}{\varvec{X}}'{\varvec{Y}}_c\) with a singular value decomposition, that is

\[({\varvec{X}}'{\varvec{X}})^{-\frac{1}{2}}{\varvec{X}}'{\varvec{Y}}_c = {\varvec{P}}{\varvec{\Phi }}{\varvec{Q}}',\]

where \({\varvec{P}}'{\varvec{P}} = {\varvec{Q}}'{\varvec{Q}} = {\varvec{I}}\) and \({\varvec{\Phi }}\) is a diagonal matrix with singular values. Subsequently, with \({\varvec{P}}_S\) and \({\varvec{Q}}_S\) containing the singular vectors that correspond to the S largest singular values and \({\varvec{\Phi }}_S\) the diagonal matrix with these singular values, define the following estimates

\[\hat{{\varvec{B}}} = \sqrt{N} ({\varvec{X}}'{\varvec{X}})^{-\frac{1}{2}} {\varvec{P}}_S, \qquad \hat{{\varvec{V}}} = (\sqrt{N})^{-1} {\varvec{Q}}_S{\varvec{\Phi }}_S.\]

The intercepts \({\varvec{m}}\) are subsequently estimated as

\[\hat{{\varvec{m}}} = N^{-1} ({\varvec{Y}} - {\varvec{X}}\hat{{\varvec{B}}}\hat{{\varvec{V}}}')'{\varvec{1}}.\]

Reduced rank regression can thus be understood from two different points of view: a multivariate regression model with constraints on the regression weights or a principal component analysis with constraints on the object scores. Yee and Hastie (2003) approached logistic reduced rank regression as a multivariate regression model with a rank constraint on the regression weights. We could also approach logistic reduced rank regression from the PCA point of view. PCA for binary variables has received considerable attention lately (Collins et al. 2001; Schein et al. 2003; De Leeuw 2006; Landgraf and Lee 2020).

De Leeuw (2006) defined the log-odds term \(\theta _{ir}\) in terms of a principal component analysis \(\theta _{ir} = m_r + {\varvec{u}}_{i}'{\varvec{v}}_{r}\), where \({\varvec{u}}_i\) is the vector with object scores for participant i. Similar to standard PCA, object scores and variable loadings are obtained. These scores and loadings, however, reconstruct the log-odds term, not the response variables themselves. De Leeuw (2006) also proposed a Majorization Minimization (MM) algorithm (Heiser 1995; Hunter and Lange 2004; Nguyen 2017) for maximum likelihood estimation of the parameters. In the majorization step, the negative log-likelihood is majorized by a least squares function. This majorizing least squares function is minimized by applying a singular value decomposition to a matrix with working responses, which are variables that function as the response variables in each iteration of the algorithm but are updated from iteration to iteration.

Yee and Hastie (2003, Sect. 3.4) note that “in general, minimization of the negative likelihood cannot be achieved by use of the singular value decomposition” and therefore proposed an alternating algorithm, where in each iteration first \({\varvec{B}}\) is estimated considering \({\varvec{V}}\) fixed, and subsequently \({\varvec{V}}\) is estimated considering \({\varvec{B}}\) fixed. For both steps a weighted least squares update is derived, where in every iteration the weights and the responses need to be redefined based on the current set of parameters. In the next section, we develop an MM algorithm for logistic reduced rank regression (note, not all reduced rank generalized linear models) based on the work of De Leeuw (2006), where in each of the iterations a generalized singular value decomposition is applied. We compare the two algorithms in terms of speed of computation in Sect. 4.

For interpretation of (logistic) reduced rank models, a researcher can inspect the estimated coefficients \({\varvec{A}} = {\varvec{BV}}'\). Coefficients in this matrix can be interpreted like the usual regression weights in (logistic) regression models. Because the number of coefficients is usually large (i.e., \(P \times R\)) it is difficult to obtain a holistic interpretation from this matrix. Visualization can help to obtain such a holistic interpretation of the reduced rank model. PCA solutions can be graphically represented by biplots (Gabriel 1971; Gower and Hand 1996; Gower et al. 2011). Biplots are generalizations of usual scatterplots for multivariate data, where the observations (\(i = 1,\ldots , N\)) and the variables (\(r = 1,\ldots , R\)) are represented in low dimensional visualizations. The observations are represented by points, whereas the variables are represented by variable axes. These biplots have been extended for reduced rank regression (Ter Braak and Looman 1994). The extended plots represent not only observations and response variables but also predictor variables by variable axes, that is, they represent three different types of information, and therefore we call them triplots.

For visualization of the logistic reduced rank regression model, two types of triplots have been proposed. Vicente-Villardón et al. (2006) and Vicente-Villardón and Vicente-Gonzalez (2019) modified the usual biplots/triplots for the representation of binary data based on logistic models. Another type of triplot was proposed by Poole and Rosenthal (1985), Clinton et al. (2004), Poole et al. (2011), and De Rooij and Groenen (2022).

2.1 General theory about MM algorithms

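The idea of MM for finding a minimum of the function \({\mathcal {L}}({\varvec{\theta }})\), where \({\varvec{\theta }}\) is a vector of parameters, is to define an auxiliary function, called a majorization function, \({\mathcal {M}}({\varvec{\theta }}|{\varvec{\vartheta }})\) with two characteristics

\[{\mathcal {L}}({\varvec{\vartheta }}) = {\mathcal {M}}({\varvec{\vartheta }}|{\varvec{\vartheta }}),\]

where \({\varvec{\vartheta }}\) is a supporting point, and

\[{\mathcal {L}}({\varvec{\theta }}) \le {\mathcal {M}}({\varvec{\theta }}|{\varvec{\vartheta }}).\]

The two equations tell us that \({\mathcal {M}}({\varvec{\theta }}|{\varvec{\vartheta }})\) is a function that lies above (i.e., majorizes) the original function and touches the original function at the supporting point. Because of the above two properties, an iterative sequence defines a convergent algorithm because by construction

\[{\mathcal {L}}({\varvec{\theta }}^+) \le {\mathcal {M}}({\varvec{\theta }}^+|{\varvec{\vartheta }}) \le {\mathcal {M}}({\varvec{\vartheta }}|{\varvec{\vartheta }}) = {\mathcal {L}}({\varvec{\vartheta }}),\]

where \({\varvec{\theta }}^+\) is

\[{\varvec{\theta }}^+ = \mathop {\textrm{argmin}}_{{\varvec{\theta }}} \ {\mathcal {M}}({\varvec{\theta }}|{\varvec{\vartheta }}),\]

the updated parameter. A main advantage of MM algorithms is that they always converge monotonically to a (local) minimum. The challenge is to find a parametrized function family, \({\mathcal {M}}({\varvec{\theta }}|{\varvec{\vartheta }})\), that can be used in every step.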

In our case, the original function equals the negative log-likelihood. We majorize this function with a least squares function, that is, \({\mathcal {M}}({\varvec{\theta }}|{\varvec{\vartheta }})\) is a least squares function. We use majorization via a quadratic upper bound, that is, for a twice differentiable function \({\mathcal {L}}({\varvec{\theta }})\) and for each \({\varvec{\vartheta }}\) the function

\[{\mathcal {M}}({\varvec{\theta }}|{\varvec{\vartheta }}) = {\mathcal {L}}({\varvec{\vartheta }}) + \partial {\mathcal {L}}({\varvec{\vartheta }})'({\varvec{\theta }} - {\varvec{\vartheta }}) + \frac{1}{2}({\varvec{\theta }} - {\varvec{\vartheta }})'{\varvec{A}}({\varvec{\theta }} - {\varvec{\vartheta }})\]

majorizes \({\mathcal {L}}({\varvec{\theta }})\) at \({\varvec{\vartheta }}\) when the matrix \({\varvec{A}}\) is such that

\[{\varvec{A}} - \partial ^2 {\mathcal {L}}({\varvec{\theta }})\]

is positive semi-definite for every \({\varvec{\theta }}\). We also use the property that majorization is closed under summation, that is, when \({\mathcal {M}}_1\) majorizes \({\mathcal {L}}_1\) and \({\mathcal {M}}_2\) majorizes \({\mathcal {L}}_2\), then \({\mathcal {M}}_1 + {\mathcal {M}}_2\) majorizes \({\mathcal {L}}_1 + {\mathcal {L}}_2\).

2.2 The algorithm

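Let us recap our loss function

\[{\mathcal {L}}({\varvec{\theta }}) = \sum _i \sum _r {\mathcal {L}}_{ir}(\theta _{ir}), \qquad {\mathcal {L}}_{ir}(\theta _{ir}) = -y_{ir} \log \pi _{ir} - (1 - y_{ir}) \log (1 - \pi _{ir}).\]

Because of the summation property, we can focus on a single element, \({\mathcal {L}}_{ir}(\theta _{ir})\). The first derivative of \({\mathcal {L}}_{ir}(\theta _{ir})\) with respect to \(\theta _{ir}\) is

\[\frac{\partial {\mathcal {L}}_{ir}(\theta _{ir})}{\partial \theta _{ir}} = -(y_{ir} - \pi _{ir}).\]

Filling in the derivative and using the upper bound \(A = \frac{1}{4}\) (Böhning and Lindsay 1988; Hunter and Lange 2004), we have that

\[{\mathcal {L}}_{ir}(\theta _{ir}) \le {\mathcal {L}}_{ir}(\vartheta _{ir}) + \xi _{ir}(\theta _{ir} - \vartheta _{ir}) + \frac{1}{8}(\theta _{ir} - \vartheta _{ir})^2,\]

with \(\xi _{ir} = -(y_{ir} - \pi _{ir})\) denoting the derivative evaluated at the supporting point \(\vartheta _{ir}\).

Let us now define \(z_{ir} = \vartheta _{ir} - 4\xi _{ir}\) to obtain

\[{\mathcal {L}}_{ir}(\theta _{ir}) \le \frac{1}{8}(\theta _{ir} - z_{ir})^2 + c_{ir},\]

where \(c_{ir} = {\mathcal {L}}_{ir}(\vartheta _{ir}) - \frac{1}{8}z_{ir}^2 - \xi _{ir}\vartheta _{ir} + \frac{1}{8}\vartheta _{ir}^2\) is a constant.

Now as

\[{\mathcal {L}}({\varvec{\theta }}) = \sum _i \sum _r {\mathcal {L}}_{ir}(\theta _{ir}),\]

we have that

\[{\mathcal {L}}({\varvec{\theta }}) \le \frac{1}{8} \sum _i \sum _r (\theta _{ir} - z_{ir})^2 + c,\]

a least squares majorization function, with \(c = \sum _i \sum _r c_{ir}\).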
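The two defining properties of the majorization function can be verified numerically for a single element. The sketch below is an illustration only: it takes \({\mathcal {L}}_{ir}\) to be the negative Bernoulli log-likelihood, uses \(\xi _{ir} = -(y_{ir} - \pi _{ir})\) at the supporting point, and checks on a grid that the least squares function never falls below the original function and touches it at the supporting point. The function names and the grid are our own choices.

```python
import math

def neg_loglik(theta, y):
    """Negative Bernoulli log-likelihood of one binary response y at log-odds theta."""
    # Equals log(1 + exp(theta)) - y * theta, written in a numerically stable form.
    return max(theta, 0.0) + math.log1p(math.exp(-abs(theta))) - y * theta

def majorizer(theta, support, y):
    """Least squares majorizer (1/8) * (theta - z)^2 + c built at the supporting point."""
    pi = 1.0 / (1.0 + math.exp(-support))
    xi = -(y - pi)                    # first derivative at the supporting point
    z = support - 4.0 * xi            # working response
    c = neg_loglik(support, y) - z ** 2 / 8.0 - xi * support + support ** 2 / 8.0
    return (theta - z) ** 2 / 8.0 + c

# Evaluate the gap M - L on a grid of theta values for several supporting points.
grid = [t / 10.0 for t in range(-80, 81)]
gaps = [majorizer(t, s, y) - neg_loglik(t, y)
        for y in (0, 1) for s in (-3.0, 0.0, 1.5) for t in grid]

min_gap = min(gaps)                                          # never meaningfully below zero
touch_gap = majorizer(1.5, 1.5, 1) - neg_loglik(1.5, 1)      # zero up to rounding
```

The bound holds because the second derivative of the negative log-likelihood, \(\pi _{ir}(1 - \pi _{ir})\), never exceeds \(\frac{1}{4}\).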

For logistic principal component analysis, De Leeuw (2006) defined \(\theta _{ir} = m_r + {\varvec{u}}_i'{\varvec{v}}_r\). Collecting the elements \(z_{ir}\) in the matrix \({\varvec{Z}}\), in every iteration of the MM-algorithm he minimizes

\[\Vert {\varvec{Z}} - {\varvec{1m}}' - {\varvec{UV}}'\Vert ^2.\]

For logistic reduced rank regression, we define \(\theta _{ir} = m_r + {\varvec{x}}_i'{\varvec{B}}{\varvec{v}}_r\). In every iteration of the MM-algorithm we minimize

\[\Vert {\varvec{Z}} - {\varvec{1m}}' - {\varvec{XBV}}'\Vert ^2,\]

which can be done by computing the mean and a generalized singular value decomposition as in Expressions 1, 2, and 3. In detail, in every iteration we compute \({\varvec{Z}} = {\varvec{1m}}' + {\varvec{XBV}}' + 4({\varvec{Y}} - {\varvec{\Pi }})\), where \({\varvec{\Pi }}\) is the matrix with elements \(\pi _{ir}\), using the current parameter values and update our parameters as

- \({\varvec{m}}^+ = N^{-1} ({\varvec{Z}} - {\varvec{XBV}}')'{\varvec{1}}\)
- \(({\varvec{X}}'{\varvec{X}})^{-\frac{1}{2}}{\varvec{X}}'({\varvec{Z}} - {\varvec{1m}}') = {\varvec{P}}{\varvec{\Phi }}{\varvec{Q}}'\)
- \({\varvec{B}}^+ = \sqrt{N} ({\varvec{X}}'{\varvec{X}})^{-\frac{1}{2}} {\varvec{P}}_S\)
- \({\varvec{V}}^+ = (\sqrt{N})^{-1} {\varvec{Q}}_S{\varvec{\Phi }}_S\)

where \({\varvec{P}}_S\) are the singular vectors corresponding to the S largest singular values, similarly for \({\varvec{Q}}_S\), and \({\varvec{\Phi }}_S\) is the diagonal matrix with the S largest singular values.
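The update steps can be traced in a special case that needs no linear algebra library. The sketch below assumes a single, centered predictor (\(P = S = 1\)), so that \(({\varvec{X}}'{\varvec{X}})^{-\frac{1}{2}}{\varvec{X}}'({\varvec{Z}} - {\varvec{1m}}')\) is a \(1 \times R\) row vector whose singular value decomposition is available in closed form. It is not the general algorithm and not the lmap implementation; the toy data and the function names `mm_step` and `deviance` are hypothetical.

```python
import math

def deviance(x, Y, m, b, v):
    """Twice the negative log-likelihood for theta_ir = m_r + x_i * b * v_r."""
    total = 0.0
    for xi, yi in zip(x, Y):
        for r, y in enumerate(yi):
            theta = m[r] + xi * b * v[r]
            total += max(theta, 0.0) + math.log1p(math.exp(-abs(theta))) - y * theta
    return 2.0 * total

def mm_step(x, Y, m, b, v):
    """One MM update for a single centered predictor (P = S = 1)."""
    n, R = len(x), len(m)
    sxx = sum(xi * xi for xi in x)
    # Working responses Z = theta + 4 (Y - Pi) at the current parameter values.
    Z = []
    for xi, yi in zip(x, Y):
        row = []
        for r in range(R):
            theta = m[r] + xi * b * v[r]
            pi = 1.0 / (1.0 + math.exp(-theta))
            row.append(theta + 4.0 * (yi[r] - pi))
        Z.append(row)
    # m+ = N^{-1} (Z - X B V')' 1
    m_new = [sum(Z[i][r] - x[i] * b * v[r] for i in range(n)) / n for r in range(R)]
    # g = (x'x)^{-1/2} x'(Z - 1 m+'); for a 1 x R matrix, P = 1, Phi = ||g||, Q = g / ||g||.
    g = [sum(x[i] * (Z[i][r] - m_new[r]) for i in range(n)) / math.sqrt(sxx)
         for r in range(R)]
    b_new = math.sqrt(n) / math.sqrt(sxx)      # B+ = sqrt(N) (X'X)^{-1/2} P_S
    v_new = [gr / math.sqrt(n) for gr in g]    # V+ = Q_S Phi_S / sqrt(N), i.e. g / sqrt(N)
    return m_new, b_new, v_new

# Hypothetical toy data: one centered predictor and two binary responses.
x = [-2.0, -1.5, -1.0, -0.5, 0.5, 1.0, 1.5, 2.0]
Y = [[0, 1], [0, 1], [1, 1], [0, 0], [1, 1], [0, 0], [1, 0], [1, 0]]

m, b, v = [0.0, 0.0], 1.0, [0.0, 0.0]
history = [deviance(x, Y, m, b, v)]
for _ in range(200):
    m, b, v = mm_step(x, Y, m, b, v)
    history.append(deviance(x, Y, m, b, v))
```

In this sketch the values in `history` should never increase, which is the monotone convergence property of MM algorithms, and the scores \(x_i b\) satisfy the identification constraint \({\varvec{U}}'{\varvec{U}} = N{\varvec{I}}\). For \(P > 1\) the closed-form step would have to be replaced by an actual singular value decomposition.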

3 Visualization

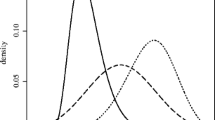

A rank 2 model can be visualized with a two-dimensional representation in a so-called triplot, which simultaneously shows three types of information: the predictor variables, the response variables, and the participants (objects). When a higher rank model is fitted, triplots can be constructed for any pair of dimensions.

We discuss two types of triplots for logistic reduced rank regression. The first is based on the inner product relationship where we project points, representing the participants, on response variable axes with markers indicating probabilities of responding with yes (or 1). We call this a triplot of type I. Another type of triplot was recently described in detail by De Rooij and Groenen (2023) and uses a distance representation. We call this a triplot of type D. The two triplots are equivalent in the sense that they represent the same information but in a different way. In this section, we describe the two triplots in detail, make a comparison, and propose a new hybrid type of triplot that combines the advantages of the two types of triplots.

Fig. 1 Visualization of logistic reduced rank models. (a) Graphical representation of two predictor variables and the process of interpolation for two observations A and B; (b) Type I representation of one response variable and the process of prediction for the two observations; (c) Type D representation of one response variable, where the distance between the two observations A and B and the two response classes determines the probabilities

3.1 The type I triplot

This type of logistic triplot was proposed by Vicente-Villardón et al. (2006) and Vicente-Villardón and Vicente-Gonzalez (2019). The objects, or participants, are depicted as points in a two-dimensional Euclidean space with coordinates \({\varvec{u}}_i = {\varvec{B}}'{\varvec{x}}_i\).

Each of the predictor variables is represented by a variable axis through the origin of the Euclidean space with direction \(b_{p2}/b_{p1}\). Markers can be added to the variable axis representing units \(t = \pm 1, \pm 2, \ldots\) with coordinates \(t{\varvec{b}}_p\). We use the convention that the variable axis has a dotted and a solid part. The solid part represents the observed range of the variable in the data, the dotted part extends the variable axis to the border of the display. The variable label is printed on the side with the highest value of the variable.

Object coordinates (\({\varvec{u}}_i\)) are a direct result of these predictor variable axes by the process of interpolation, as described in Gower and Hand (1996) and Gower et al. (2011).

This is illustrated in Fig. 1a, where two predictor variables are represented, one with regression weights 0.55 and -0.45 (diagonal variable axis), the other with regression weights -0.05 and -0.75 (almost vertical variable axis). Markers for the values -3 to 3 are added to both variable axes. Also included are two observations, A and B, with values 2 and 1 (A) and -3 and 2 (B) on the two predictor variables, respectively. The process of interpolation is illustrated by the grey dotted lines, that is, we have to add two vectors to obtain the coordinates for the two observations.
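The interpolation for observations A and B amounts to computing \({\varvec{u}}_i = {\varvec{B}}'{\varvec{x}}_i\), that is, adding the two predictor vectors after scaling each by the observed value. A minimal sketch using the regression weights and predictor values quoted for Fig. 1a; the function name `interpolate` is ours.

```python
# Regression weights from the Fig. 1a illustration:
# one row per predictor variable, one column per dimension.
B = [[0.55, -0.45],
     [-0.05, -0.75]]

def interpolate(x, B):
    """Object coordinates u = B'x: the sum of the predictor vectors b_p scaled by x_p."""
    n_dims = len(B[0])
    return [sum(x[p] * B[p][s] for p in range(len(B))) for s in range(n_dims)]

u_A = interpolate([2.0, 1.0], B)     # observation A: values 2 and 1
u_B = interpolate([-3.0, 2.0], B)    # observation B: values -3 and 2
```

With these numbers, A lies at about (1.05, -1.65) and B at about (-1.75, -0.15).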

Each of the response variables is also represented by a variable axis through the origin. The direction of the variable axis is \(v_{r2}/v_{r1}\). Markers can be added to these variable axes as well. We add markers that represent the probabilities of responding 1 equal to \(\pi = \{0.1, 0.2, \ldots , 0.9\}\). The location of these markers is given by \(\lambda ({\varvec{v}}_r'{\varvec{v}}_r)^{-1}{\varvec{v}}_r\), where \(\lambda = \log (\pi /(1-\pi )) - {\hat{m}}_r\) (based on Gower et al. 2011, page 24). To obtain the predicted probabilities for an object or participant for response variable r, the process called prediction (Gower and Hand 1996; Gower et al. 2011) has to be used, where the point representing this object is projected onto the variable axis.

This is illustrated in Fig. 1b for a single response variable with \(v_{r1} = -0.8\) and \(v_{r2} = -0.5\). The intercept is \(m = -1\). On the variable axis there are markers indicating the expected probabilities. By projecting the points of the observations, A and B, onto this variable axis we obtain the expected probabilities. For observation A this is approximately 0.28, while for observation B the expected probability is approximately 0.61.
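Projection onto the response variable axis is equivalent to evaluating \(\theta = m + {\varvec{u}}'{\varvec{v}}_r\) and transforming it to a probability, so the values read from the figure can be checked by computation. The sketch below uses the values quoted for Fig. 1b and the coordinates of A and B that follow from the Fig. 1a values; the function names are ours.

```python
import math

v = [-0.8, -0.5]   # response variable axis from the Fig. 1b illustration
m = -1.0           # intercept

def marker(pi, v, m):
    """Location of the marker for probability pi: lambda * v / (v'v)."""
    lam = math.log(pi / (1.0 - pi)) - m
    vv = sum(vs * vs for vs in v)
    return [lam * vs / vv for vs in v]

def predict(u, v, m):
    """Probability for an object at u; depends on u only through its projection onto v."""
    theta = m + sum(us * vs for us, vs in zip(u, v))
    return 1.0 / (1.0 + math.exp(-theta))

# Coordinates obtained by interpolation from the Fig. 1a values:
# A = 2 * (0.55, -0.45) + 1 * (-0.05, -0.75), B = -3 * (0.55, -0.45) + 2 * (-0.05, -0.75).
u_A = [1.05, -1.65]
u_B = [-1.75, -0.15]

p_A = predict(u_A, v, m)
p_B = predict(u_B, v, m)

markers = {pi / 10.0: marker(pi / 10.0, v, m) for pi in range(1, 10)}
half = markers[0.5]    # the decision line passes through this point
```

The computed probabilities are close to the approximate values quoted in the text, which were read from the figure, and the marker for \(\pi = 0.5\) is mapped back to a probability of one half, as it should be.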

The two-dimensional space can be partitioned into two parts by a decision line for response variable r. These decision lines are perpendicular to the response variable axis and through the \(\pi = 0.5\) marker. As we have a decision line for each response variable, we have R of these lines in total, together partitioning the space in a maximum of \(\sum _{s=0}^S \left( {\begin{array}{c}R\\ s\end{array}}\right)\) regions (Coombs and Kao 1955), each region having a favourite response profile.
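The maximum number of regions is a short sum of binomial coefficients. As an illustration, the sketch below evaluates it for a rank 2 model with 11 response variables (the dimensions of the drug consumption application); the function name `max_regions` is ours.

```python
from math import comb

def max_regions(R, S):
    """Upper bound on the number of regions formed by R decision lines (hyperplanes) in S dimensions."""
    return sum(comb(R, s) for s in range(S + 1))

regions = max_regions(11, 2)     # R = 11 responses, rank S = 2
three_lines = max_regions(3, 2)  # three lines in general position in the plane
```

For \(R = 11\) and \(S = 2\) the bound is 1 + 11 + 55 = 67 regions, far fewer than the \(2^{11}\) possible response profiles.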

3.2 The type D triplot

These types of triplots are described in detail in De Rooij and Groenen (2023). Similar work has been proposed earlier, mainly in the context of political roll call votes, by Poole and Rosenthal (1985), Clinton et al. (2004), and Poole et al. (2011).

In Type D triplots, the response variables are represented in a different manner, while the object points and the variable axes for the predictor remain the same (see Fig. 1a). Each response variable is represented by two points, one for the no-category (with coordinates \({\varvec{w}}_{r0}\)) and one for the yes-category (with coordinates \({\varvec{w}}_{r1}\)). The squared distance between an object location and these two points defines the probability, that is

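where \(d^2({\varvec{u}}_i,{\varvec{w}}_{r1})\) is the squared Euclidean two-mode distance

\[d^2({\varvec{u}}_i,{\varvec{w}}_{r1}) = \sum _{s=1}^{S} (u_{is} - w_{r1s})^2.\]

The coordinates \({\varvec{w}}_{r0}\) and \({\varvec{w}}_{r1}\) for all r can be collected in the \(2R \times S\) matrix \({\varvec{W}}\). This matrix can be reparametrized as

\[{\varvec{W}} = {\varvec{A}}_l{\varvec{L}} + {\varvec{A}}_k{\varvec{K}},\]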

with \({\varvec{A}}_l = {\varvec{I}}_R \otimes [1, 1]'\) and \({\varvec{A}}_k = {\varvec{I}}_R \otimes [1, -1]'\), where \(\otimes\) denotes the Kronecker product, and where \({\varvec{L}}\) is the \(R \times S\) matrix with response variable locations and \({\varvec{K}}\) the \(R \times S\) matrix representing the discriminatory power for the response variables. Elements of \({\varvec{K}}\) and \({\varvec{L}}\) are denoted by \(k_{rs}\) and \(l_{rs}\), respectively.
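Written out per response variable, the Kronecker structure has a simple reading. The lines below are a sketch under two assumptions that the text does not state explicitly: that the reparametrization has the form \({\varvec{W}} = {\varvec{A}}_l{\varvec{L}} + {\varvec{A}}_k{\varvec{K}}\), and that the rows of \({\varvec{W}}\) are ordered as \({\varvec{w}}_{10}, {\varvec{w}}_{11}, \ldots , {\varvec{w}}_{R0}, {\varvec{w}}_{R1}\). The symbols \({\varvec{l}}_r\) and \({\varvec{k}}_r\) denote the r-th rows of \({\varvec{L}}\) and \({\varvec{K}}\).

```latex
\begin{aligned}
{\varvec{w}}_{r0} &= {\varvec{l}}_r + {\varvec{k}}_r, \\
{\varvec{w}}_{r1} &= {\varvec{l}}_r - {\varvec{k}}_r,
\end{aligned}
\qquad \text{so that} \qquad
{\varvec{l}}_r = \tfrac{1}{2}({\varvec{w}}_{r0} + {\varvec{w}}_{r1}), \quad
{\varvec{k}}_r = \tfrac{1}{2}({\varvec{w}}_{r0} - {\varvec{w}}_{r1}).
```

Under these assumptions \({\varvec{l}}_r\) is the midpoint of the two category points and \({\varvec{k}}_r\) is half the vector between them, which matches the description of \({\varvec{L}}\) as locations and \({\varvec{K}}\) as discriminatory power.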

To obtain the coordinates \({\varvec{W}}\) from the parameters of the logistic reduced rank regression we take the following steps (for details, see De Rooij and Groenen 2022).

An exact comparison is difficult, because in the implementation in VGAM the call starts with a formula, so the design matrices have to be built, whereas the lmap package starts with the two matrices. Furthermore, the VGAM implementation uses several criteria for convergence, while the lmap convergence criterion is solely based on the deviance. In the comparison, we first checked, using the complete data set, which convergence criterion in lmap leads to the same solution. Nevertheless, the comparison is not completely fair. We estimate the same rank 2 model using these two algorithms. The data set has \(N = 1885\), \(P = 9\), and \(R = 11\). To compare the speed of the two algorithms we use the microbenchmark package (Mersmann 2021), where the two algorithms are applied ten times. Results are shown in Table 1, where it can be seen that the MM algorithm is much faster.

For our MM algorithm, \(({\varvec{X}}'{\varvec{X}})^{-\frac{1}{2}}\) and \(({\varvec{X}}'{\varvec{X}})^{-\frac{1}{2}}{\varvec{X}}'\) need to be evaluated only once. During the iterations, an SVD of a \(P \times R\) matrix has to be computed, which is a relatively small matrix. Therefore, the computational burden during the iterations is small. As is usual for MM algorithms, convergence is relatively slow in terms of the number of iterations needed (Heiser 1995). For the drug consumption data, 42 iterations were needed.

The IRLS algorithm alternates between an update of \({\varvec{B}}\) and \({\varvec{V}}\) (Yee 2015, Sect. 5.3.1). For both updates, a matrix with working weights needs to be inverted. As this matrix depends on the current estimated values, the inverse needs to be re-evaluated in every iteration. These matrix inversions are computationally heavy. In terms of the number of iterations the IRLS algorithm is faster, that is, only 6 iterations were needed for the drug consumption data.

Yee (2015, Sect. 3.2.1) points out that, apart from some matrix multiplications, the number of floating point operations (flops) for the IRLS algorithm is \(2NS^3(P^2 + R^2)\) per iteration, which for this application is 6092320. The SVD in the MM algorithm takes \(2PR^2 + 11R^3 = 16819\) flops per iteration (cf. Trefethen and Bau 1997). These computations exclude the intercepts (\({\varvec{m}}\)). Our algorithm also has some advantages with respect to storage, that is, the MM algorithm works with the matrix \({\varvec{X}}\) while the IRLS algorithm works with \({\varvec{X}} \otimes {\varvec{I}}_S\), a much larger matrix.
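The two flop counts follow directly from the dimensions of the drug consumption application. The arithmetic below reproduces them and, as a rough illustration only, multiplies each by the reported number of iterations; it ignores the additional matrix multiplications and the intercepts, so it is not a timing prediction.

```python
N, P, R, S = 1885, 9, 11, 2

irls_flops = 2 * N * S**3 * (P**2 + R**2)   # per iteration, formula cited from Yee (2015)
mm_flops = 2 * P * R**2 + 11 * R**3         # SVD of a P x R matrix, per iteration

# Iteration counts reported for the drug consumption data.
irls_total = 6 * irls_flops
mm_total = 42 * mm_flops

per_iteration_ratio = irls_flops / mm_flops
total_ratio = irls_total / mm_total
```

Even with seven times as many iterations, the MM count stays well below the IRLS count in this rough tally.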

4.1.2 Visualization

De Rooij and Groenen (2022). The algorithm uses the majorization inequality of De Leeuw (2006) to move from the negative log-likelihood to a least squares function and the generalized singular value decomposition for minimizing the least squares function. Each of these two steps is known and as such the algorithm is a straightforward combination of the two. The inequality of De Leeuw (2006) for finding a least-squares majorization function for the negative binomial or multinomial log-likelihood can be used more generally to develop logistic models for categorical data.

We compared the new algorithm to the IRLS algorithm (Yee and Hastie 2003; Yee 2015) on two empirical data sets; our new algorithm is about ten times faster but uses more iterations. MM algorithms are known for slow convergence (see Heiser 1995) in terms of the number of iterations needed. Yet, the updates within the iterations are computationally cheap. A singular value decomposition of a \(P \times R\) matrix needs to be computed, where usually P and R are relatively small. Our MM algorithm is only applicable for logistic reduced rank models, whereas the IRLS algorithm of Yee (2015) is designed for the family of reduced rank generalized linear models.

In the VGAM package (Yee 2015, 2022), the standard errors of the model parameters can also be obtained in a second step. In an MM algorithm, the negative log-likelihood itself is not minimized directly; instead, the majorization function is minimized iteratively. Therefore, our algorithm does not automatically give an estimate of the Hessian matrix nor the standard errors. Is this a bad thing? Buja et al. (2019a, 2019b) recently argued that if we know statistical models are approximations, we should also carry the consequences of this knowledge. The computation of standard errors assumes the model to be true, that is, not an approximation. Therefore, assuming the model is true while knowing it is not true results in estimated standard errors that are biased. A better approach to obtain standard errors or confidence intervals is to use the so-called pairs bootstrap, where the predictors and responses are jointly re-sampled. For the bootstrap it is useful if the algorithm is fast.
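The resampling scheme of the pairs bootstrap can be sketched in a few lines. The code below is a generic illustration, not the procedure used by any particular package: `fit` stands for whatever estimation routine is applied to each resampled data set, and the stand-in estimator, the toy data, and the percentile interval are hypothetical choices made to keep the example self-contained.

```python
import random

def pairs_bootstrap(X, Y, fit, n_boot=200, seed=1):
    """Refit on data sets in which the rows of X and Y are resampled jointly."""
    rng = random.Random(seed)
    n = len(X)
    estimates = []
    for _ in range(n_boot):
        idx = [rng.randrange(n) for _ in range(n)]   # sample rows with replacement
        Xb = [X[i] for i in idx]                     # the same indices are used for X ...
        Yb = [Y[i] for i in idx]                     # ... and Y, so each pair stays intact
        estimates.append(fit(Xb, Yb))
    return estimates

def percentile_interval(values, level=0.95):
    """Simple percentile interval from a list of bootstrap estimates."""
    s = sorted(values)
    lower = s[int((1 - level) / 2 * (len(s) - 1))]
    upper = s[int((1 + level) / 2 * (len(s) - 1))]
    return lower, upper

# Stand-in estimator (hypothetical): the proportion of 1s on the first response.
def fit(Xb, Yb):
    return sum(row[0] for row in Yb) / len(Yb)

# Hypothetical toy data in which y_i is a known function of x_i.
X = [[float(i)] for i in range(20)]
Y = [[1 if i % 3 == 0 else 0] for i in range(20)]

boot = pairs_bootstrap(X, Y, fit)
interval = percentile_interval(boot)
```

In practice `fit` would run the estimation algorithm on each resampled data set and return the parameters of interest, which is why a fast algorithm matters here.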

Two types of triplots were discussed and compared, the Type I and Type D triplots. The two types of triplots are equal on the predictor side of the model, but differ in the representation of the response variables. Whereas the Type I triplot uses an inner product relationship where object points have to be projected onto response variable axes, the Type D uses a distance relationship, where the distance of an object point towards the yes and no point for each response variable determines the probabilities. We discussed advantages and disadvantages of both approaches, and were then able to develop a new, hybrid, type of triplot by combining the two.

In the Type D triplot we make use of the two-mode Euclidean distance. This distance is often used to model single-peaked response functions and the object points are then called ideal points. The single-peaked response function is usually contrasted with the dominance response function (see, for example, Drasgow et al. 2010, and references therein). Whereas in the latter the probability of answering yes is a monotonic function of the position on the latent trait, in the former this probability is a single-peaked function defined by distances. In the Type D triplot, however, the relationship is still of the dominance type, where the probability of answering yes goes monotonically up or down for objects located on any straight line in the Euclidean representation. The main reason is that the model is in terms of the distance towards the categories of the response variable, not the response variable itself. De Rooij et al. (2022) recently developed a distance model where the distance between the object and the response variable (i.e., not the categories) determines the probability of answering yes. Such a representation warrants an interpretation in terms of single peaked relationships. Logistic reduced rank regression models assume a monotonic predictor-response relationship, no matter how the model is visualized.

Data Availability

Non communicable diseases data set: This paper uses data from SHARE Wave 1 (DOI: 10.6103/SHARE.w1.800), see Börsch-Supan et al. (2013) for methodological details. The SHARE data collection has been funded by the European Commission, DG RTD through FP5 (QLK6-CT-2001-00360), FP6 (SHARE-I3: RII-CT-2006-062193, COMPARE: CIT5-CT-2005-028857, SHARELIFE: CIT4-CT-2006-028812), FP7 (SHARE-PREP: GA No 211909, SHARE-LEAP: GA No227822, SHARE M4: GA No261982, DASISH: GA No283646) and Horizon 2020 (SHARE-DEV3: GA No676536, SHARE-COHESION: GA No870628, SERISS: GA No654221, SSHOC: GA No823782, SHARE-COVID19: GA No101015924) and by DG Employment, Social Affairs & Inclusion through VS 2015/0195, VS 2016/0135, VS 2018/0285, VS 2019/0332, and VS 2020/0313. Additional funding from the German Ministry of Education and Research, the Max Planck Society for the Advancement of Science, the U.S. National Institute on Aging (U01_AG09740-13S2, P01_AG005842, P01_AG08291, P30_AG12815, R21_AG025169, Y1-AG-4553-01, IAG_BSR06-11, OGHA_04-064, HHSN271201300071C, RAG052527A) and from various national funding sources is gratefully acknowledged (see www.share-project.org). Data can be obtained from www.share-project.org. Drug consumption data set: The drug consumption data is available on UCI data repository (https://archive.ics.uci.edu/ml/machine-learning-databases/00373/drug_consumption.data). R-code: R-code for the analyses conducted in this paper can be found on the github page of the first author.

References

Abdi H (2007) Singular value decomposition (svd) and generalized singular value decomposition. Encyclopedia of measurement and statistics 907–912

Agresti A (2013) Categorical data analysis, 3rd edn. John Wiley & Sons

Anderson TW (1951) Estimating linear restrictions on regression coefficients for multivariate normal distributions. The Annals of Mathematical Statistics 327–351

Böhning D, Lindsay BG (1988) Monotonicity of quadratic-approximation algorithms. Ann Inst Stat Math 40(4):641–663

Buja A, Brown L, Berk R, George E, Pitkin E, Traskin M, Zhang K, Zhao L et al (2019) Models as approximations I: consequences illustrated with linear regression. Stat Sci 34(4):523–544

Buja A, Brown L, Kuchibhotla AK, Berk R, George E, Zhao L et al (2019) Models as approximations II: a model-free theory of parametric regression. Stat Sci 34(4):545–565

Börsch-Supan A, Brandt M, Hunkler C, Kneip T, Korbmacher J, Malter F, Schaan B, Stuck S, Zuber S (2013) Data Resource Profile: The Survey of Health, Ageing and Retirement in Europe (SHARE). Int J Epidemiol 42(4):992–1001

Clinton J, Jackman S, Rivers D (2004) The statistical analysis of roll call data. Am Polit Sci Rev 98(2):355–370

Collins M, Dasgupta S, Schapire RE (2001) A generalization of principal components analysis to the exponential family. Advances in neural information processing systems 14

Coombs CH, Kao R (1955) Nonmetric factor analysis. University of Michigan. Department of Engineering Research. Bulletin

Davies P, Tso MK-S (1982) Procedures for reduced-rank regression. J R Stat Soc 31(3):244–255

De Leeuw J (2006) Principal component analysis of binary data by iterated singular value decomposition. Comput Stat Data Anal 50(1):21–39

De Rooij M, Busing FMTA (2022) lmap: Logistic Mapping. R package version 0.1.1

De Rooij M, Groenen PJF (2023) The melodic family for simultaneous binary logistic regression in a reduced space. In: Okada A, Shigemasu K, Yoshino R, Yokoyama S (eds) Facets of behaviormetrics: the 50th anniversary of the behaviormetric society, Springer. (preprint available at ar** of binary variables: a single-peaked perspective. Submitted paper

Drasgow F, Chernyshenko OS, Stark S (2010) 75 years after Likert: Thurstone was right! Ind Organizational Psychol 3(4):465–476

Eckart C, Young G (1936) The approximation of one matrix by another of lower rank. Psychometrika 1(3):211–218

Fehrman E, Muhammad AK, Mirkes EM, Egan V, Gorban AN (2017) The five factor model of personality and evaluation of drug consumption risk. In: Palumbo F, Montanari A, Vichi M (eds) Data science: innovative developments in data analysis and clustering. Springer, Berlin, pp 231–242

Gabriel KR (1971) The biplot graphic display of matrices with application to principal component analysis. Biometrika 58(3):453–467

Gower J, Hand D (1996) Biplots. Taylor & Francis

Gower J, Lubbe S, Le Roux N (2011) Understanding Biplots. Wiley

Heiser WJ (1995) Convergent computation by iterative majorization: theory and applications in multidimensional data analysis. In: Krzanowski WJ (ed) Recent advances in descriptive multivariate analysis. Clarendon Press, pp 157–189

Hunter DR, Lange K (2004) A tutorial on MM algorithms. Am Stat 58(1):30–37

Izenman AJ (1975) Reduced-rank regression for the multivariate linear model. J Multivariate Anal 5(2):248–264

Landgraf AJ, Lee Y (2020) Dimensionality reduction for binary data through the projection of natural parameters. J Multivariate Anal 180:104668

McCullagh P, Nelder JA (1989) Generalized linear models. Chapman & Hall / CRC

Mersmann O (2021) microbenchmark: Accurate Timing Functions. R package version 1.4-9

Nguyen HD (2017) An introduction to majorization-minimization algorithms for machine learning and statistical estimation. Wiley Interdisciplinary Rev 7(2):e1198

Poole K, Lewis JB, Lo J, Carroll R (2011) Scaling roll call votes with wnominate in R. J Stat Softw 42:1–21

Poole KT, Rosenthal H (1985) A spatial model for legislative roll call analysis. Am J Political Sci 357–384

Schein AI, Saul LK, Ungar LH (2003) A generalized linear model for principal component analysis of binary data. In: Bishop CM, Frey BJ (eds) Proceedings of the ninth international workshop on artificial intelligence and statistics

Takane Y (2013) Constrained principal component analysis and related techniques. CRC Press

Ten Berge JM (1993) Least squares optimization in multivariate analysis. DSWO Press, Leiden University, Leiden

Ter Braak CJ, Looman CW (1994) Biplots in reduced-rank regression. Biometrical J 36(8):983–1003

Trefethen LN, Bau D (1997) Numerical linear algebra, vol 181. SIAM

Tso M-S (1981) Reduced-rank regression and canonical analysis. J R Stat Soc Ser B (Methodological) 43(2):183–189

Van den Wollenberg AL (1977) Redundancy analysis an alternative for canonical correlation analysis. Psychometrika 42(2):207–219

Vicente-Villardón JL, Galindo-Villardón MP, Blázquez-Zaballos A (2006) Logistic biplots. Multiple Correspondence Anal Related Methods 503–521

Vicente-Villardón JL, Vicente-Gonzalez L (2019) Redundancy analysis for binary data based on logistic responses. In: Chadjipadelis T, Lausen B, Markos A, Lee TR, Montanari A, Nugent R (eds) Data analysis and rationality in a complex world. Springer, Berlin, pp 331–339

Yee TW (2015) Vector generalized linear and additive models: with an implementation in R. Springer, New York, USA

Yee TW (2022) VGAM: Vector generalized linear and additive models. R package version 1.1-7

Yee TW, Hastie TJ (2003) Reduced-rank vector generalized linear models. Stat Model 3(1):15–41

Funding

The author declares that no funds, grants, or other support were received during the preparation of this manuscript.

Author information

Contributions

As this is a single-author paper, everything was done by the main author.

Ethics declarations

Conflict of interest

The author has no relevant financial or non-financial interests to disclose.

Additional information

Communicated by Alfonso Iodice D’Enza.

Appendix A: The generalized singular value decomposition

The singular value decomposition is a well-known decomposition of a matrix and can be considered a special case of the generalized singular value decomposition in which both metrics equal the identity matrix \({\varvec{I}}\). The generalized singular value decomposition of the matrix \({\varvec{Y}}\) in the metrics \({\varvec{G}}\) and \({\varvec{H}}\) is given by

\[ {\varvec{Y}} = {\varvec{P}}{\varvec{\Lambda }}{\varvec{Q}}', \]

where \({\varvec{P}}\) is a matrix of left generalized singular vectors, \({\varvec{Q}}\) is a matrix of right generalized singular vectors, and \({\varvec{\Lambda }}\) is a diagonal matrix with singular values. The matrices \({\varvec{P}}\) and \({\varvec{Q}}\) satisfy \({\varvec{P}}'{\varvec{GP}} = {\varvec{Q}}'{\varvec{HQ}} = {\varvec{I}}\), that is, they are columnwise orthogonal with respect to the metric matrices \({\varvec{G}}\) and \({\varvec{H}}\) (Takane 2013, page 65). We assume that both \({\varvec{G}}\) and \({\varvec{H}}\) are square, symmetric, positive definite matrices.

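The generalized singular value decomposition is computed as follows (Takane 2013; Abdi 2007). First, compute the usual SVD of the matrix \({\varvec{Y}}_*\) defined as \({\varvec{Y}}_* = {\varvec{G}}^{\frac{1}{2}}{\varvec{Y}}{\varvec{H}}^{\frac{1}{2}}\), that is,

\[ {\varvec{Y}}_* = {\varvec{P}}_*{\varvec{\Lambda }}_*{\varvec{Q}}_*', \]

and then obtain

\[ {\varvec{P}} = {\varvec{G}}^{-\frac{1}{2}}{\varvec{P}}_*, \quad {\varvec{\Lambda }} = {\varvec{\Lambda }}_*, \quad {\varvec{Q}} = {\varvec{H}}^{-\frac{1}{2}}{\varvec{Q}}_*. \]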
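The computation described in this appendix can be illustrated with a minimal NumPy sketch. It is not the author's implementation: the function names `gsvd` and `sym_power` are hypothetical, and the back-transformation with \({\varvec{G}}^{-\frac{1}{2}}\) and \({\varvec{H}}^{-\frac{1}{2}}\) is the standard construction assumed here, chosen so that \({\varvec{P}}'{\varvec{GP}} = {\varvec{Q}}'{\varvec{HQ}} = {\varvec{I}}\) holds. The test matrices are randomly generated for illustration only.

```python
import numpy as np

def sym_power(M, power):
    """Power of a symmetric positive definite matrix via its eigendecomposition."""
    eigvals, eigvecs = np.linalg.eigh(M)
    # V diag(eigvals**power) V'
    return (eigvecs * eigvals**power) @ eigvecs.T

def gsvd(Y, G, H):
    """Generalized SVD of Y in the metrics G and H (hypothetical helper).

    Returns P, lam, Q with Y = P diag(lam) Q', P'GP = I and Q'HQ = I.
    Assumes G and H are square, symmetric, positive definite.
    """
    # Step 1: usual SVD of Y_* = G^{1/2} Y H^{1/2}
    Y_star = sym_power(G, 0.5) @ Y @ sym_power(H, 0.5)
    P_star, lam, Qt_star = np.linalg.svd(Y_star, full_matrices=False)
    # Step 2: transform the singular vectors back to the original metrics
    P = sym_power(G, -0.5) @ P_star
    Q = sym_power(H, -0.5) @ Qt_star.T
    return P, lam, Q

# Illustrative data: a random 6 x 4 matrix and random positive definite metrics
rng = np.random.default_rng(0)
Y = rng.normal(size=(6, 4))
A = rng.normal(size=(6, 6))
G = A @ A.T + 6 * np.eye(6)
B = rng.normal(size=(4, 4))
H = B @ B.T + 4 * np.eye(4)

P, lam, Q = gsvd(Y, G, H)
```

With \({\varvec{G}} = {\varvec{I}}\) and \({\varvec{H}} = {\varvec{I}}\), the sketch reduces to the ordinary SVD, up to the usual sign indeterminacy of the singular vectors.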

About this article

Cite this article

de Rooij, M. A new algorithm and a discussion about visualization for logistic reduced rank regression. Behaviormetrika 51, 389–410 (2024). https://doi.org/10.1007/s41237-023-00204-3

DOI: https://doi.org/10.1007/s41237-023-00204-3