Abstract

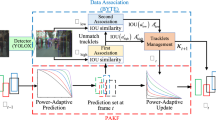

Multi-object Tracking (MOT) is one of the most fundamental computer vision tasks and contributes to various video analysis applications. Despite recent promising progress, current MOT research is still limited to a fixed sampling frame rate of the input stream. Existing trackers are neither as flexible as humans nor well matched to industrial scenarios, which require trackers to be frame rate insensitive in complicated conditions. In fact, we empirically found that the accuracy of all recent state-of-the-art trackers drops dramatically when the input frame rate changes. For a more intelligent tracking solution, we shift our attention to the problem of Frame Rate Agnostic MOT (FraMOT), which takes frame rate insensitivity into consideration. In this paper, we propose a Frame Rate Agnostic MOT framework with a Periodic training Scheme (FAPS) to tackle the FraMOT problem for the first time. Specifically, we propose a Frame Rate Agnostic Association Module (FAAM) that infers and encodes the frame rate information to aid identity matching across multi-frame-rate inputs, improving the capability of the learned model to handle complex motion-appearance relations in FraMOT. Moreover, the association gap between training and inference is enlarged in FraMOT, because the post-processing steps that are not included in training make a larger difference in lower frame rate scenarios. To address this, we propose the Periodic Training Scheme (PTS), which reflects all post-processing steps in training via tracking pattern matching and fusion. Along with the proposed approaches, we make the first attempt to establish an evaluation method for the new task of FraMOT. Besides providing simulations and evaluation metrics, we address the new challenges in two different modes, i.e., known frame rate and unknown frame rate, aiming to handle more complex situations. Quantitative experiments on the challenging MOT17/20 datasets (FraMOT version) clearly demonstrate that the proposed approaches handle different frame rates better and thus improve robustness in complicated scenarios.

Data Availability

All data used in this paper are publicly available on the corresponding websites. MOT17/MOT20: motchallenge.net; CrowdHuman: www.crowdhuman.org; CityScapes: www.cityscapes-dataset.com; HIE: humaninevents.org; SOMPT22: sompt22.github.io.

Acknowledgements

This work is partially supported by the National Key R&D Program of China (No. 2022ZD0160100). The research is supported by Shanghai AI Laboratory. Wanli Ouyang was supported by the Australian Research Council Grant DP200103223, Australian Medical Research Future Fund MRFAI000085, CRC-P Smart Material Recovery Facility (SMRF) - Curby Soft Plastics, and CRC-P ARIA - Bionic Visual-Spatial Prosthesis for the Blind.

Additional information

Communicated by Matej Kristan.

Appendix A: Tracking Results Demo

Figures 12 and 13 show selected tracking results of our approach on the MOT20 test set. Different colors and numbers represent different trajectories. In the lower frame rate scenarios (i.e., sampling factor \(k\ge 25\)), the movements of the targets between adjacent frames are much larger than those in normal frame rate scenarios, yet our method maintains stable performance. More importantly, all results come from the same model checkpoint, showing that our method can handle various frame rates robustly.
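To make the sampling factor concrete, the following minimal sketch assumes that a lower frame rate is simulated by keeping every \(k\)-th frame of a sequence. The function name `subsample_frames`, the 100-frame sequence, and the 25 fps source rate are illustrative assumptions, not taken from the paper's code.

```python
from typing import List, Sequence, Tuple, TypeVar

T = TypeVar("T")


def subsample_frames(
    frames: Sequence[T], k: int, source_fps: float
) -> Tuple[List[T], float]:
    """Keep every k-th frame (an assumed way to simulate a lower frame rate).

    Returns the kept frames and the resulting effective frame rate.
    """
    if k < 1:
        raise ValueError("sampling factor k must be >= 1")
    kept = list(frames[::k])
    return kept, source_fps / k


if __name__ == "__main__":
    frame_ids = list(range(100))  # hypothetical 100-frame sequence
    kept, fps = subsample_frames(frame_ids, k=25, source_fps=25.0)
    print(kept, fps)
```

Under these assumptions, a sampling factor of 25 on a 25 fps source leaves one frame per second, so a tracker sees only every 25th position of each target.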

In Fig. 13, targets 2, 6, and 19 do not have a large bounding box overlap between adjacent frames, which makes motion cues less reliable. In normal frame rate scenarios, such a large motion gap between adjacent frames usually indicates a different identity, which is not the case in lower frame rate scenarios. Thanks to the FAAM design and the PTS strategy, our method makes correct predictions across multi-frame-rate settings with a single model.
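The loss of overlap at low frame rates can be illustrated with a toy calculation. The sketch below is not the paper's association method: it assumes a single box moving horizontally at constant speed and computes the intersection over union (IoU) between its positions in two adjacent sampled frames. The box size, the speed, and the helper names `iou` and `adjacent_iou` are hypothetical.

```python
from typing import Tuple

Box = Tuple[float, float, float, float]  # (x1, y1, x2, y2)


def iou(a: Box, b: Box) -> float:
    """Intersection over union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def adjacent_iou(box: Box, speed_px_per_frame: float, k: int) -> float:
    """IoU between a box and itself k original frames later.

    Assumes constant horizontal motion; k is the sampling factor.
    """
    dx = speed_px_per_frame * k
    moved = (box[0] + dx, box[1], box[2] + dx, box[3])
    return iou(box, moved)


if __name__ == "__main__":
    pedestrian = (0.0, 0.0, 50.0, 100.0)  # hypothetical 50x100 px box
    for k in (1, 10, 25):
        print(k, round(adjacent_iou(pedestrian, 2.0, k), 3))
```

With these toy numbers the overlap is high at \(k=1\) and vanishes at \(k=25\), which is why a fixed IoU threshold tuned for the normal frame rate would reject correct matches at lower frame rates.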

About this article

Cite this article

Feng, W., Bai, L., Yao, Y. et al. Towards Frame Rate Agnostic Multi-object Tracking. Int J Comput Vis 132, 1443–1462 (2024). https://doi.org/10.1007/s11263-023-01943-2
