Abstract

In this paper, we study a robust optimization problem whose constraints include nonsmooth and nonconvex functions and the intersection of closed sets. Using advanced variational analysis tools, we first provide necessary conditions for the optimality of the robust optimization problem. We then establish sufficient conditions for the optimality of the considered problem under the assumption of generalized convexity. In addition, we present a dual problem to the primal robust optimization problem and examine duality relations.


1 Introduction

Because of prediction errors, fluctuations and disturbances, or a lack of information, many practical problems involve uncertain data. Robust optimization has therefore emerged as a remarkable and efficient framework for studying mathematical programming problems under data uncertainty; see, e.g., [3, 4].

Nowadays, robust optimization is intensively studied with respect to theory, methods and applications [7, 9, 10, 13, 15, 17, 21, 22, 31].

The goal of this paper is to study an uncertain optimization problem of the form:

where x is a decision variable, \(\tau \) and \(u_i,\, i=1,...,p, \) are uncertain parameters, which reside in the uncertainty sets T and \(V_i\), respectively, \(T\subset {\mathbb {R}}^k\) and \( V_i \subset {\mathbb {R}}^{n_i},\, i=1,...,p,\) are nonempty compact sets, \(C_j\subset {\mathbb {R}}^n,\, j=1,...,m,\) are nonempty closed subsets, and \(f: {\mathbb {R}}^n \times T \rightarrow {\mathbb {R}}\) and \(g_i:{\mathbb {R}}^n \times V_i \rightarrow {\mathbb {R}}, \, i=1,...,p,\) are functions.

A robust optimization problem associated with (UP) is defined by
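For orientation, the pair (UP) and (RP) can be written out as follows. This is a sketch which assumes that both are minimization problems and that (RP) is the worst-case counterpart of (UP), in line with the feasible set \(S\) of Definition 3.1 and the max function \({\mathcal {F}}\) used in Sect. 3.

```latex
\begin{align*}
\text{(UP)}\quad & \min_{x\in\mathbb{R}^n}\Big\{ f(x,\tau) \;\Big|\; x\in\bigcap_{j=1}^{m} C_j,\ g_i(x,u_i)\le 0,\ i=1,\dots,p \Big\},\\
\text{(RP)}\quad & \min_{x\in\mathbb{R}^n}\Big\{ \max_{\tau\in T} f(x,\tau) \;\Big|\; x\in\bigcap_{j=1}^{m} C_j,\ g_i(x,u_i)\le 0,\ \forall u_i\in V_i,\ i=1,\dots,p \Big\}.
\end{align*}
```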

The problem of type (RP) admits a general formulation and so it provides a unified framework for investigating various robust optimization problems. For instance, when \(m:=1\), the nonempty subset \(C_1\) is a closed convex set in \({\mathbb {R}}^n,\) f is a continuous convex function, and \(g_1,...,g_p,\) are continuously differentiable functions, Chieu et al. in [6] examined links among various constraint qualifications including Karush–Kuhn–Tucker conditions for an optimization problem without uncertainties. In the case of \(m:=1\) and the constraints related to a convex cone, Ghafari and Mohebi in [12] provided a new characterization of the Robinson constraint qualification, which collapses to the validation of a generalized Slater constraint qualification and a sharpened nondegeneracy condition for a (no uncertainty) nonconvex optimization problem involving nearly convex feasible sets.

Another approach, based on a characterization of the normal cone together with the oriented distance function, was used by Jalilian and Pirbazari in [14] to establish necessary and sufficient optimality conditions for a smooth optimization problem of type (RP) without uncertainties. When there are neither uncertainties nor constraint functions \(g_i\), we refer the reader to [1] for a recent result on optimality, which was obtained by using a canonical representation of a closed set via an associated oriented distance function. For a special case of the problem (RP) with \(m:=1\), where there is no uncertainty in the objective, \(C_1\) is a closed convex cone of \({\mathbb {R}}^n,\) and \(f\) and \(g_i(\cdot ,u_i),\, u_i\in V_i, \,i=1,...,p,\) are convex functions, Lee and Lee in [18] established an optimality theorem for approximate solutions under a new robust characteristic cone constraint qualification. This result was extended by Sun et al. in [30] to a more general class of robust optimization problems in locally convex vector spaces.

In passing, dealing with a robust optimization problem involving several simple geometric constraints \(C_j\) in (RP) is often preferable to dealing with a single abstract set, because of the technical calculations of the related concepts in variational analysis and nonsmooth/nonconvex generalized differentiation. Moreover, general programming problems with finitely many geometric constraints arise frequently in practical applications (see, e.g., customer satisfaction modelling within the automotive industry [14]), and many other popular classes of optimization problems with specific types of constraints [2, 23] can be reformulated as models involving geometric constraints.

To the best of our knowledge, there are no results on optimality conditions and duality for the nonsmooth and nonconvex robust optimization problem (RP). This is because of the challenges posed not only by the nonsmooth and nonconvex structures of the related functions and sets but also by the data uncertainty. In this work, we employ advanced variational analysis tools (see, e.g., [24]) and recent advances in nonsmooth robust optimization (see, e.g., [7, 8]) to establish necessary conditions for the optimality of the robust optimization problem (RP). We also provide sufficient conditions for the optimality of the considered problem under the assumption of generalized convexity. Moreover, we address a dual problem to the robust optimization problem (RP) and explore duality relations between them.

The organization of the paper is as follows. Section 2 provides some basic concepts and calculus rules from variational analysis needed for proving our main results. In Sect. 3, we establish necessary conditions and sufficient conditions for the optimality of problem (RP). Section 4 is devoted to examining robust duality relations between the problem (RP) and its dual problem. The last section summarizes the obtained results.

2 Preliminaries

Throughout the paper, the inner product and the norm in \({\mathbb {R}}^n\) are denoted respectively by \(\langle \cdot , \cdot \rangle \) and \(\Vert \cdot \Vert ,\) where \(n\in {\mathbb {N}}:=\{1,2,...\}.\) We use the notation \({\mathbb {R}}_+^n\) and \({\mathbb {R}}_-^n\) for the nonnegative orthant and nonpositive orthant of \({\mathbb {R}}^n\), respectively. For a nonempty subset \(\Gamma \) of \({\mathbb {R}}^n,\) the interior, the convex hull and the boundary of \(\Gamma \) are denoted respectively by \(\textrm{int}\,\Gamma ,\) \(\textrm{co} \,\Gamma \) and \(\textrm{bd} \,\Gamma \). The notation \(x\xrightarrow {\Gamma } \overline{x}\) means that \(x\rightarrow \overline{x}\) and \(x\in \Gamma .\) The polar cone of \(\Gamma \subset {\mathbb {R}}^n \) is defined by
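In the standard convention of convex and variational analysis, which is assumed here, the polar cone collects all vectors making a non-acute angle with every element of \(\Gamma \):

```latex
\[
\Gamma^{\circ} := \big\{ v\in\mathbb{R}^n \;\big|\; \langle v, x\rangle \le 0,\ \forall x\in\Gamma \big\}.
\]
```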

Let \({\mathcal {F}}: X\subset {\mathbb {R}}^n\rightrightarrows {\mathbb {R}}^m \) be a multivalued function/set-valued map. \({\mathcal {F}}\) is closed at \(\overline{x}\in X\) if for any sequence \(\{x_l\} \subset X,\, x_l \rightarrow \overline{x}\) and any sequence \(\{y_l\}\subset {\mathbb {R}}^m,\, y_l \in {\mathcal {F}}(x_l),\, y_l \rightarrow \overline{y}\) as \(l\rightarrow \infty \), we have \(\overline{y} \in {\mathcal {F}}(\overline{x}).\)

Let us recall some concepts and calculus rules from variational analysis (see, e.g., [24, 27]). For a multivalued function \({\mathcal {F}}: {\mathbb {R}}^n\rightrightarrows {\mathbb {R}}^n,\) the sequential Painlevé-Kuratowski upper/outer limit of \({\mathcal {F}}\) at \(\overline{x}\in \textrm{dom}\, {\mathcal {F}}\) is given by
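In the usual sequential form (assumed here, following the convention of [24]), the outer limit consists of all limits of sequences selected from \({\mathcal {F}}(x_l)\) along \(x_l\rightarrow \overline{x}\):

```latex
\[
\mathop{\mathrm{Lim\,sup}}_{x\to\overline{x}} \mathcal{F}(x)
:= \big\{ v\in\mathbb{R}^n \;\big|\; \exists\, x_l\to\overline{x},\ v_l\to v
\ \text{with}\ v_l\in\mathcal{F}(x_l)\ \text{for all}\ l\in\mathbb{N} \big\},
\]
```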

where \(\textrm{dom} {\mathcal {F}}:=\{x\in {\mathbb {R}}^n\mid {\mathcal {F}}(x)\ne \emptyset \}.\) The Fréchet normal cone (known also as the regular normal cone) \(\widehat{N} (\overline{x}; \Gamma )\) to \(\Gamma \) at \(\overline{x}\in \Gamma \) is defined by
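The standard definition of the Fréchet normal cone, assumed here to be the one intended, is:

```latex
\[
\widehat{N}(\overline{x};\Gamma)
:= \Big\{ v\in\mathbb{R}^n \;\Big|\;
\limsup_{x\xrightarrow{\Gamma}\overline{x}}
\frac{\langle v, x-\overline{x}\rangle}{\Vert x-\overline{x}\Vert} \le 0 \Big\}.
\]
```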

We put \(\widehat{N}(\overline{x};\Gamma ):=\emptyset \) for any \( \overline{x}\in {\mathbb {R}}^n{\setminus }\Gamma .\) The Mordukhovich normal cone (known also as the limiting normal cone) \(N(\overline{x}; \Gamma )\) to \(\Gamma \) at \(\overline{x}\in \Gamma \) is defined by
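In the standard construction, assumed here, the limiting normal cone is the outer limit of the Fréchet normal cones at nearby points of \(\Gamma \):

```latex
\[
N(\overline{x};\Gamma)
:= \mathop{\mathrm{Lim\,sup}}_{x\xrightarrow{\Gamma}\overline{x}} \widehat{N}(x;\Gamma).
\]
```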

If \( \overline{x}\in {\mathbb {R}}^n\setminus \Gamma ,\) then \(N(\overline{x};\Gamma ):=\emptyset .\) Given a function \(\psi : {\mathbb {R}}^n \rightarrow \overline{{\mathbb {R}}}\), where \( \overline{{\mathbb {R}}}:= {\mathbb {R}}\cup \{\infty \},\) we denote \(\textrm{epi} \psi :=\{(x,\alpha ) \in {\mathbb {R}}^n \times {\mathbb {R}}\, \mid \, \psi (x) \le \alpha \}.\) The Fréchet subdifferential and limiting/Mordukhovich subdifferential of \(\psi : {\mathbb {R}}^n \rightarrow \overline{{\mathbb {R}}}\) at \(\overline{x}\in {\mathbb {R}}^n\) with \(|\psi (\overline{x})| < \infty \) are respectively given by
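One standard way to write both subdifferentials, assumed here because the epigraph has just been introduced, is through normal cones to \(\textrm{epi}\, \psi \) at the point \((\overline{x},\psi (\overline{x}))\):

```latex
\begin{align*}
\widehat{\partial}\psi(\overline{x})
&:= \big\{ v\in\mathbb{R}^n \;\big|\; (v,-1)\in \widehat{N}\big((\overline{x},\psi(\overline{x}));\,\mathrm{epi}\,\psi\big) \big\},\\
\partial\psi(\overline{x})
&:= \big\{ v\in\mathbb{R}^n \;\big|\; (v,-1)\in N\big((\overline{x},\psi(\overline{x}));\,\mathrm{epi}\,\psi\big) \big\}.
\end{align*}
```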

If \(|\psi (\overline{x})| = \infty ,\) then the above subdifferentials are empty. Given a set \(\Gamma \subset {\mathbb {R}}^n,\) we consider an indicator function \(\delta (\cdot ;\Gamma )\) defined by
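In the usual convention, assumed here for (2.1), the indicator function takes the value zero on the set and \(\infty \) elsewhere:

```latex
\[
\delta(x;\Gamma) :=
\begin{cases}
0, & x\in\Gamma,\\
\infty, & x\in\mathbb{R}^n\setminus\Gamma.
\end{cases}
\tag{2.1}
\]
```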

By [27, Proposition 1.19], we obtain that
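The relation in question, assumed here to be the standard link between the limiting normal cone and the limiting subdifferential of the indicator function, reads:

```latex
\[
N(\overline{x};\Gamma) = \partial\delta(\overline{x};\Gamma)
\quad \text{for all } \overline{x}\in\Gamma.
\tag{2.2}
\]
```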

Remark that the above-defined normal cones and subdifferentials reduce to the corresponding concepts of normal cone and subdifferential in convex analysis when \(\Gamma \) is a convex set and \(\psi \) is a convex function.

When \(\psi \) is locally Lipschitz at \(\overline{x}\in {\mathbb {R}}^n\), i.e., there exist a neighborhood U of \(\overline{x}\) and a real number \({\mathcal {L}}>0\) such that

we assert by [24, Corollary 1.81] that \(\Vert \vartheta \Vert \le {\mathcal {L}}\) for any \(\vartheta \in \partial \psi (\overline{x})\). Moreover, if \(\overline{x}\) is a local minimizer for \(\psi \), then we get by the nonsmooth version of Fermat’s rule (see [24, Proposition 1.114]) that
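In terms of the limiting subdifferential, the nonsmooth Fermat rule states that zero is a subgradient at a local minimizer:

```latex
\[
0 \in \partial\psi(\overline{x}).
\tag{2.3}
\]
```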

Lemma 2.1

([24, Theorem 3.36]) Let the functions \(\psi _i: {\mathbb {R}}^n \rightarrow \overline{{\mathbb {R}}}, \, i=1,...,p,\, p\ge 2, \) be lower semicontinuous around \(\overline{x}\in {\mathbb {R}}^n,\) and let all but one of these be locally Lipschitz at \(\overline{x}.\) Then one has
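Under these hypotheses the limiting subdifferential sum rule holds as an inclusion; the form below is the standard one and is assumed to be the statement intended here:

```latex
\[
\partial(\psi_1+\cdots+\psi_p)(\overline{x})
\subset \partial\psi_1(\overline{x})+\cdots+\partial\psi_p(\overline{x}).
\]
```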

In the rest of this section, we recall a calculus rule for calculating the limiting subdifferential of maximum of finitely many functions.

Lemma 2.2

([24, Theorem 3.36 and Theorem 3.46]) Let the functions \(\psi _i: {\mathbb {R}}^n \rightarrow \overline{{\mathbb {R}}}, \, i=1,...,p,\, p\ge 2, \) be locally Lipschitz at \(\overline{x}\in {\mathbb {R}}^n\) and denote the maximum function by \( \psi (x):=\underset{i=1,...,p}{\mathop {\max }}\,\psi _{i}(x)\) for \( x \in {\mathbb {R}}^n.\) Then
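One common form of this maximum rule for locally Lipschitz functions is given below as a sketch; the exact notation of the lemma may differ, and the multiplier set on the right is written out explicitly as an assumption.

```latex
\[
\partial\psi(\overline{x}) \subset
\bigcup\Big\{ \sum_{i=1}^{p}\mu_i\,\partial\psi_i(\overline{x}) \;\Big|\;
(\mu_1,\dots,\mu_p)\in\mathbb{R}^p_+,\ \sum_{i=1}^{p}\mu_i=1,\
\mu_i\big(\psi_i(\overline{x})-\psi(\overline{x})\big)=0,\ i=1,\dots,p \Big\}.
\]
```

The complementarity condition forces \(\mu _i=0\) for every index \(i\) whose function \(\psi _i\) is not active at \(\overline{x}\), so only active functions contribute subgradients.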

3 Robust Optimality Conditions

In this section, we first present necessary conditions for the optimality of the robust problem (RP). We then establish sufficient conditions by employing the generalized convexity for a set of finitely many real-valued functions.

In what follows, we assume that the objective function f together with the constraint functions \(g_1,...,g_p\) of the problem (RP) satisfy the following assumptions:

(A1) Given a fixed \(\overline{x}\in {\mathbb {R}}^n,\) there exist neighborhoods \(U_i,\, i=0,...,p,\) of \(\overline{x}\) such that the functions \(\tau \in T \mapsto f(x,\tau )\), \(x \in U_0 \), and \(u_i \in V_i \mapsto g_i(x,u_i) \), \(x \in U_i, i=1,\ldots ,p\) are upper semicontinuous and the functions f and \(g_i\) are partially uniformly Lipschitz of ranks \({\mathcal {L}}_0>0\) and \({\mathcal {L}}_i>0\) on \(U_0\) and \(U_i,\) respectively, i.e.,
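Spelled out, and assuming that the Lipschitz ranks are uniform in the uncertain parameters as the terminology suggests, the partial uniform Lipschitz property can be written as:

```latex
\begin{align*}
|f(x,\tau)-f(y,\tau)| &\le \mathcal{L}_0\,\Vert x-y\Vert,
&& \forall x,y\in U_0,\ \forall \tau\in T,\\
|g_i(x,u_i)-g_i(y,u_i)| &\le \mathcal{L}_i\,\Vert x-y\Vert,
&& \forall x,y\in U_i,\ \forall u_i\in V_i,\ i=1,\dots,p.
\end{align*}
```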

(A2) For the above \(\overline{x}\in {\mathbb {R}}^n,\) the multivalued function \((x, \tau ) \in U_0 \times T \rightrightarrows \partial _x f(x,\tau ) \subset {\mathbb {R}}^n\) is closed at \((\overline{x}, \overline{\tau })\) for each \(\overline{\tau }\in T( \overline{x}),\) and the multivalued functions \((x, u_i) \in U_i \times V_i \rightrightarrows \partial _x g_i(x,u_i) \subset {\mathbb {R}}^n, i = 1,..., p\) are closed at \((\overline{x}, \overline{u}_i)\) for each \(\overline{u}_i \in V_i( \overline{x}),\) where the symbol \(\partial _x\) stands for the limiting subdifferential operation with respect to the first variable x and

with
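Consistently with the notation recalled before Definition 3.3 and with the identities \(f(\overline{x},\tau _l)={\mathcal {F}}(\overline{x})\) for \(\tau _l \in T(\overline{x})\) used in the proof of Theorem 3.2, the active parameter sets in (3.1) and the worst-case functions in (3.2) can be written as follows (a sketch under that assumption):

```latex
\begin{gather*}
T(\overline{x}) := \{\tau\in T \mid f(\overline{x},\tau)=\mathcal{F}(\overline{x})\},\qquad
V_i(\overline{x}) := \{u_i\in V_i \mid g_i(\overline{x},u_i)=\mathcal{G}_i(\overline{x})\},
\tag{3.1}\\
\mathcal{F}(x) := \max_{\tau\in T} f(x,\tau),\qquad
\mathcal{G}_i(x) := \max_{u_i\in V_i} g_i(x,u_i),\quad i=1,\dots,p.
\tag{3.2}
\end{gather*}
```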

It is worth mentioning that the above assumptions are commonly found in the study of robust optimization problems and in nonsmooth analysis, for instance when calculating subdifferentials/subgradients of max or supremum functions over an infinite set. More precisely, the hypothesis (A1) ensures that the functions \({\mathcal {F}}\) and \({\mathcal {G}}_i,\, i=1,...,p,\) are well-defined, and furthermore, it entails that the functions \({\mathcal {F}}\) and \({\mathcal {G}}_i\) are locally Lipschitz of ranks \({\mathcal {L}}_0\) and \({\mathcal {L}}_i,\, i=1,...,p,\) respectively. The hypothesis (A2) can be viewed as a relaxation of subdifferentials for the class of convex functions, and this assumption is automatically satisfied in smooth settings as the gradients are continuous (see Corollary 3.1 below). In fact, (A2) holds for a broader class of regular nonsmooth functions, including subsmooth and continuously prox-regular functions, whenever (A1) holds. We refer the interested reader to [7, 8] and the references therein for a detailed review.

Let us introduce the following constraint qualification (CQ), which will be necessary to derive the Karush–Kuhn–Tucker (KKT) condition for the robust problem (RP).

Definition 3.1

For the problem (RP), let \(\overline{x} \in S:=\{x\in {\mathbb {R}}^n\mid x \in \bigcap \limits _{j=1}^{m}C_j,\, g_i(x,u_i) \le 0, \,\,\forall u_i\in V_i,\,\,i=1,...,p\}\) and denote

We say that the constraint qualification (CQ) is satisfied at \(\overline{x}\) if there does not exist \((\mu _1,...,\mu _p)\in \Lambda (\overline{x})\) such that

where \(V_i(\overline{x})\) is defined by (3.1).

Observe that the concept of (CQ) in Definition 3.1 reduces to the (extended) Mangasarian-Fromovitz constraint qualification in the smooth setting with \(C_j={\mathbb {R}}^n, \, j=1,...,m\) (see, e.g., [5, 24, 27] for more details).

We are now ready to present necessary optimality conditions for the robust problem (RP) in terms of the limiting/Mordukhovich subdifferentials and normal cones.

Theorem 3.1

Let the assumptions (A1) and (A2) hold for an optimal solution \(\overline{x}\) of problem (RP). Assume that the equation \(\nu _1+\cdots +\nu _m=0,\) where \( \nu _j \in N(\overline{x}; C_j),\, j=1,...,m,\) has only the trivial solution \(\nu _j=0, \,j=1,...,m.\) Then, there exists \((\mu _0,\mu _1,...,\mu _{p})\in {\mathbb {R}}_{+}^{p+1}\) with \( \sum \limits _{i=0}^p\mu _i=1\) such that

If we assume additionally that (CQ) holds at \(\overline{x}\), then \(\mu _0\) in the relation (3.3) is a positive number.

Proof

Let \(\overline{x}\) be an optimal solution of problem (RP). We define the corresponding function \(\psi :{\mathbb {R}}^n \rightarrow {\mathbb {R}}\) as follows:

where \({\mathcal {F}}\) and \({\mathcal {G}}_i, \, i=1,...,p,\) are defined by (3.2).

We claim that

Indeed, by \({\mathcal {G}}_i(\overline{x})\le 0, \,i=1,...,p\), it holds that \(\psi (\overline{x})=0.\) Now, take any \(x\in \bigcap \limits _{j=1}^{m}C_j.\) It is easy to see that \({\mathcal {F}}(x)\ge {\mathcal {F}}(\overline{x})\) if x is a feasible point of problem (RP), which entails that \(\psi (x)\ge 0.\) Otherwise, it is true that \(\underset{i=1,...,p}{\mathop {\max }}\,{\mathcal {G}}_i(x)>0\) and so \(\psi (x)> 0.\)
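A choice of \(\psi \) that is consistent with the argument above, stated here as an assumption together with the resulting claim (3.5), is:

```latex
\begin{gather*}
\psi(x) := \max\big\{ \mathcal{F}(x)-\mathcal{F}(\overline{x}),\ \mathcal{G}_1(x),\dots,\mathcal{G}_p(x) \big\},
\quad x\in\mathbb{R}^n,\\
\psi(x) \ge 0 = \psi(\overline{x}) \quad \text{for all } x\in\bigcap_{j=1}^{m} C_j.
\tag{3.5}
\end{gather*}
```

With this choice, \(\psi (\overline{x})=0\) because the first entry vanishes and \({\mathcal {G}}_i(\overline{x})\le 0\); a feasible \(x\) makes the first entry nonnegative, and an infeasible \(x\in \bigcap _{j=1}^{m}C_j\) makes some \({\mathcal {G}}_i(x)\) positive.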

By (3.5), we see that \(\overline{x}\) is a minimizer of the following optimization problem

where \(\delta \) is the indicator function defined as in (2.1). Applying the nonsmooth version of Fermat’s rule in (2.3) to the problem (3.6) gives us

Note that, under (A1), the function \(\psi \) is locally Lipschitz around \(\overline{x}\) and that, due to the closedness of the sets \(C_1,...,C_m\), the indicator function \(\delta \) is lower semicontinuous around this point. Therefore, invoking the sum rule for the limiting subdifferential in Lemma 2.1 and the formula (2.2), we arrive at

Moreover, since the system \(\nu _1+\cdots +\nu _m=0,\) where \(\nu _j \in N(\overline{x}; C_j),\, j=1,...,m,\) has only one solution, \(\nu _j=0,\, j=1,...,m,\) we apply the formula of normal cones to finite set intersections (cf. [27, Corollary 2.17]) to arrive at
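The steps so far can be summarized in one chain of inclusions. The sketch below assumes that (3.6) is the unconstrained minimization of \(\psi +\delta (\cdot ;\bigcap _{j=1}^{m}C_j)\) and uses Fermat's rule, the sum rule of Lemma 2.1 with (2.2), and the intersection rule for normal cones, in that order:

```latex
\[
0 \in \partial\Big(\psi+\delta\big(\cdot;\textstyle\bigcap_{j=1}^{m}C_j\big)\Big)(\overline{x})
\subset \partial\psi(\overline{x}) + N\Big(\overline{x};\textstyle\bigcap_{j=1}^{m}C_j\Big)
\subset \partial\psi(\overline{x}) + \sum_{j=1}^{m} N(\overline{x};C_j).
\]
```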

This, together with the calculus rule in Lemma 2.2 and the inclusion (3.7), yields

Under the assumptions of (A1) and (A2), we argue similarly as in the proof of [7, Theorem 3.3] to arrive at

where \(T(\overline{x})\) and \(V_i(\overline{x})\) are given as in (3.1).

Next, combining (3.8) and (3.9) shows that there exists \( (\mu _0,...,\mu _p)\in {\mathbb {R}}_{+}^{p+1}\) such that \(\sum \limits _{i=0}^p \mu _i=1\) and

So, (3.3) and (3.4) have been justified.

Now, let the (CQ) be satisfied at \(\overline{x}\). We obtain from (3.3) and (3.4) that \(\mu _0 >0\), which completes the proof. \(\Box \)

The following corollary gives necessary optimality conditions for the robust problem (RP) under the smoothness of related functions and the convexity of uncertainty sets. In this case, both the hypotheses (A1) and (A2) are automatically satisfied. In what follows, we use \(\nabla _x h(\overline{x},\overline{\tau })\) to denote the derivative of a differentiable function h with respect to the first variable at a given point \((\overline{x},\overline{\tau })\).

Corollary 3.1

Let \(\overline{x}\) be an optimal solution of problem (RP), where T and \( V_i,\, i=1,...,p,\) are convex sets. Let \(U_i,\, i=0,...,p,\) be neighborhoods of \(\overline{x}\) such that, for each \(x \in U_0\) and each \(x \in U_i,\, i=1,...,p,\) the functions \( f(x,\cdot )\) and \(g_i(x,\cdot )\) are concave on T and \(V_i\), respectively. Assume that f and \(g_i, \,i=1,...,p,\) are strictly differentiable with respect to the first variable on \(U_0\times T\) and \(U_i\times V_i,\) respectively. Assume further that the maps \((x,\tau ) \mapsto \nabla _x f(x,\tau )\) and \((x,u_i) \mapsto \nabla _x g_i(x,u_i)\) are continuous on \(U_0\times T\) and \(U_i\times V_i,\) respectively. If the system \(\nu _1+\cdots +\nu _m=0\), where \(\nu _j \in N(\overline{x}; C_j),\, j=1,...,m,\) has only the trivial solution \(\nu _j=0, \,j=1,...,m,\) then there exist \(\overline{\tau }\in T(\overline{x}),\, \overline{u}_i \in V_i(\overline{x}),\,\,i=1,...,p,\) and \((\mu _0,\mu _1,...,\mu _{p})\in {\mathbb {R}}_{+}^{p+1}\) with \(\sum \limits _{i=0}^p\mu _i=1\) such that

Moreover, we have \(\mu _0 >0\) if the condition (CQ) is satisfied at \(\overline{x}.\)

Proof

Observe first that the hypothesis (A2) is automatically satisfied at \(\overline{x}\) in our setting, as the maps \((x,\tau ) \mapsto \nabla _x f(x,\tau )\) and \((x,u_i) \mapsto \nabla _x g_i(x,u_i), i=1,...,p,\) are continuous on \(U_0\times T\) and \(U_i\times V_i,\) respectively. To verify the hypothesis (A1), we only treat the function f, since the argument for the functions \(g_i,\, i=1,...,p,\) is similar. To this end, we first claim that for each \(\epsilon > 0,\) there exists a neighborhood \(U_\epsilon \) of \(\overline{x}\) satisfying

where \(U_\epsilon \) can be chosen such that \(U_\epsilon \subset U_0\) and it is a convex set. To prove (3.12), suppose on the contrary that there exist \(\overline{\epsilon }>0\) and a sequence \(\{(x_k,y_k,\tau _k)\}\) in \(U_0 \times U_0 \times T\) such that \((x_k,y_k) \rightarrow (\overline{x},\overline{x})\) as \(k\rightarrow \infty \) and

Because of the compactness of T and passing to a subsequence if necessary, we may assume that \(\{\tau _k\}\) converges to some \(\overline{\tau }\in T.\) Besides, due to the continuity of the map \((x,\tau ) \mapsto \nabla _x f(x,\tau )\) on \(U_0\times T,\) it follows that

which contradicts (3.13).

Let (3.12) hold for a given \(\epsilon >0.\) For any \(x,\, y \in U_\epsilon \) with \(x\ne y\) and for any \(\tau \in T,\) by the mean value theorem (cf. [11, Theorem 2.4, p. 75]), we find \(z \in (x,y) \subset U_\epsilon \) satisfying the condition

where \((x,y):=\textrm{co}\{x,y\}\setminus \{x,y\}\). This, together with (3.12), gives us

Therefore, it follows that

Moreover, since (3.12) gives \(\Vert \nabla _x f(y, \tau )\Vert \le \Vert \nabla _x f(\overline{x}, \tau )\Vert + \epsilon \), we arrive at

We conclude that (3.15) holds for every \( x,\, y\in U_\epsilon \) and \( \tau \in T\) as the case of \(x=y\) also holds trivially.

By the continuity of function \(\tau \mapsto \Vert \nabla _x f(\overline{x},\tau )\Vert \) on the compact set T, it admits the maximum value over T, and so we can take \({\mathcal {L}}_0 \ge \underset{\tau \in T}{\max }\ \big \{ \Vert \nabla _x f(\overline{x},\tau )\Vert +2\epsilon \big \}.\) Consequently, the hypothesis (A1) is satisfied.

In this setting, we can verify (see, e.g., the arguments in [7, Corollary 3.4]) that the sets \(\{\nabla _x f(\overline{x}, \tau ) \,\mid \,\tau \in T(\overline{x})\} \text { and } \{\nabla _x g_i(\overline{x}, u_i) \,\mid \, u_i \in V_i(\overline{x})\}, \, i=1,...,p,\) are convex. Applying Theorem 3.1, we find \((\mu _0,\mu _1,...,\mu _{p})\in {\mathbb {R}}_{+}^{p+1}\) with \( \sum \limits _{i=0}^p\mu _i=1\) such that

which implies the assertions in (3.10) and (3.11), and thus completes the proof. \(\Box \)

Remark 3.1

By considering \(m:=1,\) Corollary 3.1 can be regarded as a generalized version of [19, Theorem 2.3], which was obtained by another approach.

Let us now illustrate the necessary optimality conditions given in Theorem 3.1.

Example 3.1

Let \(f: {\mathbb {R}}^2 \times T \rightarrow {\mathbb {R}}\) and \(g_i:{\mathbb {R}}^2 \times V_i \rightarrow {\mathbb {R}},\, i=1,2, 3 \) be given respectively by

where \(x:=(x_1,x_2)\in {\mathbb {R}}^2\), \( \tau \in T:=[-2,-1] \cup [1,2],\) \(u_1\in V_1:=[0,1],\,u_2 \in V_2:=[2,5]\) and \(u_3 \in V_3:=[-\dfrac{\pi }{2}, \pi ].\) We consider the robust optimization problem (RP) with geometric constraints \(C_j,\, j=1,2,\) given by \(C_1:=\{(x_1,x_2) \in {\mathbb {R}}^2 \, \mid \, x_1^2+x_2^2 \le 2\}\) and \(C_2:=[-2,0]\times [-1,\dfrac{3}{5}],\) which is the following problem

In this setting, for each \(x:=(x_1,x_2)\in {\mathbb {R}}^2\), we see that

and (cf. [7, Example 3.6]) that

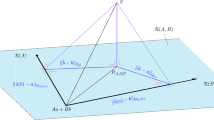

Denote \(S:=\{x:=(x_1,x_2)\in {\mathbb {R}}^2\mid x \in C_1\cap C_2,\, \mathcal G_i(x) \le 0, \,i=1,2, 3\}.\) Then, S is the feasible set of problem (EP1) and is depicted in the gray shade of Fig. 1.

Letting \(\overline{x}:=\big (-\dfrac{4}{5},\dfrac{3}{5}\big ) \in S,\) we can verify that the assumptions (A1) and (A2) are satisfied at \(\overline{x}\). Moreover, we can also check that \(\overline{x}\) is an optimal solution of problem (EP1). By direct calculation, we obtain that

Then, for each \( \tau \in T(\overline{x}),\, u_1\in V_1(\overline{x}),\,u_2\in V_2(\overline{x})\) and \(u_3\in V_3(\overline{x}),\) we have

Taking \(\mu _0=\dfrac{3}{4},\, \mu _2=\dfrac{1}{4}\) and \( \mu _1=\mu _3=0,\) we see that

which show that the necessary optimality conditions in Theorem 3.1 hold for the problem (EP1).

Fig. 1 The feasible set S of problem (EP1) is shaded in gray

Let us state a robust Karush–Kuhn–Tucker (KKT) condition for the problem (RP).

Definition 3.2

Let \(\overline{x}\) be a feasible point of problem (RP). We say that the robust (KKT) condition is satisfied at \(\overline{x}\) if there exists \( (\mu _1,...,\mu _{p})\in {\mathbb {R}}_{+}^{p}\) such that
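A form of the robust (KKT) condition that is consistent with the conclusion of Theorem 3.1 for \(\mu _0>0\) and with the convex combinations used in the proof of Theorem 3.2 is sketched below; it is an assumption about the intended display, not a quotation of it.

```latex
\begin{gather*}
0 \in \mathrm{co}\Big\{ \bigcup_{\tau\in T(\overline{x})} \partial_x f(\overline{x},\tau) \Big\}
+ \sum_{i=1}^{p} \mu_i\, \mathrm{co}\Big\{ \bigcup_{u_i\in V_i(\overline{x})} \partial_x g_i(\overline{x},u_i) \Big\}
+ \sum_{j=1}^{m} N(\overline{x};C_j),\\
\mu_i \max_{u_i\in V_i} g_i(\overline{x},u_i) = 0,\quad i=1,\dots,p.
\end{gather*}
```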

Remark 3.2

Under the assumptions of Theorem 3.1, it can be seen that if the condition (CQ) is satisfied at an optimal solution \(\overline{x}\) of problem (RP), then the robust (KKT) condition holds at \(\overline{x}.\) However, if the robust (KKT) condition is satisfied at a feasible point \(\overline{x}\) of problem (RP), we may not conclude that \(\overline{x}\) is an optimal solution of the problem, as the following example shows.

Example 3.2

Let \(f:{\mathbb {R}}^2\times T \rightarrow {\mathbb {R}}\) and \(g:{\mathbb {R}}^2 \times V_1 \rightarrow {\mathbb {R}}\) be defined by

where \(x:=(x_1,x_2)\in {\mathbb {R}}^2,\,\tau \in T:=[\dfrac{2}{3},1]\) and \(u\in V_1:=[-3,0].\) Consider the following problem

with the geometric constraint \(C_1:=\{x\in {\mathbb {R}}^2\, \mid \, x_1^2+x_2^2 \le 1\}.\)

Let \(\overline{x}=(0,0) \) be a feasible point of problem (EP2). By direct computation, we obtain

Observe that the robust (KKT) condition is satisfied at \(\overline{x}.\) However, \(\overline{x}\) is not an optimal solution of (EP2) as \(\mathcal F(\overline{x})=0 > -1 = {\mathcal {F}}(\widehat{x}),\) where \(\widehat{x}=(-1,0)\).

Inspired by [7], we define the following generalized convexity. For the convenience in the sequel, we employ the notations \({\mathcal {F}}(x):=\underset{\tau \in T}{\mathop {\max }}\, f(x,\tau ),\) and \({\mathcal {G}}_i( x):=\underset{{u_i}\in {V_i}}{\mathop {\max }}\, g_i( x,u_i),\, i=1,...,p,\) for \(x\in {\mathbb {R}}^n.\)

Definition 3.3

The combination \(({\mathcal {F}},{\mathcal {G}}_1,...,{\mathcal {G}}_p)\) is called generalized convex at \(\overline{x} \in \bigcap \limits _{j=1}^{m}C_j,\) if for any \(x \in \bigcap \limits _{j=1}^{m}C_j,\) there exists \(w \in \big (\sum \limits _{j=1}^mN(\overline{x};C_j)\big )^\circ \) such that

where \(T(\overline{x})\) and \(V_i(\overline{x}),\, i=1,...,p,\) are defined as in (3.1).
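Judging from how the definition is used in the proofs of Theorems 3.2 and 4.1, the inequalities take the following subgradient-type form; this is a sketch under that assumption.

```latex
\begin{align*}
f(x,\tau)-f(\overline{x},\tau) &\ge \langle x^{*}, w\rangle,
&& \forall x^{*}\in\partial_x f(\overline{x},\tau),\ \forall \tau\in T(\overline{x}),\\
g_i(x,u_i)-g_i(\overline{x},u_i) &\ge \langle x_i^{*}, w\rangle,
&& \forall x_i^{*}\in\partial_x g_i(\overline{x},u_i),\ \forall u_i\in V_i(\overline{x}),\ i=1,\dots,p.
\end{align*}
```

For convex \(f(\cdot ,\tau )\) and \(g_i(\cdot ,u_i)\) and the direction \(w:=x-\overline{x}\), these are exactly the subgradient inequalities of convex analysis.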

We can verify that if the functions \(f(\cdot ,\tau ),\, \tau \in T\) and \(g_i(\cdot , u_i),\, u_i \in V_i,\, i=1,...,p,\) are convex, then the inequalities in Definition 3.3 are automatically satisfied at any \(\overline{x}\in \bigcap \limits _{j=1}^{m}C_j\) by letting \(w:=x-\overline{x}\) for each \(x \in \bigcap \limits _{j=1}^{m}C_j\). However, the reverse is not true in general as the following example shows.

Example 3.3

Let \(f:{\mathbb {R}}\times T \rightarrow {\mathbb {R}}\) and \(g:{\mathbb {R}}\times V_1 \rightarrow {\mathbb {R}}\) be given by

where \(T:=[0,1]\) and \(V_1:=[0,2].\)

Denote \({\mathcal {F}}(x):=\max \limits _{\tau \in T}f(x,\tau )\) and \({\mathcal {G}}(x):=\max \limits _{u\in V_1}g(x,u)\) for \(x\in {\mathbb {R}}\). We consider \(C_1:=[-2,0]\) and \(\overline{x}=0 \in C_1.\) It holds that

In this setting, we can verify that the generalized convexity of \(({\mathcal {F}},{\mathcal {G}})\) is satisfied at \(\overline{x}\), while \(g(\cdot ,0)\) is not a convex function.

The next theorem supplies sufficient conditions for the optimality of problem (RP).

Theorem 3.2

Suppose that the robust (KKT) condition holds at a feasible point \(\overline{x}\) of problem (RP). If \(({\mathcal {F}},\mathcal G_1,...,{\mathcal {G}}_p)\) is generalized convex at \(\overline{x}\), then \(\overline{x}\) is an optimal solution of problem (RP).

Proof

As \(\overline{x}\) satisfies the robust (KKT) condition, we can find \((\mu _1,...,\mu _{p})\in {\mathbb {R}}_{+}^{p}\) and

such that

By (3.16), there exist \(\lambda _l \ge 0, \, x_l^* \in \partial _x f(\overline{x}, \tau _l), \,\tau _l \in T(\overline{x}), \,l=1,...,l_\tau , \,l_\tau \in {\mathbb {N}},\) \(\mu _{ik} \ge 0, \, x_{ik}^{*} \in \partial _x g_i(\overline{x}, u_{ik}), u_{ik} \in V_i(\overline{x}), \,k=1,...,k_i, \,k_i \in {\mathbb {N}}\) such that \( \sum \limits _{k=1}^{k_i} \mu _{ik}=1\), \(\sum \limits _{l=1}^{l_\tau }\lambda _l=1\) and

Therefore, we get from (3.17) that

Assume on the contrary that \(\overline{x}\) is not an optimal solution. Then, there exists a feasible point \(\widehat{x}\) of problem (RP) satisfying

where \({\mathcal {F}}(x):=\underset{\tau \in T}{\mathop {\max }}\, f(x,\tau )\) for each \(x \in {\mathbb {R}}^n.\)

By the generalized convexity of \(({\mathcal {F}},\mathcal G_1,...,{\mathcal {G}}_p)\) at \(\overline{x}\), for the above \(\widehat{x}\), one can find \(w \in \big (\sum \limits _{j=1}^m N(\overline{x};C_j)\big )^{\circ }\) such that

where the first inequality holds due to (3.19) and the definition of polar cone. This gives us

It is worth noting that from the definition of max functions \({\mathcal {F}}(x)\) and \( {\mathcal {G}}_i(x),\, i=1,...,p,\) we arrive at \(f(\widehat{x},\tau _l)\le {\mathcal {F}}(\widehat{x}),\, g_i(\widehat{x},u_{ik})\le {\mathcal {G}}_i(\widehat{x}),\,f(\overline{x},\tau _l)=\mathcal F(\overline{x}),\,g_i(\overline{x},u_{ik})= {\mathcal {G}}_i(\overline{x})\) for \(l=1,...,l_\tau ,\,l_\tau \in {\mathbb {N}},\,\tau _l \in T(\overline{x}),\, k=1,...,k_i, \,k_i \in {\mathbb {N}},\, u_{ik} \in V_i(\overline{x}).\) So, it follows by (3.21) that

Note that \( {\mathcal {G}}_i(\widehat{x})\le 0\) for \(i=1,...,p\) and by (3.18) that \(\mu _i{\mathcal {G}}_i(\overline{x})=0\). Therefore, the inequality (3.22) shows that

which entails a contradiction to (3.20), and so the proof is complete. \(\Box \)

Remark 3.3

With \(m=1,\) Theorem 3.2 extends [19, Theorem 2.4]. In the case where there are no geometric constraints, we refer the interested reader to [17, Proposition 2.1] for a necessary and sufficient condition for robust optimal solutions of a robust convex optimization problem.

The next example shows how one can utilize Theorem 3.2 to verify an optimal solution.

Example 3.4

Let \(f:{\mathbb {R}}^2 \times T \rightarrow {\mathbb {R}}\) and \(g:{\mathbb {R}}^2\times V_1 \rightarrow {\mathbb {R}}\) be defined by

where \( x:=(x_1,x_2)\in {\mathbb {R}}^2,\,\tau :=(\tau _1,\tau _2) \in T:=\{(\tau _1,\tau _2) \in {\mathbb {R}}^2 \, \mid \, \tau _1 \ge 0,\, \tau _2 \ge 0, \,\tau _1^2+\tau _2^2 \le 1\}\) and \(u\in V_1:= [-2,0].\) Consider a robust optimization problem:

where the geometric constraints \(C_1\) and \(C_2\) are described by

It is clear that \(\overline{x}:=(0,0)\) is a feasible point of problem (EP3). Denote \({\mathcal {F}}(x):=\max \limits _{\tau \in T}f(x,\tau )\) and \({\mathcal {G}}(x):=\max \limits _{u\in V_1}g(x,u)\) for \(x\in {\mathbb {R}}^2\). By direct calculation, one has

Then, we can verify that the robust (KKT) condition of problem (EP3) is satisfied at \(\overline{x}.\)

To verify the generalized convexity of \(({\mathcal {F}},{\mathcal {G}})\) at \(\overline{x},\) take an arbitrary \(x:=(x_1,x_2)\in C_1 \cap C_2.\) Then, by taking \(w:=(0,2x_1+2x_2) \in (N(\overline{x};C_1) +N(\overline{x};C_2)) ^\circ = {\mathbb {R}}_-^2\), we have

which shows that \(({\mathcal {F}},{\mathcal {G}})\) is generalized convex at \(\overline{x}.\) Now, applying Theorem 3.2, we conclude that \(\overline{x}\) is an optimal solution of the considered problem.

4 Duality in Robust Optimization

In this section, we propose a dual problem to the uncertain optimization problem (UP) and examine some robust duality relations for the pair of primal and dual problems. For the sake of convenience, we recall here the notations

where \(y\in {\mathbb {R}}^n.\)

For the uncertain optimization problem (UP), we address an uncertain dual optimization problem as follows:

where \(\tau \in T\) and the feasible set \(S_D\) is defined by

We investigate the problem (DU) by analyzing its robust (worst-case) counterpart:

Note that a point \((\overline{y}, \overline{\mu }) \in S_D\) is a solution of (DR) if \( {\mathcal {F}}(y) \le {\mathcal {F}}(\overline{y})\) for every \( (y, \mu ) \in S_D \).

The first theorem in this section provides a weak duality relation between (RP) and (DR).

Theorem 4.1

(Weak duality) Let x be a feasible point of the problem (RP) and let \((y, \mu ) \) be a feasible point of the problem (DR). If \(({\mathcal {F}},{\mathcal {G}}_1,...,{\mathcal {G}}_p)\) is generalized convex at y, then we have

Proof

Let \((y, \mu ) \in S_D\). This means that \(\mu :=(\mu _1,...,\mu _p) \in {\mathbb {R}}_+^p\) and there exist

such that

Assume that the family \(({\mathcal {F}},{\mathcal {G}}_1,...,{\mathcal {G}}_p)\) is generalized convex at y. Then, for \(x \in \bigcap \limits _{j=1}^{m}C_j\) above, we find \(w \in \big (\sum \limits _{j=1}^m N(y;C_j)\big ) ^\circ \) such that

We assert by (4.1) that there exist \(\lambda _l \ge 0, \, y_l^* \in \partial _x f(y, \tau _l),\, \tau _l \in T(y), \,l=1,...,l_\tau , \,l_\tau \in {\mathbb {N}},\) such that \(\sum \limits _{l=1}^{l_\tau }\lambda _l=1\) and

Therefore, from (4.4), we have

Since \(f(x,\tau _l)\le {\mathcal {F}}(x)\) and \(f(y,\tau _l)=\mathcal F(y)\) for all \(\tau _l \in T(y),\,l=1,...,l_\tau ,\) we arrive at

Similarly, we can verify that for each \(i=1,...,p,\) there exist \( \mu _{ik} \ge 0, \,k=1,...,k_i, \,k_i \in {\mathbb {N}},\) such that \(\sum \limits _{k=1}^{k_i} \mu _{ik}=1,\) and then

Combining now (4.2) with (4.5) and (4.6) gives us

This shows that

Furthermore, \(\sum \limits _{i=1}^{p}\mu _i {\mathcal {G}}_i(y) \ge 0\) due to (4.3) and \({\mathcal {G}}_i(x)\le 0\) for all \(i=1,...,p,\) because x is a feasible point of problem (RP). So, we get by (4.7) that

which completes the proof of the theorem.\(\Box \)
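As a numerical sanity check of the weak duality inequality, the following minimal sketch evaluates both problems on a one-dimensional toy instance. All data here are hypothetical and not taken from the paper: \(f(x,\tau )=x^2+\tau x\) with \(\tau \in [-1,1]\), \(g(x,u)=u-x\) with \(u\in [0,1]\), and \(C=[0,3]\). The dual feasible set is assumed to be of Mond–Weir type (a stationarity inclusion at y together with \(\mu \, {\mathcal {G}}(y)\ge 0\)), which is what the proof above suggests, and the data are convex, a case in which the generalized convexity hypothesis is assumed to hold.

```python
# Toy illustration only: the data below are hypothetical, not from the paper.

def F(x):
    # Worst case of f(x, tau) = x**2 + tau*x over tau in [-1, 1].
    # f is linear in tau, so checking the two endpoints suffices.
    return max(x * x - x, x * x + x)

def G(x):
    # Worst case of g(x, u) = u - x over u in [0, 1], attained at u = 1.
    return 1.0 - x

def primal_feasible(x):
    # x must lie in C = [0, 3] and satisfy the robust constraint G(x) <= 0.
    return 0.0 <= x <= 3.0 and G(x) <= 1e-12

def dual_multiplier(y):
    # For 0 < y < 3: F'(y) = 2y + 1, G'(y) = -1 and N(y; C) = {0},
    # so the assumed stationarity condition 0 = F'(y) + mu*G'(y) gives mu = 2y + 1.
    return 2.0 * y + 1.0

def dual_feasible(y, mu):
    # Assumed Mond-Weir-type dual feasibility at interior points of C.
    stationary = abs((2.0 * y + 1.0) - mu) <= 1e-9
    return 0.0 < y < 3.0 and mu >= 0.0 and stationary and mu * G(y) >= -1e-12

grid = [k / 1000.0 for k in range(1, 3000)]  # sample points in (0, 3)
primal_values = [F(x) for x in grid if primal_feasible(x)]
dual_values = [F(y) for y in grid if dual_feasible(y, dual_multiplier(y))]

best_primal = min(primal_values)
best_dual = max(dual_values)
print(best_primal, best_dual)
```

On this instance every sampled primal value dominates every sampled dual value, and the two optimal values coincide at the point 1, which is consistent with the weak duality relation (and, here, with strong duality). Boundary points of C are omitted from the dual sampling for simplicity.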

The following example emphasizes the importance of the generalized convexity imposed in Theorem 4.1.

Example 4.1

Let \(f:{\mathbb {R}}^2\times T \rightarrow {\mathbb {R}}\) and \( g:{\mathbb {R}}^2 \times V_1 \rightarrow {\mathbb {R}}\) be defined respectively by

where \(x:=(x_1,x_2)\in {\mathbb {R}}^2,\,\tau \in T:=[-2,0]\) and \(u_1 \in V_1:=[-1,0].\) Consider a robust optimization problem:

where the geometric constraint \(C_1\) is given by \( C_1:=\{(x_1,x_2)\in {\mathbb {R}}^2\, \mid \, x_1^2+x_2^2 \le 1\}.\) Then, the dual problem in terms of (DR) for (EP4) is defined by

Let \(\overline{x}=\big (\dfrac{1}{2},\dfrac{1}{2}\big ), \, \overline{\mu }=1\) and \(\overline{y}=(0,0)\) and denote \({\mathcal {F}}(x):=\max \limits _{\tau \in T}f(x,\tau )\) and \({\mathcal {G}}(x):=\max \limits _{u_1\in V_1}g(x,u_1)\) for \(x\in {\mathbb {R}}^2\). A direct calculation shows that

and so we can check that \(\overline{x}\) is feasible for (EP4) and \(( \overline{y},\overline{\mu }) \) is feasible for (ED4). However, it holds that

which shows that the conclusion of Theorem 4.1 fails in this setting. The reason is that the generalized convexity of \(({\mathcal {F}},{\mathcal {G}})\) at \(\overline{y} \) is violated.

In the following theorem, we establish strong duality and converse duality relations between (RP) and (DR).

Theorem 4.2

(Strong and converse duality) Consider the robust optimization problem (RP) and its dual problem (DR).

(i) Let assumptions (A1) and (A2) hold at an optimal solution \(\overline{x}\) of problem (RP). Assume that the condition (CQ) is satisfied at \(\overline{x}\) and that the equation \(\nu _1+\cdots +\nu _m=0,\) where \(\nu _j \in N(\overline{x}; C_j),\, j=1,...,m,\) has only the trivial solution \(\nu _1=\cdots =\nu _m=0.\) If \((\mathcal F,{\mathcal {G}}_1,...,{\mathcal {G}}_p)\) is generalized convex at any \(y \in \bigcap \limits _{j=1}^{m}C_j,\) then there exists \( \overline{\mu }\in {\mathbb {R}}_+^p\) such that \((\overline{x}, \overline{\mu })\) is a solution of problem (DR).

(ii) Let \((\overline{x}, \overline{\mu })\) be a feasible point of problem (DR). If \(\overline{x}\) is a feasible point of problem (RP) and \(({\mathcal {F}},{\mathcal {G}}_1,...,{\mathcal {G}}_p)\) is generalized convex at \(\overline{x},\) then \(\overline{x}\) is an optimal solution of (RP).

Proof

(i) As \(\overline{x}\) is an optimal solution of problem (RP), it follows that \(\overline{x}\in \bigcap \limits _{j=1}^{m}C_j.\) According to Theorem 3.1, we can find \( (\mu _0,\mu _1,...,\mu _{p})\in {\mathbb {R}}_{+}^{p+1}\) with \(\mu _0>0\) and \( \sum \limits _{i=0}^{p} \mu _i=1\) such that

By dividing the above relationships by \(\mu _0,\) and then setting \(\overline{\mu }_i:=\dfrac{\mu _i}{\mu _0},\,i=1,...,p,\) we obtain that \( \overline{\mu }:=(\overline{\mu }_1,...,\overline{\mu }_p) \in {\mathbb {R}}_+^p\) and \((\overline{x}, \overline{\mu }) \in S_D.\)

Now, let \(({\mathcal {F}},{\mathcal {G}}_1,...,{\mathcal {G}}_p)\) be generalized convex at any \(y\in \bigcap \limits _{j=1}^{m}C_j.\) For any \((y,\mu ) \in S_D,\) we invoke Theorem 4.1 to conclude that

This means that \((\overline{x}, \overline{\mu })\) is a solution of problem (DR).

(ii) As \((\overline{x}, \overline{\mu }) \in S_D,\) we have \( \overline{\mu }:=(\overline{\mu }_1,...,\overline{\mu }_{p})\in {\mathbb {R}}_{+}^p\) and

Let \(\overline{x}\) be a feasible point of problem (RP). Then \( \overline{\mu }_i\underset{u_i\in V_i}{\mathop {\,\max }} g_i(\overline{x},u_i) \le 0\) for all \(i=1,...,p,\) since \(\overline{\mu }_i \ge 0.\) This, together with (4.9), ensures that

Namely, the robust (KKT) condition of problem (RP) holds at \(\overline{x}\). To complete the proof, it suffices to invoke Theorem 3.2. \(\Box \)
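The normalization step used in part (i) can be spelled out as follows. This is only a sketch: \(A\) and \(B_i\) are placeholder symbols (not notation from the paper) for the sets of subgradients of f and \(g_i\) at \(\overline{x}\) that enter the relation supplied by Theorem 3.1, and that relation is assumed here to have the Fritz John-type form on the left-hand side.

\[
0 \in \mu _0 A + \sum \limits _{i=1}^{p}\mu _i B_i + \sum \limits _{j=1}^{m} N(\overline{x};C_j),\quad \mu _0>0
\quad \Longrightarrow \quad
0 \in A + \sum \limits _{i=1}^{p}\overline{\mu }_i B_i + \sum \limits _{j=1}^{m} N(\overline{x};C_j),\quad \overline{\mu }_i:=\dfrac{\mu _i}{\mu _0}\ge 0.
\]

The implication uses only that each \(N(\overline{x};C_j)\) is a cone, so that \(\dfrac{1}{\mu _0}N(\overline{x};C_j)=N(\overline{x};C_j).\) Likewise, if the complementarity relations read \(\mu _i\, {\mathcal {G}}_i(\overline{x})=0,\, i=1,...,p,\) then they remain valid with \(\overline{\mu }_i\) in place of \(\mu _i\) after division by \(\mu _0.\)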

We finish this section by giving an example that shows how one can calculate an optimal solution of a robust optimization problem through its dual counterpart.

Example 4.2

Let \(f:{\mathbb {R}}^2\times T \rightarrow {\mathbb {R}}\) and \(g_i:{\mathbb {R}}^2 \times V_i \rightarrow {\mathbb {R}},\, i=1,2\) be defined by

where \(x:=(x_1,x_2) \in {\mathbb {R}}^2,\, \tau \in T:=[1,2],\,u_1 \in V_1:= [-5,-3] \) and \(u_2 \in V_2:=[0,2].\)

Consider a robust optimization problem:

where the geometric constraints \(C_1\) and \(C_2\) are given respectively by

Then, the dual problem in terms of (DR) for (EP5) is defined by

where the feasible set \(S_D\) is given by

with \({\mathcal {G}}_i(x):=\underset{u_i\in V_i}{\mathop {\max }} g_i(x,u_i),\, i=1,2\) for \(x\in {\mathbb {R}}^2.\)

Denoting \(\overline{x}:=(0,0)\), we see that \(\overline{x}\) is a feasible point of problem (EP5). By direct calculation, we have

Taking \(\overline{\mu }:=(1,1),\) we see that \((\overline{x}, \overline{\mu }) \in S_D.\) Moreover, we can verify that \(({\mathcal {F}},{\mathcal {G}}_1,{\mathcal {G}}_2)\) is generalized convex at \(\overline{x},\) where \({\mathcal {F}}(x):=\underset{\tau \in T}{\max }f(x,\tau )\) for \(x\in {\mathbb {R}}^2.\) Employing now Theorem 4.2(ii), we assert that \(\overline{x}\) is an optimal solution of problem (EP5).

5 Conclusions

This paper studied a robust optimization problem in which the related data are nonsmooth and nonconvex functions and the constraint set involves an intersection of finitely many closed sets. More concretely, we presented necessary conditions for the optimality of the underlying problem. Under the additional assumptions that generalized convexity holds and that the robust (KKT) condition is satisfied at a given feasible point, we provided sufficient conditions for that feasible point to be optimal. We also formulated a dual problem to the robust optimization problem and examined the duality relations between them.

It would be interesting to develop numerical schemes, based on the obtained optimality conditions, for finding optimal solutions or the optimal value of the considered robust optimization problem. When the finitely many geometric constraint sets themselves involve data uncertainties, the corresponding robust optimization problem might become intractable, and the current approach would not be applicable, because the robust counterpart then inherits an infinite number of geometric constraint sets. Approaches such as the tangential extremal principles for countable set systems in [25, 26] could be developed to study this type of uncertain/robust optimization problem. Moreover, applications of the obtained results to other general classes of robust optimization problems are well worth further study.

References

Allevi, E., Martínez-Legaz, J.E., Riccardi, R.: Optimality conditions for convex problems on intersections of non necessarily convex sets. J. Glob. Optim. 77, 143–155 (2020)

Bao, T.Q., Mordukhovich, B.S.: Existence of minimizers and necessary conditions in set-valued optimization with equilibrium constraints. Appl. Math. 52, 453–472 (2007)

Ben-Tal, A., El Ghaoui, L., Nemirovski, A.: Robust Optimization. Princeton University Press, Princeton (2009)

Bertsimas, D., Brown, D.B., Caramanis, C.: Theory and applications of robust optimization. SIAM Rev. 53, 464–501 (2011)

Bonnans, J.F., Shapiro, A.: Perturbation Analysis of Optimization Problems. Springer, New York (2000)

Chieu, N.H., Jeyakumar, V., Li, G., Mohebi, H.: Constraint qualifications for convex optimization without convexity of constraints: new connections and applications to best approximation. Eur. J. Oper. Res. 265, 19–25 (2018)

Chuong, T.D.: Optimality and duality for robust multiobjective optimization problems. Nonlinear Anal. 134, 127–143 (2016)

Chuong, T.D.: Robust optimality and duality in multiobjective optimization problems under data uncertainty. SIAM J. Optim. 30, 1501–1526 (2020)

Chuong, T.D., Jeyakumar, V.: Convergent hierarchy of SDP relaxations for a class of semi infinite convex polynomial programs and application. Appl. Math. Comput. 315, 381–399 (2017)

Chuong, T.D., Jeyakumar, V.: Tight SDP relaxations for a class of robust SOS-convex polynomial programs without the Slater condition. J. Convex Anal. 25, 1159–1182 (2018)

Clarke, F.H., Ledyaev, Y.S., Stern, R.J., Wolenski, P.R.: Nonsmooth Analysis and Control Theory, p. 198. Springer, New York (1998)

Ghafari, N., Mohebi, H.: Optimality conditions for nonconvex problems over nearly convex feasible sets. Arab. J. Math. 10, 395–408 (2021)

Hong, Z., Jiao, L., Kim, D.S.: Approximate optimality conditions for robust convex optimization without convexity of constraints. Linear Nonlinear Anal. 5, 173–182 (2019)

Jalilian, K., Pirbazari, K.N.: Convex optimization without convexity of constraints on non-necessary convex sets and its applications in customer satisfaction in automotive industry. Numer. Algebra Control Optim. 12, 537–550 (2022)

Jeyakumar, V., Li, G.Y.: Strong duality in robust convex programming: complete characterizations. SIAM J. Optim. 20, 3384–3407 (2010)

Jeyakumar, V., Li, G.Y.: Robust solutions of quadratic optimization over single quadratic constraint under interval uncertainty. J. Glob. Optim. 55, 209–226 (2013)

Jeyakumar, V., Lee, G.M., Li, G.Y.: Characterizing robust solution sets of convex programs under data uncertainty. J. Optim. Theory Appl. 164, 407–435 (2015)

Lee, J.H., Lee, G.M.: On \(\epsilon \)-solutions for convex optimization problems with uncertainty data. Positivity 16, 509–526 (2012)

Lee, G.M., Kim, M.H.: On duality theorems for robust optimization problems. J. Chungcheong Math. Soc. 4, 723–734 (2013)

Lee, G.M., Son, P.T.: On nonsmooth optimality theorems for robust optimization problems. Bull. Korean Math. Soc. 51, 287–301 (2014)

Lee, J.H., Jiao, L.: On quasi \(\epsilon \)-solution for robust convex optimization problems. Optim. Lett. 11, 1609–1622 (2017)

Li, X.B., Wang, S.: Characterizations of robust solution set of convex programs with uncertain data. Optim. Lett. 12, 1387–1402 (2018)

Mordukhovich, B.S.: Necessary conditions in nonsmooth minimization via lower and upper subgradients. Set-Valued Anal. 12, 163–193 (2004)

Mordukhovich, B.S.: Variational Analysis and Generalized Differentiation I: Basic Theory. Springer, Berlin (2006)

Mordukhovich, B.S., Hung, P.M.: Tangential extremal principle for finite and infinite set systems I: basic theory. Math. Program. Ser. B 136, 3–30 (2012)

Mordukhovich, B.S., Hung, P.M.: Tangential extremal principles for finite and infinite systems of sets II: applications to semi-infinite and multiobjective optimization. Math. Program. Ser. B 136, 31–63 (2012)

Mordukhovich, B.S.: Variational Analysis and Applications. Springer, New York (2018)

Sisarat, N., Wangkeeree, R.: Characterizing the solution set of convex optimization problems without convexity of constraints. Optim. Lett. 14, 1127–1144 (2020)

Sisarat, N., Wangkeeree, R., Lee, G.M.: Some characterizations of robust solution sets for uncertain convex optimization problems with locally Lipschitz inequality constraints. J. Ind. Manag. Optim. 16, 469–493 (2020)

Sun, X.K., Li, X.B., Long, X.J., Peng, Z.Y.: On robust approximate optimal solutions for uncertain convex optimization and applications to multi-objective optimization. Pacific J. Optim. 4, 621–643 (2017)

Sun, X.K., Peng, Z.Y., Guo, X.L.: Some characterizations of robust optimal solutions for uncertain convex optimization problems. Optim. Lett. 10, 1463–1478 (2016)

Tuy, H.: Convex Analysis and Global Optimization. Springer Optimization and Its Applications, vol. 110. Springer, Berlin (2016)

Acknowledgements

The authors are grateful to the editor and referees for the valuable comments and suggestions. This research is funded by Vietnam National University Ho Chi Minh City (VNU-HCM) under grant number T2024-26-01.

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

Additional information

Communicated by Juan Parra.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Hung, N.C., Chuong, T.D. & Anh, N.L.H. Optimality and Duality for Robust Optimization Problems Involving Intersection of Closed Sets. J Optim Theory Appl (2024). https://doi.org/10.1007/s10957-024-02447-w

DOI: https://doi.org/10.1007/s10957-024-02447-w