Abstract

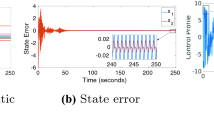

In this paper, we develop a novel non-parametric online actor-critic reinforcement learning (RL) algorithm to solve optimal regulation problems for a class of continuous-time affine nonlinear dynamical systems. To handle value function approximation (VFA) when the value function has an inherently nonlinear and unknown structure, a reproducing kernel Hilbert space (RKHS)-based kernelized method is designed through online sparsification, where the dictionary size is fixed and its elements are updated online. In addition, a linear independence check condition, i.e., an online criterion, is designed to determine whether the online data should be inserted into the dictionary. The RKHS-based kernelized VFA has a variable structure that changes with the online data collection, which distinguishes it from classical parametric VFA methods with a fixed structure. Furthermore, we develop a sparse online kernelized actor-critic RL method to learn the unknown optimal value function and the optimal control policy in an adaptive fashion. Convergence of the presented kernelized actor-critic learning method to the optimum is established, and boundedness of the closed-loop signals during the online learning phase is guaranteed. Finally, a simulation example demonstrates the effectiveness of the presented kernelized actor-critic learning algorithm.
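The abstract does not state the form of the linear independence check, so the following is only a minimal sketch of one common choice, the approximate linear dependence (ALD) test used in kernel methods. The Gaussian kernel, its width, the threshold, the budget, and the oldest-first eviction rule are all assumptions made for illustration, and the function names are hypothetical rather than taken from the paper.

```python
import numpy as np

def gaussian_kernel(x, y, width=1.0):
    """Gaussian (RBF) kernel; the kernel choice and width are assumptions."""
    diff = np.asarray(x, dtype=float) - np.asarray(y, dtype=float)
    return float(np.exp(-np.dot(diff, diff) / (2.0 * width ** 2)))

def ald_residual(dictionary, x, kernel=gaussian_kernel, jitter=1e-10):
    """Squared RKHS distance from k(x, .) to the span of the dictionary features."""
    if not dictionary:
        return kernel(x, x)
    # Gram matrix of the current dictionary and kernel vector of the new sample.
    K = np.array([[kernel(a, b) for b in dictionary] for a in dictionary])
    k_vec = np.array([kernel(a, x) for a in dictionary])
    # A small jitter keeps the linear solve well conditioned.
    coeffs = np.linalg.solve(K + jitter * np.eye(len(dictionary)), k_vec)
    return kernel(x, x) - float(k_vec @ coeffs)

def update_dictionary(dictionary, x, threshold=0.1, budget=5):
    """Insert x only if it is sufficiently independent of the dictionary.

    Hypothetical rule: once the budget is exceeded, the oldest element is
    evicted so that the dictionary size stays fixed.
    """
    if ald_residual(dictionary, x) <= threshold:
        return dictionary, False
    updated = dictionary + [np.asarray(x, dtype=float)]
    if len(updated) > budget:
        updated = updated[1:]
    return updated, True

# Usage: a near-duplicate sample is rejected, a distant one is inserted.
dictionary = []
for sample in ([0.0, 0.0], [0.01, 0.0], [2.0, 2.0]):
    dictionary, inserted = update_dictionary(dictionary, sample)
    print(sample, "inserted" if inserted else "skipped")
```

A larger threshold yields a sparser dictionary at the cost of a coarser approximation; the trade-off used in the paper is not recoverable from the abstract.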

This work was supported in part by the National Natural Science Foundation of China under Grants 61903028, 61973330, 61803371 and 61773075, in part by the Beijing Natural Science Foundation under Grant 4212038, in part by the Open Research Project of the State Key Laboratory of Management and Control for Complex Systems, Institute of Sciences under Grant 20210108, in part by the Open Research Project of the State Key Laboratory of Industrial Control Technology, Zhejiang University, China under Grant ICT2021B48, in part by the Fundamental Research Funds for the Central Universities under Grant 2019NTST25, and in part by the State Key Laboratory of Synthetical Automation for Process Industries under Grant 2019-KF-23-03.

Cite this article

Yang, Y., Zhu, H., Zhang, Q. et al. Sparse online kernelized actor-critic Learning in reproducing kernel Hilbert space. Artif Intell Rev 55, 23–58 (2022). https://doi.org/10.1007/s10462-021-10045-9

DOI: https://doi.org/10.1007/s10462-021-10045-9