Abstract

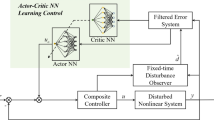

This paper studies the anti-disturbances adaptive control problem with reinforcement learning (RL) actor-critic method for systems which subjected to matched, mismatched disturbances and input uncertainties. As most of the classical adaptive methods are not applicable in this case, firstly, actor-critic networks are introduced to approximate the unknown dynamics and cost function respectively. And the critic network is used to judge the performance of the actor network and give reinforcement signal to guide the updating of network weights. Furthermore, by using the hyperbolic tangent function to estimate the disturbances boundaries, the input uncertainties and time-varying disturbances can be matched and solved. As a result, an adaptive controller and a series of adaptive parameter update laws based on the backstep** method are proposed, which can accelerate the convergence under multi-source uncertainties without priori information. It also overcomes the shortcoming of data-based reinforcement learning not guaranteeing stability. Finally, through analyzing the Lyapunov function, the controller is proved to be actual exponential stable and all kinds of errors are bounded. The numerical simulation shows the validity and superiority of the proposed method.

Access this chapter

Tax calculation will be finalised at checkout

Purchases are for personal use only

Similar content being viewed by others

References

Liu, L., Gao, T., Liu, Y.J., et al.: Time-varying IBLFs-based adaptive control of uncertain nonlinear systems with full state constraints. Automatica 129, 109595 (2021)

Song, Y., Guo, J., Huang, X.: Smooth neuroadaptive PI tracking control of nonlinear systems with unknown and nonsmooth actuation characteristics. IEEE Trans. Neural Netw. Learn. Syst. 28(9), 2183–2195 (2016)

Hamayun, M.T., Edwards, C., Alwi, H.: A fault tolerant direct control allocation scheme with integral sliding modes. In: Hamayun, M.T., Edwards, C., Alwi, H. (eds.) Fault Tolerant Control Schemes Using Integral Sliding Modes. Studies in Systems, Decision and Control, vol. 61, pp. 63–79. Springer, Cham (2016). https://doi.org/10.1007/978-3-319-32238-4_4

Wang, Y., Zhu, B., Zhang, H., et al.: Functional observer-based finite-time adaptive ISMC for continuous systems with unknown nonlinear function. Automatica 125, 109468 (2021)

**feng, L., Fucai, L., Yaxue, R.: Fuzzy identification of nonlinear dynamic system based on selection of important input variables. J. Syst. Eng. Electron. 99, 1–11 (2022)

Zhang, T., Liu, H., **a, M., et al.: Adaptive neural control of MIMO uncertain nonlinear systems with unknown dynamics and output constraint. Int. J. Adapt. Control Signal Process. 32(12), 1731–1747 (2018)

Tang, Z.L., Ge, S.S., Tee, K.P., et al.: Adaptive neural control for an uncertain robotic manipulator with joint space constraints. Int. J. Control 89(7), 1428–1446 (2016)

Zhang, T., **a, M., Yi, Y.: Adaptive neural dynamic surface control of strict-feedback nonlinear systems with full state constraints and unknown dynamics. Automatica 81, 232–239 (2017)

Yager, R.R.: Implementing fuzzy logic controllers using a neural network framework. Fuzzy Sets Syst. 48(1), 53–64 (1992)

Fu, S., Qiu, J., Chen, L., et al.: Adaptive fuzzy observer design for a class of switched nonlinear systems with actuator and sensor faults. IEEE Trans. Fuzzy Syst. 26(6), 3730–3742 (2018)

Chen, Y., Liu, Z., Chen, C.L.P., et al.: Adaptive fuzzy control of switched nonlinear systems with uncertain dead-zone: a mode-dependent fuzzy dead-zone model. Neurocomputing 432, 133–144 (2021)

Feng, G.: A survey on analysis and design of model-based fuzzy control systems. IEEE Trans. Fuzzy Syst. 14(5), 676–697 (2006)

Liu, Y.J., Li, S., Tong, S., et al.: Adaptive reinforcement learning control based on neural approximation for nonlinear discrete-time systems with unknown nonaffine dead-zone input. IEEE Trans. Neural Netw. Learn. Syst. 30(1), 295–305 (2018)

Peng, Z., Hu, J., Shi, K., et al.: A novel optimal bipartite consensus control scheme for unknown multi-agent systems via model-free reinforcement learning. Appl. Math. Comput. 369, 124821 (2020)

Berkenkamp, F., Turchetta, M., Schoellig, A., et al.: Safe model-based reinforcement learning with stability guarantees. Adv. Neural Inf. Process. Syst. 30 (2017)

Yuan, J., Lamperski, A.: Online control basis selection by a regularized actor critic algorithm. In: 2017 American Control Conference (ACC), pp. 4448–4453. IEEE (2017)

Han, M., Tian, Y., Zhang, L., et al.: Reinforcement learning control of constrained dynamic systems with uniformly ultimate boundedness stability guarantee. Automatica 129, 109689 (2021)

Ouyang, Y., Dong, L., Wei, Y., et al.: Neural network based tracking control for an elastic joint robot with input constraint via actor-critic design. Neurocomputing 409, 286–295 (2020)

Grondman, I., Vaandrager, M., Busoniu, L., et al.: Efficient model learning methods for actor–critic control. IEEE Trans. Syst. Man Cybern. Part B (Cybern.) 42(3), 591–602 (2011)

Wang, Z., Yuan, J.: Fuzzy adaptive fault tolerant IGC method for STT missiles with time-varying actuator faults and multisource uncertainties. J. Franklin Inst. 357(1), 59–81 (2020)

Author information

Authors and Affiliations

Corresponding author

Editor information

Editors and Affiliations

Rights and permissions

Copyright information

© 2023 Bei**g HIWING Sci. and Tech. Info Inst

About this paper

Cite this paper

Chang, Y., Zhu, Z., **ng, X. (2023). Reinforcement Learning-Based Anti-disturbances Adaptive Control for Systems Subjected to Mismatched Disturbances and Input Uncertainties. In: Fu, W., Gu, M., Niu, Y. (eds) Proceedings of 2022 International Conference on Autonomous Unmanned Systems (ICAUS 2022). ICAUS 2022. Lecture Notes in Electrical Engineering, vol 1010. Springer, Singapore. https://doi.org/10.1007/978-981-99-0479-2_82

Download citation

DOI: https://doi.org/10.1007/978-981-99-0479-2_82

Published:

Publisher Name: Springer, Singapore

Print ISBN: 978-981-99-0478-5

Online ISBN: 978-981-99-0479-2

eBook Packages: Intelligent Technologies and RoboticsIntelligent Technologies and Robotics (R0)