Abstract

We extend the convergence analysis of the Scholtes-type regularization method for cardinality-constrained optimization problems (CCOP). Its behavior is clarified in the vicinity of saddle points, and not only of minimizers, as has been done in the literature so far. This becomes possible by using as an intermediate step the recently introduced regularized continuous reformulation of a cardinality-constrained optimization problem. We show that the Scholtes-type regularization method is well-defined locally around a nondegenerate T-stationary point of this regularized continuous reformulation. Moreover, the nondegenerate Karush–Kuhn–Tucker points of the corresponding Scholtes-type regularization converge to a T-stationary point having the same index, i.e. its topological type persists. As a consequence, we conclude that the global structure of the Scholtes-type regularization essentially coincides with that of CCOP.

1 Introduction

In nonconvex optimization, Scholtes-type regularization methods have been popular since the seminal paper [1]. Typically, nonsmooth constraints are relaxed by means of a parameter. Then, Karush–Kuhn–Tucker points of the induced nonlinear programs need to be computed. They are shown to converge towards suitably defined stationary points of the original optimization problem as the regularization parameter tends to zero. Scholtes-type regularization methods for mathematical programs with complementarity (MPCC), vanishing (MPVC), switching (MPSC), and orthogonality type constraints (MPOC) have been examined along these lines in the literature so far, see e.g. [1,2,3,4], respectively, for further details.

In this paper, we study the Scholtes-type regularization method for the class of cardinality-constrained optimization problems:

with the feasible set given by equality, inequality, and cardinality constraints, where the so-called zero “norm” \(\left\| x\right\| _0 = \left| \left\{ i \in \{1,\ldots , n\}\; \vert \; x_i\ne 0\right\} \right| \) is counting non-zero entries of x. Here, we assume that the objective function f, as well as the equality and inequality constraints \(h=\left( h_p, p \in P\right) \), \(g=\left( g_q, q \in Q\right) \) are twice continuously differentiable, and \(s \in \{1,\ldots , n-1\}\) is an integer. In order to arrive at the Scholtes-type regularization, the so-called continuous reformulation of CCOP from [5] is helpful:

As pointed out there, \({\bar{x}}\) solves CCOP if and only if there exists a vector \({\bar{y}}\) such that \(\left( {\bar{x}}, {\bar{y}}\right) \) solves (1). In order to tackle (1) numerically, [6] suggests regularizing the orthogonality type constraints by using Scholtes’ idea, cf. [1]:

where \(t>0\). Further in [7], the authors prove that—under some suitable constraint qualification and second-order sufficient condition—the Scholtes-type regularization method is well-defined locally around a minimizer of (1). Moreover, the Karush–Kuhn–Tucker points of (2) converge to an S-stationary point of (1) whenever \(t\rightarrow 0\).

Our goal is to extend the convergence analysis of the Scholtes-type regularization method beyond minimizers of (1) to all kinds of its saddle points. By doing so, we intend to relate the indices of nondegenerate Karush–Kuhn–Tucker points of the Scholtes-type regularization to those of T-stationary points of the regularized continuous reformulation. Here, nondegeneracy refers to tailored versions of the linear independence constraint qualification, strict complementarity, and second-order regularity. Assuming nondegeneracy, Karush–Kuhn–Tucker points and T-stationary points can be classified according to their quadratic and T-index, respectively. The index encodes the local structure of the optimization problem under consideration in algebraic terms and its global structure in the sense of Morse theory, see [8, 9]. We note that for our purpose we first need to regularize the continuous reformulation (1). The reason is that all T-stationary points of (1), considered as an MPOC instance, turn out to be degenerate, cf. [4]. To overcome this obstacle, it has been suggested in [9] not only to linearly perturb the objective function in (1) with respect to the y-variables, but also to relax the upper bounds on them. As for our main results, the Scholtes-type regularization method proves to be well-defined locally around a nondegenerate T-stationary point of the regularized continuous reformulation. Moreover, the nondegenerate Karush–Kuhn–Tucker points of its Scholtes-type regularization converge to a T-stationary point having the same index. These results allow us to relate the x-variables of the Karush–Kuhn–Tucker points of the Scholtes-type regularization to the M-stationary points of CCOP directly.

We emphasize that the study of saddle points for the Scholtes-type regularization is valuable not only from the global optimization perspective, but also from the practical point of view. Indeed, since the Scholtes-type regularization falls into the scope of nonlinear programming, we can only hope to efficiently compute its Karush–Kuhn–Tucker points. This can be done e.g. by using Newton-type methods, which, as is well known, do not in general converge towards minimizers. The computed Karush–Kuhn–Tucker points of the Scholtes-type regularization may thus be saddle points of different kinds. Their convergence to the saddle points of the regularized continuous reformulation of CCOP and of CCOP itself then has to be addressed.

The article is organized as follows. In Sect. 2 we discuss some preliminary results on CCOP and its regularized continuous reformulation. Sect. 3 is devoted to the extended convergence analysis of its Scholtes-type regularization.

Our notation is standard. The cardinality of a finite set A is denoted by \(\vert A \vert \). The n-dimensional Euclidean space is denoted by \(\mathbb {R}^n\) with the coordinate vectors \(e_i,i= 1,\ldots , n\). The vector consisting of ones is denoted by e. Given a twice continuously differentiable function \(f:\mathbb {R}^n\rightarrow \mathbb {R}\), \(\nabla f\) denotes its gradient, and \(D^2f\) stands for its Hessian.

2 Preliminaries

We start with the notion of nondegenerate stationarity for CCOP as described in [10]. For that, we use the index set of active inequality constraints and the index set of vanishing x-variables, i.e.

Let us introduce the CCOP-tailored linear independence constraint qualification.

Definition 1

(CC-LICQ, see [11]) We say that a feasible point \({\bar{x}}\) of CCOP satisfies the cardinality-constrained linear independence constraint qualification (CC-LICQ) if the following gradients are linearly independent:

It was shown in [10] that M-stationarity is the topologically relevant stationarity concept for CCOP in the sense of Morse theory.

Definition 2

(M-stationarity, see [5]) A CCOP feasible point \({\bar{x}}\) is called M-stationary if there exist multipliers

such that the following conditions hold:

It is convenient to define the Lagrange function, since the multipliers are unique under CC-LICQ, cf. [6],

We also use the corresponding tangent space

We now focus on the definition of nondegeneracy for M-stationary points, which was introduced in [10]. It is justified there by showing that all M-stationary points of CCOP are generically nondegenerate.

Definition 3

(Nondegenerate M-stationarity, see [10]) An M-stationary point \({\bar{x}}\) of CCOP is called nondegenerate if

- NDM1: CC-LICQ holds at \({\bar{x}}\),

- NDM2: \({\bar{\mu }}_q>0\) for all \(q\in Q_0({\bar{x}})\),

- NDM3: if \(\left\| {\bar{x}}\right\| _0<s\) then \({\bar{\gamma }}_i\ne 0\) for all \(i\in I_0({\bar{x}})\),

- NDM4: the matrix \(D^2 L({\bar{x}})\restriction _{\mathcal {T}_{{\bar{x}}}}\) is nonsingular.

For a nondegenerate M-stationary point we occasionally use an additional condition:

- NDM5: \(\gamma _i\ne 0\) holds for all \(i \in I_0({\bar{x}})\).

With a nondegenerate M-stationary point \({\bar{x}}\) an M-index can be associated. The M-index captures the structure of CCOP locally around \({\bar{x}}\) and defines the type of an M-stationary point, see [10] for details. In particular, nondegenerate minimizers of CCOP are characterized by a vanishing M-index. If the M-index does not vanish, we get all kinds of saddle points.

Definition 4

(M-index, see [10]) Let \({\bar{x}}\) be a nondegenerate M-stationary point of CCOP. The number of negative eigenvalues of the matrix \(D^2\,L({\bar{x}})\restriction _{\mathcal {T}_{{\bar{x}}}}\) is called its quadratic index (QI). The number \(s-\left\| {\bar{x}}\right\| _0\) is called the sparsity index (SI) of \({\bar{x}}\). We define the M-index (MI) as the sum of both, i. e. \(MI=SI+QI\).

Now, we are ready to associate with CCOP the regularized continuous reformulation as suggested in [9]:

where the components of \(c \in \mathbb {R}^n\) are positive and pairwise different, and \(0 < \varepsilon \le \frac{1}{n-s}\). Given a feasible point \(({\bar{x}},{\bar{y}})\) of \(\mathcal {R}\), we define the index sets which correspond to the orthogonality type constraints \(x_i y_i=0\), \(y_i \ge 0\):

The index sets of the active inequality constraints will be denoted by

The regularized continuous reformulation \(\mathcal {R}\) is a special case of MPOC. The latter class was examined in [4], where the MPOC-tailored linear independence constraint qualification and the notion of (nondegenerate) T-stationary points with the corresponding T-index were introduced. It has been shown there that T-stationarity is the topologically relevant stationarity notion for MPOC, again in the sense of Morse theory. We note that the alternative concept of S-stationarity has been defined for the original continuous reformulation (1). It has been shown in [4] that S-stationarity implies T-stationarity for (1), but not vice versa. Both facts motivated us in [9] to apply T-stationarity, rather than S-stationarity, to the regularization \(\mathcal {R}\).

Definition 5

(MPOC-LICQ, [9]) We say that a feasible point \(({\bar{x}},{\bar{y}})\) of \(\mathcal {R}\) satisfies the MPOC-tailored linear independence constraint qualification (MPOC-LICQ) if the following vectors are linearly independent:

Definition 6

(T-stationary point, [9]) A feasible point \(({\bar{x}},{\bar{y}})\) of \(\mathcal {R}\) is called T-stationary if there exist multipliers

such that the following conditions hold:

We define the appropriate Lagrange function:

Moreover, we set for the corresponding tangent space

Definition 7

(Nondegenerate T-stationary point, [9]) A T-stationary point \(({\bar{x}},{\bar{y}})\) of \(\mathcal {R}\) with multipliers \(({\bar{\lambda }}, {\bar{\mu }}, {\bar{\sigma }}, {\bar{\varrho }})\) is called nondegenerate if

- NDT1: MPOC-LICQ holds at \(({\bar{x}},{\bar{y}})\),

- NDT2: \({\bar{\mu }}_{1,q}>0\), \(q \in Q_0\left( {\bar{x}}\right) \), \({\bar{\mu }}_{2,i}>0\), \(i \in \mathcal {E}\left( {\bar{y}}\right) \), and \({\bar{\mu }}_3>0\) if \(\sum \nolimits _{i=1}^{n} {\bar{y}}_i =n-s\),

- NDT3: \({\bar{\varrho }}_{1,i}\ne 0\) and \({\bar{\varrho }}_{2,i}< 0\), \(i\in a_{00}\left( {\bar{x}},{\bar{y}}\right) \),

- NDT4: the matrix \(D^2\,L^{\mathcal {R}}({\bar{x}},{\bar{y}})\restriction _{\mathcal {T}^\mathcal {R}_{({\bar{x}},{\bar{y}})}}\) is nonsingular.

For a nondegenerate T-stationary point we occasionally use additional conditions:

- NDT5: if \(a_{00}\left( {\bar{x}},{\bar{y}}\right) \not = \emptyset \), then \({\bar{\sigma }}_{1,i}\ne 0\) for all \(i\in a_{01}({\bar{x}}, {\bar{y}})\).

- NDT6: \({\bar{\sigma }}_{1,i}\ne 0\), \(i\in a_{01}({\bar{x}}, {\bar{y}})\).

Definition 8

(T-index, [9]) Let \(({\bar{x}},{\bar{y}})\) be a nondegenerate T-stationary point of \(\mathcal {R}\) with unique multipliers \(\left( {\bar{\lambda }},{\bar{\mu }}, {\bar{\sigma }},{\bar{\varrho }}\right) \). The number of negative eigenvalues of the matrix \(D^2\,L^{\mathcal {R}}({\bar{x}},{\bar{y}})\restriction _{\mathcal {T}^\mathcal {R}_{({\bar{x}},{\bar{y}})}}\) is called its quadratic index (QI). The cardinality of \(a_{00}\left( {\bar{x}},{\bar{y}}\right) \) is called the biactive index (BI) of \(({\bar{x}},{\bar{y}})\). We define the T-index (TI) as the sum of both, i.e. \(TI=QI+BI\).

The nondegeneracy conditions NDT1-NDT4 are tailored for \(\mathcal {R}\). Note that NDT2 corresponds to the strict complementarity and NDT4 to the second-order regularity as they are typically defined in the context of nonlinear programming. NDT1 substitutes the usual linear independence constraint qualification. NDT3 is new and says that the multipliers corresponding to biactive orthogonality type constraints must not vanish. With a nondegenerate T-stationary point \(({\bar{x}},{\bar{y}})\) a T-index can be associated. The T-index captures the structure of \(\mathcal {R}\) locally around \(({\bar{x}},{\bar{y}})\) and defines the type of a T-stationary point, see [9] for details. In particular, nondegenerate minimizers of \(\mathcal {R}\) are characterized by a vanishing T-index. If the T-index does not vanish, we get all kinds of saddle points.

The following Lemma 1 provides insights into the structure of the auxiliary y-variables corresponding to a T-stationary point of \(\mathcal {R}\).

Lemma 1

(Auxiliary y-variables in \(\mathcal {R}\), [9]) Let \(({\bar{x}},{\bar{y}})\) be a T-stationary point of \(\mathcal {R}\), then it holds:

(a) the summation inequality constraint is active, i.e. \( \sum \nolimits _{i=1}^{n} {\bar{y}}_i =n - s\),

(b) the index set \(a_{01}({\bar{x}},{\bar{y}})\) consists of exactly \(n-s\) elements,

(c) \(n-s-1\) components of \({\bar{y}}\) are equal to \(1+\varepsilon \), one component is equal to \(1-(n-s-1)\varepsilon \), and s remaining components vanish.
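
The structure described in Lemma 1 can be checked numerically. The following minimal Python sketch builds one vector with the component pattern from (c) and confirms that the summation constraint from (a) is active. The helper name `lemma1_y_pattern` and the placement of the nonzero values in the leading positions are illustrative assumptions and not part of [9]; exact rational arithmetic is used to avoid rounding effects.

```python
from fractions import Fraction


def lemma1_y_pattern(n, s, eps):
    """Return one vector with the component pattern of Lemma 1(c).

    Hypothetical helper: which index carries which value is chosen
    arbitrarily here; the lemma only fixes how often each value occurs.
    """
    eps = Fraction(eps)
    if not 1 <= s <= n - 1:
        raise ValueError("s must lie in {1, ..., n-1}")
    if not 0 < eps <= Fraction(1, n - s):
        raise ValueError("eps must satisfy 0 < eps <= 1/(n-s)")
    upper = [1 + eps] * (n - s - 1)      # n-s-1 components equal to 1 + eps
    middle = [1 - (n - s - 1) * eps]     # one component equal to 1 - (n-s-1) eps
    zeros = [Fraction(0)] * s            # s vanishing components
    return upper + middle + zeros


y = lemma1_y_pattern(n=5, s=2, eps=Fraction(1, 4))
print(sum(y))                        # value of the summation constraint, n - s
print(sum(1 for v in y if v > 0))    # number of positive components, n - s
```

For \(n=2\), \(s=1\) the pattern reduces to one component equal to 1 and one vanishing component, independently of \(\varepsilon \).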

We note that nondegenerate M-stationary points of CCOP naturally correspond to nondegenerate T-stationary points of \(\mathcal {R}\) and vice versa. As shown in [9], also their M- and T-indices coincide. Thus, the regularized continuous reformulation \(\mathcal {R}\) can be likewise studied instead of (1).

Theorem 1

(Stationarity of \(\mathcal {R}\) and CCOP, [9])

(a) If \({\bar{x}}\) is an M-stationary point of CCOP, then there exist at least \(\left( {\begin{array}{c}n-\left\| {\bar{x}}\right\| _0-1\\ n-s-1\end{array}}\right) \) choices of \({\bar{y}}\) such that \(({\bar{x}},{\bar{y}})\) is a T-stationary point of \(\mathcal {R}\). If \({\bar{x}}\) is additionally nondegenerate with M-index m, then all corresponding T-stationary points \(({\bar{x}}, {\bar{y}})\) are also nondegenerate with T-index m. Moreover, their number is exactly \(\left( {\begin{array}{c}n-\left\| {\bar{x}}\right\| _0-1\\ n-s-1\end{array}}\right) \), and NDT5 holds at any of them.

(b) If \(({\bar{x}},{\bar{y}})\) is a T-stationary point of \(\mathcal {R}\), then \({\bar{x}}\) is an M-stationary point of CCOP. If \(({\bar{x}},{\bar{y}})\) is additionally nondegenerate with T-index m and satisfies NDT5, then \({\bar{x}}\) is also nondegenerate with M-index m.
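
To get a feeling for the count in Theorem 1(a), the binomial coefficient can be evaluated directly. The sketch below is only an illustration: the function names `zero_norm` and `count_t_stationary_lifts` are hypothetical, and the code merely evaluates the formula for a given vector without testing M-stationarity or nondegeneracy.

```python
from math import comb


def zero_norm(x):
    """Number of non-zero entries of x, i.e. the zero 'norm' of x."""
    return sum(1 for xi in x if xi != 0)


def count_t_stationary_lifts(x, s):
    """Evaluate binom(n - ||x||_0 - 1, n - s - 1) from Theorem 1(a).

    Hypothetical helper: x is assumed to satisfy the cardinality
    constraint; stationarity of x is NOT checked here.
    """
    n = len(x)
    if not 1 <= s <= n - 1:
        raise ValueError("s must lie in {1, ..., n-1}")
    k = zero_norm(x)
    if k > s:
        raise ValueError("x violates the cardinality constraint")
    return comb(n - k - 1, n - s - 1)


print(count_t_stationary_lifts([0.0, 1.0], s=1))            # n = 2, full sparsity
print(count_t_stationary_lifts([1.0, 0.0, 0.0, 0.0], s=2))  # n = 4, one nonzero entry
```

If \(\left\| {\bar{x}}\right\| _0=s\), the formula always yields exactly one choice of \({\bar{y}}\); more choices appear only when the cardinality constraint is not exhausted.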

3 Scholtes-type regularization

Let us now regularize the orthogonality type constraints in \(\mathcal {R}\) by using Scholtes’ idea, cf. [1]:

where \(t>0\). Note that \(\mathcal {S}\) from above falls into the scope of nonlinear programming. The notation for the sets \(Q_0(x)\) and \(\mathcal {E}(y)\), which were used for \(\mathcal {R}\), will be used here again. Furthermore, we define for a feasible point \(\left( x, y\right) \) of \(\mathcal {S}\) the index set of vanishing y-components as well as the index sets of active relaxed orthogonality type constraints:

We also occasionally use the following index sets:

For the sake of completeness we state the linear independence constraint qualification for the nonlinear programming problem \(\mathcal {S}\).

Definition 9

(LICQ) We say that a feasible point (x, y) of \(\mathcal {S}\) satisfies the linear independence constraint qualification (LICQ) if the following vectors are linearly independent:

Let us relate MPOC-LICQ for \(\mathcal {R}\) with LICQ for \(\mathcal {S}\).

Theorem 2

(MPOC-LICQ vs. LICQ) Let a feasible point \(({\bar{x}}, {\bar{y}})\) of \(\mathcal {R}\) fulfill MPOC-LICQ. Then, LICQ holds at all feasible points (x, y) of \(\mathcal {S}\) for all sufficiently small t, whenever they are sufficiently close to \(({\bar{x}},{\bar{y}})\).

Proof

Assume, to the contrary, that there exists a sequence of feasible points \(\left( x^t,y^t\right) \) of \(\mathcal {S}\) violating LICQ, which converges to \(({\bar{x}}, {\bar{y}})\) for \(t \rightarrow 0\). Additionally, suppose that along some subsequence, which we index by t again, it holds \(\sum \nolimits _{i=1}^{n} y^t_i = n - s\). Then, we have \(\sum \nolimits _{i=1}^{n} {\bar{y}}_i = n - s\). Due to MPOC-LICQ at \(\left( {\bar{x}},{\bar{y}}\right) \) as well as continuity of \(\nabla h\) and \(\nabla g\), we have that for t sufficiently small all multipliers \({\bar{\lambda }}^t,{\bar{\mu }}^t,{\bar{\sigma }}^t,{\bar{\varrho }}^t\) in the following equation vanish:

Moreover, due to the violation of LICQ at \(\left( x^t,y^t\right) \), there exist multipliers \(\lambda ^t,\mu ^t,\eta ^t,\nu ^t\), not all vanishing, with

For t sufficiently small we have \(Q_0\left( x^t\right) \subset Q_0({\bar{x}})\) and \(\mathcal {E}\left( y^t\right) \subset \mathcal {E}\left( {\bar{y}}\right) \). In addition, it holds \(\mathcal {H}\left( x^t,y^t\right) \subset a_{01}({\bar{x}},{\bar{y}}) \cup a_{10}({\bar{x}},{\bar{y}}) \cup a_{00}({\bar{x}},{\bar{y}})\) and \(\mathcal {N}\left( y^t\right) \subset a_{10}({\bar{x}},{\bar{y}}) \cup a_{00}({\bar{x}},{\bar{y}})\). By setting some \(\mu \)-multipliers to be zero if needed, we equivalently obtain:

This, however, implies that not all multipliers in the following equation vanish:

A contradiction to (8) follows by taking into account that \( \mathcal {H}( x^t, y^t) \cap \mathcal {N}( y^t) = \emptyset \). If instead there is no subsequence with \(\sum \limits _{i=1}^{n} y^t_i = n - s\), then we can consider a subsequence with \(\sum \nolimits _{i=1}^{n} y^t_i > n - s\). A similar argument again produces a contradiction to (8). \(\square \)

Next, we give the definitions of a (nondegenerate) Karush–Kuhn–Tucker point of \(\mathcal {S}\) and of its quadratic index, as is by now standard in nonlinear programming, see e.g. [8].

Definition 10

(Karush–Kuhn–Tucker point) A feasible point (x, y) of \(\mathcal {S}\) is called a Karush–Kuhn–Tucker point if there exist multipliers

such that the following conditions hold:

We again define the Lagrange function as

The tangent space is given by

Definition 11

(Nondegenerate Karush–Kuhn–Tucker point) A Karush–Kuhn–Tucker point (x, y) of \(\mathcal {S}\) with multipliers \((\lambda , \mu , \eta , \nu )\) is called nondegenerate if

- ND1: LICQ holds at (x, y),

- ND2: \( \mu _{1,q}>0\), \(q \in Q_0\left( x\right) \), \(\mu _{2,i}>0\), \(i \in \mathcal {E}\left( y\right) \), \(\eta ^\ge _i>0\), \(i \in \mathcal {H}^\ge (x,y)\), \(\eta ^\le _i>0\), \(i \in \mathcal {H}^\le (x,y)\), \(\nu _i >0\), \(i \in \mathcal {N}(y)\), and \( \mu _3>0\) if \(\sum \nolimits _{i=1}^{n} y_i =n-s\),

- ND3: the matrix \(D^2\,L^\mathcal {S}(x,y) \restriction _{\mathcal {T}^\mathcal {S}_{(x,y)}}\) is nonsingular.

Definition 12

(Quadratic index) Let (x, y) be a Karush–Kuhn–Tucker point of \(\mathcal {S}\) with unique multipliers \((\lambda , \mu , \eta , \nu )\). The number of negative eigenvalues of the matrix \(D^2\,L^\mathcal {S}(x,y)\restriction _{\mathcal {T}^\mathcal {S}_{(x,y)}}\) is called its quadratic index (QI).

Note that ND1-ND3 are usual assumptions in nonlinear programming. ND1 refers to the linear independence constraint qualification, ND2 means the strict complementarity, and ND3 describes the second-order regularity. For the index of a nondegenerate Karush–Kuhn–Tucker point just the quadratic part is essential.
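
For readers who want to experiment, the quadratic index can be computed without an eigenvalue solver. The following sketch counts negative eigenvalues of a symmetric matrix through the signs of its elimination pivots, which is justified by Sylvester's law of inertia. It rests on two simplifying assumptions that are not made in the text: the tangent space is a coordinate subspace (given by a list of free indices), and all leading principal minors of the restricted matrix are nonzero. The function names and the sample matrix are hypothetical.

```python
from fractions import Fraction


def negative_eigenvalue_count(matrix):
    """Count negative eigenvalues of a symmetric matrix via LDL^T pivots.

    Assumes every leading principal minor is nonzero, so that symmetric
    Gaussian elimination without pivoting does not break down.
    """
    a = [[Fraction(v) for v in row] for row in matrix]
    n = len(a)
    negatives = 0
    for k in range(n):
        pivot = a[k][k]
        if pivot == 0:
            raise ValueError("zero pivot: assumption on leading minors violated")
        if pivot < 0:
            negatives += 1
        # Form the Schur complement of the pivot in the trailing block.
        for i in range(k + 1, n):
            factor = a[i][k] / pivot
            for j in range(k, n):
                a[i][j] -= factor * a[k][j]
    return negatives


def quadratic_index(hessian, free_indices):
    """Quadratic index of the Hessian restricted to a coordinate subspace."""
    restricted = [[hessian[i][j] for j in free_indices] for i in free_indices]
    return negative_eigenvalue_count(restricted)


# Hypothetical 4x4 Hessian; only the second coordinate (index 1) is free.
sample_hessian = [
    [2, 0, 0, 0],
    [0, -3, 0, 0],
    [0, 0, 0, 0],
    [0, 0, 0, 0],
]
print(quadratic_index(sample_hessian, [1]))
```

For a general tangent space one would first choose a basis matrix V of the tangent space and apply the same count to the matrix V^T D^2 L V.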

Lemma 2 examines the structure of y-components of a Karush–Kuhn–Tucker point of \(\mathcal {S}\).

Lemma 2

(Auxiliary y-variables in \(\mathcal {S}\)) Let (x, y) be a Karush–Kuhn–Tucker point of \(\mathcal {S}\). Then, it holds:

(a) the summation inequality constraint is active, i.e. \(\sum \nolimits _{i=1}^n y_i=n-s\),

(b) the index set \(\mathcal {E}( y) \cup \mathcal {H}(x, y)\) consists of at least \(n-s-1\) elements, and the index set \(\mathcal {N}( y)\) consists of at most s elements. Additionally, there is at most one index that does not belong to any of these sets, i.e. \(\left| \mathcal {O}\left( x, y\right) \right| \le 1\).

Proof

a) Let (x, y) be a Karush–Kuhn–Tucker point of \(\mathcal {S}\) and \(\sum \nolimits _{i=1}^n y_i>n-s\). Then, there exist multipliers \((\lambda , \mu , \eta , \nu )\), such that (9)–(11) are fulfilled. Since \( \mu _3=0\), we have that the \((n+i)\)-th row of (9) reads as

$$\begin{aligned} c_i=\left\{ \begin{array}{ll} - \mu _{2,i},&{}\text{ for } i \in \mathcal {E}( y)\backslash \mathcal {H}( x, y), \\ - \mu _{2,i}+ \eta ^\ge _i x_i, &{}\text{ for } i \in \mathcal {E}( y) \cap \mathcal {H}^\ge ( x, y),\\ - \mu _{2,i}- \eta ^\le _i x_i, &{}\text{ for } i \in \mathcal {E}( y) \cap \mathcal {H}^\le ( x, y),\\ \eta ^\ge _i x_i, &{}\text{ for } i \in \mathcal {H}^\ge ( x, y)\backslash \mathcal {E}( y),\\ - \eta ^\le _i x_i, &{}\text{ for } i \in \mathcal {H}^\le ( x, y)\backslash \mathcal {E}( y),\\ \nu _i,&{}\text{ for } i \in \mathcal {N}( y),\\ 0,&{}\text{ else }. \end{array}\right. \end{aligned}$$

Due to (10), (11), and \(c>0\), it must hold that \(i\in \mathcal {N}( y)\) for all \(i \in \left\{ 1,\ldots ,n\right\} \). This, however, contradicts \(\sum \nolimits _{i=1}^n y_i>n-s\).

b) As in the proof of statement a), we conclude that \( \mu _3>0\) for a Karush–Kuhn–Tucker point (x, y) of \(\mathcal {S}\). Hence, the \((n+i)\)-th row now reads as

$$\begin{aligned} c_i=\left\{ \begin{array}{ll} - \mu _{2,i}+ \mu _3,&{}\text{ for } i \in \mathcal {E}( y)\backslash \mathcal {H}( x, y), \\ - \mu _{2,i}+ \mu _3+ \eta ^\ge _i x_i, &{}\text{ for } i \in \mathcal {E}( y) \cap \mathcal {H}^\ge ( x, y),\\ - \mu _{2,i}+ \mu _3- \eta ^\le _i x_i, &{}\text{ for } i \in \mathcal {E}( y) \cap \mathcal {H}^\le ( x, y),\\ \mu _3+ \eta ^\ge _i x_i, &{}\text{ for } i \in \mathcal {H}^\ge ( x, y)\backslash \mathcal {E}( y),\\ \mu _3- \eta ^\le _i x_i, &{}\text{ for } i \in \mathcal {H}^\le ( x, y)\backslash \mathcal {E}( y),\\ \mu _3+ \nu _i,&{}\text{ for } i \in \mathcal {N}( y),\\ \mu _3,&{}\text{ else }. \end{array}\right. \end{aligned}$$ (12)

It follows from (12) and the components of c being pairwise different that there can be at most one element \({{\bar{i}}} \in \mathcal {O}\left( x, y\right) \). If \(\mathcal {E}( y) \cup \mathcal {H}( x, y)\) consists of fewer than \(n-s-1\) elements, we get:

$$\begin{aligned} \sum \limits _{i=1}^n y_i\le (n-s-2)\cdot (1+\varepsilon )+y_{{\bar{i}}}<(n-s-1)\cdot (1+\varepsilon )<n-s, \end{aligned}$$

a contradiction. Finally, we assume that \(\mathcal {N}( y)\) consists of more than s elements. In this case, there are at most \(n-s-1\) nonvanishing components of y. Consequently,

$$\begin{aligned} \sum \limits _{i=1}^n y_i\le (n-s-1)\cdot (1+\varepsilon )<n-s \end{aligned}$$

provides a contradiction.

\(\square \)
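
The strict inequality \((n-s-1)\cdot (1+\varepsilon )<n-s\), used twice in the proof of Lemma 2, is not spelled out there. A short verification, given here only as a sketch for the reader's convenience, follows from the bound \(0 < \varepsilon \le \frac{1}{n-s}\) imposed in \(\mathcal {R}\):

$$\begin{aligned} (n-s-1)\cdot (1+\varepsilon ) = (n-s)-1+(n-s-1)\,\varepsilon \le (n-s)-1+\frac{n-s-1}{n-s} = (n-s)-\frac{1}{n-s} < n-s. \end{aligned}$$

The first estimate in part b) additionally uses \(y_{{\bar{i}}}<1+\varepsilon \) for \({\bar{i}} \in \mathcal {O}\left( x, y\right) \), under the assumption that \(\mathcal {E}(y)\) collects exactly the indices with \(y_i=1+\varepsilon \).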

We apply the general result on the Scholtes-type regularization of MPOC in our context for the regularized continuous reformulation \(\mathcal {R}\), see [4].

Theorem 3

(Convergence from \(\mathcal {S}\) to \(\mathcal {R}\), cf. [4]) Suppose that a sequence of Karush–Kuhn–Tucker points \((x^{t},y^{t})\) of \(\mathcal {S}\) converges to \(\left( {\bar{x}},{\bar{y}}\right) \) for \(t \rightarrow 0\). If MPOC-LICQ holds at \(\left( {\bar{x}},{\bar{y}}\right) \), then it is a T-stationary point of \(\mathcal {R}\).

From the proof of Theorem 3 in [4] also the convergence of the corresponding multipliers can be deduced.

Remark 1

(Convergence of multipliers) Let \(\left( \lambda ^t,\mu ^t,\eta ^t,\nu ^t\right) \) be the multipliers of the Karush–Kuhn–Tucker points \(\left( x^{t},y^{t}\right) \) of \(\mathcal {S}\) and \(\left( {\bar{\lambda }},{\bar{\mu }},{\bar{\sigma }}, {\bar{\varrho }}\right) \) of the T-stationary point \(\left( {\bar{x}}, {\bar{y}}\right) \) of \(\mathcal {R}\) as in Theorem 3. Due to MPOC-LICQ at \(\left( {\bar{x}}, {\bar{y}}\right) \), we have:

a) \(\lim \limits _{t \rightarrow 0} \lambda ^t={\bar{\lambda }}\), \(\lim \limits _{t \rightarrow 0} \mu ^t={\bar{\mu }}\),

b) \(\lim \limits _{t \rightarrow 0} \left( \eta _{i}^{\ge ,t}-\eta _{i}^{\le ,t}\right) y_{i}^t={\bar{\sigma }}_{1,i}\), \(i \in a_{01}\left( {\bar{x}}, {\bar{y}}\right) \),

c) \(\lim \limits _{t \rightarrow 0} \nu _{i}^{t}+ \left( \eta _{i}^{\ge ,t}-\eta _{i}^{\le ,t}\right) x_{i}^t={\bar{\sigma }}_{2,i}\), \(i \in a_{10}\left( {\bar{x}}, {\bar{y}}\right) \),

d) \(\lim \limits _{t \rightarrow 0} \left( \eta _{i}^{\ge ,t}-\eta _{i}^{\le ,t}\right) y_{i}^t={\bar{\varrho }}_{1,i}\), \(\lim \limits _{t \rightarrow 0} \nu _{i}^{t}+ \left( \eta _{i}^{\ge ,t}-\eta _{i}^{\le ,t}\right) x_{i}^t={\bar{\varrho }}_{2,i}\), \(i \in a_{00}\left( {\bar{x}}, {\bar{y}}\right) \).

The convergence of nondegenerate Karush–Kuhn–Tucker points of \(\mathcal {S}\) does not prevent the limiting T-stationary point of \(\mathcal {R}\) from being degenerate. Example 1 illustrates the failure of NDT2. Examples with the failure of NDT1, NDT3, or NDT4 can be constructed analogously.

Example 1

(Failure of NDT2) We consider the following Scholtes-type regularization \(\mathcal {S}\) with \(n=2\) and \(s=1\):

as well as the point \((x^t,y^t)=(t,1,1,0)\). We claim that this point is a nondegenerate Karush–Kuhn–Tucker point for \(t<\frac{1}{2}-\sqrt{\frac{13}{72}}\). Indeed, it holds:

with the positive multipliers \(\mu _3^t=c_1+2t-2t^2\), \(\eta _1^{\le ,t}=2-2t\), \(\nu _2^t=\frac{5}{36}-2t+2t^2\). The tangent space is \( \mathcal {T}^\mathcal {S}_{(x^t,y^t)} =\left\{ \xi \in \mathbb {R}^{4}\,\left| \,\xi _1=\xi _3=\xi _4=0 \right. \right\} \). The Hessian of the corresponding Lagrange function is

Therefore, it is straightforward that \(D^2\,L^\mathcal {S}(x^t,y^t)\restriction _{\mathcal {T}^{\mathcal {S}}_{(x^t,y^t)}}\) is nonsingular. We conclude that ND1-ND3 are fulfilled at \((x^t,y^t)\). Moreover, \((x^t,y^t)\) converges to \(({\bar{x}}, {\bar{y}})=(0,1,1,0)\) if \(t \rightarrow 0\). This point is T-stationary for the corresponding regularized continuous reformulation \(\mathcal {R}\) according to Theorem 3, since MPOC-LICQ is fulfilled. Indeed, we obtain the T-stationarity condition

with the unique multipliers \({\bar{\mu }}_{1}=0, {\bar{\mu }}_3=c_1, {\bar{\sigma }}_{1,1}=-2, {\bar{\sigma }}_{2,2}=\frac{5}{36}\). However, NDT2 is violated at \(({\bar{x}}, {\bar{y}})\). \(\square \)
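
The bound on t in Example 1 can be double-checked numerically: it is the smaller root of \(2t^2-2t+\frac{5}{36}=0\), i.e. the value at which \(\nu _2^t\) changes sign. The sketch below evaluates the multipliers stated in the example; the function names and the sample value of \(c_1\) are assumptions made only for illustration, and the problem data of the example itself is not reproduced.

```python
from math import sqrt


def example1_multipliers(t, c1):
    """Multipliers (mu_3, eta_1^<=, nu_2) stated in Example 1 at (t, 1, 1, 0)."""
    mu3 = c1 + 2 * t - 2 * t ** 2
    eta1_le = 2 - 2 * t
    nu2 = 5 / 36 - 2 * t + 2 * t ** 2
    return mu3, eta1_le, nu2


def all_positive(t, c1):
    """Check the positivity of the three multipliers (part of ND2)."""
    return all(m > 0 for m in example1_multipliers(t, c1))


# Smaller root of 2 t^2 - 2 t + 5/36 = 0, the bound on t stated in Example 1.
T_MAX = 0.5 - sqrt(13 / 72)

c1 = 1.0  # hypothetical positive value; the example leaves c_1 general
print(T_MAX)
print(all_positive(0.5 * T_MAX, c1))  # True: below the bound
print(all_positive(1.5 * T_MAX, c1))  # False: nu_2 becomes negative

# Row (12) for i = 1, which belongs to H^<= but not to E: c_1 = mu_3 - eta_1^<= * x_1
mu3, eta1_le, nu2 = example1_multipliers(0.05, c1)
print(abs(mu3 - eta1_le * 0.05 - c1) < 1e-12)  # True
```

As \(t \rightarrow 0\), the computed values tend to \(c_1\), 2, and \(\frac{5}{36}\), in line with the limiting multipliers \({\bar{\mu }}_3\), \(-{\bar{\sigma }}_{1,1}\), and \({\bar{\sigma }}_{2,2}\) given in the example.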

Due to Example 1, we cannot expect that a T-stationary point of \(\mathcal {R}\), which is the limit of a sequence of nondegenerate Karush–Kuhn–Tucker points of \(\mathcal {S}\), is also nondegenerate. Instead, we examine its type under the assumption of nondegeneracy. The following Lemma 3 provides some valuable insights into the relations between the active index sets in this setting.

Lemma 3

(Active index sets) Suppose a sequence of Karush–Kuhn–Tucker points \(\left( x^{t},y^{t}\right) \) of \(\mathcal {S}(t)\) converges to \(\left( {\bar{x}},{\bar{y}}\right) \) for \(t \rightarrow 0\). Moreover, let \(({\bar{x}},{\bar{y}})\) be a nondegenerate T-stationary point of \(\mathcal {R}\). Then, for all sufficiently small t it holds:

(a) \(Q_0\left( {\bar{x}}\right) =Q_0\left( x^{t}\right) \),

(b) \(\mathcal {E}\left( {\bar{y}}\right) =\mathcal {E}\left( y^{t}\right) \),

(c) \(a_{00}\left( {\bar{x}}, {\bar{y}}\right) \subset \mathcal {H}\left( x^t,y^t\right) \),

(d) \(\mathcal {N}\left( y^t\right) \subset a_{10}\left( {\bar{x}},{\bar{y}}\right) \subset \mathcal {N}\left( y^t\right) \cup \mathcal {H}\left( x^t,y^t\right) \).

Proof

a) We start by proving \(Q_0\left( {\bar{x}}\right) =Q_0\left( x^{t}\right) \). Due to continuity arguments, we have \(Q_0\left( x^{t}\right) \subset Q_0\left( {\bar{x}}\right) \) for all sufficiently small t. Let us now assume that there exists \({\bar{i}} \in Q_0\left( {\bar{x}}\right) \backslash Q_0\left( x^{t}\right) \) along a subsequence. Hence, for the corresponding multipliers it holds \(\mu _{{\bar{i}}}^t=0\). NDT1 allows us to apply Remark 1, and we thus have \({\bar{\mu }}_{{\bar{i}}}=\lim \limits _{t \rightarrow 0} \mu _{{\bar{i}}}^t=0\), a contradiction to NDT2. Consequently, \(Q_0\left( {\bar{x}}\right) =Q_0\left( x^{t}\right) \) holds for all sufficiently small t.

b) Next, we prove \(\mathcal {E}\left( {\bar{y}}\right) =\mathcal {E}\left( y^{t}\right) \). Again, continuity arguments provide \(\mathcal {E}\left( y^{t}\right) \subset \mathcal {E}\left( {\bar{y}}\right) \) for all sufficiently small t. Similar to the first part of the proof, we now assume there exists \({\bar{i}} \in \mathcal {E}\left( {\bar{y}}\right) \backslash \mathcal {E}\left( y^{t}\right) \) along a subsequence. As we have seen in Lemma 1, T-stationarity of \(({\bar{x}}, {\bar{y}})\) implies in particular \(c_{{\bar{i}}}=-{\bar{\mu }}_{2,{\bar{i}}}+{\bar{\mu }}_{3}\). Moreover, NDT1 and Remark 1 provide \(\lim \limits _{t \rightarrow 0}\mu _{3}^{t}={\bar{\mu }}_{3}\). Since \({\bar{i}} \notin \mathcal {N}\left( y^{t}\right) \), we distinguish the following cases:

(i) \({\bar{i}} \in \mathcal {H}^{\ge }\left( x^{t},y^{t}\right) \backslash \mathcal {E}\left( y^{t}\right) \). Karush–Kuhn–Tucker conditions for \(\left( x^t, y^t\right) \) imply \(c_{{\bar{i}}}= \mu ^t_{3}+ \eta ^{\ge ,t}_{{\bar{i}}}x_{{\bar{i}}}\), cf. (12). It follows \( -{\bar{\mu }}_{2,{\bar{i}}}+{\bar{\mu }}_{3}=\mu ^t_{3}+ \eta ^{\ge ,t}_{{\bar{i}}}x_{{\bar{i}}}\). By taking the limit, we can cancel out \({\bar{\mu }}_{3}\) and \(\mu ^t_{3}\). This leads to a contradiction because the left-hand side of the equation is strictly negative due to NDT2 and the right-hand side is nonnegative since \(\eta ^{\ge ,t}_{{\bar{i}}}\) is nonnegative and \(x_{{\bar{i}}}\) is positive.

(ii) \({\bar{i}} \in \mathcal {H}^{\le }\left( x^{t},y^{t}\right) \backslash \mathcal {E}\left( y^{t}\right) \). By using (12), we get \(c_{{\bar{i}}}= \mu ^t_{3}- \eta ^{\le ,t}_{{\bar{i}}}x_{{\bar{i}}}\). This leads to a contradiction just as in the previous case.

(iii) \({\bar{i}} \in \mathcal {O}\left( x^{t},y^{t}\right) \). Analogously, we obtain \(c_{{\bar{i}}}= \mu ^t_{3}\) from (12). It follows \(-{\bar{\mu }}_{2,{\bar{i}}}+{\bar{\mu }}_{3}=\mu ^t_{3}\). Taking the limits leads to \({\bar{\mu }}_{2,{\bar{i}}}=0\), a contradiction with NDT2.

Altogether, \(\mathcal {E}\left( {\bar{y}}\right) \backslash \mathcal {E}\left( y^{t}\right) =\emptyset \) for all sufficiently small t, and the assertion follows.

c) Clearly, \(a_{00}\left( {\bar{x}}, {\bar{y}}\right) \cap \mathcal {E}(y^t)=\emptyset \) for sufficiently small t.

Let us assume there exists an \({\bar{i}} \in a_{00}({\bar{x}},{\bar{y}})\cap \mathcal {N}\left( y^t\right) \). In view of (12), we have \(c_{{\bar{i}}}= \mu _3^t + \nu _{{\bar{i}}}^t\). Due to the T-stationarity of \(\left( {\bar{x}}, {\bar{y}}\right) \), the \((n+i)\)-th row of (5) reads as

This provides \(c_{{\bar{i}}}= {\bar{\varrho }}_{2,{\bar{i}}}+{\bar{\mu }}_3\). According to Remark 1, we have \(\lim \limits _{t \rightarrow 0}\mu _{3}^{t}={\bar{\mu }}_3\). Consequently, it must hold \( \lim \limits _{t \rightarrow 0} \nu _{{\bar{i}}}^t={\bar{\varrho }}_{2,{\bar{i}}}\). This, however, cannot be true since \(\nu _{{\bar{i}}}^t\ge 0\), while \({\bar{\varrho }}_{2,{\bar{i}}}<0\) due to NDT3 from the nondegeneracy of \(({\bar{x}}, {\bar{y}})\), a contradiction. Let us assume now that there exists an \({\bar{i}} \in a_{00}({\bar{x}},{\bar{y}})\cap \mathcal {O}\left( x^t,y^t\right) \). Analogously, we get \({\bar{\varrho }}_{2,{\bar{i}}}=0\), again a contradiction to NDT3. Overall, we get the assertion.

d) Clearly, \(a_{01}\left( {\bar{x}},{\bar{y}}\right) \cap \mathcal {N}(y^t) =\emptyset \) for sufficiently small t. From c) we also know that \(a_{00}\left( {\bar{x}},{\bar{y}}\right) \cap \mathcal {N}(y^t) =\emptyset \). Altogether, the first inclusion of the assertion follows immediately. Further, it also holds \(a_{10}\left( {\bar{x}}, {\bar{y}}\right) \cap \mathcal {E}(y^t)= \emptyset \) for sufficiently small t. Let us assume there exists an \({\bar{i}} \in a_{10}({\bar{x}},{\bar{y}})\cap \mathcal {O}\left( x^t,y^t\right) \). Due to (12), we have \(c_{{\bar{i}}}=\mu _3^t\). In view of Lemma 1c), there exists an index \({\tilde{i}} \in a_{01}({\bar{x}},{\bar{y}})\backslash \mathcal {E}\left( {\bar{y}}\right) \). Thus, T-stationarity of \(({\bar{x}}, {\bar{y}})\) implies via (13) that \(c_{{\tilde{i}}}={\bar{\mu }}_3\). By taking the limit and Remark 1, we obtain \(c_{{\bar{i}}}=c_{{\tilde{i}}}\), but \({\bar{i}}\not ={\tilde{i}}\), a contradiction to the choice of c. \(\square \)

Theorem 4 highlights the convergence properties of the Scholtes-type regularization method. Its proof can be found in the Appendix below.

Theorem 4

(Convergence from \(\mathcal {S}\) to \(\mathcal {R}\) again) Suppose that a sequence of nondegenerate Karush–Kuhn–Tucker points \((x^{t},y^{t})\) of \(\mathcal {S}\) with quadratic index m converges to \(\left( {\bar{x}},{\bar{y}}\right) \) for \(t \rightarrow 0\). If \(({\bar{x}},{\bar{y}})\) is a nondegenerate T-stationary point of \(\mathcal {R}\), then we have for its T-index:

If additionally NDT6 holds at \(({\bar{x}},{\bar{y}})\), then the indices coincide, i.e. \(TI= m\).

Let us illustrate the necessity of NDT6 for the validity of Theorem 4.

Example 2

(Necessity of NDT6) We consider the following Scholtes-type regularization \(\mathcal {S}\) with \(n=2\), \(s=1\) and \(0< c_1 < c_2\):

as well as the point \(\left( x^t,y^t\right) =(0,1,1,0)\). We claim that this point is a nondegenerate Karush–Kuhn–Tucker point. Indeed, it holds:

with the positive multipliers \(\mu _{1,1}^t=2,\mu _3^t=c_1,\nu _2^t=c_2-c_1\). Obviously, LICQ and strict complementarity, i.e. ND1 and ND2, respectively, are fulfilled. We show that \(D^2\,L^\mathcal {S}(x^t,y^t)\restriction _{\mathcal {T}^{\mathcal {S}}_{(x^t,y^t)}}\) is nonsingular and calculate the number of its negative eigenvalues. The tangent space is \(\mathcal {T}^{\mathcal {S}}_{(x^t,y^t)}=\left\{ \xi \in \mathbb {R}^{4}\,\left| \, \xi _1+\xi _2=0, \xi _3=\xi _4=0 \right. \right\} \). For the Hessian of the corresponding Lagrange function we have:

Thus, for \(\xi \in \mathcal {T}^{\mathcal {S}}_{(x^t,y^t)}\) it holds:

Hence, ND3 is also fulfilled, the Karush–Kuhn–Tucker point \(\left( x^t,y^t\right) \) is nondegenerate and its quadratic index equals one, i.e. \(m=1\) in Theorem 4. The limiting point is \(({\bar{x}},{\bar{y}})=(0,1,1,0)\). This point is T-stationary for the corresponding regularized continuous reformulation \(\mathcal {R}\) according to Theorem 3, since MPOC-LICQ is fulfilled. Indeed, we have:

with the unique multipliers \({\bar{\mu }}_{1,1}=2,{\bar{\mu }}_3=c_1,{\bar{\sigma }}_{1,1}=0,{\bar{\sigma }}_{2,2}=c_2-c_1.\) It is easy to see that this point is nondegenerate with vanishing T-index, i.e. \(TI=0\), since \(a_{00}({\bar{x}},{\bar{y}})=\emptyset \) and \(\mathcal {T}^{\mathcal {R}}_{({\bar{x}},{\bar{y}})}=\{0\}\). Note that additionally \(\left\{ i\in a_{01}\left( {\bar{x}},{\bar{y}}\right) \,\left| \,{\bar{\sigma }}_{1,i}=0\right. \right\} =\{1\}\). Although all assumptions of Theorem 4 are fulfilled, we have here:

In other words, the saddle points of the Scholtes-type regularization \(\mathcal {S}\) approximate a minimizer of the regularized continuous reformulation \(\mathcal {R}\). The reason is that the \(\sigma \)-multipliers corresponding to zero x- and nonzero y-variables vanish. The lower bound given in Theorem 4 is attained. \(\square \)
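The quadratic index used in Example 2 is the number of negative eigenvalues of the Hessian of the Lagrange function restricted to the tangent space. The following Python sketch shows one way to compute such a restricted index. It is only an illustration under assumptions: the matrix `H` is a hypothetical stand-in and not the Hessian of Example 2, the helper names are ours, and the pivot count is valid only if no pivot vanishes. The tangent direction \((1,-1,0,0)\) is the one spanning \(\mathcal {T}^{\mathcal {S}}_{(x^t,y^t)}\) in the example.

```python
def restrict(H, Z):
    """Return the matrix Z^T H Z, where Z is a list of basis vectors of the subspace."""
    HZ = [[sum(H[r][c] * z[c] for c in range(len(z))) for r in range(len(H))] for z in Z]
    return [[sum(zi[r] * hz[r] for r in range(len(zi))) for hz in HZ] for zi in Z]


def count_negative_pivots(A, tol=1e-12):
    """Count negative pivots of symmetric elimination without pivoting.

    By Sylvester's law of inertia this equals the number of negative
    eigenvalues of the symmetric matrix A, provided no pivot vanishes.
    """
    A = [row[:] for row in A]  # work on a copy
    k = len(A)
    negatives = 0
    for j in range(k):
        pivot = A[j][j]
        if abs(pivot) <= tol:
            raise ValueError("zero pivot: this simple sketch does not apply")
        if pivot < 0:
            negatives += 1
        for r in range(j + 1, k):
            factor = A[r][j] / pivot
            for c in range(j, k):
                A[r][c] -= factor * A[j][c]
    return negatives


def quadratic_index(H, Z):
    """Number of negative eigenvalues of H restricted to span(Z)."""
    return count_negative_pivots(restrict(H, Z))


# Hypothetical symmetric matrix, NOT the Hessian of Example 2.
H = [[1.0, 2.0, 0.0, 0.0],
     [2.0, 1.0, 0.0, 0.0],
     [0.0, 0.0, 1.0, 0.0],
     [0.0, 0.0, 0.0, 1.0]]
tangent_basis = [[1.0, -1.0, 0.0, 0.0]]  # xi_1 + xi_2 = 0, xi_3 = xi_4 = 0
print(quadratic_index(H, tangent_basis))
```

For this hypothetical `H` the restricted form equals \(-2\) on the tangent direction, so the restricted index is one, which mirrors the situation \(m=1\) described in the example.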

Next, we point out that the assumption NDT6 is not restrictive at all.

Remark 2

(Genericity for NDT6) Let us briefly sketch why condition NDT6 must be generically fulfilled at the T-stationary points of \(\mathcal {R}\). First, we note that all T-stationary points of \(\mathcal {R}\) are generically nondegenerate, see [9]. Now, let us count the losses of freedom induced by the definition of a T-stationary point. For feasibility we have \(\left| P\right| \) equality constraints, \(\left| Q_0\right| \) active inequality constraints, \(\left| \mathcal {E}\right| \) bounding constraints on the y-variables, potentially one summation constraint, and \(\left| a_{01}\right| +\left| a_{10}\right| +2\left| a_{00}\right| \) orthogonality type constraints. Additional losses of freedom come from the T-stationarity condition. They amount to \(2n-\left| P\right| -\left| Q_0\right| -\left| \mathcal {E}\right| -1-\left| a_{01}\right| -\left| a_{10}\right| -2\left| a_{00}\right| \) if the summation constraint is active, and to \(2n-\left| P\right| -\left| Q_0\right| -\left| \mathcal {E}\right| -\left| a_{01}\right| -\left| a_{10}\right| -2\left| a_{00}\right| \) otherwise. In both cases, the losses of freedom are equal to the number of variables 2n. The violation of NDT6 would produce an additional loss of freedom, which would imply that the total available degrees of freedom 2n are exceeded. By virtue of the structured jet transversality theorem from [12], this cannot happen generically. \(\square \)
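The count sketched in Remark 2 can be written out as a short balance. Here \(\delta \in \{0,1\}\) is our own shorthand, not used in the source, indicating whether the summation constraint is active, and \(F\) abbreviates the losses of freedom due to feasibility:

$$\begin{aligned} F= & {} \left| P\right| +\left| Q_0\right| +\left| \mathcal {E}\right| +\delta +\left| a_{01}\right| +\left| a_{10}\right| +2\left| a_{00}\right| , \\ \underbrace{F}_{\text {feasibility}}+\underbrace{\left( 2n-F\right) }_{\text {T-stationarity}}= & {} 2n. \end{aligned}$$

Under this reading, a violation of NDT6 would contribute at least one further independent condition, i.e. at least \(2n+1\) losses of freedom for only \(2n\) variables, which is the situation excluded generically.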

Let us examine the set of multipliers from Theorem 4 in terms of CCOP.

Lemma 4

Let \({\bar{x}}\) be a nondegenerate M-stationary point of CCOP. Then, for any \({\bar{y}}\) such that \(({\bar{x}},{\bar{y}})\) is a T-stationary point of \(\mathcal {R}\) we have

Proof

We refer to the proof of Theorem 3.7 from [9]. There, it was shown how any T-stationary point \(({\bar{x}},{\bar{y}})\) of \(\mathcal {R}\) can be constructed by means of a nondegenerate M-stationary point \({\bar{x}}\) of CCOP. Specifically, the corresponding multipliers were set as

We conclude

Let us assume that \(\left\| {\bar{x}}\right\| _0<s\) additionally holds. Hence, by virtue of NDM3 we have

By recalling \(a_{01}\left( {\bar{x}},{\bar{y}}\right) \subset I_0\left( {\bar{x}}\right) \), the assertion follows.

Suppose now \(\left\| {\bar{x}}\right\| _0=s\) instead. Due to Lemma 1b), we have

Since \(I_0\left( {\bar{x}}\right) =a_{00}\left( {\bar{x}},{\bar{y}}\right) \cup a_{01}\left( {\bar{x}},{\bar{y}}\right) \), we conclude \(a_{00}\left( {\bar{x}},{\bar{y}}\right) =\emptyset \). Thus, \(I_0\left( {\bar{x}}\right) =a_{01}\left( {\bar{x}},{\bar{y}}\right) \) and the assertion follows. \(\square \)

In view of Theorem 1 we get the following convergence properties of the proposed Scholtes-type regularization with respect to the underlying CCOP.

Corollary 1

(Convergence from \(\mathcal {S}\) to CCOP)

(a) Suppose that a sequence of Karush–Kuhn–Tucker points \((x^{t},y^{t})\) of \(\mathcal {S}\) converges to \(\left( {\bar{x}},{\bar{y}}\right) \) for \(t \rightarrow 0\). If CC-LICQ holds at \({\bar{x}}\), then \({\bar{x}}\) is an M-stationary point of CCOP.

(b) Suppose that a sequence of nondegenerate Karush–Kuhn–Tucker points \((x^{t},y^{t})\) of \(\mathcal {S}\) with quadratic index m converges to \(\left( {\bar{x}},{\bar{y}}\right) \) for \(t \rightarrow 0\). If \({\bar{x}}\) is a nondegenerate M-stationary point of CCOP, then we have for its M-index MI:

$$\begin{aligned} \max \left\{ m - \left| \left\{ i\in I_{0}\left( {\bar{x}}\right) \,\left| \,{\bar{\gamma }}_{i}=0\right. \right\} \right| , 0\right\} \le MI \le m. \end{aligned}$$

If additionally NDM5 holds, then the indices coincide, i.e. \(MI=m\).

Proof

a) Due to continuity arguments, \(({\bar{x}},{\bar{y}})\) is feasible for \(\mathcal {R}\). Let us show that the latter implies feasibility of \({\bar{x}}\) for CCOP. For this purpose, we assume to the contrary that \(\left\| {\bar{x}}\right\| _0>s\). Consequently, we have

$$\begin{aligned} n-s>\left| I_0({\bar{x}})\right| \ge \left| a_{01}({\bar{x}},{\bar{y}})\right| . \end{aligned}$$

Thus, it holds for \(({\bar{x}},{\bar{y}})\):

$$\begin{aligned} \sum \limits _{i=1}^n{\bar{y}}_i=\sum \limits _{i \in a_{01}({\bar{x}},{\bar{y}})}{\bar{y}}_i\le (n-s-1)(1+\varepsilon )< n-s, \end{aligned}$$

a contradiction to its feasibility. Overall, \({\bar{x}}\) has to be feasible for CCOP and, thus, we can apply Proposition 3.2a) from [9]. The latter states that if \({\bar{x}}\) is feasible for CCOP and satisfies CC-LICQ, then MPOC-LICQ holds at any \(({\bar{x}}, y)\) that is feasible for \(\mathcal {R}\). Hence, in view of Theorem 3, \(({\bar{x}}, {\bar{y}})\) is a T-stationary point of \(\mathcal {R}\). Therefore, \({\bar{x}}\) is M-stationary, due to Theorem 1b).

b) We deduce as above that \(({\bar{x}}, {\bar{y}})\) is a T-stationary point of \(\mathcal {R}\). Using Theorem 1, we have that \(({\bar{x}},{\bar{y}})\) is nondegenerate fulfilling NDT5. According to Theorem 4, for its T-index TI it holds

$$\begin{aligned} \max \left\{ m - \left| \left\{ i\in a_{01}\left( {\bar{x}},{\bar{y}}\right) \,\left| \,{\bar{\sigma }}_{1,i}=0\right. \right\} \right| , 0\right\} \le TI \le m. \end{aligned}$$

However, we again use Theorem 1 to conclude \(TI=MI\). In view of Lemma 4, the assertion follows.

\(\square \)
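The counting argument in part a) of the proof can be checked numerically on a small instance. The Python sketch below is only an illustration. It assumes, as the displayed inequality suggests, that feasibility for \(\mathcal {R}\) requires \(x_iy_i=0\), \(y_i\le 1+\varepsilon \), and \(\sum _i y_i\ge n-s\); the function names and the test instance are hypothetical. Note that the final strict inequality \((n-s-1)(1+\varepsilon )<n-s\) needs \((n-s-1)\varepsilon <1\), which holds for the values chosen here.

```python
def zero_norm(x):
    """Number of nonzero entries of x, i.e. the zero "norm" of x."""
    return sum(1 for xi in x if xi != 0)


def y_sum_upper_bound(x, eps):
    """Upper bound on sum(y) under the assumed constraints x_i * y_i = 0 and y_i <= 1 + eps.

    Orthogonality forces y_i = 0 wherever x_i != 0, so only the zero
    positions of x can contribute, each with at most 1 + eps.
    """
    zero_positions = len(x) - zero_norm(x)
    return zero_positions * (1.0 + eps)


def violates_summation(x, s, eps):
    """True if no admissible y can reach sum(y) >= n - s for this x (assumed summation constraint)."""
    n = len(x)
    return y_sum_upper_bound(x, eps) < n - s


# Hypothetical instance: n = 4, s = 1, and x has two nonzero entries, so ||x||_0 > s.
x = [1.0, 2.0, 0.0, 0.0]
s, eps = 1, 0.25  # (n - s - 1) * eps = 0.5 < 1
print(zero_norm(x), y_sum_upper_bound(x, eps), violates_summation(x, s, eps))
# prints: 2 2.5 True
```

In this instance the sum of the y-variables cannot exceed 2.5, while feasibility would require at least 3, which is the contradiction used in the proof.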

Let us briefly comment on condition NDM5. It ensures that M-stationary points have the same index as the approximating Karush–Kuhn–Tucker points of \(\mathcal {S}\).

Remark 3

(On condition NDM5) It follows from Lemma 4 that for a nondegenerate M-stationary point \({\bar{x}}\) of CCOP the following statements are equivalent:

a) NDM5 holds at \({\bar{x}}\),

b) NDT6 holds at a T-stationary point \(({\bar{x}},{\bar{y}})\) of \(\mathcal {R}\),

c) NDT6 holds at all T-stationary points \(({\bar{x}},{\bar{y}})\) of \(\mathcal {R}\).

Further, due to Theorem 1a), all M-stationary points are induced by at least one T-stationary point. Theorem 1b), Remark 2, and the equivalence above provide that all M-stationary points generically fulfill NDM5. As a consequence, we conclude that generically the bounds given in Corollary 1 are tight, i.e. \(MI=m\). The latter holds in particular for \(\Vert {\bar{x}}\Vert _0 <s\) regardless of NDM5, since NDM3 suffices. \(\square \)

Now, we prove that the Scholtes-type regularization method is well-defined. For the proof see again the Appendix below.

Theorem 5

(Well-posedness of \(\mathcal {S}\) from \(\mathcal {R}\)) Let \(({\bar{x}}, {\bar{y}})\) be a nondegenerate T-stationary point of \(\mathcal {R}\) with T-index m that additionally fulfills NDT6. Then, for all sufficiently small t there exists a nondegenerate Karush–Kuhn–Tucker point \((x^t,y^t)\) of \(\mathcal {S}\) within a neighborhood of \(({\bar{x}}, {\bar{y}})\), which has the same quadratic index m. Moreover, for any fixed t sufficiently small, such \((x^t,y^t)\) is the unique Karush–Kuhn–Tucker point of \(\mathcal {S}\) in a sufficiently small neighborhood of \(({\bar{x}}, {\bar{y}})\).

Again, we state the result analogous to Theorem 5 in terms of CCOP.

Corollary 2

(Well-posedness of \(\mathcal {S}\) from CCOP) Let \({\bar{x}}\) be a nondegenerate M-stationary point of CCOP with M-index m that additionally fulfills NDM5. Then, for all sufficiently small t there exists a nondegenerate Karush–Kuhn–Tucker point \((x^t,y^t)\) of \(\mathcal {S}\) with \(x^t\) being within a neighborhood of \({\bar{x}}\), which has the same quadratic index m.

Proof

Due to Theorem 1a), there exists at least one nondegenerate T-stationary point \(({\bar{x}},{\bar{y}})\) of \(\mathcal {R}\). Moreover, Lemma 4 provides that it also fulfills NDT6. Thus, the assertion follows directly from Theorem 5. \(\square \)

Let us compare our results with those for the initially proposed continuous reformulation (1) and the Scholtes-type relaxation (2) from [5] and [6], respectively. There, the concept of S-stationarity for (1) becomes crucial.

Definition 13

(S-stationary, [5]) A feasible point \(({\bar{x}},{\bar{y}})\) of (1) is called S-stationary if there exist multipliers

such that the following conditions hold:

Example 3

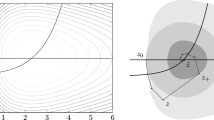

We consider the following CCOP with \(n=3\) and \(s=1\):

It has minimizers at (1, 0, 0), (0, 1, 0), and (0, 0, 1) as well as a saddle point at (0, 0, 0). It is straightforward to check that all these points are nondegenerate M-stationary points, which additionally fulfill NDM5. For its continuous reformulation (1) we have

We get as its S-stationary points:

and

Hence, we have a continuum of saddle points. Moreover, it was shown in [4] that all S-stationary points of reformulation (1) are degenerate T-stationary points, i.e. violating at least one of the conditions NDT1-NDT4. Further, we turn our attention to the Scholtes-type regularization (2)

For t sufficiently small its Karush–Kuhn–Tucker points include

where

For every minimizer of the underlying CCOP there exist at least two sequences of Karush–Kuhn–Tucker points of the Scholtes-type regularization (2), which approximate the corresponding S-stationary points of the continuous reformulation (1), i.e.

For the saddle point of CCOP there exist at least four sequences of Karush–Kuhn–Tucker points of the Scholtes-type regularization (2), which approximate the corresponding S-stationary points of the continuous reformulation (1), i.e.

We list M-, S-stationary, and Karush–Kuhn–Tucker points in Table 1.

Let us apply our results to the given CCOP. In view of Theorem 1a), we know that the regularized continuous reformulation \(\mathcal {R}\) has in total five T-stationary points, all of them being nondegenerate. Three of them are minimizers and two of them are saddle points of \(\mathcal {R}\). Also, we know from Remark 3 that all of them fulfill NDT6. Due to Theorem 3, any convergent sequence of Karush–Kuhn–Tucker points of the Scholtes-type regularization \(\mathcal {S}\) converges to one of these T-stationary points of \(\mathcal {R}\). We apply Theorem 5 to conclude that for any fixed t sufficiently small there are exactly five Karush–Kuhn–Tucker points of \(\mathcal {S}\). All of them are nondegenerate. Three of them are minimizers and two of them are saddle points of \(\mathcal {S}\). Overall, not only the global structure of \(\mathcal {R}\) is more accessible than that of (1), but also the global structure of \(\mathcal {S}\) is more accessible than that of (2). This shows the advantage of our approach in comparison to the existing literature, at least for the presented example. \(\square \)

4 Conclusions

In [9], the number of saddle points for the regularized continuous reformulation of CCOP has been estimated. Namely, each saddle point of CCOP generates exponentially many saddle points of \(\mathcal {R}\), all of them having the same index. It has been concluded there that the introduction of auxiliary y-variables shifts the complexity of dealing with the cardinality constraint in CCOP into the appearance of multiple saddle points for its continuous reformulation. From our extended convergence analysis of the Scholtes-type regularization it follows that the number of its saddle points also grows exponentially as compared to that of CCOP. We emphasize that this issue is at the core of the numerical difficulties encountered when solving CCOP to global optimality by means of the Scholtes-type regularization method. To the best of our knowledge, this is the first paper studying convergence properties of the Scholtes-type regularization method in the vicinity of saddle points, rather than of minimizers. The ideas from our analysis can potentially be applied not only to other classes of nonsmooth optimization problems, such as MPCC, MPVC, MPSC, and MPOC, but also to other regularization schemes known from the literature.

References

[1] Scholtes, S.: Convergence properties of a regularization scheme for mathematical programs with complementarity constraints. SIAM J. Optim. 11, 918–936 (2001)

[2] Izmailov, A.F., Solodov, M.V.: Mathematical programs with vanishing constraints: Optimality conditions, sensitivity, and a relaxation method. J. Optim. Theory Appl. 142, 501–532 (2009)

[3] Kanzow, C., Mehlitz, P., Steck, D.: Relaxation schemes for mathematical programmes with switching constraints. Optim. Methods Softw. 36, 1223–1258 (2021)

[4] Lämmel, S., Shikhman, V.: Optimality conditions for mathematical programs with orthogonality type constraints. Set-Valued Variat. Anal. 31(9) (2023)

[5] Burdakov, O.P., Kanzow, C., Schwartz, A.: Mathematical programs with cardinality constraints: reformulation by complementarity-type conditions and a regularization method. SIAM J. Optim. 26, 397–425 (2016)

[6] Bucher, M., Schwartz, A.: Second-order optimality conditions and improved convergence results for regularization methods for cardinality-constrained optimization problems. J. Optim. Theory Appl. 178, 383–410 (2018)

[7] Branda, M., Bucher, M., Červinka, M., Schwartz, A.: Convergence of a Scholtes-type regularization method for cardinality-constrained optimization problems with an application in sparse robust portfolio optimization. Comput. Optim. Appl. 70, 503–530 (2018)

[8] Jongen, H.T., Jonker, P., Twilt, F.: Nonlinear Optimization in Finite Dimensions. Kluwer Academic Publishers, Dordrecht (2000)

[9] Lämmel, S., Shikhman, V.: Global aspects of the continuous reformulation for cardinality-constrained optimization problems. Optimization (2023). https://doi.org/10.1080/02331934.2023.2249014. (Online first)

[10] Lämmel, S., Shikhman, V.: Cardinality-constrained optimization problems in general position and beyond. Pure Appl. Funct. Anal. 8(4), 1107–1133 (2023)

[11] Červinka, M., Kanzow, C., Schwartz, A.: Constraint qualifications and optimality conditions for optimization problems with cardinality constraints. Math. Program. 160, 353–377 (2016)

[12] Günzel, H.: The structured jet transversality theorem. Optimization 57, 159–164 (2008)

[13] Jongen, H.T., Meer, K., Triesch, E.: Optimization Theory. Kluwer Academic Publishers, Dordrecht (2004)

Acknowledgements

The authors would like to thank the anonymous referees for suggesting valuable improvements of the paper.

Funding

Open Access funding enabled and organized by Projekt DEAL.

Ethics declarations

Conflict of interest

The authors have no relevant financial or non-financial interests to disclose.

Appendix

Proof of Theorem 4

The proof is divided into four major steps.

Step 1a.

We rewrite the tangent space corresponding to the Karush–Kuhn–Tucker point \(\left( x^t,y^t\right) \). For that, we use Lemma 2a) which provides that the summation constraint is active:

$$\begin{aligned} \mathcal {T}^\mathcal {S}_{\left( x^t,y^t\right) }=\left\{ \xi \in \mathbb {R}^{2n}\,\left| \, \begin{array}{l} \begin{pmatrix} Dh_p( x^t),0\end{pmatrix} \xi =0, p \in P, \begin{pmatrix} Dg_q( x^t),0\end{pmatrix}\xi =0,q \in Q_0( x^t),\\ \begin{pmatrix} 0,e \end{pmatrix}\xi =0, \begin{pmatrix} 0,e_i \end{pmatrix}\xi =0, i\in \mathcal {E}(y^t),\\ \begin{pmatrix} y_i^te_i,x_i^te_i \end{pmatrix}\xi =0, i \in \mathcal {H}\left( x^t,y^t\right) \cap a_{00}\left( {\bar{x}}, {\bar{y}}\right) ,\\ \begin{pmatrix} y_i^te_i,x_i^te_i \end{pmatrix}\xi =0, i \in \mathcal {H}\left( x^t,y^t\right) \cap a_{01}\left( {\bar{x}}, {\bar{y}}\right) ,\\ \begin{pmatrix} y_i^te_i,x_i^te_i \end{pmatrix}\xi =0, i \in \mathcal {H}\left( x^t,y^t\right) \cap a_{10}\left( {\bar{x}}, {\bar{y}}\right) ,\\ \begin{pmatrix} 0,e_i \end{pmatrix}\xi =0, i \in \mathcal {N}\left( y^t\right) \end{array} \right. \right\} . \end{aligned}$$In total there are, due to LICQ,

$$\begin{aligned} \alpha ^\mathcal {S}_t= & {} \left| P\right| +\left| Q_0\left( x^t\right) \right| +1+ \left| \mathcal {E}\left( y^t\right) \right| + \left| \mathcal {H}\left( x^t,y^t\right) \cap a_{00}\left( {\bar{x}},{\bar{y}}\right) \right| \\{} & {} +\left| \mathcal {H}\left( x^t,y^t\right) \cap a_{01}\left( {\bar{x}},{\bar{y}}\right) \right| +\left| \mathcal {H}\left( x^t,y^t\right) \cap a_{10}\left( {\bar{x}},{\bar{y}}\right) \right| +\left| \mathcal {N}\left( y^t\right) \right| \end{aligned}$$linearly independent vectors involved. We use Lemma 3a) and 3b) to substitute \(\left| Q_0\left( x^t\right) \right| \) with \(\left| Q_0\left( {\bar{x}}\right) \right| \) and \(\left| \mathcal {E}\left( y^t\right) \right| \) with \(\left| \mathcal {E}\left( {\bar{y}}\right) \right| \), respectively. The latter set has cardinality of \(n-s-1\) due to Lemma 1c). Additionally, we use Lemma 3c) and 3d) to conclude:

$$\begin{aligned} \alpha ^\mathcal {S}_{t}= & {} \left| P\right| +\left| Q_0\left( {\bar{x}}\right) \right| +1+ n-s-1 +\left| a_{00}\left( {\bar{x}},{\bar{y}}\right) \right| \\{} & {} +\left| \mathcal {H}\left( x^t,y^t\right) \cap a_{01}\left( {\bar{x}},{\bar{y}}\right) \right| +\left| a_{10}\left( {\bar{x}},{\bar{y}}\right) \right| . \end{aligned}$$Finally, \(\left| a_{00}\left( {\bar{x}},{\bar{y}}\right) \right| +\left| a_{10}\left( {\bar{x}},{\bar{y}}\right) \right| =s\), cf. Lemma 1b). Thus, we have:

$$\begin{aligned} \alpha ^\mathcal {S}_{t}= & {} \left| P\right| +\left| Q_0\left( {\bar{x}}\right) \right| +\left| \mathcal {H}\left( x^t,y^t\right) \cap a_{01}\left( {\bar{x}},{\bar{y}}\right) \right| +n. \end{aligned}$$

Step 1b.

We examine the tangent space corresponding to the T-stationary point \(({\bar{x}},{\bar{y}})\). For this purpose, we consider the following vectors from its definition:

$$\begin{aligned} \begin{pmatrix} 0\\ e_i \end{pmatrix},\,i\in \mathcal {E}({\bar{y}}),\quad \begin{pmatrix} 0\\ e_i \end{pmatrix},\,i \in a_{10}\left( {\bar{x}}, {\bar{y}}\right) \cup a_{00}\left( {\bar{x}}, {\bar{y}}\right) ,\quad \begin{pmatrix} 0\\ e \end{pmatrix}. \end{aligned}$$The latter vector is involved due to Lemma 1a). The number of these vectors is due to Lemma 1c) equal to \((n-s-1)+ s+1=n\). Moreover, they are linearly independent due to MPOC-LICQ. Hence, we can write the respective tangent space as

$$\begin{aligned} \mathcal {T}^\mathcal {R}_{({\bar{x}},{\bar{y}})}=\left\{ \xi \in \mathbb {R}^{2n}\,\left| \, \begin{array}{l} \begin{pmatrix} Dh_p({\bar{x}}), 0\end{pmatrix} \xi =0, p \in P, \begin{pmatrix} Dg_q({\bar{x}}), 0\end{pmatrix}\xi =0,q \in Q_0({\bar{x}}),\\ \begin{pmatrix} e_i,0 \end{pmatrix}\xi =0, i \in a_{00}({\bar{x}},{\bar{y}}) \cup a_{01}({\bar{x}},{\bar{y}}), \xi _{n+1}=\ldots =\xi _{2n}=0 \end{array} \right. \right\} . \end{aligned}$$In total there are, due to MPOC-LICQ,

$$\begin{aligned} \alpha ^\mathcal {R}=\left| P\right| +\left| Q_0\left( {\bar{x}}\right) \right| +\left| a_{00}({\bar{x}},{\bar{y}})\right| +\left| a_{01}({\bar{x}},{\bar{y}})\right| +n \end{aligned}$$

linearly independent vectors involved.
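For later use in Step 3, it may help to record the difference of the two counts. Subtracting the final expression for \(\alpha ^\mathcal {S}_t\) from Step 1a from the expression for \(\alpha ^\mathcal {R}\) above gives, as a direct consequence of these two formulas:

$$\begin{aligned} \alpha ^\mathcal {R}-\alpha ^\mathcal {S}_t= & {} \left( \left| P\right| +\left| Q_0\left( {\bar{x}}\right) \right| +\left| a_{00}({\bar{x}},{\bar{y}})\right| +\left| a_{01}({\bar{x}},{\bar{y}})\right| +n\right) \\{} & {} -\left( \left| P\right| +\left| Q_0\left( {\bar{x}}\right) \right| +\left| \mathcal {H}\left( x^t,y^t\right) \cap a_{01}\left( {\bar{x}},{\bar{y}}\right) \right| +n\right) \\= & {} \left| a_{00}({\bar{x}},{\bar{y}})\right| +\left| a_{01}({\bar{x}},{\bar{y}})\right| -\left| \mathcal {H}\left( x^t,y^t\right) \cap a_{01}\left( {\bar{x}},{\bar{y}}\right) \right| . \end{aligned}$$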

Step 2.

Let \(\mathcal {T}\subset \mathbb {R}^{2n}\) be a linear subspace. We denote the number of negative eigenvalues of \(D^2\,L^\mathcal {S}\left( x^t, y^t\right) \restriction _{\mathcal {T}}\) by \(QI^\mathcal {S}_{t,\mathcal {T}}\). Analogously, \(QI^\mathcal {R}_{t,\mathcal {T}}\) stands for the number of negative eigenvalues of \(D^2\,L^\mathcal {R}\left( x^t, y^t\right) \restriction _{\mathcal {T}}\) and \(\overline{QI}^\mathcal {R}_{\mathcal {T}}\) stands for the number of negative eigenvalues of \(D^2\,L^\mathcal {R}\left( {\bar{x}}, {\bar{y}} \right) \restriction _{\mathcal {T}}\). We have the following relation between the involved Hessians of the Lagrange functions by denoting \(E(i)=e_ie_{n+i}^T+e_{n+i}e_i^T\), \(i =1, \ldots , n\):

$$\begin{aligned} D^2 L^\mathcal {S}\left( x^t, y^t\right)= & {} D^2 L^\mathcal {R}\left( x^t, y^t\right) - \sum \limits _{i\in \mathcal {H}^\ge \left( x^t,y^t\right) } \eta _i^{\ge ,t} E(i) + \sum \limits _{i\in \mathcal {H}^\le \left( x^t,y^t\right) } \eta _i^{\le ,t} E(i). \end{aligned} \tag{14}$$

Step 2a.

It holds for t sufficiently small:

$$\begin{aligned} QI^\mathcal {S}_{t,\mathcal {T}^\mathcal {R}_{\left( {\bar{x}},{\bar{y}}\right) }}=\overline{QI}^\mathcal {R}_{\mathcal {T}^\mathcal {R}_{\left( {\bar{x}},{\bar{y}}\right) }}. \end{aligned}$$

Indeed, by using (14), we derive for any \(\xi \in \mathcal {T}^\mathcal {R}_{\left( {\bar{x}},{\bar{y}}\right) }\):

$$\begin{aligned} \xi ^T D^2 L^\mathcal {S}\left( x^t, y^t\right) \xi= & {} \xi ^T D^2 L^\mathcal {R}\left( x^t, y^t\right) \xi - \sum \limits _{i\in \mathcal {H}^\ge \left( x^t,y^t\right) } 2\eta _i^{\ge ,t} \xi _i \xi _{n+i} \\{} & {} + \sum \limits _{i\in \mathcal {H}^\le \left( x^t,y^t\right) } 2\eta _i^{\le ,t} \xi _i \xi _{n+i}= \xi ^T D^2 L^\mathcal {R}\left( x^t, y^t\right) \xi , \end{aligned} \tag{15}$$

since \(\xi _{n+1}=\ldots =\xi _{2n}=0\) as seen in Step 1b. Hence, we get \( QI^\mathcal {S}_{t,\mathcal {T}^\mathcal {R}_{\left( {\bar{x}},{\bar{y}}\right) }}=QI^\mathcal {R}_{t,\mathcal {T}^\mathcal {R}_{\left( {\bar{x}},{\bar{y}}\right) }}\). Due to NDT4, continuity arguments provide \( QI^\mathcal {R}_{t,\mathcal {T}^\mathcal {R}_{\left( {\bar{x}},{\bar{y}}\right) }}=\overline{QI}^\mathcal {R}_{\mathcal {T}^\mathcal {R}_{\left( {\bar{x}},{\bar{y}}\right) }}\).
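The identity behind (15) is that \(\xi ^T E(i)\,\xi =2\xi _i\xi _{n+i}\) for \(E(i)=e_ie_{n+i}^T+e_{n+i}e_i^T\), so that every correction term vanishes once the y-part of \(\xi \) is zero. The following Python sketch checks this on a small example; the dimension \(n=2\), the test vectors, and the helper names are hypothetical choices made only for illustration.

```python
def E(i, n):
    """Return E(i) = e_i e_{n+i}^T + e_{n+i} e_i^T as a 2n x 2n nested list (i is 1-based)."""
    size = 2 * n
    m = [[0.0] * size for _ in range(size)]
    m[i - 1][n + i - 1] = 1.0
    m[n + i - 1][i - 1] = 1.0
    return m


def quad_form(m, xi):
    """Evaluate the quadratic form xi^T m xi."""
    return sum(xi[r] * m[r][c] * xi[c] for r in range(len(xi)) for c in range(len(xi)))


n = 2
xi_general = [1.0, 2.0, 3.0, 4.0]  # arbitrary vector in R^{2n}
xi_tangent = [1.0, 2.0, 0.0, 0.0]  # y-part zero, as for vectors in T^R
for i in range(1, n + 1):
    # xi^T E(i) xi equals 2 * xi_i * xi_{n+i} ...
    assert quad_form(E(i, n), xi_general) == 2 * xi_general[i - 1] * xi_general[n + i - 1]
    # ... and therefore vanishes whenever xi_{n+1} = ... = xi_{2n} = 0.
    assert quad_form(E(i, n), xi_tangent) == 0.0
```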

Step 2b.

We claim that the numbers of positive and negative eigenvalues of \(D^2\,L^\mathcal {S}\left( x^t, y^t\right) \restriction _{\mathcal {T}^\mathcal {R}_{\left( {\bar{x}},{\bar{y}}\right) }}\) and of \(D^2\,L^\mathcal {S}\left( x^t, y^t\right) \restriction _{\mathcal {T}^\prime }\), respectively, coincide, where

$$\begin{aligned} \mathcal {T}^{\prime } = \left\{ \xi \in \mathbb {R}^{2n}\,\left| \, \begin{array}{l} \begin{pmatrix} Dh_p(x^t),0\end{pmatrix} \xi =0, p \in P, \begin{pmatrix} Dg_q(x^t),0\end{pmatrix}\xi =0,q \in Q_0({\bar{x}}),\\ \begin{pmatrix} 0,e \end{pmatrix}\xi =0, \begin{pmatrix} 0,e_i \end{pmatrix}\xi =0, i\in \mathcal {E}({\bar{y}}),\\ \begin{pmatrix} e_i,0 \end{pmatrix}\xi =0, i \in a_{00}({\bar{x}},{\bar{y}}), \begin{pmatrix} 0,e_i \end{pmatrix}\xi =0, i \in a_{00}({\bar{x}},{\bar{y}}),\\ \begin{pmatrix} y^t_ie_i,x^t_ie_i \end{pmatrix}\xi =0, i \in a_{01}({\bar{x}},{\bar{y}})\cup a_{10}({\bar{x}},{\bar{y}}) \end{array} \right. \right\} . \end{aligned}$$Let \(\left\{ \lambda ^+_1,\ldots ,\lambda ^+_{k^+}\right\} \) be the positive eigenvalues of \(D^2\,L^\mathcal {S}\left( x^t, y^t\right) \restriction _{\mathcal {T}^\mathcal {R}_{\left( {\bar{x}},{\bar{y}}\right) }}\) with corresponding eigenvectors \(\left\{ \xi ^+_1,\ldots ,\xi ^+_{k^+}\right\} \). Hence, for all \(k=1,\ldots , k^+\):

$$\begin{aligned} {\xi ^+_k}^T D^2 L^\mathcal {S}\left( x^t, y^t\right) \xi ^+_k>0. \end{aligned}$$

We rewrite the tangent space

$$\begin{aligned} \mathcal {T}^\mathcal {R}_{\left( {\bar{x}},{\bar{y}}\right) }=\left\{ \xi \in \mathbb {R}^{2n}\,\left| \, \begin{array}{l} \begin{pmatrix} Dh_p({\bar{x}}),0\end{pmatrix} \xi =0, p \in P, \begin{pmatrix} Dg_q({\bar{x}}),0\end{pmatrix}\xi =0,q \in Q_0({\bar{x}}),\\ \begin{pmatrix} 0,e \end{pmatrix}\xi =0, \begin{pmatrix} 0,e_i \end{pmatrix}\xi =0, i\in \mathcal {E}({\bar{y}}),\\ \begin{pmatrix} e_i,0 \end{pmatrix}\xi =0, i \in a_{00}({\bar{x}},{\bar{y}}), \begin{pmatrix} 0,e_i \end{pmatrix}\xi =0, i \in a_{00}({\bar{x}},{\bar{y}}),\\ \begin{pmatrix} {\bar{y}}_ie_i,{\bar{x}}_ie_i \end{pmatrix}\xi =0, i \in a_{01}({\bar{x}},{\bar{y}})\cup a_{10}({\bar{x}},{\bar{y}}) \end{array} \right. \right\} . \end{aligned}$$Due to MPOC-LICQ, the application of the implicit function theorem provides the existence of \(\delta _2,\delta _3>0\) such that for all \(k=1,\ldots ,k^+\) and \(t<\delta _2\) there exists \(\xi _{k,t}\) with \(\left\| \xi _{k,t}-\xi ^+_k\right\| <\delta _3\) and \(\xi _{k,t}\in \mathcal {T}^\prime \). We can choose t even smaller, such that \(\xi _{1,t},\ldots ,\xi _{k^+,t}\) remain linearly independent and for all \(k=1,\ldots ,k^+\) it holds:

$$\begin{aligned} { \xi _{k,t}}^T D^2 L^\mathcal {S}\left( x^t, y^t\right) \xi _{k,t}>0. \end{aligned}$$Hence, \(D^2\,L^\mathcal {S}\left( x^t, y^t\right) \restriction _{\mathcal {T}^\prime }\) has at least \(k^+\) positive eigenvalues. If we repeat the above reasoning for negative eigenvalues, the matrix \(D^2\,L^\mathcal {S}\left( x^t, y^t\right) \restriction _{\mathcal {T}^\prime }\) has at least as many negative eigenvalues as \(D^2\,L^\mathcal {S}\left( x^t, y^t\right) \restriction _{\mathcal {T}^\mathcal {R}_{\left( {\bar{x}},{\bar{y}}\right) }}\). Additionally, we show that the dimensions of \(\mathcal {T}^\mathcal {R}_{\left( {\bar{x}},{\bar{y}}\right) }\) and \(\mathcal {T}^\prime \) coincide. By Step 1b, we have \(2n-\alpha ^\mathcal {R}\) for the dimension of \(\mathcal {T}^\mathcal {R}_{\left( {\bar{x}},{\bar{y}}\right) }\). Since MPOC-LICQ remains valid in the neighborhood of \(({\bar{x}}, {\bar{y}})\), we get again \(2n-\alpha ^{\mathcal {R}}\) for the dimension of \(\mathcal {T}^\prime \). By continuity arguments, NDT4 and (15) provide that \(D^2\,L^\mathcal {S}\left( x^t, y^t\right) \restriction _{\mathcal {T}^\mathcal {R}_{\left( {\bar{x}},{\bar{y}}\right) }}\) is nonsingular. Altogether, the assertion follows.

Step 2c.

We claim that

$$\begin{aligned} QI^\mathcal {S}_{t,\mathcal {T}^\mathcal {S}_{\left( x^t,y^t\right) }}\le QI^\mathcal {S}_{t,\mathcal {T}^\mathcal {R}_{\left( {\bar{x}},{\bar{y}}\right) }}+\alpha ^\mathcal {R}-\alpha ^\mathcal {S}_t. \end{aligned}$$For that, we focus on the dimension of \(\mathcal {T}^\mathcal {S}_{\left( x^t,y^t\right) }\). As a consequence of Step 1a it is \(2n-\alpha ^\mathcal {S}_t\). Due to continuity arguments, we can choose t small enough to ensure \(x^t_{i}\ne 0\), \(i\in a_{10}({\bar{x}},{\bar{y}})\) and \(y^t_{i}\ne 0\), \(i\in a_{01}({\bar{x}},{\bar{y}})\). Using this and Lemma 3a), 3b), and 3d), it follows that \(\mathcal {T}^\prime \subset \mathcal {T}^\mathcal {S}_{\left( x^t,y^t\right) }\). Therefore, using Step 2b, \( QI^\mathcal {S}_{t,\mathcal {T}^\mathcal {S}_{\left( x^t,y^t\right) }}\le 2n-\alpha ^\mathcal {S}_t-k^+\). We observe in view of NDT4 and Step 1b that \( QI^\mathcal {S}_{t,\mathcal {T}^\mathcal {R}_{\left( {\bar{x}},{\bar{y}}\right) }} =2n-\alpha ^\mathcal {R}-k^+\). The assertion follows immediately.

Step 3.

Let us show that

$$\begin{aligned} \max \left\{ m - \left| \left\{ i\in a_{01}\left( {\bar{x}},{\bar{y}}\right) \,\left| \,{\bar{\sigma }}_{1,i}=0\right. \right\} \right| , 0\right\} \le TI. \end{aligned}$$

In view of Step 2a, Step 2c, and due to continuity, we have for t sufficiently small:

$$\begin{aligned} m= & {} QI^\mathcal {S}_{t,\mathcal {T}^\mathcal {S}_{\left( x^t,y^t\right) }}\overset{Step\,2c}{\le } QI^\mathcal {S}_{t,\mathcal {T}^\mathcal {R}_{\left( {\bar{x}},{\bar{y}}\right) }}+\alpha ^\mathcal {R}-\alpha ^\mathcal {S}_t \overset{Step\,2a}{=} \overline{QI}^\mathcal {R}_{\mathcal {T}^\mathcal {R}_{\left( {\bar{x}},{\bar{y}}\right) }} +\alpha ^\mathcal {R}-\alpha ^\mathcal {S}_t\\{} & {} \overset{Step\,1}{=}\ \overline{QI}^\mathcal {R}_{\mathcal {T}^\mathcal {R}_{\left( {\bar{x}},{\bar{y}}\right) }} +\left| a_{00}({\bar{x}},{\bar{y}})\right| +\left| a_{01}({\bar{x}},{\bar{y}})\right| \\{} & {} - \left| \mathcal {H}\left( x^t,y^t\right) \cap a_{01}\left( {\bar{x}},{\bar{y}}\right) \right| \\= & {} TI+\left| a_{01}({\bar{x}},{\bar{y}})\right| - \left| \mathcal {H}\left( x^t,y^t\right) \cap a_{01}\left( {\bar{x}},{\bar{y}}\right) \right| . \end{aligned}$$We show for t sufficiently small:

$$\begin{aligned} \left| a_{01}({\bar{x}},{\bar{y}})\right| - \left| \mathcal {H}\left( x^t,y^t\right) \cap a_{01}\left( {\bar{x}},{\bar{y}}\right) \right| \le \left| \left\{ i\in a_{01}\left( {\bar{x}},{\bar{y}}\right) \,\left| \,{\bar{\sigma }}_{1,i}=0\right. \right\} \right| , \end{aligned}$$

and the assertion will follow immediately since \(TI\ge 0\). Clearly,

$$\begin{aligned} \left| a_{01}({\bar{x}},{\bar{y}})\right| - \left| \mathcal {H}\left( x^t,y^t\right) \cap a_{01}\left( {\bar{x}},{\bar{y}}\right) \right| = \left| a_{01}({\bar{x}},{\bar{y}})\backslash \mathcal {H}\left( x^t,y^t\right) \right| . \end{aligned}$$

Suppose \({\bar{i}} \in a_{01}\left( {\bar{x}},{\bar{y}}\right) \) with \({\bar{\sigma }}_{1,{\bar{i}}}\ne 0\). In view of Remark 1, the difference \(\eta ^{\ge ,t}_{{\bar{i}}}-\eta ^{\le ,t}_{{\bar{i}}}\) cannot vanish for all t sufficiently small. In particular, one of the multipliers \(\eta ^{\ge ,t}_{{\bar{i}}}\) or \(\eta ^{\le ,t}_{{\bar{i}}}\) must be nonvanishing for all t sufficiently small. Hence, \({\bar{i}}\in \mathcal {H}\left( x^t,y^t\right) \). We therefore have:

$$\begin{aligned} a_{01}({\bar{x}},{\bar{y}})\backslash \mathcal {H}\left( x^t,y^t\right) \subset \left\{ i\in a_{01}\left( {\bar{x}},{\bar{y}}\right) \,\left| \,{\bar{\sigma }}_{1,i}= 0\right. \right\} . \end{aligned}$$

Step 4.

-

Without loss of generality—considering subsequences if needed—we can assume that for any \(i\in a_{00}({\bar{x}},{\bar{y}})\) at least one of the sequences \(\frac{x_i^t}{y_i^t}\) or \(\frac{y_i^t}{x_i^t}\) is convergent. First, we note that the quotients are well defined due to Lemma 3c). Moreover, if the former sequence does not contain a convergent subsequence, we find a subsequence that tends to plus or minus infinity. Consequently, the corresponding subsequence of the latter reciprocal sequence has to converge to zero. We define the following auxiliary sets:

$$\begin{aligned} a_{00}^x({\bar{x}},{\bar{y}})= & {} \left\{ i \in a_{00}({\bar{x}},{\bar{y}})\,\left| \, \frac{x^t_i}{y^t_i} \text{ converges } \text{ for } t \rightarrow 0 \right. \right\} , \\ a_{00}^y({\bar{x}},{\bar{y}})= & {} a_{00}({\bar{x}},{\bar{y}})\backslash a_{00}^x({\bar{x}},{\bar{y}}). \end{aligned}$$For \({\bar{i}} \in a_{00}^x\left( {\bar{x}},{\bar{y}}\right) \) we consider \(\mathcal {T}^{\mathcal {R}}_{\left( {\bar{x}},{\bar{y}}\right) }\) and replace two of the involved equations, namely \(\begin{pmatrix} e_{{\bar{i}}},0 \end{pmatrix}\xi =0\) and \(\begin{pmatrix} 0,e_{{\bar{i}}} \end{pmatrix}\xi =0\) by one equation \(\begin{pmatrix} e_{{\bar{i}}},\lim \limits _{t \rightarrow 0}\frac{x_{{\bar{i}}}^t}{y_{{\bar{i}}}^t}e_{{\bar{i}}} \end{pmatrix}\xi =0\). Clearly, the vectors involved in the definition of the newly generated linear space, i.e.

$$\begin{aligned} \mathcal {T}^{{{\bar{i}}}}=\left\{ \xi \in \mathbb {R}^{2n}\,\left| \, \begin{array}{l} \begin{pmatrix} Dh_p({\bar{x}}),0\end{pmatrix} \xi =0, p \in P, \begin{pmatrix} Dg_q({\bar{x}}),0\end{pmatrix}\xi =0,q \in Q_0({\bar{x}}),\\ \begin{pmatrix} 0,e \end{pmatrix}\xi =0, \begin{pmatrix} 0,e_i \end{pmatrix}\xi =0, i\in \mathcal {E}({\bar{y}}),\\ \begin{pmatrix} e_i,0 \end{pmatrix}\xi =0, i \in a_{00}({\bar{x}},{\bar{y}})\backslash \{{\bar{i}}\}, \begin{pmatrix} 0,e_i \end{pmatrix}\xi =0, i \in a_{00}({\bar{x}},{\bar{y}})\backslash \{{\bar{i}}\},\\ \begin{pmatrix} e_{{\bar{i}}},\lim \limits _{t \rightarrow 0}\frac{x_{{\bar{i}}}^t}{y_{{\bar{i}}}^t}e_{{\bar{i}}} \end{pmatrix}\xi =0, \\ \begin{pmatrix} {\bar{y}}_ie_i,{\bar{x}}_ie_i \end{pmatrix}\xi =0, i \in a_{01}({\bar{x}},{\bar{y}})\cup a_{10}({\bar{x}},{\bar{y}}) \end{array} \right. \right\} , \end{aligned}$$remain linearly independent. The dimension of \( \mathcal {T}^{{{\bar{i}}}}\) is greater than the dimension of \(\mathcal {T}^{\mathcal {R}}_{\left( {\bar{x}},{\bar{y}}\right) }\) by one. Moreover, there exists \(\xi ^{{\bar{i}}} \in \mathcal {T}^{{{\bar{i}}}}\) with \( \xi ^{{\bar{i}}}_{n+{\bar{i}}} \not =0\). Indeed, if no such \(\xi ^{{\bar{i}}}\) existed, we could add the equation \(\begin{pmatrix} 0,e_{{\bar{i}}} \end{pmatrix}\xi =0\) to the defining equations of \( \mathcal {T}^{ {{\bar{i}}}}\) without changing it. The resulting space, however, would be identical to \(\mathcal {T}^{\mathcal {R}}_{\left( {\bar{x}},{\bar{y}}\right) }\), which contradicts the dimension count above. Without loss of generality, we assume \(\xi ^{{\bar{i}}}_{n+{\bar{i}}}=1\). Further, by a straightforward application of the implicit function theorem and due to Lemma 3a) and 3b), we find a sequence of vectors \(\xi ^{{\bar{i}}}_t \in \mathcal {T}^{ {\bar{i}}}_{t}\) that converges to \(\xi ^{{\bar{i}}}\) for \(t \rightarrow 0\). Here, we define

$$\begin{aligned} \mathcal {T}^{{\bar{i}}}_t=\left\{ \xi \in \mathbb {R}^{2n}\,\left| \, \begin{array}{l} \begin{pmatrix} Dh_p(x^t),0\end{pmatrix} \xi =0, p \in P, \begin{pmatrix} Dg_q(x^t),0\end{pmatrix}\xi =0,q \in Q_0(x^t),\\ \begin{pmatrix} 0,e \end{pmatrix}\xi =0, \begin{pmatrix} 0,e_i \end{pmatrix}\xi =0, i\in \mathcal {E}(y^t),\\ \begin{pmatrix} e_i,0 \end{pmatrix}\xi =0, i \in a_{00}({\bar{x}},{\bar{y}})\backslash \{{\bar{i}}\}, \begin{pmatrix} 0,e_i \end{pmatrix}\xi =0, i \in a_{00}({\bar{x}},{\bar{y}})\backslash \{{\bar{i}}\},\\ \begin{pmatrix} e_{{\bar{i}}},\frac{x_{{\bar{i}}}^t}{y_{{\bar{i}}}^t} e_{{\bar{i}}} \end{pmatrix}\xi =0, \begin{pmatrix} y^t_ie_i,x^t_ie_i \end{pmatrix}\xi =0, i \in a_{01}({\bar{x}},{\bar{y}})\cup a_{10}({\bar{x}},{\bar{y}}) \end{array} \right. \right\} . \end{aligned}$$By continuity, we again have \(\xi ^{{\bar{i}}}_{t,n+{\bar{i}}} \not =0\) for t sufficiently small. For \({\bar{i}} \in a_{00}^y\left( {\bar{x}},{\bar{y}}\right) \) we proceed analogously, considering \(\mathcal {T}^{\mathcal {R}}_{\left( {\bar{x}},{\bar{y}}\right) }\) again and replacing the two equations \(\begin{pmatrix} e_{{\bar{i}}},0 \end{pmatrix}\xi =0\) and \(\begin{pmatrix} 0,e_{{\bar{i}}} \end{pmatrix}\xi =0\) by the single equation \(\begin{pmatrix} \lim \limits _{t \rightarrow 0}\frac{y_{{\bar{i}}}^t}{x_{{\bar{i}}}^t} e_{{\bar{i}}},e_{{\bar{i}}} \end{pmatrix}\xi =0\). By the same arguments as before, we find \(\xi ^{{\bar{i}}} \in \mathcal {T}^{{\bar{i}}}\) with \(\xi ^{{\bar{i}}}_{{\bar{i}}} \ne 0\). Again, we assume \(\xi ^{{\bar{i}}}_{{\bar{i}}} = 1\) and find a sequence of vectors \(\xi ^{{\bar{i}}}_t \in \mathcal {T}^{ {\bar{i}}}_{t}\) that converges to \(\xi ^{{\bar{i}}}\) for \(t \rightarrow 0\). By continuity, we then have \(\xi ^{{\bar{i}}}_{t,{\bar{i}}} \ne 0\) for t sufficiently small.

It is straightforward to verify the following observations for t sufficiently small:

-

a)

Let \(\left\{ \xi ^{\prime ,1},\ldots ,\xi ^{\prime ,\ell }\right\} \) be a basis of \(\mathcal {T}^{\prime }\), cf. Step 2b. Then \(\left\{ \xi ^{\prime ,1},\ldots ,\xi ^{\prime ,\ell }\right\} \cup \left\{ \xi ^{{\bar{i}}}_{t}\,\left| \,{\bar{i}} \in a_{00}({\bar{x}},{\bar{y}})\right. \right\} \) is a set of linearly independent vectors. In fact, suppose that for some coefficients \(b_{i} \in \mathbb {R}\), \(i \in a_{00}\left( {\bar{x}},{\bar{y}}\right) \), and \(\beta _i \in \mathbb {R}\), \(i=1,\ldots ,\ell \), we have:

$$\begin{aligned} \sum \limits _{i \in a_{00}\left( {\bar{x}},{\bar{y}}\right) } b_{i}\xi ^{i}_{t} +\sum \limits _{i=1}^{\ell }\beta _i\xi ^{\prime ,i}=0. \end{aligned}$$For \({\bar{i}} \in a_{00}^x\left( {\bar{x}},{\bar{y}}\right) \) we consider the \((n+{\bar{i}})\)-th row of this sum

$$\begin{aligned} b_{{\bar{i}}}\underbrace{\xi ^{{\bar{i}}}_{t,n+{\bar{i}}}}_{\ne 0}+\sum \limits _{i \in a_{00}\left( {\bar{x}},{\bar{y}}\right) \backslash \left\{ {\bar{i}}\right\} } b_{i}\underbrace{\xi ^{i}_{t,n+{\bar{i}}}}_{=0}+\sum \limits _{i=1}^{\ell }\beta _i\underbrace{\xi ^{\prime ,i}_{n+{\bar{i}}}}_{=0}=0. \end{aligned}$$If instead \({\bar{i}} \in a_{00}^y\left( {\bar{x}},{\bar{y}}\right) \) we consider the \({\bar{i}}\)-th row of the sum

$$\begin{aligned} b_{{\bar{i}}}\underbrace{\xi ^{{\bar{i}}}_{t,{\bar{i}}}}_{\ne 0}+\sum \limits _{i \in a_{00}\left( {\bar{x}},{\bar{y}}\right) \backslash \left\{ {\bar{i}}\right\} } b_{i}\underbrace{\xi ^{i}_{t,{\bar{i}}}}_{=0}+\sum \limits _{i=1}^{\ell }\beta _i\underbrace{\xi ^{\prime ,i}_{{\bar{i}}}}_{=0}=0. \end{aligned}$$Altogether, it must hold \(b_{i} =0\), \(i \in a_{00}\left( {\bar{x}},{\bar{y}}\right) \). However, this implies

$$\begin{aligned} \sum \limits _{i=1}^{\ell }\beta _i\xi ^{\prime ,i}=0. \end{aligned}$$Hence, \(\beta _i=0\) for \(i=1,\ldots ,\ell \).
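The vanishing entries of the vectors \(\xi ^{i}_{t}\) marked by the underbraces in a) can be read off from the defining equations of \(\mathcal {T}^{i}_t\). As a short sketch of this step: for \(i \in a_{00}\left( {\bar{x}},{\bar{y}}\right) \) with \(i \ne {\bar{i}}\), the index \({\bar{i}}\) belongs to \(a_{00}({\bar{x}},{\bar{y}})\backslash \{i\}\), so both \(\begin{pmatrix} e_{{\bar{i}}},0 \end{pmatrix}\xi =0\) and \(\begin{pmatrix} 0,e_{{\bar{i}}} \end{pmatrix}\xi =0\) are among the equations defining \(\mathcal {T}^{i}_t\). Therefore,

$$\begin{aligned} \xi ^{i}_{t,{\bar{i}}}=0 \quad \text{ and } \quad \xi ^{i}_{t,n+{\bar{i}}}=0 \quad \text{ for } \text{ all } i \in a_{00}\left( {\bar{x}},{\bar{y}}\right) \backslash \left\{ {\bar{i}}\right\} . \end{aligned}$$

Provided the basis vectors of \(\mathcal {T}^{\prime }\) vanish in the same rows, as indicated by the underbraces, the considered row of the sum reduces to \(b_{{\bar{i}}}\,\xi ^{{\bar{i}}}_{t,n+{\bar{i}}}=0\) or \(b_{{\bar{i}}}\,\xi ^{{\bar{i}}}_{t,{\bar{i}}}=0\), respectively, which forces \(b_{{\bar{i}}}=0\).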

-

b)

It holds \(\xi ^{{\bar{i}}}_{t} \in \mathcal {T}^\mathcal {S}_{\left( x^t,y^t\right) }\), cf. Step 1a, for any \({\bar{i}} \in a_{00}({\bar{x}},{\bar{y}})\).

-

c)

It holds \(\left( \eta _i^{\le ,t}-\eta _i^{\ge ,t}\right) \xi ^{{\bar{i}}}_{t,i}\xi ^{{\bar{i}}}_{t,n+i} \le 0, i \in \mathcal {H}\left( x^t,y^t\right) \). Since \(\xi ^{{\bar{i}}}_{t} \in \mathcal {T}^\mathcal {S}_{\left( x^t,y^t\right) }\), we obtain:

$$\begin{aligned} \left( \eta _i^{\le ,t}-\eta _i^{\ge ,t}\right) \xi ^{{\bar{i}}}_{t,i}\xi ^{{\bar{i}}}_{t,n+i} =\left( \eta _i^{\ge ,t}-\eta _i^{\le ,t}\right) \left( \xi ^{{\bar{i}}}_{t,n+i}\right) ^2\frac{x^t_i}{y^t_i}. \end{aligned}$$If \(i\in \mathcal {H}^{\ge }\left( x^t,y^t\right) \), we have \(x_i^t<0,y_i^t>0\) and \(\eta _i^{\le ,t}=0\). Moreover, due to ND2, we have \(\eta _i^{\ge ,t}>0\). The assertion follows immediately. The other case \(i\in \mathcal {H}^{\le }\left( x^t,y^t\right) \) is completely analogous.
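The identity used in c) can be unpacked as follows. This is only a sketch, under the assumption that for \(i\in \mathcal {H}\left( x^t,y^t\right) \) membership in \(\mathcal {T}^\mathcal {S}_{\left( x^t,y^t\right) }\) entails the linearized relation \(y^t_i\xi _i+x^t_i\xi _{n+i}=0\), which is the form of the corresponding equations in \(\mathcal {T}^{{\bar{i}}}_t\). Writing \(\xi =\xi ^{{\bar{i}}}_{t}\) and using \(y^t_i\ne 0\), we get:

$$\begin{aligned} \xi _{i}= & {} -\frac{x^t_i}{y^t_i}\,\xi _{n+i},\\ \left( \eta _i^{\le ,t}-\eta _i^{\ge ,t}\right) \xi _{i}\,\xi _{n+i}= & {} -\left( \eta _i^{\le ,t}-\eta _i^{\ge ,t}\right) \frac{x^t_i}{y^t_i}\left( \xi _{n+i}\right) ^2 =\left( \eta _i^{\ge ,t}-\eta _i^{\le ,t}\right) \left( \xi _{n+i}\right) ^2\frac{x^t_i}{y^t_i}. \end{aligned}$$

For \(i\in \mathcal {H}^{\ge }\left( x^t,y^t\right) \), the right-hand side equals \(\eta _i^{\ge ,t}\left( \xi _{n+i}\right) ^2\frac{x^t_i}{y^t_i}\), i.e. the product of a positive multiplier, a nonnegative square, and a negative quotient. Hence, it is nonpositive.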

-

d)

It holds \(\lim \limits _{t\rightarrow 0}\left( \eta _{{\bar{i}}}^{\le ,t}-\eta _{{\bar{i}}}^{\ge ,t}\right) \xi ^{{\bar{i}}}_{t,{\bar{i}}}\xi ^{{\bar{i}}}_{t,n+{\bar{i}}}=-\infty \). We calculate:

$$\begin{aligned} \left( \eta _{{\bar{i}}}^{\le ,t}-\eta _{{\bar{i}}}^{\ge ,t}\right) \xi ^{{\bar{i}}}_{t,{{\bar{i}}}}\xi ^{{\bar{i}}}_{t,n+{{\bar{i}}}}= & {} \left\{ \begin{array}{ll} \left( \eta _{{\bar{i}}}^{\ge ,t}-\eta _{{\bar{i}}}^{\le ,t}\right) \left( \xi ^{{\bar{i}}}_{t,n+{\bar{i}}}\right) ^2\frac{x^t_{{\bar{i}}}}{y^t_{{\bar{i}}}}&{} \text{ for } {\bar{i}} \in a_{00}^x({\bar{x}},{\bar{y}}),\\ \left( \eta _{{\bar{i}}}^{\ge ,t}-\eta _{{\bar{i}}}^{\le ,t}\right) \left( \xi ^{{\bar{i}}}_{t,{\bar{i}}}\right) ^2\frac{y^t_{{\bar{i}}}}{x^t_{{\bar{i}}}}&{} \text{ for } {\bar{i}} \in a_{00}^y({\bar{x}},{\bar{y}}). \end{array}\right. \end{aligned}$$First, suppose \({\bar{i}} \in a_{00}^x({\bar{x}},{\bar{y}})\). We have \(y^t_{{\bar{i}}}\ne 0\) and, thus, \(\nu ^t_{{\bar{i}}}=0\). We use Remark 1 and NDT3 to conclude that the sequence \( \left( \eta _{{\bar{i}}}^{\ge ,t}-\eta _{{\bar{i}}}^{\le ,t}\right) x^t_{{\bar{i}}}\) converges to \(\varrho _{2,{\bar{i}}}<0\) for \(t \rightarrow 0\). Further, \(\frac{1}{y^t_{{\bar{i}}}}>0\) tends to infinity for \(t \rightarrow 0\). Finally, \(\left( \xi ^{{\bar{i}}}_{t,n+{\bar{i}}}\right) ^2\) converges to 1 for \(t \rightarrow 0\), due to the construction of \(\xi ^{{\bar{i}}}_t\). Thus, the assertion follows. Now suppose instead \({\bar{i}} \in a_{00}^y({\bar{x}},{\bar{y}})\). This time, \(\left( \xi ^{{\bar{i}}}_{t,{\bar{i}}}\right) ^2\) converges to 1 for \(t \rightarrow 0\). Due to Remark 1 and NDT3, \(\left( \eta _{{\bar{i}}}^{\ge ,t}-\eta _{{\bar{i}}}^{\le ,t}\right) y^t_{{\bar{i}}}\) converges to \(\varrho _{1,{\bar{i}}}\ne 0\) for \(t \rightarrow 0\). If \({\bar{i}}\in \mathcal {H}^{\ge }\left( x^t,y^t\right) \), it follows that \(\varrho _{1,{\bar{i}}}\) is positive. Also, \(\frac{1}{x^t_{{\bar{i}}}}<0\) tends to minus infinity for \(t \rightarrow 0\), and the assertion follows. The other case \({\bar{i}}\in \mathcal {H}^{\le }\left( x^t,y^t\right) \) is completely analogous.
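The two limits in d) can be summarized in a single display. The following is merely a sketch that regroups the factors and uses only the convergence statements from d); the second line treats the case \({\bar{i}}\in \mathcal {H}^{\ge }\left( x^t,y^t\right) \):

$$\begin{aligned} \underbrace{\left( \eta _{{\bar{i}}}^{\ge ,t}-\eta _{{\bar{i}}}^{\le ,t}\right) x^t_{{\bar{i}}}}_{\rightarrow \varrho _{2,{\bar{i}}}<0}\cdot \underbrace{\left( \xi ^{{\bar{i}}}_{t,n+{\bar{i}}}\right) ^2}_{\rightarrow 1}\cdot \underbrace{\frac{1}{y^t_{{\bar{i}}}}}_{\rightarrow +\infty }\longrightarrow & {} -\infty \quad \text{ for } {\bar{i}} \in a_{00}^x({\bar{x}},{\bar{y}}),\\ \underbrace{\left( \eta _{{\bar{i}}}^{\ge ,t}-\eta _{{\bar{i}}}^{\le ,t}\right) y^t_{{\bar{i}}}}_{\rightarrow \varrho _{1,{\bar{i}}}>0}\cdot \underbrace{\left( \xi ^{{\bar{i}}}_{t,{\bar{i}}}\right) ^2}_{\rightarrow 1}\cdot \underbrace{\frac{1}{x^t_{{\bar{i}}}}}_{\rightarrow -\infty }\longrightarrow & {} -\infty \quad \text{ for } {\bar{i}} \in a_{00}^y({\bar{x}},{\bar{y}}). \end{aligned}$$

In both lines, the divergence is driven by the third factor, while the first two factors have finite nonzero limits that fix the sign.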

-

e)

We notice that \(\displaystyle \xi ^{{\bar{i}} ^T}_{t} D^2\,L^\mathcal {R}\left( x^t, y^t\right) \xi ^{{\bar{i}}}_{t}\) converges for \(t \rightarrow 0\) due to the construction above.

Finally, for \({\bar{i}}\in a_{00}({\bar{x}},{\bar{y}})\) we estimate:

Thus, due to d) and e), \(\xi ^{{\bar{i}} ^T}_{t} D^2\,L^\mathcal {S}\left( x^t, y^t\right) \xi ^{{\bar{i}}}_{t}\) has to be negative for t small enough. As we have seen in Step 2c, we have \(\mathcal {T}^\prime \subset \mathcal {T}^\mathcal {S}_{\left( x^t,y^t\right) }\). Then, due to a) and b), we have

By Step 2a, we have \(QI^\mathcal {S}_{t,\mathcal {T}^\mathcal {R}_{\left( {\bar{x}},{\bar{y}}\right) }}=\overline{QI}^\mathcal {R}_{\mathcal {T}^\mathcal {R}_{\left( {\bar{x}},{\bar{y}}\right) }}\), and by Step 2b, \(QI^\mathcal {S}_{t,\mathcal {T}^\mathcal {R}_{\left( {\bar{x}},{\bar{y}}\right) }}=QI^\mathcal {S}_{t,\mathcal {T}^\prime }\). Overall, we obtain:

\(\square \)

Proof of Theorem 5

(i) We show the existence of nondegenerate Karush–Kuhn–Tucker points of \(\mathcal {S}\) in a neighborhood of \(({\bar{x}},{\bar{y}})\).

- Step 1.:

-

First, we show that \({\bar{\sigma }}_{2,i}\ne 0\) holds for all \(i\in a_{10}\left( {\bar{x}},{\bar{y}}\right) \). Assume, to the contrary, that \({\bar{\sigma }}_{2,{\bar{i}}}= 0\) for some \({\bar{i}} \in a_{10}\left( {\bar{x}},{\bar{y}}\right) \). Due to T-stationarity, cf. (13), we then have:

$$\begin{aligned} c_{{\bar{i}}}={\bar{\sigma }}_{2,{\bar{i}}}+{\bar{\mu }}_3={\bar{\mu }}_3. \end{aligned}$$Moreover, we have in view of Lemma 1c) an index \({\tilde{i}} \in a_{01}\left( {\bar{x}}, {\bar{y}}\right) \backslash \mathcal {E}\left( {\bar{y}}\right) .\) Thus it holds, cf. (13), \(c_{{\tilde{i}}}={\bar{\mu }}_3\). Due to the assumption on c, we have \({\bar{i}}={\tilde{i}}\), a contradiction. Hence, we may write:

$$\begin{aligned} a_{10}\left( {\bar{x}},{\bar{y}}\right)= & {} \left\{ i\in a_{10}\left( {\bar{x}},{\bar{y}}\right) \,\left| \,{\bar{\sigma }}_{2,i}<0\right. \right\} \cup \left\{ i\in a_{10}\left( {\bar{x}},{\bar{y}}\right) \,\left| \,{\bar{\sigma }}_{2,i}>0\right. \right\} \\= & {} a_{10}^<\left( {\bar{x}},{\bar{y}}\right) \cup a_{10}^>\left( {\bar{x}},{\bar{y}}\right) . \end{aligned}$$Due to NDT6 and NDT3, we may split the other index sets as