Abstract

Background

The diagnosis of Parkinson’s disease (PD) and evaluation of its symptoms require in-person clinical examination. Remote evaluation of PD symptoms is desirable, especially during a pandemic such as the coronavirus disease 2019 pandemic. One potential method to remotely evaluate PD motor impairments is video-based analysis. In this study, we aimed to assess the feasibility of predicting the Unified Parkinson’s Disease Rating Scale (UPDRS) score from gait videos using a convolutional neural network (CNN) model.

Methods

We retrospectively obtained 737 consecutive gait videos of 74 patients with PD and their corresponding neurologist-rated UPDRS scores. We utilized a CNN model for predicting the total UPDRS part III score and four subscores of axial symptoms (items 27, 28, 29, and 30), bradykinesia (items 23, 24, 25, 26, and 31), rigidity (item 22) and tremor (items 20 and 21). We trained the model on 80% of the gait videos and used 10% of the videos as a validation dataset. We evaluated the predictive performance of the trained model by comparing the model-predicted score with the neurologist-rated score for the remaining 10% of videos (test dataset). We calculated the coefficient of determination (R2) between those scores to evaluate the model’s goodness of fit.

Results

In the test dataset, the R2 values between the model-predicted and neurologist-rated values for the total UPDRS part III score and subscores of axial symptoms, bradykinesia, rigidity, and tremor were 0.59, 0.77, 0.56, 0.46, and 0.0, respectively. The performance was relatively low for videos from patients with severe symptoms.

Conclusions

Despite the low predictive performance of the model for the total UPDRS part III score, it demonstrated relatively high performance in predicting subscores of axial symptoms. The model approximately predicted the total UPDRS part III scores of patients with moderate symptoms, but the performance was low for patients with severe symptoms owing to limited data. A larger dataset is needed to improve the model’s performance in clinical settings.

Background

Parkinson’s disease (PD) is the second most common neurodegenerative disease, characterized by bradykinesia, resting tremor, muscle rigidity, and responsiveness to dopaminergic treatment [1, 2]. PD diagnosis and the evaluation of the effectiveness of its treatment require clinical examination. However, owing to the coronavirus disease 2019 pandemic, telemedicine, especially video consultation, has been promoted to reduce the risk of transmission [3, 4]. To further promote the use of telemedicine for PD, the development of methods that can be used to support the evaluation of PD symptoms in telemedicine is needed.

One potential method to evaluate PD motor impairments remotely is video-based analysis [5]. Recently, convolutional neural networks (CNNs), a type of deep learning algorithm, have been used to analyze human actions from videos [6]. However, to date, these models have not been used to evaluate PD symptoms. If these CNN models can be used to evaluate PD symptoms from videos obtained using a standard video camera, remote evaluation through web cameras or smartphone applications, without in-person assessment, may become feasible.

In this study, we focused on gait videos because the gait of patients with PD shows many features characteristic of PD symptoms, such as bradykinesia, short step length, postural abnormality, reduced arm swing, and freezing of gait. In addition, because recording gait videos requires no specialized skills and little time, evaluating PD symptoms from gait videos would be cost-effective. In both clinical practice and research, the Unified Parkinson’s Disease Rating Scale (UPDRS) [7] is widely used to evaluate PD motor symptoms. Therefore, the aim of this study was to assess the feasibility of predicting UPDRS scores from gait video data of patients with PD using a CNN model.

Methods

Patients and video recording

This study included patients with PD who were video recorded from April 2013 to January 2021 while being rated according to the UPDRS [7] for the diagnosis or evaluation of the efficacy of treatment at Hokkaido University Hospital. The diagnosis of PD was made based on the UK Parkinson’s Disease Society Brain Bank criteria [8]. According to the accepted diagnostic criteria, we excluded patients with the following parkinsonian disorders: drug-induced parkinsonism due to dopamine receptor blocking agents; vascular parkinsonism; and atypical forms of parkinsonism, such as progressive supranuclear palsy, multiple system atrophy, or corticobasal degeneration [9]. Additionally, we excluded patients with a history of stroke, hospitalization for a psychiatric disorder, or other neurological, metabolic, or neoplastic disorders, as well as those with symptomatic musculoskeletal diseases such as acute bone fracture, spinal canal stenosis, and osteoarthritis. The Hoehn and Yahr (HY) stages, Mini-Mental State Examination (MMSE) scores, and levodopa equivalent daily dose (LEDD) scores [10, 11] at the first UPDRS assessment were obtained for each patient using their medical records. We also obtained information on whether each patient received device-aided therapies (deep brain stimulation [DBS] or levodopa-carbidopa intestinal gel [LCIG] treatment) from the medical records.

The study protocol was approved by the institutional review board of the Hokkaido University Hospital (approval number: 020–0446), and the requirement for informed consent was waived owing to the retrospective nature of the study. Procedures involving experiments on human participants were performed in accordance with the ethical standards of the Committee on Human Experimentation of the institution in which the experiments were conducted.

We used video data recorded during gait examination to predict the severity of motor symptoms. Videos were captured using consumer-grade video cameras (HDR-XR500V and HDR-CX470B, Sony Corporation, Tokyo, Japan) at 30 fps with a resolution of 1280 × 720 px in MP4 format. In the video recordings, patients wore either a hospital gown or simple, comfortable clothes. The recordings were conducted in a flat hallway at Hokkaido University Hospital. The camera was placed on a tripod in a fixed location during the recording. The participants were instructed to walk directly toward the camera, turn around, and walk directly away again. Although the walking distance was not predetermined, the patients were instructed to begin walking away from the camera at a distance of 5 to 7 m and then return to a position in front of the camera. They were permitted to use a cane or handrail or to receive assistance from medical staff during the video recording if needed. The patients received walking assistance without considering the laterality of their symptoms, and no specific protocol for providing walking assistance was established beforehand. We excluded the video data of patients who walked for less than 10 s and those who could not walk even with assistance (i.e., the score of item 30 of the UPDRS part III was 4). Videos in which the camera was unstable during the recording were also excluded. Whether a video was taken during the medication-on or medication-off phase varied across patients and was not determined in advance. Some patients were recorded in both the medication-on and medication-off phases. Video data recorded for the same patient with and without medication and DBS (medication on/off and DBS on/off, respectively) or recorded on different dates were regarded as different videos. We did not repeatedly record videos of the same participant on the same date and during the same treatment state.

Assessment of UPDRS score

Patients were evaluated using the UPDRS part III at the time of gait analysis, and this examination was included in the video recording. Two experienced movement disorder specialists (T. Kano from April 2013 to June 2017 and S. Shirai from July 2017 to January 2021), both Japanese Society of Neurology-certified neurologists, rated the UPDRS part III score.

Preprocessing of video data

From each recorded video, we extracted a 10-s clip beginning at the point where the patient started walking. We converted all the frames in each 10-s clip (300 frames per clip) into static images in JPEG format. The static images were resized to 224 × 398 px (height × width), and the resized images were cropped to retain only the center 224 × 224 px.
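The resize-and-crop geometry can be checked with a few lines of arithmetic. The following is a minimal sketch rather than the authors' code: the function names `resized_size` and `center_crop_box` are hypothetical, and it assumes that each 1280 × 720 px frame is scaled so that its height becomes 224 px with the aspect ratio preserved, which reproduces the reported 224 × 398 px (height × width) size.

```python
def resized_size(width, height, target_height=224):
    """Scale (width, height) so the height equals target_height, keeping the aspect ratio."""
    scale = target_height / height
    return round(width * scale), target_height


def center_crop_box(width, height, crop=224):
    """Return (left, top, right, bottom) of the centered crop x crop square."""
    left = (width - crop) // 2
    top = (height - crop) // 2
    return left, top, left + crop, top + crop


# A 1280 x 720 px source frame, as recorded in this study.
new_w, new_h = resized_size(1280, 720)
box = center_crop_box(new_w, new_h)
print(new_w, new_h, box)  # 398 224 (87, 0, 311, 224)
```

Under this assumption, the crop discards 87 px on each side of the resized frame and keeps its full height.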

CNN architecture

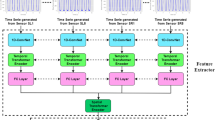

We used the ECO-Lite CNN architecture [6] in this study, an overview of which is provided in Fig. 1. ECO-Lite is an end-to-end CNN architecture that learns spatiotemporal features from videos. It was originally developed to analyze human action videos and exhibited high performance in classifying 400 human actions in the “Kinetics” video dataset [12]. This CNN model consists of the following two submodules: 2D-Net and 3D-Net. 2D-Net is a neural network with two-dimensional convolutional layers that are used to capture visual features of images from individual frames, whereas 3D-Net has three-dimensional convolutional layers that are used to capture temporal relations between frames.

The input frames were processed with the CNN model, as follows. First, the static frames extracted from videos were provided as input to 2D-Net; second, the output feature maps from 2D-Net were stacked temporally and fed to 3D-Net; and third, the output features from 3D-Net were used for making predictions. For each submodule, we chose the same models as those in the original report [6]: we used a subpart of the “BN-Inception” architecture [13] for 2D-Net and a subpart of the “3D-ResNet18” architecture [14] for 3D-Net, and we attached a fully connected layer for the prediction of the UPDRS score of the input video. We hypothesized that 2D-Net would extract static features of PD, such as postural abnormality, and 3D-Net would extract temporal features, such as walking speed, arm swing, and freezing of gait.

The input data for the model in the original report were 16 frames extracted from each video [6]. Therefore, as input data in this study, we also used 16 color frames, 224 × 224-px in size, extracted at equal intervals from each gait video. The frames were processed using a two-dimensional convolutional network (2D Net) to yield 96 feature maps, 28 × 28 px in size, for each frame. These feature maps were stacked temporally and fed into a three-dimensional convolutional network (3D Net), which was used to analyze the relationships between different frames. Thus, the size of the stacked feature maps used as input was 96 × 16 × 28 × 28. The final output was a predicted score of the UPDRS for each video.
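The frame selection and the resulting tensor size can be illustrated as follows. This is a sketch under stated assumptions: the text says only that 16 frames were taken at equal intervals, so taking the first frame of each of 16 equal segments of a 300-frame clip is an illustrative choice, and the helper name `equally_spaced_indices` is hypothetical.

```python
def equally_spaced_indices(n_frames=300, n_samples=16):
    """Pick n_samples frame indices at equal intervals across a clip."""
    step = n_frames / n_samples  # 18.75 frames between samples for 300 / 16
    return [int(i * step) for i in range(n_samples)]


indices = equally_spaced_indices()

# Shape bookkeeping for one clip: (feature maps, frames, height, width).
n_feature_maps, map_size = 96, 28
stacked_shape = (n_feature_maps, len(indices), map_size, map_size)

print(indices)
print(stacked_shape)  # (96, 16, 28, 28)
```

The 16 selected frames are each mapped to 96 feature maps of 28 × 28 px, so stacking them along the time axis gives the 96 × 16 × 28 × 28 input described for 3D-Net.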

Fig. 1 Overview of the ECO-Lite architecture. Input data were RGB color images, 224 × 224 px in size. Sixteen frames were used from each video. The frames were processed using a two-dimensional convolutional network (2D Net) to yield 96 feature maps, 28 × 28 px in size, for each frame. These feature maps were stacked temporally and fed into a three-dimensional convolutional network (3D Net), which was used to analyze the relationships between different frames. Thus, the size of the stacked feature maps used as input was 96 × 16 × 28 × 28. The final output was a predicted score of the Unified Parkinson’s Disease Rating Scale (UPDRS) part III for each video.

Model training

At first, we tried to have the model predict the total UPDRS part III score (maximum score: 108) from the gait videos. However, the videos did not contain some aspects of PD symptoms such as voice, tremor, and rigidity, and it would have been difficult for the model to predict the total UPDRS part III score. Therefore, we categorized UPDRS part III into four subscores: axial symptoms, bradykinesia, rigidity, and tremor. The definitions for each subscore were as follows: Axial symptoms included the sum of scores of item 27 (arising from a chair), 28 (posture), 29 (gait), and 30 (postural instability) (with a subscore range of 0–16); bradykinesia consisted of the sum of scores of items 23 (finger taps, total of bilateral hands), 24 (hand movements, total of bilateral hands), 25 (rapid alternating movements of hands, total of bilateral hands), 26 (leg agility, total of bilateral legs), and 31 (body bradykinesia and hypokinesia) (with a score range of 0–36); rigidity was indicated by the score of item 22 (rigidity, total score of head, bilateral hands, and legs) (with a subscore range of 0–20); tremor comprised the sum of scores of items 20 (tremor at rest, total score of head, bilateral hands, and legs) and 21 (action or postural tremor, total of bilateral hands) (with a subscore range of 0–28). We also evaluated the CNN model’s capability to predict these subscores from the gait videos.
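The subscore definitions above amount to summing fixed groups of item scores. A minimal sketch follows, assuming the ratings for one video are available as a mapping from UPDRS item number to score, with bilateral items already summed; the names `SUBSCORE_ITEMS` and `subscores` and the example ratings are hypothetical.

```python
# UPDRS part III items that make up each subscore, as defined in the text.
SUBSCORE_ITEMS = {
    "axial": (27, 28, 29, 30),
    "bradykinesia": (23, 24, 25, 26, 31),
    "rigidity": (22,),
    "tremor": (20, 21),
}


def subscores(item_scores):
    """Sum per-item totals (item number -> score) into the four subscores."""
    return {
        name: sum(item_scores[item] for item in items)
        for name, items in SUBSCORE_ITEMS.items()
    }


# Hypothetical ratings for one video; bilateral items hold left + right totals.
example = {20: 2, 21: 1, 22: 6, 23: 3, 24: 2, 25: 2,
           26: 3, 27: 1, 28: 2, 29: 2, 30: 1, 31: 2}
print(subscores(example))
# {'axial': 6, 'bradykinesia': 12, 'rigidity': 6, 'tremor': 3}
```

The four subscore ranges add up to 100 points (16 + 36 + 20 + 28), which is less than the 108-point maximum of the total score, so the subscores do not cover every part III item.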

In this study, we obtained an average of 10 videos per patient; however, this number varied greatly between patients. Therefore, we divided all video data randomly by stratifying the videos based on the UPDRS score instead of individual patients to create the training, validation, and test datasets (i.e., we permitted videos from the same patient to be in multiple datasets). We stratified the videos into three groups according to the total UPDRS part III score as follows: (1) mild (bottom third), (2) moderate (middle third), and (3) severe (top third). By stratifying the videos according to the UPDRS score, we intended to train the model equally using videos with various severities of PD symptoms. Then, all the video data were randomly divided into 80% (591 videos) for the training dataset, 10% (73 videos) for the validation dataset, and 10% (73 videos) for the test dataset, each with the same proportion of each stratified group. The training dataset was used to train the model, whereas the validation dataset was used to tune hyperparameters, such as the number of training epochs and the learning rate (lr). Finally, we used the test dataset to evaluate the model’s prediction performance using the parameters that showed the best prediction performance in the validation dataset.
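A video-level stratified split of this kind can be sketched in plain Python. This is an illustration, not the authors' procedure: the function names, the rank-based tertile assignment (which breaks ties in score arbitrarily), the rounding of stratum sizes, and the random example scores are all assumptions, so the resulting counts need not match the 591/73/73 reported above.

```python
import random


def tertile_labels(scores):
    """Label each video 0 (mild), 1 (moderate), or 2 (severe) by score rank."""
    order = sorted(range(len(scores)), key=lambda i: scores[i])
    labels = [0] * len(scores)
    for rank, i in enumerate(order):
        labels[i] = rank * 3 // len(scores)
    return labels


def stratified_split(scores, val_frac=0.1, test_frac=0.1, seed=0):
    """Split video indices into train/val/test within each severity tertile."""
    rng = random.Random(seed)
    labels = tertile_labels(scores)
    splits = {"train": [], "val": [], "test": []}
    for stratum in range(3):
        members = [i for i, lab in enumerate(labels) if lab == stratum]
        rng.shuffle(members)
        n_val = round(len(members) * val_frac)
        n_test = round(len(members) * test_frac)
        splits["val"] += members[:n_val]
        splits["test"] += members[n_val:n_val + n_test]
        splits["train"] += members[n_val + n_test:]
    return splits


# Hypothetical total UPDRS part III scores for 737 videos.
score_rng = random.Random(1)
scores = [score_rng.randint(0, 108) for _ in range(737)]
splits = stratified_split(scores)
print({name: len(idx) for name, idx in splits.items()})
```

Because the split is made over videos rather than patients, videos of the same patient can appear in more than one dataset, as the text notes.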

The model was trained to predict the total UPDRS part III score and each subscore separately; that is, we developed five distinct models, one for the total UPDRS part III score and one for each of the four subscores. The model-predicted scores were compared with the neurologist-assigned scores, and the prediction error was calculated as the mean squared error between the two. The CNN parameters were updated to reduce this mean squared error. In the previous report [6], the same model was trained using the “Kinetics” video dataset; therefore, we assumed that the already-trained model could capture basic visual features and their temporal patterns to recognize 400 different human actions. Based on preliminary evaluations, we implemented a warm-start strategy using the parameter values of this pretrained model as initial values. We fine-tuned those parameters by gradually training submodules with a small lr according to the following schedule: epochs 1–20, only the final fully connected layer, which was randomly initialized, was trained with lr = 0.001; epochs 21–50, the fully connected layer and 3D-Net module were trained with lr = 0.0005; epochs 51–70, all the parameters of the model were trained with lr = 0.0001; and epochs 71–100, all the parameters of the model were trained with lr = 0.00001. For parameter optimization, we used the Adam optimizer with a weight decay of 0.0005 and a mini-batch size of 8. We also applied the following data augmentation techniques in every training epoch:

- random horizontal flip (flipping the videos with a 50% probability).
- random rotation (rotating the videos within 5°).
- random color jitter (changing the color values of the videos within 50%).
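The staged fine-tuning schedule and the augmentation settings described above can be written down as plain data plus two small helpers. This is a sketch under stated assumptions, not the authors' implementation: the names `SCHEDULE`, `stage_for_epoch`, and `sample_augmentation` and the part labels `fc`, `net3d`, and `net2d` are hypothetical, "within 5°" is read as an angle in [-5, 5], "within 50%" is read as a multiplicative factor in [0.5, 1.5], and one set of parameters is assumed to be drawn per clip so that all 16 frames are transformed identically.

```python
import random

# (first epoch, last epoch, learning rate, trainable parts), as listed in the text.
SCHEDULE = [
    (1, 20, 1e-3, ("fc",)),
    (21, 50, 5e-4, ("fc", "net3d")),
    (51, 70, 1e-4, ("fc", "net3d", "net2d")),
    (71, 100, 1e-5, ("fc", "net3d", "net2d")),
]


def stage_for_epoch(epoch):
    """Return (learning rate, trainable parts) for a 1-based epoch number."""
    for first, last, lr, trainable in SCHEDULE:
        if first <= epoch <= last:
            return lr, trainable
    raise ValueError(f"epoch {epoch} is outside the 1-100 schedule")


def sample_augmentation(rng):
    """Draw one set of augmentation parameters for a whole clip (assumed ranges)."""
    return {
        "flip": rng.random() < 0.5,             # horizontal flip with 50% probability
        "angle_deg": rng.uniform(-5.0, 5.0),    # rotation within 5 degrees
        "color_scale": rng.uniform(0.5, 1.5),   # color values changed within 50%
    }


rng = random.Random(0)
for epoch in (1, 21, 51, 71):
    print(epoch, stage_for_epoch(epoch), sample_augmentation(rng))
```

In PyTorch, a stage like this is commonly applied by setting `requires_grad` to `False` on the parameters of the frozen submodules and updating the learning rate of the optimizer at each stage boundary.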

We used the Python (version 3.8.8) programming language and PyTorch deep learning library (version 1.5.1) [

Data Availability

The video data are not available for public access because of patient privacy concerns. All other anonymized data can be provided by the corresponding author on reasonable request.

Abbreviations

- CNN: Convolutional neural network
- DBS: Deep brain stimulation
- LEDD: Levodopa equivalent daily dose
- lr: Learning rate
- MAE: Mean absolute error
- PD: Parkinson’s disease
- R2: Coefficient of determination
- SD: Standard deviation
- UPDRS: Unified Parkinson’s Disease Rating Scale

References

1. Nussbaum RL, Ellis CE. Alzheimer’s disease and Parkinson’s disease. N Engl J Med. 2003;348:1356–64.
2. Kalia LV, Lang AE. Parkinson’s disease. Lancet. 2015;386:896–912.
3. Bloem BR, Dorsey ER, Okun MS. The coronavirus disease 2019 crisis as catalyst for telemedicine for chronic neurological disorders. JAMA Neurol. 2020;77:927–28.
4. Ohannessian R, Duong TA, Odone A. Global telemedicine implementation and integration within health systems to fight the COVID-19 pandemic: a call to action. JMIR Public Health Surveill. 2020;6:e18810.
5. Kidziński Ł, Yang B, Hicks JL, Rajagopal A, Delp SL, Schwartz MH. Deep neural networks enable quantitative movement analysis using single-camera videos. Nat Commun. 2020;11:4054.
6. Zolfaghari M, Singh K, Brox T. ECO: efficient convolutional network for online video understanding. Lecture Notes in Computer Science. Proceedings of the European Conference on Computer Vision (ECCV). 2018:713–30.
7. Martínez-Martín P, Gil-Nagel A, Gracia LM, Gómez JB, Martínez-Sarriés J, Bermejo F. Unified Parkinson’s Disease Rating Scale characteristics and structure. The Cooperative Multicentric Group. Mov Disord. 1994;9:76–83.
8. Hughes AJ, Daniel SE, Kilford L, Lees AJ. Accuracy of clinical diagnosis of idiopathic Parkinson’s disease: a clinico-pathological study of 100 cases. J Neurol Neurosurg Psychiatry. 1992;55:181–4.
9. Litvan I, Bhatia KP, Burn DJ, Goetz CG, Lang AE, McKeith I, et al. Movement Disorders Society scientific issues committee report: SIC Task Force appraisal of clinical diagnostic criteria for parkinsonian disorders. Mov Disord. 2003;18:467–86.
10. Tomlinson CL, Stowe R, Patel S, Rick C, Gray R, Clarke CE. Systematic review of levodopa dose equivalency reporting in Parkinson’s disease. Mov Disord. 2010;25:2649–53.
11. Schade S, Mollenhauer B, Trenkwalder C. Levodopa equivalent dose conversion factors: an updated proposal including opicapone and safinamide. Mov Disord Clin Pract. 2020;7:343–5.
12. Kay W, Carreira J, Simonyan K, Zhang B, Hillier C, Vijayanarasimhan S, et al. The kinetics human action video dataset. arXiv. 2017. https://doi.org/10.48550/arXiv.1705.06950.
13. Ioffe S, Szegedy C. Batch normalization: accelerating deep network training by reducing internal covariate shift. Presented at the 32nd International Conference on Machine Learning, Lille, France; vol. 2015; 2015.
14. Tran D, Ray J, Shou Z, Chang SF, Paluri M. ConvNet architecture search for spatiotemporal feature learning. arXiv. 2017. https://doi.org/10.48550/arXiv.1708.05038.
15. Paszke A, Gross S, Massa F, Lerer A, Bradbury J, Chanan G, et al. PyTorch: an imperative style, high-performance deep learning library. arXiv. 2019. https://doi.org/10.48550/arXiv.1912.01703.
16. Cubo E, Gabriel-Galán JMT, Martínez JS, Alcubilla CR, Yang C, Arconada OF, et al. Comparison of office-based versus home web-based clinical assessments for Parkinson’s disease. Mov Disord. 2012;27:308–11.
17. Goetz CG, Tilley BC, Shaftman SR, Stebbins GT, Fahn S, Martinez-Martin P, et al. Movement Disorder Society-Sponsored revision of the Unified Parkinson’s Disease Rating Scale (MDS-UPDRS): scale presentation and clinimetric testing results. Mov Disord. 2008;23:2129–70.
18. Stillerova T, Liddle J, Gustafsson L, Lamont R, Silburn P. Remotely assessing symptoms of Parkinson’s disease using videoconferencing: a feasibility study. Neurol Res Int. 2016;2016:4802570.
19. Cilia R, Cereda E, Akpalu A, Sarfo FS, Cham M, Laryea R, et al. Natural history of motor symptoms in Parkinson’s disease and the long-duration response to levodopa. Brain. 2020;143:2490–501.
20. Baumann CR. Epidemiology, diagnosis and differential diagnosis in Parkinson’s disease tremor. Parkinsonism Relat Disord. 2012;18:90–2.
21. Tran D, Bourdev L, Fergus R, Torresani L, Paluri M. Learning spatiotemporal features with 3d convolutional networks. Proceedings of the IEEE International Conference on Computer Vision. 2015:4489–97.
22. Carreira J, Andrew Z. Quo vadis, action recognition? a new model and the kinetics dataset. Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. 2017:6299–308.
23. Li MH, Mestre TA, Fox SH, Taati B. Vision-based assessment of parkinsonism and levodopa-induced dyskinesia with pose estimation. J Neuroeng Rehabil. 2018;15:97.
24. Sato K, Nagashima Y, Mano T, Iwata A, Toda T. Quantifying normal and parkinsonian gait features from home movies: practical application of a deep learning-based 2D pose estimator. PLoS ONE. 2019;14:e0223549.
25. Shin JH, Ong JN, Kim R, Park SM, Choi J, Kim HJ, et al. Objective measurement of limb bradykinesia using a marker-less tracking algorithm with 2D-video in PD patients. Parkinsonism Relat Disord. 2020;81:129–35.
26. Park KW, Lee EJ, Lee JS, Jeong J, Choi N, Jo S, et al. Machine learning-based automatic rating for cardinal symptoms of Parkinson disease. Neurology. 2021;96:e1761–9.
27. Silva de Lima AL, Smits T, Darweesh SKL, Valenti G, Milosevic M, Pijl M, et al. Home-based monitoring of falls using wearable sensors in Parkinson’s disease. Mov Disord. 2020;35:109–15.
28. Rovini E, Maremmani C, Cavallo F. How wearable sensors can support Parkinson’s disease diagnosis and treatment: a systematic review. Front Neurosci. 2017;11:555.
29. Kubota KJ, Chen JA, Little MA. Machine learning for large-scale wearable sensor data in Parkinson’s disease: concepts, promises, pitfalls, and futures. Mov Disord. 2016;31:1314–26.
30. Hoops S, Nazem S, Siderowf AD, Duda JE, Xie SX, Stern MB, et al. Validity of the MoCA and MMSE in the detection of MCI and dementia in Parkinson disease. Neurology. 2009;73:1738–45.

Acknowledgements

Not applicable.

Funding

This work was supported by JSPS KAKENHI [grant number JP22K20843] and Grants-in-Aid from the Research Committee of CNS Degenerative Diseases; Research on Policy Planning and Evaluation for Rare and Intractable Diseases; and Health, Labour and Welfare Sciences Research Grants, the Ministry of Health, Labour and Welfare, Japan [grant number 20FC1049]. The funding source had no role in study design; in the collection, analysis, and interpretation of data; in the writing of the report; or in the decision to submit the article for publication.

Author information

Contributions

KE acquired the data and was a major contributor to writing the manuscript. SS, II, MM, and TK treated the patients with Parkinson’s disease and acquired data. IT, HY, and IY critically revised the manuscript for important intellectual content. All authors read and approved the final manuscript.

Ethics declarations

Competing interests

The authors declare no competing interests.

Ethics approval and consent to participate

The study protocol was approved by the institutional review board of the Hokkaido University Hospital (approval number: 020–0446), and the requirement for informed consent was waived owing to the retrospective nature of the study. Procedures involving experiments on human participants were performed in accordance with the ethical standards of the Committee on Human Experimentation of the institution in which the experiments were conducted.

Consent for publication

Not applicable.

Additional information

Publisher’s Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Electronic supplementary material

Additional file 1

Supporting Table S1. Demographic data for each patient (N = 74). Demographic data for each patient and the MMSE and LEDD scores for each patient.

Additional file 2

Supporting Table S2. Number of videos and the Unified Parkinson’s Disease Rating Scale scores for each patient (N = 74). Number of videos and the total UPDRS score for each patient, as well as the tremor, rigidity, bradykinesia, and axial subscores.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/. The Creative Commons Public Domain Dedication waiver (http://creativecommons.org/publicdomain/zero/1.0/) applies to the data made available in this article, unless otherwise stated in a credit line to the data.

About this article

Cite this article

Eguchi, K., Takigawa, I., Shirai, S. et al. Gait video-based prediction of unified Parkinson’s disease rating scale score: a retrospective study. BMC Neurol 23, 358 (2023). https://doi.org/10.1186/s12883-023-03385-2

Keywords