Abstract

Decision-making software mainly based on Machine Learning (ML) may contain fairness issues (e.g., providing favourable treatment to certain people rather than others based on sensitive attributes such as gender or race). Various mitigation methods have been proposed to automatically repair fairness issues to achieve fairer ML software and help software engineers to create responsible software. However, existing bias mitigation methods trade accuracy for fairness (i.e., they accept a reduction in accuracy in exchange for better fairness). In this paper, we present a novel search-based method for repairing ML-based decision-making software to simultaneously increase both its fairness and accuracy. As far as we know, this is the first bias mitigation approach based on multi-objective search that aims to repair fairness issues in binary classification methods without trading away accuracy. We apply our approach to two widely studied ML models in the software fairness literature (i.e., Logistic Regression and Decision Trees), and compare it with seven publicly available state-of-the-art bias mitigation methods by using three different fairness measurements. The results show that our approach successfully increases both accuracy and fairness for 61% of the cases studied, whereas the state-of-the-art methods always decrease accuracy when attempting to reduce bias. With our proposed approach, software engineers who were previously concerned with accuracy losses when considering fairness are now able to improve the fairness of binary classification models without sacrificing accuracy.


1 Introduction

Discrimination occurs when a decision about a person is made based on sensitive attributes such as race or gender rather than merit. This suppresses opportunities of deprived groups or individuals (e.g., in education, or finance) (Kamiran et al. 2012, 2018). While software systems do not explicitly incorporate discrimination, they are not spared from biased decisions and unfairness. For example, Machine Learning (ML) software, which nowadays is widely used in critical decision-making software such as justice risk assessment (Angwin et al. 2016; Berk et al. 2018) and pedestrian detection for autonomous driving systems (Li et al. 2023), has been shown to exhibit discriminatory behaviours (Pedreshi et al. 2008). Such discriminatory behaviours can be highly detrimental, affecting human rights (Mehrabi et al. 2019), profit and revenue (Mikians et al. 2012), and can also fall under regulatory control (Pedreshi et al. 2008; Chen et al. 2019; Romei and Ruggieri 2011). To combat this, software fairness aims to provide algorithms that operate in a non-discriminatory manner towards humans (Friedler et al. 2019).

Due to its importance as a non-functional property, software fairness has recently received a lot of attention in the software engineering literature (Zhang et al. 2020; Brun and Meliou 2018; Zhang and Harman 2021; Horkoff 2019; Chakraborty et al. 2020; Tizpaz-Niari et al. 2022; Hort et al. 2021; Chen et al. 2022b). Indeed, it is the duty of software engineers and researchers to create responsible software.

A simple approach for repairing fairness issues in ML software is the removal of sensitive attributes (i.e., attributes that constitute discriminative decisions, such as age, gender, or race) from the training data. However, this has been shown to be insufficient to combat unfairness and discriminative classification, owing to the correlation of other attributes with sensitive attributes (Kamiran and Calders 2009; Calders et al. 2009; Pedreshi et al. 2008). Therefore, more advanced methods have been proposed in the literature, which apply bias mitigationFootnote 1 at different stages of the software development process. Bias mitigation has been applied before training software models (pre-processing) (Calmon et al. 2017; Feldman et al. 2015; Chakraborty et al. 2020; Kamiran and Calders 2012), during the training process (in-processing) (Zhang et al. 2018; Kearns et al. 2018; Celis et al. 2019; Berk et al. 2017; Zafar et al. 2017), and after a software model has been trained (post-processing) (Pleiss et al. 2017; Hardt et al. 2016; Calders and Verwer 2010; Kamiran et al. 2010, 2018). However, there are limitations to the applicability of these methods, and it has been shown that they often reduce bias at the cost of accuracy (Kamiran et al. 2012, 2018), known as the price of fairness (Berk et al. 2017).

In this paper, we introduce the use of a multi-objective search-based procedure to mutate binary classification models in a post-processing stage, in order to automatically repair software fairness and accuracy issues and conduct a thorough empirical study to evaluate its feasibility and effectiveness. Here, binary classification models represent an important component of fairness research, with hundreds of publications addressing their fairness improvements (Hort et al. 2023a). We apply our method on two widely-studied binary classification models in ML software fairness research, namely Logistic Regression (Feldman et al. 2015; Chakraborty et al. 2020; Zafar et al. 2017; Kamiran et al. 2012; Kamishima et al. 2012; Kamiran et al. 2018) and Decision Trees (Kamiran et al. 2010, 2012, 2018; Žliobaite et al. 2011), which belong to two different families of classifiers. These two models are also widely adopted in practice on fairness-critical scenarios, mainly due to their advantages in explainability.Footnote 2 We investigate the performance on four widely adopted datasets, and measure the fairness with three widely-adopted fairness metrics. Furthermore, we benchmark our method with all existing post-processing methods publicly available from the popular IBM AIF360 framework (Bellamy et al. 2018), as well as three pre-processing and one in-processing bias mitigation method.

The results show that our approach is able to improve both accuracy and fairness of Logistic Regression and Decision Tree classifiers in 61% of the cases. The three post-processing bias mitigation methods we studied conform to the fairness-accuracy trade-off and therefore decrease accuracy when attempting to mitigate bias. Among all post-processing repair methods, our approach achieves the highest accuracy in 100% of the cases, while also achieving the lowest bias in 33% of these. When compared to pre- and in-processing bias mitigation methods, our approach shows a better or comparable performance (i.e., it is not outperformed by the existing methods) in 87% of the evaluations. With our approach, engineers are able to develop fairer binary classification models without the need to sacrifice accuracy.

In summary, we make the following contributions:

- We propose a novel application of multi-objective search to debias classification models in a post-processing fashion.
- We carry out a thorough empirical study to evaluate the applicability and effectiveness of our search-based post-processing approach to two different classification models (Logistic Regression and Decision Trees) on four publicly available datasets, and benchmark it against seven state-of-the-art bias mitigation methods according to three fairness metrics.

Additionally, we make our scripts and experimental results publicly available to allow for replication and extension of our work (Hort et al. 2023d).

The rest of the paper is organized as follows. Section 2 provides the background and related work on fairness research, including fairness metrics and bias mitigation methods. Section 3 introduces our approach that is used to adapt trained classification models. The experimental design is described in Section 4. Threats are outlined in Section 4.5, while experiments and results are presented in Section 5. Section 6 concludes.

2 Background and Related Work

This section introduces some background on the fairness of software systems, measuring fairness, and bias mitigation methods that have been proposed to improve the fairness of software systems.

2.1 Software Fairness

In recent years, the fairness of software systems has risen in importance, and gained attention from both the software engineering (Zhang et al. 2020; Brun and Meliou 2018; Zhang and Harman 2021; Horkoff 2019; Chakraborty et al. 2020; Hort et al. 2021; Chen et al. 2022b; Sarro 2023; Hort et al. 2023c) and the machine learning research communities (Berk et al. 2017; Kamishima et al. 2012; Kamiran et al. 2012; Calders and Verwer 2010).

While software systems can be designed to reduce discrimination, previous work has observed that this is frequently accompanied by a reduction of the accuracy or correctness of said models (Kamiran and Calders 2012; Feldman et al. 2015; Corbett-Davies et al. 2017; Hort et al. 2023c).

Multi-objective approaches can improve this fairness-accuracy trade-off (Sarro 2023). Hort et al. (2023c) showed that multi-objective evolutionary search is effective at simultaneously improving the semantic correctness and fairness of word embedding models. Chen et al. (2022b) proposed MAAT, a novel ensemble approach that combines ML models optimized for different objectives: fairness and ML performance. Such a combination allows MAAT to outperform state-of-the-art methods in 92.2% of the overall cases evaluated. Chakraborty et al. (2020) also integrated bias mitigation into the design of ML software by leveraging a multi-objective search for hyperparameter tuning of a Logistic Regression model. This work has inspired our approach to integrate bias mitigation into the software development process, however at a different stage. While Chakraborty et al. (2020) considered pre- and in-processing approaches for bias mitigation, we propose a post-processing approach. Moreover, our approach is not focused on a single classification model, but can be transferred to multiple ones, as we show by using it to improve Logistic Regression and Decision Tree models. Lastly, while their multi-objective optimization does not prevent the improvement of accuracy and fairness at the same time, our approach demands the improvement of both. Perera et al. (2022) proposed a search-based fairness testing approach for testing regression-based machine learning systems, and their empirical results revealed that it is effective at reducing group discrimination in Emergency Department wait-time prediction software.

To ensure fair software, testing methods have also been proposed to address individual discrimination (Horkoff 2019; Zhang et al. 2020; Zhang and Harman 2021; Ma et al. 2016).

Hardt et al. (2016) proposed a post-processing method based on equalized odds. A classifier is said to satisfy equalized odds when its predictions are independent of the protected attribute conditional on the true label (i.e., true positive and false positive rates are equal across the privileged and unprivileged groups). Given a trained classification model, they used linear programming to derive an unbiased one. Another variant of the equalized odds bias mitigation method has been proposed by Pleiss et al. (2017). In contrast to the original equalized odds method, they used calibrated probability estimates of the classification model (e.g., if 100 instances receive \(p=0.6\), then 60% of them should belong to the favorable label 1).

Our proposed post-processing approach differs from the leaf relabeling approach proposed by Kamiran et al. (2010), as we apply changes to the classification model only if they increase accuracy and reduce bias. In other words, our approach is the first to deliberately optimize classification models for accuracy and fairness at the same time, unlike existing methods that are willing to reduce bias at the cost of accuracy (Berk et al. 2017). Overall, we apply a search procedure rather than deterministic approaches (Kamiran et al. 2010, 2012, 2018; Hardt et al. 2016; Pleiss et al. 2017) and we do not assume that bias reduction has to come with a decrease in accuracy. To the best of our knowledge, our proposal is the first to improve classification models according to both fairness and accuracy by mutating the classification model itself, rather than manipulating the training data or the predictions.

2.3 Fairness Measurement

There are two primary methods to measure fairness of classification models: individual fairness and group fairness (Speicher et al. 2018). While individual fairness is concerned with an equal treatment of similar individuals (Dwork et al. 2012), group fairness requires equal treatment of different population groups. Such groups are divided by protected attributes, such as race, age or gender. Thereby, one group is said to be privileged if it is more likely to get an advantageous outcome than another, unprivileged group.

Due to the difficulty of determining the degree of similarity between individuals (Jacobs and Wallach 2021), it is common in the literature to focus on group fairness metrics. In particular, we investigate three group fairness metrics (all publicly available in the AIF360 framework (Bellamy et al. 2018)) to measure the fairness of a classification model: Statistical Parity Difference, Average Odds Difference, and Equal Opportunity Difference. These metrics are frequently used in the domain of software fairness (Zhang and Harman 2021; Chakraborty et al. 2020, 2021; Hort et al. 2021) and are usually optimized by existing bias mitigation methods.

In the following, we use \(\hat{y}\) to denote a prediction of a classification model, D to denote a group (privileged or unprivileged), and Pr to denote probability.

The Statistical Parity Difference (SPD) requires that predictions are made independently of protected attributes (Zafar et al. 2017). Therefore, the rates of favourable and unfavourable classifications should be identical for each demographic group over the whole population (Dwork et al. 2012):

$$SPD = Pr(\hat{y} = 1 \mid D = unprivileged) - Pr(\hat{y} = 1 \mid D = privileged) \tag{1}$$

The Average Odds Difference (AOD) averages the differences in False Positive Rate (FPR) and True Positive Rate (TPR) among privileged and unprivileged groups (Hardt et al. 2016):

$$AOD = \frac{1}{2}\left[ (FPR_{D = unprivileged} - FPR_{D = privileged}) + (TPR_{D = unprivileged} - TPR_{D = privileged}) \right] \tag{2}$$

The Equal Opportunity Difference (EOD) corresponds to the TPR difference (Hardt et al. 2016):

$$EOD = TPR_{D = unprivileged} - TPR_{D = privileged} \tag{3}$$

Following previous work on fairness in SE (Chakraborty et al. 2020; Zhang and Harman 2021), we are interested in the absolute values of these metrics. Thereby, each metric is minimized at zero, indicating that no bias is residing in a classification model.
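The three metrics described above can be computed directly from predictions, true labels, and group membership. The following is a minimal sketch rather than the AIF360 implementation: the function names, the 0/1 encoding of labels and groups, and the "unprivileged minus privileged" sign convention are assumptions made for illustration (the sign is immaterial once absolute values are taken).

```python
def _rate(numerator, denominator):
    # Guard against empty groups; returning 0.0 here is an illustrative choice.
    return numerator / denominator if denominator else 0.0


def group_rates(y_true, y_pred, group, value):
    """Return (favourable prediction rate, TPR, FPR) for one group."""
    idx = [i for i, g in enumerate(group) if g == value]
    predicted_pos = sum(1 for i in idx if y_pred[i] == 1)
    true_pos = sum(1 for i in idx if y_pred[i] == 1 and y_true[i] == 1)
    false_pos = sum(1 for i in idx if y_pred[i] == 1 and y_true[i] == 0)
    actual_pos = sum(1 for i in idx if y_true[i] == 1)
    actual_neg = sum(1 for i in idx if y_true[i] == 0)
    return (_rate(predicted_pos, len(idx)),
            _rate(true_pos, actual_pos),
            _rate(false_pos, actual_neg))


def fairness_metrics(y_true, y_pred, group, privileged=1, unprivileged=0):
    """Absolute SPD, AOD and EOD; 0 means no measured bias."""
    pr_u, tpr_u, fpr_u = group_rates(y_true, y_pred, group, unprivileged)
    pr_p, tpr_p, fpr_p = group_rates(y_true, y_pred, group, privileged)
    spd = pr_u - pr_p
    aod = 0.5 * ((fpr_u - fpr_p) + (tpr_u - tpr_p))
    eod = tpr_u - tpr_p
    return {"SPD": abs(spd), "AOD": abs(aod), "EOD": abs(eod)}


# Toy data: four privileged (1) and four unprivileged (0) individuals.
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0]
group = [1, 1, 1, 1, 0, 0, 0, 0]
metrics = fairness_metrics(y_true, y_pred, group)
```

In this toy example the privileged group receives the favourable prediction far more often than the unprivileged group, so all three metrics are clearly above zero.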

3 Proposed Approach

This section introduces the search-based procedure we propose for mutating classification models to simultaneously improve both accuracy and fairness. In addition, we describe implementation details for two classification models (Logistic Regression, Decision Trees) to perform such a procedure.

3.1 Procedure

Our search-based post-processing procedure aims to iteratively mutate a trained classification model in order to improve both accuracy and fairness at the same time. For this purpose, we require a representation of the classification model that allows changes (“mutation”) to the prediction function. To simplify the mutation process, we apply mutation incrementally (i.e., repeatedly changing small aspects of the classifier). Such a procedure is comparable to the local optimisation algorithm hill climbing. Starting from an original solution, hill climbing evaluates neighboring solutions and selects one only if it improves on the original fitness (Harman et al. 2010). We mutate a trained classification model clf with the goal of achieving improvements in accuracy and fairness. In this context, the fitness function measures the accuracy and fairness of clf on a validation dataset (i.e., a dataset that has not been used during the initial training of clf). “Accuracy” (acc) refers to the standard accuracy in machine learning, which is the ratio of correct predictions to the total number of predictions. To measure fairness, we use the three fairness metrics introduced in Section 2.3 (SPD, AOD, EOD).

Algorithm 1 outlines our procedure to improve accuracy and fairness of a trained classification model clf. In line 4, fitness(clf) determines the fitness of the modified classification model in terms of accuracy (\(acc'\)) and a fairness metric (\(fair'\)). In our empirical study we experiment with three different fairness metrics (see Section 2.3), one at a time. If desired, fitness(clf) can also be modified to take multiple fairness metrics into account simultaneously.

We only apply a mutation if the accuracy and fairness of the mutated model (\(acc',fair'\)) are better than the accuracy and fairness of the previous classification model (acc, fair) (Line 5). If that is not the case, the mutation is reverted (\(undo\_mutation\)) and the procedure continues until the terminal condition is met. A mutation of the trained model at each iteration of the search process that leads to an improvement in one objective (either accuracy or fairness) will almost certainly change the other objective at the same time. If the other objective is not worsened, the change is kept; otherwise, the change is reverted. This effect is accumulated over each iteration.

To show the generalizability of the approach, and in line with previous work (Kamiran et al. 2012, 2018; Chakraborty et al. 2020), we use the default configuration, as provided by scikit-learn (Pedregosa et al. 2011), to train the classification models before applying our post-processing procedure.
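The procedure described in this section can be sketched as a small hill-climbing loop. This is an illustrative sketch, not the authors' implementation: the names `hill_climb`, `mutate`, `undo` and `fitness`, as well as the toy model and toy fitness function, are hypothetical. The acceptance rule follows the stricter reading of the text, keeping a mutation only when both accuracy and the bias metric improve.

```python
import random


def hill_climb(model, mutate, undo, fitness, iterations=2500, seed=0):
    """Mutate `model` in place; keep a mutation only if it improves both
    accuracy (higher is better) and the bias metric (lower is better)."""
    rng = random.Random(seed)
    acc, fair = fitness(model)
    for _ in range(iterations):          # terminal condition: iteration budget
        backup = mutate(model, rng)      # apply one small random change
        new_acc, new_fair = fitness(model)
        if new_acc > acc and new_fair < fair:
            acc, fair = new_acc, new_fair   # keep the mutation
        else:
            undo(model, backup)             # revert the mutation
    return acc, fair


# --- Hypothetical toy problem, only to make the sketch executable ---
def toy_mutate(model, rng):
    i = rng.randrange(len(model))
    old = model[i]
    model[i] = old + rng.uniform(-0.5, 0.5)
    return i, old


def toy_undo(model, backup):
    i, old = backup
    model[i] = old


def toy_fitness(model):
    # Stand-in for (accuracy, bias) measured on a validation set.
    distance = sum(abs(w - 3.0) for w in model)
    return 1.0 / (1.0 + distance), distance


toy_model = [0.0, 5.0]
start_acc, start_bias = toy_fitness(toy_model)
final_acc, final_bias = hill_climb(toy_model, toy_mutate, toy_undo,
                                   toy_fitness, iterations=200)
```

Because rejected mutations are reverted, the returned fitness always describes the model as it stands after the loop, and it is never worse than the starting point in either objective.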

3.2 Logistic Regression

Representation. Logistic Regression (LR) is a linear classifier that can be used for binary classification. Given training data, LR determines the best weights for its coefficients. Below, we illustrate the computation of the LR prediction with four tuneable weights (\(b_0,b_1,b_2,b_3\)). First, Equation 4 presents the computation of a prediction with a regular linear regression model:

$$Y = b_0 + b_1 x_1 + b_2 x_2 + b_3 x_3 \tag{4}$$

To make a prediction, LR passes this linear prediction through a sigmoid function (Equation 5):

$$P(Y) = \frac{1}{1 + e^{-Y}} \tag{5}$$

This prediction function determines the binary label of a 3-dimensional input (\(x_1,x_2,x_3\)). In a binary classification scenario, we treat predictions \(\ge 0.5\) as label 1, and 0 otherwise.

This shows that the binary classification is determined by n variables (\(b_0 \dots b_{n-1}\)). To represent an LR model, we store the n coefficients in an n-dimensional vector.

Mutation

Given that an LR classification model can be represented by a one-dimensional vector, we mutate single vector elements to create mutated variants of the model. In particular, we pick an element at random and multiply it by a value within a range of \(\{-10\%, 10\%\}\). We analyse different degrees of noise and mutation operators for LR models in Section 5.4.
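The vector representation and the mutation step can be illustrated with a few lines of Python. This is a sketch under stated assumptions: the function names are hypothetical, and the mutation is interpreted here as rescaling one randomly chosen coefficient by a factor of \(1 + \delta\) with \(\delta\) drawn uniformly from \([-0.1, 0.1]\), which is one plausible reading of the ±10% range.

```python
import math
import random


def predict_proba(weights, x):
    """weights = [b0, b1, ..., bk]; x = [x1, ..., xk]."""
    linear = weights[0] + sum(b * xi for b, xi in zip(weights[1:], x))
    return 1.0 / (1.0 + math.exp(-linear))   # sigmoid of the linear prediction


def predict(weights, x):
    # Predictions >= 0.5 are treated as label 1, and 0 otherwise.
    return 1 if predict_proba(weights, x) >= 0.5 else 0


def mutate_weights(weights, rng, noise=0.10):
    """Rescale one random coefficient in place by up to +/- `noise`
    (assumed interpretation). Returns a backup so the change can be undone."""
    i = rng.randrange(len(weights))
    old = weights[i]
    weights[i] = old * (1.0 + rng.uniform(-noise, noise))
    return i, old


def undo_mutation(weights, backup):
    i, old = backup
    weights[i] = old


rng = random.Random(42)
weights = [0.5, 1.0, -2.0, 0.25]          # b0..b3 for a 3-dimensional input
backup = mutate_weights(weights, rng)      # try a mutation ...
undo_mutation(weights, backup)             # ... and revert it
```

Returning the index and previous value keeps the undo step cheap, since only a single vector element changes per mutation.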

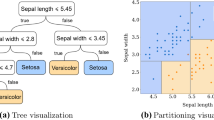

3.3 Decision Tree

Representation. Decision Trees (DT) are classification models that solve the classification task by building a tree-like structure, whose branches and leaves are created based on features of the training data. We are interested in binary DTs. In binary DTs, every interior node (i.e., every node except for the leaves) has exactly two child nodes (left and right).

Mutation

We use pruning as a means to mutate DTs. The pruning process deletes all the children of an interior node, transforming it into a leaf node, and has been shown to improve the accuracy of DT classification in previous work (Breiman et al. 1984; Quinlan 1987; Breslow and Aha 1997). In particular, we pick an interior node i at random and treat it as a leaf node by removing all subjacent child nodes. We choose to use pruning, instead of leaf relabeling, because preliminary experiments showed that pruning outperforms leaf relabeling (note that Kamiran et al. (2010) used leaf relabeling in combination with an in-processing method but not in isolation).
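The pruning mutation can be illustrated on a small hand-written tree structure. This is a sketch, not the authors' code and not the scikit-learn tree API: the `Node` class and all function names are hypothetical, and it assumes that every node stores the majority label of the training instances that reach it, so that a pruned interior node can act as a leaf.

```python
import random
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    label: int                         # majority class at this node (assumed stored)
    feature: Optional[int] = None      # split feature index (interior nodes only)
    threshold: float = 0.0
    left: Optional["Node"] = None
    right: Optional["Node"] = None

    def is_leaf(self):
        return self.left is None and self.right is None


def predict(node, x):
    while not node.is_leaf():
        node = node.left if x[node.feature] <= node.threshold else node.right
    return node.label


def interior_nodes(root):
    found, stack = [], [root]
    while stack:
        node = stack.pop()
        if not node.is_leaf():
            found.append(node)
            stack.extend([node.left, node.right])
    return found


def prune_random(root, rng):
    """Turn one random interior node into a leaf; return a backup for undo."""
    candidates = interior_nodes(root)
    if not candidates:
        return None
    node = rng.choice(candidates)
    backup = (node, node.left, node.right)
    node.left = node.right = None      # the whole subtree is cut off
    return backup


def undo_prune(backup):
    if backup is not None:
        node, left, right = backup
        node.left, node.right = left, right


tree = Node(label=0, feature=0, threshold=0.5,
            left=Node(label=0),
            right=Node(label=1, feature=1, threshold=0.5,
                       left=Node(label=0), right=Node(label=1)))
backup = prune_random(tree, random.Random(0))
undo_prune(backup)                     # revert if fitness did not improve
```

Keeping references to the removed children makes reverting a rejected mutation a constant-time operation.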

4 Experimental Setup

In this section, we describe the experimental design we carry out to assess our search-based bias repair method for binary classification models (i.e., Logistic Regression and Decision Trees). We first introduce the research questions, followed by the subjects and the experimental procedure used to answer these questions.

4.1 Research Questions

Our evaluation aims to answer the following research questions:

RQ1: To what extent can the proposed search-based approach be used to improve both accuracy and fairness of binary classification models?

To answer this question, we apply our post-processing approach to LR and DTs (Section 3) on four datasets with a total of six protected attributes (Section 4.2).

The search procedure is guided by accuracy and each of the three fairness metrics (SPD, AOD, EOD) separately. Therefore, for each classification model, we perform 3 (fairness metrics) \(\times\) 6 (protected attributes across the four datasets) = 18 experiments. For each of the fairness metrics, we mutate the classification models and measure changes in accuracy and the particular fairness metric used to guide the search (e.g., we post-process LR based on accuracy and SPD). We then determine whether the improvements in accuracy and fairness (as explained in Section 3) achieved by mutating the classification models are statistically significant, in comparison to the performance of the default classification model.

Furthermore, we compare optimization results from post-processing with existing bias mitigation methods:

RQ2: How does the proposed search-based approach compare to existing bias mitigation methods?

We address this research question in two steps. First, we perform a comparison with post-processing bias mitigation methods, which are applied at the same stage of the development process as our approach (RQ2.1). Afterwards, we compare our post-processing approach to pre- and in-processing methods (RQ2.2).

To answer both questions (RQ2.1 and RQ2.2), we benchmark our approach against existing and widely-used bias mitigation methods: three post-processing methods, three pre-processing methods and one in-processing method, which are all publicly available in the AIF360 framework (Bellamy et al. 2018). In particular, we applied these existing bias mitigation methods to LR and DTs on the same set of problems (i.e., the four datasets used also for RQ1 and RQ3) in order to compare their fairness-accuracy trade-off with the one achieved by our proposed approach. A description of the benchmarking bias mitigation methods is provided in Section 4.3, whereas the datasets used are described in Section 4.2.

While the objectives considered during the optimization procedure are improved, this has been shown to carry detrimental effects on other objectives (Ferrucci et al. 2010; Chakraborty et al. 2020). Therefore, we determine the impact that optimizing for one fairness metric has on the other two fairness metrics, which have not been considered during the optimization procedure:

RQ3: What is the impact of post-processing guided by a single fairness metric on other fairness metrics?

To answer this question, we apply our post-processing method on LR and DTs. While optimizing for each of the three fairness metrics, we measure changes of the other two. We are then able to compare the fairness metrics before and after the optimization process, and visualize changes using boxplots. Moreover, we can determine whether there are statistically significant changes to “untouched” fairness metrics, which are not optimized for.

We perform additional experiments to gain insights on the importance of parameters when applying our post-processing method (i.e., terminal condition and mutation operations), and the performance of advanced binary classification models (e.g., neural networks) in comparison to Logistic Regression and Decision Tree classifiers. The investigation of parameter choices is addressed in Section 5.4, while advanced classification models are investigated in Section 5.5.

4.2 Datasets

We perform our experiments on four real-world datasets used in previous software fairness work (Chakraborty et al. 2020; Zhang and Harman 2021) with a total of six protected attributes.

The Adult Census Income (Adult) (Kohav 2023) dataset contains financial and demographic information about individuals from the 1994 U.S. census. The favourable label is determined by whether an individual's income is above 50 thousand dollars a year.

The Bank Marketing (Bank) (Moro et al. 2014) dataset contains details of a direct marketing campaign performed by a Portuguese banking institution. Predictions are made to determine whether potential clients are likely to subscribe to a term deposit after receiving a phone call. The dataset also includes information on the education and type of job of individuals.

The Correctional Offender Management Profiling for Alternative Sanctions (COMPAS) (propublica 2023) dataset contains the criminal history and demographic information of offenders in Broward County, Florida. To indicate whether a previous offender is likely to re-offend, they receive a recidivism label.

The Medical Expenditure Panel Survey (MEPS19) represents a large-scale survey of families and individuals, their medical providers, and employers across the United States.Footnote 3 The favourable label is determined by “Utilization” (i.e., how frequently individuals visited medical providers).

In Table 1, we provide the following information about the four datasets: number of rows and features, the favourable label and majority class. In addition, we list the protected attributes for each dataset (as provided by the AIF360 framework (Bellamy et al. 2018)), which are investigated in our experiments, and the respective privileged and unprivileged groups for each protected attribute.

4.3 Benchmark Bias Mitigation Methods

As our proposed method belongs to the category of post-processing methods, we compare it with all the state-of-the-art post-processing bias mitigation methods made publicly available in the AIF360 framework (Bellamy et al. 2018), as follows (Section 2.2):

- Reject Option Classification (ROC) (Kamiran et al. 2012, 2018);
- Equalized odds (EO) (Hardt et al. 2016);
- Calibrated Equalized Odds (CO) (Pleiss et al. 2017).

AIF360 (Bellamy et al. 2018) provides ROC and CO with the choice of three different fairness metrics to guide the bias mitigation procedure (Section 2.3). ROC can be applied with SPD, AOD, and EOD. CO can be applied with False Negative Rate (FNR), False Positive Rate (FPR), and a “weighted” combination of both. We apply both ROC and CO with each of the available fairness metrics. EO does not provide choices for fairness metrics to users.

While our focus lies on the empirical evaluation of our post-processing approach against approaches of the same type, we also consider a comparison with pre- and in-processing methods (RQ2.2, Section 5.5). In particular, we compare our approach to the following pre-processing and in-processing methods:

- Optimized Pre-processing (OP) (Calmon et al. 2017): Probabilistic transformation of features and labels in the dataset.
- Learning Fair Representation (LFR) (Zemel et al. 2013): Intermediate representation learning to obfuscate protected attributes.
- Reweighing (RW) (Kamiran and Calders 2012; Calders et al. 2009): Reweighing the importance (weight) of instances from the privileged and unprivileged group in the dataset.
- Exponentiated gradient reduction (RED) (Agarwal et al. 2018): Two player game to find the best randomized classifier under fairness constraints.

The three pre-processing methods (OP, LFR, RW) are classification model-agnostic and can easily be applied to Logistic Regression and Decision Tree models (i.e., training data can be changed independently of the classification model used). In contrast, in order to apply RED, the in-processing approach proposed by Agarwal et al. (2018), one needs to provide a classification model (Logistic Regression or Decision Tree) and a fairness notion. In our case, we apply RED with three different fairness notions: “DemographicParity” (\(RED_{DP}\)), “EqualizedOdds” (\(RED_{EO}\)), “TruePositiveRate” (\(RED_{TPR}\)). These three notions coincide with our evaluation metrics, SPD, AOD and EOD, respectively.

4.4 Validation and Evaluation Criteria

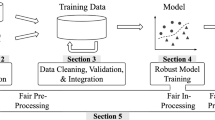

To validate the effectiveness of our post-processing approach to improve accuracy and fairness of binary classification models, we apply it to LR and DT. Since our optimization approach applies random mutations, we expect variation in the results. Figure 1 illustrates the empirical evaluation procedure of our method for a single data split. First, we split the data into three sets: training (70%), validation (15%), test (15%).Footnote 4 To mitigate variation, we apply each bias mitigation method, including our newly proposed approach, on 50 different data splits.

The training data is used to create a classifier which we can post-process. Once a classifier is trained (i.e., Logistic Regression or Decision Tree), we apply our optimization approach 30 times (Step 2).Footnote 5 To then determine the performance (accuracy and fairness) of our approach on a single data split, we compute the Pareto-optimal setFootnote 6 based on the performance on the validation set. Once we obtain the Pareto-set of optimized classification models based on their performance on the validation set, we average their performance on the test set. Performance on the test set (i.e., accuracy and fairness) is used to compare different bias mitigation methods and determine their effectiveness. Each run of our optimization approach is limited to 2,500 iterations (terminal condition, Algorithm 1). The existing post-processing methods are deterministic, and therefore applied only once for each data split.

To assess the effectiveness of our approach (RQ1) and compare it with existing bias mitigation methods (RQ2), we consider summary statistics (i.e., average accuracy and fairness), statistical significance tests, effect size measures, and Pareto-optimality. Furthermore, we use boxplots to visualize the impact of optimizing accuracy and one fairness metric on the other two fairness metrics (RQ3).

A solution a Pareto-dominates another solution b if a is not worse than b in any objective and is strictly better in at least one (Harman et al. 2010); a solution is Pareto-optimal if no other solution dominates it. We use Pareto-optimality both to measure how often our approach dominates the default classification model or is Pareto-optimal, and to plot the set of solutions found to be non-dominated (and therefore equally viable) with respect to the state-of-the-art (RQs 1-2). In the case where there are two objectives, such as ours, this leads to a two-dimensional Pareto surface.
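For the two objectives used here, dominance and the non-dominated set can be computed in a few lines. This is a minimal sketch: the function names and the example points are hypothetical, and each solution is assumed to be an (accuracy, bias) pair in which higher accuracy and lower bias are better.

```python
def dominates(a, b):
    """True if a = (accuracy, bias) Pareto-dominates b."""
    not_worse = a[0] >= b[0] and a[1] <= b[1]
    strictly_better = a[0] > b[0] or a[1] < b[1]
    return not_worse and strictly_better


def pareto_set(solutions):
    """Return the solutions that no other solution dominates."""
    return [s for s in solutions
            if not any(dominates(other, s) for other in solutions)]


# Hypothetical (accuracy, bias) results of several optimization runs.
runs = [(0.80, 0.10), (0.85, 0.12), (0.78, 0.15), (0.83, 0.08)]
front = pareto_set(runs)
```

In this example the run with the lowest accuracy and the highest bias is dominated, while the other three runs represent different trade-offs and remain in the set.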

To determine whether the differences in the results achieved by all approaches are statistically significant, we use the Wilcoxon Signed-Rank test, which is a non-parametric test that makes no assumptions about the underlying data distribution (Wilcoxon 1992). We set the confidence limit, \(\alpha \), at 0.05 and applied the Bonferroni correction for multiple hypotheses testing (\(\alpha /K\), where K is the number of hypotheses).Footnote 7 This correction is the most conservative of all corrections and its usage allows us to reduce the risk of Type I errors (i.e., incorrectly rejecting the Null Hypothesis and claiming predictability without strong evidence). In particular, depending on the RQ, we test the following null hypotheses:

(RQ1) \(H_0\): The fairness and accuracy achieved by \(approach_x\) is not improved with respect to the default classification model. The alternative hypothesis is as follows: \(H_1\): The fairness and accuracy achieved by \(approach_x\) improves with respect to the default classification model. In this context, “improved” means that the accuracy is increased and fairness metric values are decreased (e.g., a SPD of 0 indicates that there is no unequal treatment of privileged and unprivileged groups).

(RQ3) \(H_0\): Optimizing for accuracy and fairness metric \(m_1\) does not improve fairness metric \(m_2\) with respect to the default classification model. The alternative hypothesis is as follows: \(H_1\): Optimizing for accuracy and fairness metric \(m_1\) improves fairness metric \(m_2\) with respect to the default classification model. For this RQ, we summarise the results of the Wilcoxon tests by counting the number of wins, ties and losses as follows: p-value < 0.01 (win), p-value > 0.99 (loss), and 0.01 \(\le \) p-value \(\le \) 0.99 (tie), as done in previous work (Sarro et al. 2017; Kocaguneli et al. 2011; Sarro et al. 2018; Sarro and Petrozziello 2018).
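The two bookkeeping steps described above, the Bonferroni-corrected significance level and the win-tie-loss count, can be sketched as follows. The function names and the example p-values are hypothetical, and the p-values themselves are assumed to come from a Wilcoxon Signed-Rank test computed elsewhere.

```python
def bonferroni_alpha(alpha, num_hypotheses):
    """Corrected significance level alpha / K."""
    return alpha / num_hypotheses


def win_tie_loss(p_values, low=0.01, high=0.99):
    """Count wins (p < low), losses (p > high) and ties (everything else)."""
    wins = sum(1 for p in p_values if p < low)
    losses = sum(1 for p in p_values if p > high)
    ties = len(p_values) - wins - losses
    return wins, ties, losses


# Hypothetical p-values from five one-sided tests.
example_p_values = [0.001, 0.5, 0.995, 0.01, 0.99]
summary = win_tie_loss(example_p_values)            # (wins, ties, losses)
corrected = bonferroni_alpha(0.05, num_hypotheses=10)  # K = 10 is illustrative
```

Values exactly on the boundaries (0.01 and 0.99) are counted as ties, matching the inclusive tie interval stated in the text.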

In addition to evaluating statistical significance, we measure the effect size using Vargha and Delaney’s \(\hat{A}_{12}\) non-parametric measure (Vargha and Delaney 2000), which does not require the data to be normally distributed (Arcuri and Briand 2014). The \(\hat{A}_{12}\) measure compares an algorithm A with another algorithm B, to determine the probability that A performs better than B with respect to a performance measure M:

\[\hat{A}_{12} = \frac{R_1/m - (m+1)/2}{n}\]

In this formula, m and n represent the number of observations made with algorithm A and B respectively; \(R_1\) denotes the rank sum of observations made with A. If A performs better than B, \(\hat{A}_{12}\) can display one of the following effect sizes: \(\hat{A}_{12} \ge 0.72\) (large), 0.64 \(< \hat{A}_{12} < 0.72\) (medium), 0.56 \(< \hat{A}_{12} < 0.64\) (small), although these thresholds are not definitive (Sarro et al. 2016).
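A minimal Python sketch of this effect size is given below, using the rank-sum form \(\hat{A}_{12} = (R_1/m - (m+1)/2)/n\). It assumes that larger values of the performance measure are better (for a bias metric, where lower is better, the two samples would be swapped) and that ties receive average ranks. The function names and the "negligible" label for values below the small threshold are our own illustrative choices.

```python
from typing import List, Sequence


def average_ranks(values: Sequence[float]) -> List[float]:
    """Rank values from 1 to N, giving tied values the mean of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        # Extend j over the run of values tied with position i.
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1  # positions i..j are 0-based
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def a12(a: Sequence[float], b: Sequence[float]) -> float:
    """Vargha-Delaney A12: probability that a value from a exceeds one from b."""
    m, n = len(a), len(b)
    ranks = average_ranks(list(a) + list(b))
    r1 = sum(ranks[:m])  # rank sum of the observations made with A
    return (r1 / m - (m + 1) / 2) / n


def effect_size(value: float) -> str:
    """Map A12 to the labels above; the boundary value 0.64 is treated as small."""
    if value >= 0.72:
        return "large"
    if value > 0.64:
        return "medium"
    if value > 0.56:
        return "small"
    return "negligible"  # label assumed for illustration
```

For example, `a12([3, 4, 5], [1, 2])` yields 1.0 because every observation of A exceeds every observation of B, and two identical samples yield 0.5.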

4.5 Threats to Validity

The internal validity of our study relies on the confidence that the experimental results we obtained are trustworthy and correct. To alleviate possible threats to the internal validity, we applied our post-processing method and existing bias mitigation methods 50 times, under different train/validation/test splits. This allowed us to use statistical significance tests to further assess our results and findings. We have used traditional measures used in the software fairness literature to assess ML accuracy, while we recognise that alternative measures could be used to take into account data imbalance (Chen et al. 2023b; Moussa and Sarro 2022).

Threats to external validity, which relate to the generalizability of our results, are primarily concerned with the datasets, approaches and metrics we investigated. To mitigate this threat, we have considered in this study all publicly available datasets that have previously been used in the literature to solve the same problem. Using more data in the future will further increase the generalizability of our results. Furthermore, we have successfully applied our post-processing method on two inherently different classification models (Logistic Regression, Decision Trees), which strengthens the confidence that our approach could be applied to other binary classifiers. We have also explored all state-of-the-art post-processing debiasing methods in addition to three pre-processing and one in-processing method available from the AIF360 framework (Bellamy et al.

Reduction: Multiply a single vector element by a random value within a range of \(\{-noise, noise\}\).

Adjustment: Multiply a single vector element by a random value within a range of \(\{1-noise, 1+noise\}\).

Vector: Multiply each vector element by a random value within a range of \(\{1-noise, 1+noise\}\).

We investigate a total of three different levels of noise for mutation (0.05, 0.1, 0.2). While an increased number of steps should always be beneficial for improving a classification model (i.e., the chance of finding more fairness and accuracy improvements is higher), the question is whether the additional costs are justified. For this purpose, we consider three terminal conditions: 1000, 2500 and 5000 steps.

Figure 4 compares the number of successful modifications achieved by modifying Logistic Regression models with different degrees of noise, as well as the benefit of performing additional steps in the optimization procedure for the three mutation operators (Reduction, Adjustment, Vector). For the two mutation operators that modify a single element, Reduction and Adjustment, we can observe that the highest number of successful modifications is achieved by a mutation weight of 0.2. Among the 36 cases (two mutation operators \(\times \) six datasets \(\times \) three terminal conditions), there is only one case where a mutation weight of 0.1 achieves a higher number of successful mutations (i.e., 5.67 with a weight of 0.1 over 5.62 with a weight of 0.2, with Reduction). Using a mutation weight of 0.2 for Vector modifications only achieves the highest number of successful modifications for one of the six datasets (Compas-sex). Given that Vector modifications are more intrusive than the other mutation operators (i.e., modifying each vector element as opposed to modifying a single one), changes might be too big, or a stage where no further changes are applicable might be reached more quickly with high-noise modifications.
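The three mutation operators defined above (Reduction, Adjustment, Vector) can be sketched in Python as follows. This is an illustrative sketch rather than our implementation: it assumes the Logistic Regression model is represented as a plain list of coefficients, that the multiplier is drawn uniformly from the stated range, and that the mutated element is chosen uniformly at random. All function and variable names are hypothetical.

```python
import random
from typing import List


def reduction(weights: List[float], noise: float, rng: random.Random) -> List[float]:
    """Multiply one randomly chosen element by a value in [-noise, noise]."""
    mutated = list(weights)
    i = rng.randrange(len(mutated))
    mutated[i] *= rng.uniform(-noise, noise)
    return mutated


def adjustment(weights: List[float], noise: float, rng: random.Random) -> List[float]:
    """Multiply one randomly chosen element by a value in [1 - noise, 1 + noise]."""
    mutated = list(weights)
    i = rng.randrange(len(mutated))
    mutated[i] *= rng.uniform(1 - noise, 1 + noise)
    return mutated


def vector(weights: List[float], noise: float, rng: random.Random) -> List[float]:
    """Multiply every element by its own value in [1 - noise, 1 + noise]."""
    return [w * rng.uniform(1 - noise, 1 + noise) for w in weights]


# Hypothetical coefficient vector and the highest noise level studied (0.2).
rng = random.Random(0)
coefficients = [1.0, -2.0, 0.5, 4.0]
reduced = reduction(coefficients, 0.2, rng)
adjusted = adjustment(coefficients, 0.2, rng)
perturbed = vector(coefficients, 0.2, rng)
```

Each operator returns a new list and leaves the input untouched, so a mutated model can be discarded when it does not improve both objectives.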
When applying Reduction modifications, on average 92.9% of all successful modifications are performed in the first 1000 steps. Within an additional 1500 steps (i.e., terminal condition of 2500 steps), 5.6% of successful modifications are performed. Only 1.6% of all successful modifications are performed in the last 2500 steps, from 2501 to 5000. While the percentages vary over datasets (e.g., after 1000 steps, 98% and 85% of modifications are performed for the Adult and COMPAS datasets respectively), it can be seen that the benefit of additional steps decreases over time, as the majority of modifications are performed within the first 1000 steps.

Vector and Adjustment show similar results. The last 2500 steps (from 2501 to 5000) account for 10-15% of the modifications, while more than 60% of successful modifications are performed in the first 1000 steps. This confirms that the early steps of the optimization procedure are of higher importance than later iterations.

Given the low number of additional modifications achieved in the later steps (up to 5000), it appears justified not to increase the limit for modifying Logistic Regression models further for our experiments (RQ1-RQ3), with the chances of potential improvements when using a mutation weight of 0.2. However, one could argue for decreasing the number of steps to 1000, which would decrease the runtime of our algorithm while retaining at least 60% of the successful modifications, depending on the mutation operator.

Lastly, we compare the quality of changes between the three mutation operators. This allows us to compare not only the number of modifications but also the effectiveness of different operators. For this purpose, we illustrate the Pareto-fronts for each of the fairness metrics in combination with the achieved accuracy in Figure 5. Among the nine mutated LR models (three mutation operators with three different levels of noise, after 5000 steps), we only visualize non-dominated ones.
The modification operator that is part of the most Pareto-fronts is a Vector modification with a noise level of 0.2 (in 16 out of 18 Pareto-fronts). Reduction and Adjustment are part of three to six Pareto-fronts, depending on the level of noise used. This illustrates that the quality of improvements is influenced by the choice of mutation operators.

Figure 5: Pareto-fronts of the three different mutation operators (Reduction, Adjustment, Vector), and three levels of noise (0.2 - black, 0.1 - gray, 0.05 - white). Results are shown for four datasets: Adult (A), COMPAS (C), Bank (B), MEPS19 (M). Three protected attributes are considered: race (R), sex (S), age (A). The y-axis shows accuracy; the x-axis shows the respective fairness metric.

5.5 Advanced Classification Models

Commonly, the effectiveness of bias mitigation methods is evaluated for a given classification model (e.g., which bias mitigation method should be applied to the model) rather than to compare performances across models (e.g., which model should the bias mitigation methods be applied to). Nonetheless, it can be interesting to compare the performance of more advanced binary classification models for potential future applications. For this purpose, we consider three advanced types of tree-based and regression-based classification models: Random Forest (RF), Gradient Boosting (GB), Neural Network (NN). Following existing fairness approaches (Chen et al. 2023b), our NN model consists of five hidden layers (64, 32, 16, 8 and 4 neurons respectively) and is trained for 20 epochs. In accordance with our implementation of LR and DT models, RF and GB are implemented using the default configurations provided by scikit-learn (Pedregosa et al. 2011).

Table 10 presents the accuracy achieved by each of the advanced classification models, Logistic Regression and Decision Trees, and our post-processing approach applied to both these models. To take fairness metrics into account, we count how often each classification model is part of any of the 18 fairness-accuracy Pareto-fronts (six datasets and three fairness metrics), which illustrates trade-offs between fairness and accuracy.

Among all classification models, GB achieves the highest accuracy on all datasets, and outperforms RFs and NNs. NNs are outperformed by unmodified LR models for all datasets. RFs are outperformed by our optimized LR models in 5 out of 6 cases for accuracy, except for the Bank dataset. While DTs have the lowest accuracy, they also show the lowest degree of bias in 15 out of 18 cases. The only dataset for which DTs do not achieve the lowest degree of bias is the Bank dataset. For all three fairness metrics, NNs achieve the lowest degree of bias for the Bank dataset. This suggests that it can be beneficial to carefully investigate and select suitable classification models for each use case. Moreover, we observe that there is a trade-off between accuracy and fairness, as the classification model with the highest accuracy is never the one with lowest bias and vice versa. Nonetheless, it can be promising to use Boosting models as a starting point to apply bias mitigation to, as they exhibited the highest accuracy.

6 Conclusions and Future Work

We proposed a novel search-based approach to mutate classification models in a post-processing stage, in order to simultaneously repair fairness and accuracy issues. This approach differentiates itself from existing bias mitigation methods, which conform to the fairness-accuracy trade-off (i.e., repairing fairness issues comes at the cost of reduced accuracy). We performed a large-scale empirical study to evaluate our approach with two popular binary classifiers (Logistic Regression and Decision Trees) on four widely used datasets and three fairness metrics, publicly available in the popular IBM AIF360 framework (Bellamy et al. 5.4 and 5.5.

Data Availability

We make our scripts and experimental results publicly available to allow for replication and extension of our work (Hort et al. 2023d).

Notes

In this paper, we use the terms “bias repair” and “bias mitigation” interchangeably to refer to the activities conducted to improve software fairness.

Decision-making scenarios that highly demand fairness often require high explainability, while low explainability is a big disadvantage of big complex models such as Deep Neural Networks.

We have performed a comparison of different data splits (i.e., whether it is beneficial to train with more data by combining the train and validation sets) but could not find systematic advantages. Further details can be found in our online appendix (Hort et al. 2023d).

There is no particular reason for choosing to run it 30 times; this number can be adjusted as one sees fit. Ideally, the more runs the better, in order to cater for the inherent stochastic nature of the approach, yet limited computational resources or time may limit the number of repetitions performed. In practice, only one classification model can be used; therefore, one can apply our approach multiple times and select a model from the Pareto-front, or use the entire search budget on building a single optimal classification model.

This is the set of solutions that are non-dominated with respect to each other but are superior to the rest of the solutions in the search space. In other words, each solution in the Pareto-set is inferior in at least one objective to another solution in that Pareto-set, although both solutions are superior to the others in the rest of the search space with respect to all objectives.

References

Agarwal A, Beygelzimer A, Dudík M, Langford J, Wallach H (2018) A reductions approach to fair classification. In: International conference on machine learning. PMLR, 60–69

Aggarwal A, Lohia P, Nagar S, Dey K, Saha D (2019) Black box fairness testing of machine learning models. In: Proceedings of the 2019 27th ACM joint meeting on european software engineering conference and symposium on the foundations of software engineering. 625–635

Angell R, Johnson B, Brun Y, Meliou A (2018) Themis: Automatically testing software for discrimination. In: Proceedings of the 2018 26th ACM joint meeting on european software engineering conference and symposium on the foundations of software engineering. 871–875

Angwin J, Larson J, Mattu S, Kirchner L (2016) Machine bias. ProPublica. See https://www.propublica.org/article/machine-bias-risk-assessments-in-criminal-sentencing/

Arcuri A, Briand L (2014) A Hitchhiker’s guide to statistical tests for assessing randomized algorithms in software engineering. STVR 24(3):219–250

Bellamy RKE, Dey K, Hind M, Hoffman SC, Houde S, Kannan K, Lohia P, Martino J, Mehta S, Mojsilovic A, et al (2018) AI Fairness 360: An extensible toolkit for detecting, understanding, and mitigating unwanted algorithmic bias. arXiv:1810.01943

Berk R, Heidari H, Jabbari S, Joseph M, Kearns M, Morgenstern J, Neel S, Roth A (2017) A Convex Framework for Fair Regression. FAT-ML Workshop

Berk R, Heidari H, Jabbari S, Kearns M, Roth A (2018) Fairness in criminal justice risk assessments: The state of the art. Sociological Methods & Research. https://doi.org/10.1177/0049124118782533

Biswas S, Rajan H (2020) Do the Machine Learning Models on a Crowd Sourced Platform Exhibit Bias? An Empirical Study on Model Fairness. arXiv:2005.12379

Breiman L, Friedman J, Stone CJ, Olshen RA (1984) Classification and regression trees. CRC Press

Breslow LA, Aha DW (1997) Simplifying decision trees: A survey. Knowl Eng Rev 12(1):1–40

Brun Y, Meliou A (2018) Software fairness. In: Proceedings of the 2018 26th ACM joint meeting on european software engineering conference and symposium on the foundations of software engineering. 754–759

Calders T, Kamiran F, Pechenizkiy M (2009) Building classifiers with independency constraints. In: 2009 IEEE international conference on data mining workshops. IEEE, 13–18

Calders T, Karim A, Kamiran F, Ali W, Zhang X (2013) Controlling attribute effect in linear regression. In 2013 IEEE 13th international conference on data mining. IEEE, 71–80

Calders T, Verwer S (2010) Three naive Bayes approaches for discrimination-free classification. Data Min Knowl Discov 21(2):277–292

Calmon F, Wei D, Vinzamuri B, Ramamurthy KN, Varshney KR (2017) Optimized pre-processing for discrimination prevention. In Advances in neural information processing systems. 3992–4001

Celis LE, Huang L, Keswani V, Vishnoi NK (2019) Classification with fairness constraints: A meta-algorithm with provable guarantees. In: Proceedings of the conference on fairness, accountability, and transparency. 319–328

Chakraborty J, Majumder S, Menzies T (2021) Bias in machine learning software: Why? how? what to do?. In: Proceedings of the 29th ACM joint meeting on european software engineering conference and symposium on the foundations of software engineering. 429–440

Chakraborty J, Majumder S, Yu Z, Menzies T (2020) Fairway: a way to build fair ML software. In: Proceedings of the 28th ACM joint meeting on european software engineering conference and symposium on the foundations of software engineering. 654–665

Chen J, Kallus N, Mao X, Svacha G, Udell M (2019) Fairness under unawareness: Assessing disparity when protected class is unobserved. In: Proceedings of the conference on fairness, accountability, and transparency. 339–348

Chen Z, Zhang JM, Hort M, Sarro F, Harman M (2022a) Fairness Testing: A Comprehensive Survey and Analysis of Trends. arXiv:2207.10223

Chen Z, Zhang JM, Sarro F, Harman M (2022b) MAAT: a novel ensemble approach to addressing fairness and performance bugs for machine learning software. In: Proceedings of the 30th ACM joint european software engineering conference and symposium on the foundations of software engineering. 1122–1134

Chen Z, Zhang JM, Sarro F, Harman M (2023) A Comprehensive Empirical Study of Bias Mitigation Methods for Machine Learning Classifiers. ACM Trans Softw Eng Methodol 32(4):106:1-106:30

Chen Z, Zhang JM, Sarro F, Harman M (2023b) A Comprehensive Empirical Study of Bias Mitigation Methods for Machine Learning Classifiers. ACM Trans Softw Eng Methodol

Chen Z, Zhang JM, Sarro F, Harman M (2024) Fairness Improvement with Multiple Protected Attributes: How Far Are We?. In: International conference on software engineering (ICSE)

Corbett-Davies S, Pierson E, Feller A, Goel S, Huq A (2017) Algorithmic decision making and the cost of fairness. In: Proceedings of the 23rd ACM SIGKDD international conference on knowledge discovery and data mining. 797–806

Dwork C, Hardt M, Pitassi T, Reingold O, Zemel R (2012) Fairness through awareness. In: Proceedings of the 3rd innovations in theoretical computer science conference. 214–226

Feldman M, Friedler SA, Moeller J, Scheidegger C, Venkatasubramanian S (2015) Certifying and removing disparate impact. In: proceedings of the 21th ACM SIGKDD international conference on knowledge discovery and data mining. 259–268

Ferrucci F, Gravino C, Oliveto R, Sarro F (2010) Genetic Programming for Effort Estimation: An Analysis of the Impact of Different Fitness Functions. In: 2nd International symposium on search based software engineering. 89–98

Friedler SA, Scheidegger C, Venkatasubramanian S, Choudhary S, Hamilton EP, Roth D (2019) A comparative study of fairness-enhancing interventions in machine learning. In: Proceedings of the conference on fairness, accountability, and transparency. ACM, 329–338

Galhotra S, Brun Y, Meliou A (2017) Fairness testing: testing software for discrimination. In: Proceedings of the 2017 11th joint meeting on foundations of software engineering. ACM, 498–510

Gohar U, Cheng L (2023) A Survey on Intersectional Fairness in Machine Learning: Notions, Mitigation, and Challenges. In: Elkind E (Ed) Proceedings of the thirty-second international joint conference on artificial intelligence, IJCAI-23. International Joint Conferences on Artificial Intelligence Organization, 6619–6627. https://doi.org/10.24963/ijcai.2023/742

Hardt M, Price E, Srebro N (2016) Equality of opportunity in supervised learning. In: Advances in neural information processing systems. 3315–3323

Harman M, McMinn P, De Souza JT, Yoo S (2010) Search based software engineering: Techniques, taxonomy, tutorial. In: Empirical software engineering and verification. Springer, 1–59

Harrison G, Hanson J, Jacinto C, Ramirez J, Ur B (2020) An empirical study on the perceived fairness of realistic, imperfect machine learning models. In: Proceedings of the 2020 conference on fairness, accountability, and transparency. 392–402

Horkoff J (2019) Non-functional requirements for machine learning: Challenges and new directions. In: 2019 IEEE 27th international requirements engineering conference (RE). IEEE, 386–391

Hort M, Chen Z, Zhang JM, Harman M, Sarro F (2023a) Bias mitigation for machine learning classifiers: A comprehensive survey. ACM J Resp Comput

Hort M, Chen Z, Zhang JM, Harman M, Sarro F (2023b) Bias Mitigation for Machine Learning Classifiers: A Comprehensive Survey. ACM J Resp Comput. arXiv:2207.07068

Hort M, Moussa R, Sarro F (2023) Multi-objective search for gender-fair and semantically correct word embeddings. Appl Soft Comput 133(109916):1568–4946. https://doi.org/10.1016/j.asoc.2022.109916

Hort M, Sarro F (2021) Did you do your homework? Raising awareness on software fairness and discrimination. In: 2021 36th IEEE/ACM international conference on automated software engineering (ASE). IEEE, 1322–1326

Hort M, Zhang JM, Harman M, Sarro F (2023d) On-line Appendix to the article Search-based Automatic Repair for Fairness and Accuracy in Decision-making Software https://github.com/SOLAR-group/Fairness-Postprocessing

Hort M, Zhang JM, Sarro F, Harman M (2021) Fairea: A model behaviour mutation approach to benchmarking bias mitigation methods. In: Proceedings of the 29th ACM joint meeting on european software engineering conference and symposium on the foundations of software engineering. 994–1006

Jacobs AZ, Wallach H (2021) Measurement and Fairness. In: Proceedings of the 2021 ACM conference on fairness, accountability, and transparency (FAccT ’21). Association for Computing Machinery, New York, NY, USA, 375–385. https://doi.org/10.1145/3442188.3445901

Kamiran F, Calders T (2009) Classifying without discriminating. In: 2009 2nd international conference on computer, control and communication. IEEE, 1–6

Kamiran F, Calders T (2012) Data preprocessing techniques for classification without discrimination. Knowl Inf Syst 33(1):1–33

Kamiran F, Calders T, Pechenizkiy M (2010) Discrimination aware decision tree learning. In: 2010 IEEE international conference on data mining. IEEE, 869–874

Kamiran F, Karim A, Zhang X (2012) Decision theory for discrimination-aware classification. In: 2012 IEEE 12th international conference on data mining. IEEE, 924–929

Kamiran F, Mansha S, Karim A, Zhang X (2018) Exploiting reject option in classification for social discrimination control. Inf Sci 425:18–33

Kamishima T, Akaho S, Asoh H, Sakuma J (2012) Fairness-aware classifier with prejudice remover regularizer. In: Joint european conference on machine learning and knowledge discovery in databases. Springer, 35–50

Kearns M, Neel S, Roth A, Wu ZS (2018) Preventing Fairness Gerrymandering: Auditing and Learning for Subgroup Fairness. In: Dy J, Krause A (Eds) Proceedings of Machine Learning Research, Vol. 80. PMLR, Stockholmsmässan, Stockholm Sweden, 2564–2572. http://proceedings.mlr.press/v80/kearns18a.html

Kocaguneli E, Menzies T, Keung JW (2011) On the value of ensemble effort estimation. IEEE TSE 38(6):1403–1416

Kohavi R (2023) Adult data set. http://archive.ics.uci.edu/ml/datasets/adult

Li X, Chen Z, Zhang JM, Sarro F, Zhang Y, Liu X (2023) Dark-Skin Individuals Are at More Risk on the Street: Unmasking Fairness Issues of Autonomous Driving Systems. arXiv:2308.02935

Ma M, Tian Z, Hort M, Sarro F, Zhang H, Lin Q, Zhang D (2022) Enhanced Fairness Testing via Generating Effective Initial Individual Discriminatory Instances. arXiv:2209.08321

Mehrabi N, Morstatter F, Saxena N, Lerman K, Galstyan A (2019) A survey on bias and fairness in machine learning. arXiv:1908.09635

Mikians J, Gyarmati L, Erramilli V, Laoutaris N (2012) Detecting price and search discrimination on the internet. In: Proceedings of the 11th ACM workshop on hot topics in networks. 79–84

Moro S, Cortez P, Rita P (2014) A data-driven approach to predict the success of bank telemarketing. Decis Support Syst 62:22–31

Moussa R, Sarro F (2022) On the Use of Evaluation Measures for Defect Prediction Studies. In: 2022 ACM SIGSOFT international symposium on software testing and analysis (ISSTA). ACM

Pedregosa F, Varoquaux G, Gramfort A, Michel V, Thirion B, Grisel O, Blondel M, Prettenhofer P, Weiss R, Dubourg V, Vanderplas J, Passos A, Cournapeau D, Brucher M, Perrot M, Duchesnay E (2011) Scikit-learn: Machine Learning in Python. J Mach Learn Res 12:2825–2830

Pedreshi D, Ruggieri S, Turini F (2008) Discrimination-aware data mining. In: Proceedings of the 14th ACM SIGKDD international conference on Knowledge discovery and data mining. 560–568

Perera A, Aleti A, Tantithamthavorn C, Jiarpakdee J, Turhan B, Kuhn L, Walker K (2022) Search-Based Fairness Testing for Regression-Based Machine Learning Systems. Empir Softw Eng 27(3):79. https://doi.org/10.1007/s10664-022-10116-7

Pessach D, Shmueli E (2022) A review on fairness in machine learning. ACM Comput Surv (CSUR) 55(3):1–44

Pleiss G, Raghavan M, Wu F, Kleinberg J, Weinberger KQ (2017) On fairness and calibration. In: Advances in neural information processing systems. 5680–5689

propublica (2023) data for the propublica story ‘machine bias’. https://github.com/propublica/compas-analysis/

Quinlan JR (1987) Simplifying decision trees. Int J Man-Mach Stud 27(3):221–234

Romei A, Ruggieri S (2011) A multidisciplinary survey on discrimination analysis

Sarro F (2023) Search-based software engineering in the era of modern software systems. In: Procs. of the 31st IEEE international requirements engineering conference

Sarro F, Ferrucci F, Harman M, Manna A, Ren J (2017) Adaptive Multi-Objective Evolutionary Algorithms for Overtime Planning in Software Projects. IEEE TSE 43(10):898–917

Sarro F, Harman M, Jia Y, Zhang Y (2018) Customer rating reactions can be predicted purely using app features. In: IEEE international requirements engineering conference. 76–87

Sarro F, Petrozziello A (2018) Linear Programming As a Baseline for Software Effort Estimation. ACM TOSEM 27(3):12:1-12:28

Sarro F, Petrozziello A, Harman M (2016) Multi-objective software effort estimation. In: Procs. of the international conference on software engineering (ICSE). IEEE, 619–630

Savani Y, White C, Govindarajulu NS (2020) Intra-processing methods for debiasing neural networks. Adv Neural Inf Process 33(2020):2798–2810

Speicher T, Heidari H, Grgic-Hlaca N, Gummadi KP, Singla A, Weller A, Zafar MB (2018) A unified approach to quantifying algorithmic unfairness: Measuring individual & group unfairness via inequality indices. In: Proceedings of the 24th ACM SIGKDD international conference on knowledge discovery & data mining. 2239–2248

Tizpaz-Niari S, Kumar A, Tan G, Trivedi A (2022) Fairness-aware configuration of machine learning libraries. arXiv:2202.06196

Udeshi S, Arora P, Chattopadhyay S (2018) Automated directed fairness testing. In: Proceedings of the 33rd ACM/IEEE international conference on automated software engineering. 98–108

Vargha A, Delaney HD (2000) A critique and improvement of the CL common language effect size statistics of McGraw and Wong. J Educ Behav Stat 25(2):101–132

Wilcoxon F (1992) Individual comparisons by ranking methods. In: Breakthroughs in statistics. Springer, 196–202

Zafar MB, Valera I, Rodriguez MG, Gummadi KP (2017) Fairness constraints: Mechanisms for fair classification. In: Artificial intelligence and statistics. 962–970

Zemel R, Wu Y, Swersky K, Pitassi T, Dwork C (2013) Learning fair representations. In: International conference on machine learning. 325–333

Zhang BH, Lemoine B, Mitchell M (2018) Mitigating unwanted biases with adversarial learning. In: Proceedings of the 2018 AAAI/ACM conference on ai, ethics, and society. ACM, 335–340

Zhang J, Harman M (2021) Ignorance and Prejudice in Software Fairness. In: 2021 IEEE/ACM 43rd international conference on software engineering (ICSE). IEEE

Zhang JM, Harman M, Ma L, Liu Y (2020) Machine Learning Testing: Survey, Landscapes and Horizons. IEEE Trans Softw Eng 1(1)

Žliobaite I, Kamiran F, Calders T (2011) Handling conditional discrimination. In: 2011 IEEE 11th international conference on data mining. IEEE, 992–1001

Acknowledgements

This work is supported by the ERC grant no. 741278 (EPIC). Max Hort is supported through the ERCIM ‘Alain Bensoussan’ Fellowship Programme.

Ethics declarations

Conflicts of interest

The authors declare that they have no conflict of interest.

Additional information

Communicated by: Brittany Johnson || Justin Smith

This article belongs to the Topical Collection: Special Issue on Equitable Data and Technology.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article’s Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article’s Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Hort, M., Zhang, J.M., Sarro, F. et al. Search-based Automatic Repair for Fairness and Accuracy in Decision-making Software. Empir Software Eng 29, 36 (2024). https://doi.org/10.1007/s10664-023-10419-3

DOI: https://doi.org/10.1007/s10664-023-10419-3