Abstract

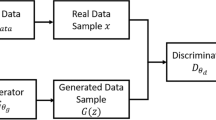

Mode collapse is a common problem in generative adversarial networks (GANs). To alleviate mode collapse, we introduce a novel semi-supervised GAN-based generative model and propose a quantitative criterion that describes the degree of mode collapse. We design several experimental schemes to examine the effect of semi-supervised learning on mode collapse. In addition, by introducing a similarity constraint loss, the semi-supervised model captures supervised and unsupervised disentangled representations simultaneously, so that the generated images are of higher quality and more diverse. The architecture uses a small number of labels to control some factors through the class-conditional representation, and captures other interpretable unsupervised representations from a large amount of unlabeled data. Both quantitative and visual results on the CIFAR-10 and SVHN datasets demonstrate the effectiveness of the proposed architecture.

Acknowledgements

This work is supported in part by the Shanghai Science and Technology Committee under grant No. 21511100600. We thank the High Performance Computing Center of Shanghai University and the Shanghai Engineering Research Center of Intelligent Computing System (No. 19DZ2252600) for providing computing resources and technical support.

Additional information

Publisher’s note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Appendix

FID and SFID are computed separately for each category of generated images, and the per-category values are averaged for the final comparison. In the comparative experiments, the features of 3,000 generated images are used to fit the generated distribution, and the features of all test images are used to fit the real distribution. FID and SFID are then calculated according to formula (1). As shown in Tables 6 and 7, a smaller SFID corresponds to a higher classification accuracy, which is consistent with our motivation. In other words, the more diverse the generated images are, the higher the classification accuracy.
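The per-category averaging can be sketched as follows. This is a minimal sketch assuming that feature vectors (for FID these are conventionally Inception-v3 pooling activations) have already been extracted and that each generated image carries the class label it was conditioned on; the function names are hypothetical. It implements the standard FID of Heusel et al. (2017), fitting a Gaussian to each feature set. SFID is not defined in this section, so it is not reproduced, and formula (1) may differ in detail.

```python
import numpy as np


def fit_gaussian(features):
    """Mean vector and covariance matrix of an (N, D) feature array."""
    return features.mean(axis=0), np.cov(features, rowvar=False)


def frechet_distance(mu1, sigma1, mu2, sigma2):
    """FID = ||mu1 - mu2||^2 + Tr(sigma1 + sigma2 - 2 (sigma1 sigma2)^(1/2))."""
    diff = mu1 - mu2
    # Tr((sigma1 sigma2)^(1/2)) is the sum of square roots of the eigenvalues
    # of sigma1 @ sigma2; for PSD covariances these are real and non-negative,
    # so round-off noise (tiny negative or imaginary parts) is clipped away.
    eig = np.linalg.eigvals(sigma1 @ sigma2)
    tr_covmean = np.sqrt(np.clip(eig.real, 0.0, None)).sum()
    return float(diff @ diff + np.trace(sigma1) + np.trace(sigma2) - 2.0 * tr_covmean)


def per_class_fid(real_feats, real_labels, fake_feats, fake_labels):
    """Hypothetical helper: FID for each class, plus the average over classes."""
    scores = {}
    for c in np.unique(real_labels):
        mu_r, s_r = fit_gaussian(real_feats[real_labels == c])
        mu_g, s_g = fit_gaussian(fake_feats[fake_labels == c])
        scores[int(c)] = frechet_distance(mu_r, s_r, mu_g, s_g)
    return scores, float(np.mean(list(scores.values())))


# Usage with synthetic 4-D "features" for 3 classes.
rng = np.random.default_rng(0)
real = rng.normal(size=(600, 4))
labels = rng.integers(0, 3, size=600)
fake = real + 0.5  # a shifted copy stands in for generated features
scores, mean_fid = per_class_fid(real, labels, fake, labels)
print(scores, mean_fid)
```

In this toy usage the shift changes only the means, so each per-class score is close to 4 × 0.5² = 1.0. Averaging per-class scores, rather than pooling all classes, penalizes a generator that covers some classes well and collapses on others.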

About this article

Cite this article

Li, X., Luan, Y. & Chen, L. Semi-supervised GAN with similarity constraint for mode diversity. Appl Intell 53, 3933–3946 (2023). https://doi.org/10.1007/s10489-022-03771-2

DOI: https://doi.org/10.1007/s10489-022-03771-2