Search Results

-

Convergence rate of multiple-try Metropolis independent sampler

The multiple-try Metropolis method is an interesting extension of the classical Metropolis–Hastings algorithm. However, theoretical understanding...

-

Analysis and Comparison of Firefly Algorithm for Measuring Convergence Rate in Distributed Environment

Evolutionary algorithms are widely adopted by researchers for obtaining optimal results in different applications. Firefly algorithm is one of the...

-

Convergence rate and exponential stability of backward Euler method for neutral stochastic delay differential equations under generalized monotonicity conditions

This work focuses on the numerical approximations of neutral stochastic delay differential equations with their drift and diffusion coefficients...

-

Convergence rate bounds for iterative random functions using one-shot coupling

One-shot coupling is a method of bounding the convergence rate between two copies of a Markov chain in total variation distance, which was first...

-

The Convergence of Incremental Neural Networks

The investigation of neural network convergence represents a pivotal and indispensable area of research, as it plays a crucial role in unraveling the...

-

Rates of robust superlinear convergence of preconditioned Krylov methods for elliptic FEM problems

This paper considers the iterative solution of finite element discretizations of second-order elliptic boundary value problems. Mesh independent...

-

Convergence of Distributions on Paths

We study the convergence of distributions on finite paths of weighted digraphs, namely the family of Boltzmann distributions and the sequence of...

-

On the Distributional Convergence of Temporal Difference Learning

Temporal Difference (TD) learning is one of the simplest yet most efficient algorithms for policy evaluation in reinforcement learning. Although the...

-

Weight Prediction Boosts the Convergence of AdamW

In this paper, we introduce weight prediction into the AdamW optimizer to boost its convergence when training the deep neural network (DNN) models....

-

Several accelerated subspace minimization conjugate gradient methods based on regularization model and convergence rate analysis for nonconvex problems

In this paper, four accelerated subspace minimization conjugate gradient methods based on 2-regularization or 3-regularization models with different...

-

Influence of Initial Guess on the Convergence Rate and the Accuracy of Wang–Landau Algorithm

The influence of the initial guess for the density of states on the convergence rate of the Wang–Landau algorithm was studied. The simulation...

-

Analyzing the Convergence of Federated Learning with Biased Client Participation

Federated Learning (FL) is a promising decentralized machine learning framework that enables a massive number of clients (e.g., smartphones) to...

-

On the convergence of tracking differentiator with multiple stochastic disturbances

This paper investigates the convergence, noise-tolerance, and filtering performance of a tracking differentiator in the presence of multiple...

-

Distributed Training of Deep Neural Networks: Convergence and Case Study

Deep neural network training on a single machine has become increasingly difficult due to a lack of computational power. Fortunately, distributed...

-

Enhanced adaptive-convergence in Harris’ hawks optimization algorithm

This paper presents a novel enhanced adaptive-convergence in Harris’ hawks optimization algorithm (EAHHO). In EAHHO, considering that Harris’ hawks...

-

Convergence and Recovery Guarantees of Unsupervised Neural Networks for Inverse Problems

Neural networks have become a prominent approach to solve inverse problems in recent years. While a plethora of such methods was developed to solve...

-

Debugging convergence problems in probabilistic programs via program representation learning with SixthSense

Probabilistic programming aims to open the power of Bayesian reasoning to software developers and scientists, but identification of problems during...

-

Boundedness and Convergence of Mini-batch Gradient Method with Cyclic Dropconnect and Penalty

Dropout is perhaps the most popular regularization method for deep learning. Due to the stochastic nature of the Dropout mechanism, the convergence...

-

Properties and practicability of convergence-guaranteed optimization methods derived from weak discrete gradients

The ordinary differential equation (ODE) models of optimization methods allow for concise proofs of convergence rates through discussions based on...

-

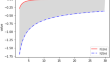

On the convergence of the numerical blow-up time for a rescaling algorithm

Berger and Kohn (Comm. Pure Appl. Math. 41, 841–863, 1988) proposed an algorithm to compute approximate blow-up times for those evolution equations...