Abstract

Many of the computing systems programmed using Machine Learning are opaque: it is difficult to know why they do what they do or how they work. Explainable Artificial Intelligence aims to develop analytic techniques that render opaque computing systems transparent, but lacks a normative framework with which to evaluate these techniques’ explanatory successes. The aim of the present discussion is to develop such a framework, paying particular attention to different stakeholders’ distinct explanatory requirements. Building on an analysis of “opacity” from philosophy of science, this framework is modeled after accounts of explanation in cognitive science. The framework distinguishes between the explanation-seeking questions that are likely to be asked by different stakeholders, and specifies the general ways in which these questions should be answered so as to allow these stakeholders to perform their roles in the Machine Learning ecosystem. By applying the normative framework to recently developed techniques such as input heatmapping, feature-detector visualization, and diagnostic classification, it is possible to determine whether and to what extent techniques from Explainable Artificial Intelligence can be used to render opaque computing systems transparent and, thus, whether they can be used to solve the Black Box Problem.

Notes

It is worth distinguishing two streams within the Explainable AI research program. The present discussion focuses on attempts to solve the Black Box Problem by analyzing computing systems so as to render them transparent post hoc, i.e., after they have been developed. It does not consider efforts to avoid the Black Box Problem altogether by modifying the relevant ML methods so that the computers being programmed do not become opaque in the first place (for discussion see, e.g., Doran et al., 2017).

Gwern Branwen maintains a helpful online resource on this particular example, listing different versions and assessing their probable veracity: https://www.gwern.net/Tanks (retrieved January 25, 2019).

The program that mediates between “input” and “output”—the learned program—must not be confused with the learning algorithm that is used to develop (i.e., to program) the system in the first place.
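To make the distinction concrete, here is a minimal sketch in Python, assuming a toy perceptron trained on the truth table for logical AND. The names `train_perceptron`, `learned_program`, and `AND_DATA` are hypothetical illustrations, not taken from the paper. The training loop plays the role of the learning algorithm; the function it returns plays the role of the learned program that mediates between input and output.

```python
from typing import Callable, List, Tuple

Input = Tuple[float, float]
Example = Tuple[Input, int]

# Hypothetical toy data set: the truth table for logical AND.
AND_DATA: List[Example] = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]


def train_perceptron(data: List[Example], epochs: int = 20,
                     lr: float = 1.0) -> Callable[[Input], int]:
    """The learning algorithm: adjusts weights from labelled examples."""
    w1, w2, b = 0.0, 0.0, 0.0
    for _ in range(epochs):
        for (x1, x2), y in data:
            pred = 1 if w1 * x1 + w2 * x2 + b > 0 else 0
            err = y - pred
            # Classic perceptron update rule.
            w1 += lr * err * x1
            w2 += lr * err * x2
            b += lr * err

    # Freeze the final parameters; training is now over.
    fw1, fw2, fb = w1, w2, b

    def learned_program(x: Input) -> int:
        """The learned program: maps an input to an output."""
        return 1 if fw1 * x[0] + fw2 * x[1] + fb > 0 else 0

    return learned_program


program = train_perceptron(AND_DATA)
print([program(x) for x, _ in AND_DATA])  # [0, 0, 0, 1]
```

In this sketch, `learned_program` refers neither to the training data nor to the update rule, so knowing how the learning algorithm works does not by itself reveal how the learned program computes its outputs.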

Intuitively, answers to how- and/or where-questions specify the EREs that are causally relevant to the behavior that is being explained. Although there are longstanding philosophical questions about the particular kinds of elements that may be considered causally relevant, the present focus on intervention suggests a maximally inclusive approach (see also Woodward, 2003).

Although there is a clear sense in which interventions can also be achieved by modifying a system’s inputs (a different s_in will typically lead to a different s_out), interventions on the mediating states, transitions, or realizers are likely to be far more wide-ranging and systematic.
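The contrast can be illustrated with a minimal sketch, assuming a hypothetical two-layer ReLU network with hand-set weights (`W_HIDDEN`, `W_OUT`, `INPUTS`, and `forward` are illustrative names, not from the paper). Changing the input alters one output at a time, whereas clamping a hidden unit, an intervention on a mediating state, changes the input-output mapping across many inputs at once.

```python
from typing import Dict, List, Optional, Sequence, Tuple

# Hypothetical hand-set weights, chosen for illustration only.
W_HIDDEN: List[List[float]] = [[1.0, 1.0], [1.0, -1.0]]  # 2 hidden units x 2 inputs
W_OUT: List[float] = [1.0, 1.0]
INPUTS: List[Tuple[float, float]] = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]


def relu(z: float) -> float:
    return max(0.0, z)


def forward(s_in: Sequence[float], clamp: Optional[Dict[int, float]] = None) -> float:
    """Compute s_out from s_in, optionally clamping hidden units to fixed values."""
    hidden = [relu(sum(w * x for w, x in zip(row, s_in))) for row in W_HIDDEN]
    if clamp:
        for idx, value in clamp.items():
            hidden[idx] = value  # intervention on a mediating state
    return sum(w * h for w, h in zip(W_OUT, hidden))


# Intervening on the input: a different s_in yields a different s_out.
print(forward((1.0, 0.0)), forward((0.0, 1.0)))  # 2.0 1.0

# Intervening on a mediating state: clamp hidden unit 0 to zero for every input.
baseline = [forward(s) for s in INPUTS]
clamped = [forward(s, clamp={0: 0.0}) for s in INPUTS]
print(baseline)  # [0.0, 2.0, 1.0, 2.0]
print(clamped)   # [0.0, 1.0, 0.0, 0.0]
```

Here a single intervention on hidden unit 0 changes the output for three of the four inputs, which is one way of reading the claim that interventions on mediating states are more wide-ranging and systematic than interventions on inputs.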

Curiously, in such scenarios, a computing system’s hardware components become analogous to the “Black Box” voice recorders used on commercial airliners.

Strictly speaking, because the aim of the GANs in this study is not detection but generation, the relevant units might more appropriately be called feature generators.

References

Bau, D., Zhu, J.-Y., Strobelt, H., Zhou, B., Tenenbaum, J. B., Freeman, W. T., & Torralba, A. (2018). GAN dissection: visualizing and understanding generative adversarial networks. arXiv:1811.10597.

Bechtel, W., & Richardson, R. C. (1993). Discovering complexity: decomposition and localization as strategies in scientific research (MIT Press ed.). Cambridge, MA: MIT Press.

Bickle, J. (2006). Reducing mind to molecular pathways: explicating the reductionism implicit in current cellular and molecular neuroscience. Synthese, 151(3), 411–434. https://doi.org/10.1007/s11229-006-9015-2.

Buckner, C. (2018). Empiricism without magic: transformational abstraction in deep convolutional neural networks. Synthese, 195(12), 5339–5372. https://doi.org/10.1007/s11229-018-01949-1.

Burrell, J. (2016). How the machine ‘thinks’: Understanding opacity in machine learning algorithms. Big Data & Society, 3(1), 205395171562251. https://doi.org/10.1177/2053951715622512.

Busemeyer, J. R., & Diederich, A. (2010). Cognitive modeling. Sage.

Chemero, A. (2000). Anti-representationalism and the dynamical stance. Philosophy of Science.

Churchland, P. M. (1981). Eliminative Materialism and the Propositional Attitudes. The Journal of Philosophy, 78(2), 67–90.

Clark, A. (1993). Associative engines: connectionism, concepts, and representational change. MIT Press.

Dennett, D. C. (1987). The Intentional Stance. Cambridge, MA: MIT Press.

Doran, D., Schulz, S., & Besold, T. R. (2017). What does explainable AI really mean? A new conceptualization of perspectives. arXiv:1710.00794.

Durán, J. M., & Formanek, N. (2018). Grounds for trust: essential epistemic opacity and computational reliabilism. Minds and Machines, 28(4), 645–666. https://doi.org/10.1007/s11023-018-9481-6.

Elman, J. L. (1990). Finding structure in time. Cognitive Science, 14, 179–211.

European Commission. (2016). Regulation (EU) 2016/679 of the European Parliament and of the Council of 27 April 2016 on the protection of natural persons with regard to the processing of personal data and on the free movement of such data, and repealing Directive 95/46/EC (General Data Protection Regulation).

Fodor, J. A. (1987). Psychosemantics. Cambridge, MA: MIT Press.

Goodman, B., & Flaxman, S. (2016). European Union regulations on algorithmic decision-making and a “right to explanation”. arXiv:1606.08813.

Guidotti, R., Monreale, A., Ruggieri, S., Pedreschi, D., Turini, F., & Giannotti, F. (2018). Local rule-based explanations of Black Box Decision Systems. arXiv:1805.10820.

Hohman, F. M., Kahng, M., Pienta, R., & Chau, D. H. (2018). Visual analytics in deep learning: an interrogative survey for the next frontiers. IEEE Transactions on Visualization and Computer Graphics.

Humphreys, P. (2009). The philosophical novelty of computer simulation methods. Synthese, 169(3), 615–626.

Hupkes, D., Veldhoen, S., & Zuidema, W. (2018). Visualisation and ‘diagnostic classifiers’ reveal how recurrent and recursive neural networks process hierarchical structure. Journal of Artificial Intelligence Research, 61, 907–926.

Lake, B. M., Ullman, T. D., Tenenbaum, J. B., & Gershman, S. J. (2017). Building machines that learn and think like people. The Behavioral and Brain Sciences.

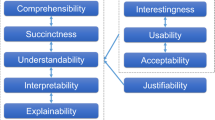

Lipton, Z. C. (2016). The mythos of model interpretability. arXiv:1606.03490.

Marcus, G. (2018). Deep learning: a critical appraisal. arXiv:1801.00631.

Marr, D. (1982). Vision: a computational investigation into the human representation and processing of visual information. Cambridge, MA: MIT Press.

McClamrock, R. (1991). Marr’s three levels: a re-evaluation. Minds and Machines, 1(2), 185–196.

Minsky, M. (Ed.). (1968). Semantic Information Processing. Cambridge, MA: MIT Press.

Montavon, G., Samek, W., & Müller, K.-R. (2018). Methods for interpreting and understanding deep neural networks. Digital Signal Processing, 73, 1–15. https://doi.org/10.1016/j.dsp.2017.10.011.

Pfeiffer, M., & Pfeil, T. (2018). Deep learning with spiking neurons: opportunities and challenges. Frontiers in Neuroscience, 12. https://doi.org/10.3389/fnins.2018.00774.

Piccinini, G., & Craver, C. F. (2011). Integrating psychology and neuroscience: functional analyses as mechanism sketches. Synthese, 183(3), 283–311. https://doi.org/10.1007/s11229-011-9898-4.

Pylyshyn, Z. W. (1984). Computation and cognition. Cambridge, MA: MIT Press.

Ramsey, W. (1997). Do connectionist representations earn their explanatory keep? Mind & Language, 12(1), 34–66.

Ras, G., van Gerven, M., & Haselager, P. (2018). Explanation methods in deep learning: users, values, concerns and challenges. In Explainable and Interpretable Models in Computer Vision and Machine Learning (pp. 19–36). Springer.

Ribeiro, M. T., Singh, S., & Guestrin, C. (2016). “Why should I trust you?”: explaining the predictions of any classifier. arXiv:1602.04938v3.

Rieder, G., & Simon, J. (2017). Big data: a new empiricism and its epistemic and socio-political consequences. In Berechenbarkeit der Welt? Philosophie und Wissenschaft im Zeitalter von Big Data (pp. 85–105). Wiesbaden: Springer VS.

Russell, S.J., Norvig, P. & Davis, E. (2010). Artificial Intelligence: A Modern Approach (3rd ed.). Upper Saddle River, NJ: Prentice Hall.

Shagrir, O. (2010). Marr on computational-level theories. Philosophy of Science, 77(4), 477–500.

Shallice, T., & Cooper, R. P. (2011). The organisation of mind. Oxford: Oxford University Press.

Smolensky, P. (1988). On the proper treatment of connectionism. Behavioral and Brain Sciences, 11(1), 1–23.

Stinson, C. (2016). Mechanisms in psychology: ripping nature at its seams. Synthese, 193(5), 1585–1614. https://doi.org/10.1007/s11229-015-0871-5.

Szegedy, C., Zaremba, W., Sutskever, I., Bruna, J., Erhan, D., Goodfellow, I., & Fergus, R. (2013). Intriguing properties of neural networks. arXiv:1312.6199.

Tomsett, R., Braines, D., Harborne, D., Preece, A., & Chakraborty, S. (2018). Interpretable to whom? A role-based model for analyzing interpretable machine learning systems. arXiv:1806.07552.

Wachter, S., Mittelstadt, B., & Floridi, L. (2017). Why a right to explanation of automated decision-making does not exist in the general data protection regulation. International Data Privacy Law, 2017.

Zednik, C. (2017). Mechanisms in cognitive science. In S. Glennan & P. Illari (Eds.), The Routledge Handbook of Mechanisms and Mechanical Philosophy (pp. 389–400). London: Routledge.

Zednik, C. (2018). Will machine learning yield machine intelligence? In Philosophy and Theory of Artificial Intelligence 2017.

Zerilli, J., Knott, A., Maclaurin, J., & Gavaghan, C. (2018). Transparency in algorithmic and human decision-making: is there a double standard? Philosophy & Technology. https://doi.org/10.1007/s13347-018-0330-6.

Acknowledgements

This work was supported by the German Research Foundation (DFG project ZE 1062/4-1). The author would also like to thank Cameron Buckner and Christian Heine for written comments on earlier drafts. The initial impulse for this work came during discussions of the consortium on "Artificial Intelligence - Life Cycle Processes and Quality Requirements" at the German Institute for Standardization (DIN SPEC 92001). However, the final product is the work of the author.

Cite this article

Zednik, C. Solving the Black Box Problem: A Normative Framework for Explainable Artificial Intelligence. Philos. Technol. 34, 265–288 (2021). https://doi.org/10.1007/s13347-019-00382-7

DOI: https://doi.org/10.1007/s13347-019-00382-7