Abstract

Purpose

We developed machine learning (ML) and deep learning (DL) predictive models, implemented in different programming languages and on different computing platforms, to classify clinical diagnoses in patients with epiphora, and we evaluated their diagnostic performance.

Methods

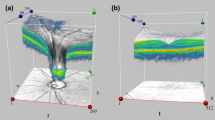

Between January 2016 and September 2017, 250 patients with epiphora who underwent dacryocystography (DCG) and lacrimal scintigraphy (LS) were included in the study. We developed five different predictive models using ML tools: Python-based TensorFlow, R, and Microsoft Azure Machine Learning Studio (MAMLS). A total of 27 clinical characteristics and parameters, including variables related to epiphora (VE) and variables related to dacryocystography (VDCG), were used as input data. In addition, we developed two predictive convolutional neural network (CNN) models to classify LS images. All models were trained with supervised learning.
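As a rough illustration of the supervised three-class setup described above, the following sketch trains a multiclass logistic regression (softmax) classifier on 27 numeric inputs. It is not the authors' implementation: the data are synthetic and deliberately easy to separate, the function names (`make_synthetic`, `train`, `accuracy`) are hypothetical, and the learning rate and epoch count are arbitrary assumptions. It uses only the Python standard library rather than TensorFlow, R, or MAMLS.

```python
import math
import random

# Three diagnostic classes, as in the study; everything else here is assumed.
CLASSES = ["normal", "functional obstruction", "anatomical obstruction"]


def softmax(scores):
    """Convert raw class scores to probabilities (numerically stable)."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def predict_proba(weights, biases, x):
    """Class probabilities for one feature vector x."""
    scores = [sum(w * xi for w, xi in zip(row, x)) + b
              for row, b in zip(weights, biases)]
    return softmax(scores)


def train(X, y, n_classes, lr=0.01, epochs=20):
    """Fit softmax regression with plain stochastic gradient descent."""
    n_features = len(X[0])
    weights = [[0.0] * n_features for _ in range(n_classes)]
    biases = [0.0] * n_classes
    for _ in range(epochs):
        for x, label in zip(X, y):
            probs = predict_proba(weights, biases, x)
            for k in range(n_classes):
                # Gradient of cross-entropy w.r.t. the score of class k.
                grad = probs[k] - (1.0 if k == label else 0.0)
                biases[k] -= lr * grad
                for j in range(n_features):
                    weights[k][j] -= lr * grad * x[j]
    return weights, biases


def accuracy(weights, biases, X, y):
    """Fraction of samples whose most probable class matches the label."""
    correct = 0
    for x, label in zip(X, y):
        probs = predict_proba(weights, biases, x)
        if probs.index(max(probs)) == label:
            correct += 1
    return correct / len(y)


def make_synthetic(n_per_class, n_features, rng):
    """Synthetic stand-in data: NOT clinical data, just separable clusters."""
    X, y = [], []
    for label in range(len(CLASSES)):
        for _ in range(n_per_class):
            row = [rng.gauss(3.0 if j % len(CLASSES) == label else 0.0, 0.5)
                   for j in range(n_features)]
            X.append(row)
            y.append(label)
    order = list(range(len(y)))
    rng.shuffle(order)
    return [X[i] for i in order], [y[i] for i in order]


if __name__ == "__main__":
    rng = random.Random(0)
    X_train, y_train = make_synthetic(30, 27, rng)  # 27 inputs, as in the study
    X_test, y_test = make_synthetic(10, 27, rng)    # held-out test set
    w, b = train(X_train, y_train, len(CLASSES))
    print(f"test accuracy on synthetic data: {accuracy(w, b, X_test, y_test):.2%}")
```

Because the synthetic clusters are far apart, the accuracy printed here says nothing about the clinical task; the sketch only shows the shape of the pipeline (labelled inputs, training, evaluation on held-out data).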

Results

Among 500 eyes of 250 patients, 59 eyes had anatomical obstruction, 338 eyes had functional obstruction, and the remaining 103 eyes were normal. For the data set that excluded VE and VDCG, the test accuracies of the Python-based TensorFlow model, the R model, multiclass logistic regression in MAMLS, and the multiclass neural network in MAMLS were 81.70%, 80.60%, 81.70%, and 73.10%, respectively, compared with 80.60% for the nuclear medicine physician. The test accuracies of the CNN models in three-class and binary classification were 72.00% and 77.42%, respectively.
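The class counts above are imbalanced, which is worth keeping in mind when reading the accuracies. The short sketch below computes each class's share and the accuracy of a trivial classifier that always predicts the most common class (338 of 500 eyes, or 67.6%). The counts come from the abstract; the helper name `class_summary` is hypothetical, and the comparison assumes the test set has the same class mix as the full cohort, which the abstract does not state.

```python
# Eye counts per diagnosis, as reported in the Results.
eye_counts = {
    "anatomical obstruction": 59,
    "functional obstruction": 338,
    "normal": 103,
}


def class_summary(counts):
    """Return total, per-class shares, majority label, and majority share."""
    total = sum(counts.values())
    shares = {label: n / total for label, n in counts.items()}
    majority = max(counts, key=counts.get)
    return total, shares, majority, shares[majority]


total, shares, majority_label, baseline = class_summary(eye_counts)

print(f"total eyes: {total}")
for label, share in shares.items():
    print(f"{label}: {share:.1%}")
# Accuracy of always predicting the majority class (assumes the test split
# mirrors the cohort's class distribution).
print(f"majority-class baseline ({majority_label}): {baseline:.1%}")
```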

Conclusions

ML-based predictive models built with different programming languages and computing platforms were useful for classifying clinical diagnoses in patients with epiphora, and their performance was similar to a clinician’s diagnostic ability.

Ethics declarations

Conflict of Interest

Yong-Jin Park, Ji Hoon Bae, Mu Heon Shin, Seung Hyup Hyun, Young Seok Cho, Yearn Seong Choe, Joon Young Choi, Kyung-Han Lee, Byung-Tae Kim, and Seung Hwan Moon declare that they have no conflict of interest.

Ethical Approval

All procedures performed in studies involving human participants were in accordance with the ethical standards of our institutional review board and with the 1964 Helsinki declaration and its later amendments or comparable ethical standards.

Informed Consent

The institutional review board of our institute approved this retrospective study, and the requirement to obtain informed consent was waived.

Electronic Supplementary Material

ESM 1 (DOCX 27 kb)

Supplementary Figure 1 (PNG 785 kb)

Supplementary Figure 2 (PNG 4086 kb)

Supplementary Figure 3 (PNG 2527 kb)

About this article

Cite this article

Park, YJ., Bae, J.H., Shin, M.H. et al. Development of Predictive Models in Patients with Epiphora Using Lacrimal Scintigraphy and Machine Learning. Nucl Med Mol Imaging 53, 125–135 (2019). https://doi.org/10.1007/s13139-019-00574-1

DOI: https://doi.org/10.1007/s13139-019-00574-1