Abstract

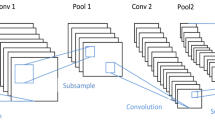

Most grasp detection methods based on convolutional neural networks evaluate a predicted grasp by computing its overlap with a selected ground-truth grasp. In typical grasp datasets, however, not all graspable examples are labelled as ground truths. Hence, directly back-propagating the resulting loss during training cannot fully reflect how graspable the predicted grasp actually is. In this paper, we integrate the grasp mapping mechanism with the convolutional neural network and propose a multi-scale, multi-grasp detection model. First, we connect the labelled grasps and refine the connections by discarding inconsistent and redundant ones to form the grasp path. Then, the predicted grasp is mapped to the grasp path, and the error between them is used for back-propagation as well as grasp evaluation. Last, these components are combined into the multi-grasp detection framework to detect grasps efficiently. Experimental results on both the Cornell Grasping Dataset and a real-world robotic grasping system verify the effectiveness of the proposed method. In addition, its detection accuracy remains relatively stable even under a high Jaccard threshold.
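The abstract does not spell out how a predicted grasp is mapped to the grasp path. As a rough illustration only, the sketch below assumes the path can be treated as a polyline through labelled grasp centers and that the mapping is a nearest-point projection. Grasp orientation and size are ignored, and the function names `project_onto_segment` and `map_to_grasp_path` are hypothetical rather than taken from the paper.

```python
import math


def project_onto_segment(p, a, b):
    """Return the point on segment a-b that is closest to point p."""
    ax, ay = a
    bx, by = b
    px, py = p
    dx, dy = bx - ax, by - ay
    seg_len_sq = dx * dx + dy * dy
    if seg_len_sq == 0.0:
        # Degenerate segment: both endpoints coincide.
        return (ax, ay)
    # Parameter of the orthogonal projection, clamped to stay on the segment.
    t = ((px - ax) * dx + (py - ay) * dy) / seg_len_sq
    t = max(0.0, min(1.0, t))
    return (ax + t * dx, ay + t * dy)


def map_to_grasp_path(pred_center, path_centers):
    """Map a predicted grasp center onto a polyline of labelled centers.

    Returns (mapped_point, error), where error is the Euclidean distance
    between the prediction and its mapped point. This stands in for the
    mapping error described in the abstract; it is an assumption, not the
    authors' formulation.
    """
    if not path_centers:
        raise ValueError("path_centers must contain at least one point")
    if len(path_centers) == 1:
        candidates = [tuple(path_centers[0])]
    else:
        candidates = [
            project_onto_segment(pred_center, a, b)
            for a, b in zip(path_centers, path_centers[1:])
        ]

    def distance(q):
        return math.hypot(pred_center[0] - q[0], pred_center[1] - q[1])

    best = min(candidates, key=distance)
    return best, distance(best)


if __name__ == "__main__":
    # Hypothetical labelled grasp centers forming an L-shaped path.
    path = [(0.0, 0.0), (10.0, 0.0), (10.0, 10.0)]
    mapped, error = map_to_grasp_path((4.0, 3.0), path)
    print(mapped, error)
```

Under these assumptions, a prediction that lies between two labelled grasps gets a small error even when it overlaps neither label strongly, which is the motivation the abstract gives for evaluating against a path rather than a single ground truth.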

Author information

Additional information

Recommended by Associate Editor Min Young Kim under the direction of Editor Euntai Kim. This work was supported by the National Natural Science Foundation of China (Grant Nos. 61725303 and 91848205).

Lu Chen received his M.S. and Ph.D. degrees from Northwestern Polytechnical University in 2015 and 2019, respectively. He is currently a Lecturer at the Institute of Big Data Science and Industry at Shanxi University. His research interests include machine learning, robotic vision, and low-light image enhancement.

Panfeng Huang received his B.S. and M.S. degrees from Northwestern Polytechnical University in 1998 and 2001, respectively, and a Ph.D. from the Chinese University of Hong Kong in the area of Automation and Robotics in 2005. He is currently a Professor in the School of Astronautics and Vice Director of the Research Center for Intelligent Robotics at Northwestern Polytechnical University. His research interests include tethered space robotics, intelligent control, machine vision, and space teleoperation.

Yuanhao Li is a Student in the School of Astronautics, Northwestern Polytechnical University and the National Key Laboratory of Aerospace Flight Dynamics. He received his B.S. and M.S. degrees in Automation at the same university. His research interests include robot vision, human gesture recognition and scene understanding.

Zhongjie Meng received his Ph.D. degree from Northwestern Polytechnical University,

About this article

Cite this article

Chen, L., Huang, P., Li, Y. et al. Detecting Graspable Rectangles of Objects in Robotic Grasping. Int. J. Control Autom. Syst. 18, 1343–1352 (2020). https://doi.org/10.1007/s12555-019-0186-2

DOI: https://doi.org/10.1007/s12555-019-0186-2