Abstract

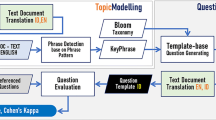

The rise of e-learning systems offers a vast amount of free educational content to every inquisitive e-learner. However, merely reading e-content does not make learning effective. Posing appropriate questions during the reading process can aid the learner’s comprehension. We present a novel approach to creating educational Question-Answers (QAs) from eBook content. The model first summarizes key information from an input document, and the summary is then used to create relevant QAs. We build our educational QA generation model by fine-tuning a pretrained LM (T5) using maximum likelihood estimation. We also present a contrastive fine-tuning method, in which a contrastive loss between positive and negative training feature pairs is added during fine-tuning. Extensive evaluation on QA datasets (FairytaleQA and HotpotQA) and an NCERT e-book demonstrates the effectiveness and practicability of our eQA model.
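To make the combined training objective concrete, the following is a minimal sketch of a maximum-likelihood term plus a weighted contrastive term. The abstract does not state the exact form of the contrastive loss, so the InfoNCE-style formulation, the `temperature` and `weight` parameters, and all function names here are illustrative assumptions rather than the authors' implementation. Plain Python lists stand in for model features.

```python
import math

def cosine(u, v):
    """Cosine similarity between two feature vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def nll(token_probs):
    """Maximum-likelihood term: mean negative log-probability of the gold tokens."""
    return -sum(math.log(p) for p in token_probs) / len(token_probs)

def contrastive(anchor, positive, negatives, temperature=0.1):
    """Assumed InfoNCE-style term: small when the anchor is closer to the
    positive feature than to any of the negative features."""
    pos = math.exp(cosine(anchor, positive) / temperature)
    neg = sum(math.exp(cosine(anchor, n) / temperature) for n in negatives)
    return -math.log(pos / (pos + neg))

def total_loss(token_probs, anchor, positive, negatives, weight=0.5):
    """Hypothetical combined objective: MLE loss plus a weighted contrastive loss."""
    return nll(token_probs) + weight * contrastive(anchor, positive, negatives)
```

In an actual fine-tuning run the token probabilities and feature vectors would come from the T5 model, and the weighting between the two terms would be a tuned hyperparameter.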

References

Banerjee, S., Lavie, A.: METEOR: an automatic metric for MT evaluation with improved correlation with human judgments. In: Proceedings of the ACL Workshop on Intrinsic and Extrinsic Evaluation Measures for Machine Translation and/or Summarization, Ann Arbor, Michigan, June 2005, pp. 65–72. Association for Computational Linguistics (2005)

Bishop, C.M.: Pattern Recognition and Machine Learning (Information Science and Statistics). Springer, Heidelberg (2006)

Chan, Y.-H., Fan, Y.-C.: A recurrent BERT-based model for question generation. In: Proceedings of the 2nd Workshop on Machine Reading for Question Answering, Hong Kong, China, November 2019, pp. 154–162. Association for Computational Linguistics (2019)

Chen, G., Yang, J., Gasevic, D.: A comparative study on question-worthy sentence selection strategies for educational question generation. In: Isotani, S., Millán, E., Ogan, A., Hastings, P., McLaren, B., Luckin, R. (eds.) AIED 2019. LNCS (LNAI), vol. 11625, pp. 59–70. Springer, Cham (2019). https://doi.org/10.1007/978-3-030-23204-7_6

Cho, W.S., et al.: Contrastive multi-document question generation. In: Proceedings of the 16th Conference of the European Chapter of the ACL, pp. 12–30 (2021)

De Kuthy, K., Kannan, M., Ponnusamy, H.S., Meurers, D.: Towards automatically generating questions under discussion to link information and discourse structure. In: Proceedings of the 28th International Conference on Computational Linguistics, pp. 5786–5798 (2020)

Demszky, D., Guu, K., Liang, P.: Transforming question answering datasets into natural language inference datasets. CoRR, abs/1809.02922 (2018)

Devlin, J., Chang, M.-W., Lee, K., Toutanova, K.: BERT: pre-training of deep bidirectional transformers for language understanding. In: Proceedings of the 2019 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies, (Long and Short Papers), Minneapolis, Minnesota, June 2019, vol. 1, pp. 4171–4186. Association for Computational Linguistics (2019)

Dhole, K.D., Manning, C.D.: Syn-QG: syntactic and shallow semantic rules for question generation. arXiv, abs/2004.08694 (2020)

Dong, L., et al.: Unified Language Model Pre-training for Natural Language Understanding and Generation. Curran Associates Inc., Red Hook (2019)

Du, X., Shao, J., Cardie, C.: Learning to ask: neural question generation for reading comprehension. CoRR, abs/1705.00106 (2017)

Ebersbach, M., Feierabend, M., Nazari, K.B.B.: Comparing the effects of generating questions, testing, and restudying on students’ long-term recall in university learning. Appl. Cogn. Psychol. 34(3), 724–736 (2020)

Kurdi, G., Leo, J., Parsia, B., Sattler, U., Al-Emari, S.: A systematic review of automatic question generation for educational purposes. Int. J. Artif. Intell. Educ. 30, 121–204 (2020)

Golinkoff, R.M.: Language matters: denying the existence of the 30-million-word gap has serious consequences. Child Dev. 90(3), 985–992 (2019)

Gretz, S., Bilu, Y., Cohen-Karlik, E., Slonim, N.: The workweek is the best time to start a family - a study of GPT-2 based claim generation. In: Findings of the Association for Computational Linguistics, EMNLP 2020, November 2020. Association for Computational Linguistics, pp. 528–544 (2020)

Gwet, K.L.: On the Krippendorff’s alpha coefficient (2011)

Ganotice, F.A., Jr., Downing, K., Mak, T., Chan, B., Lee, W.Y.: Enhancing parent-child relationship through dialogic reading. Educ. Stud. 43(1), 51–66 (2017)

Kočiský, T., et al.: The NarrativeQA reading comprehension challenge. Trans. Assoc. Comput. Linguist. 6, 317–328 (2018)

Krishna, K., Iyyer, M.: Generating question-answer hierarchies. In: Proceedings of the 57th Annual Meeting of the ACL, Florence, Italy, pp. 2321–2334, July 2019. Association for Computational Linguistics (2019)

Kumar, S., Chauhan, A.: Augmenting textbooks with CQA question-answers and annotated YouTube videos to increase its relevance. Neural Process Lett. (2022)

Kumar, S., Chauhan, A.: Enriching textbooks by question-answers using CQA. In: 2019 IEEE Region 10 Conference (TENCON), TENCON 2019, pp. 707–714 (2019)

Kumar, S., Chauhan, A.: Recommending question-answers for enriching textbooks. In: Bellatreche, L., Goyal, V., Fujita, H., Mondal, A., Reddy, P.K. (eds.) BDA 2020. LNCS, vol. 12581, pp. 308–328. Springer, Cham (2020). https://doi.org/10.1007/978-3-030-66665-1_20

Kumar, S., Chauhan, A.: A finetuned language model for recommending cQA-QAs for enriching textbooks. In: Karlapalem, K., et al. (eds.) PAKDD 2021. LNCS (LNAI), vol. 12713, pp. 423–435. Springer, Cham (2021). https://doi.org/10.1007/978-3-030-75765-6_34

Labutov, I., Basu, S., Vanderwende, L.: Deep questions without deep understanding. In: Proceedings of the 53rd Annual Meeting of the Association for Computational Linguistics and the 7th International Joint Conference on Natural Language Processing (Volume 1: Long Papers), Beijing, China, July 2015, pp. 889–898. Association for Computational Linguistics (2015)

Lewis, P., et al.: PAQ: 65 million probably-asked questions and what you can do with them. Trans. Assoc. Comput. Linguist. 9, 1098–1115 (2021)

Li, M., et al.: Timeline summarization based on event graph compression via time-aware optimal transport. In: Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing, Punta Cana, Dominican Republic, pp. 6443–6456, November 2021. Association for Computational Linguistics (2021)

Lin, C.-Y.: ROUGE: a package for automatic evaluation of summaries. In: Text Summarization Branches Out, Barcelona, Spain, July 2004, pp. 74–81. Association for Computational Linguistics (2004)

Lin, T.-Y., Goyal, P., Girshick, R., He, K., Dollár, P.: Focal loss for dense object detection. IEEE Trans. Pattern Anal. Mach. Intell. 42(2), 318–327 (2020)

Lyu, C., Shang, L., Graham, Y., Foster, J., Jiang, X., Liu, Q.: Improving unsupervised question answering via summarization-informed question generation. In: Proceedings of the EMNLP, pp. 4134–4148 (2021)

Nguyen, T., et al.: MS MARCO: a human generated machine reading comprehension dataset. CoRR, abs/1611.09268 (2016)

Pan, L., Xie, Y., Feng, Y., Chua, T.-S., Kan, M.-Y.: Semantic graphs for generating deep questions. In: Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, July 2020, pp. 1463–1475. Association for Computational Linguistics (2020)

Pan, L., Xie, Y., Feng, Y., Chua, T.-S., Kan, M.-Y.: Semantic graphs for generating deep questions. In: Proceedings of the 58th Annual Meeting of the ACL, pp. 1463–1475 (2020)

Papineni, K., Roukos, S., Ward, T., Zhu, W.-J.: Bleu: a method for automatic evaluation of machine translation. In: Proceedings of the 40th Annual Meeting on Association for Computational Linguistics, ACL 2002, USA, pp. 311–318. Association for Computational Linguistics (2002)

Pyatkin, V., Roit, P., Michael, J., Tsarfaty, R., Goldberg, Y., Dagan, I.: Asking it all: generating contextualized questions for any semantic role. CoRR, abs/2109.04832 (2021)

Qi, W., et al.: ProphetNet: predicting future n-gram for sequence-to-sequence pre-training. In: EMNLP 2020, pp. 2401–2410 (2020)

Radford, A., et al.: Learning transferable visual models from natural language supervision. CoRR, abs/2103.00020 (2021)

Radford, A., Wu, J., Child, R., Luan, D., Amodei, D., Sutskever, I.: Language Models are Unsupervised Multitask Learners (2019)

Ruan, S., et al.: Quizbot: a dialogue-based adaptive learning system for factual knowledge. In: Proceedings of the 2019 CHI Conference on Human Factors in Computing Systems, CHI 2019, pp. 1–13. Association for Computing Machinery, New York (2019)

Rush, A.M., Chopra, S., Weston, J.: A neural attention model for abstractive sentence summarization. In: Proceedings of the 2015 Conference on Empirical Methods in Natural Language Processing, Lisbon, Portugal, September 2015, pp. 379–389. Association for Computational Linguistics (2015)

Scialom, T., Piwowarski, B., Staiano, J.: Self-attention architectures for answer-agnostic neural question generation. In: Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics, Florence, Italy, July 2019, pp. 6027–6032. Association for Computational Linguistics (2019)

Stasaski, K., Hearst, M.A.: Multiple choice question generation utilizing an ontology. In: Proceedings of the 12th Workshop on Innovative Use of NLP for Building Educational Applications, Copenhagen, Denmark, September 2017, pp. 303–312. Association for Computational Linguistics (2017)

Tuan, L.A., Shah, D.J., Barzilay, R.: Capturing greater context for question generation. CoRR, abs/1910.10274 (2019)

Wang, D., Liu, P., Zheng, Y., Qiu, X., Huang, X.: Heterogeneous graph neural networks for extractive document summarization. In: Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, July 2020, pp. 6209–6219. Association for Computational Linguistics (2020)

Wang, T., Yuan, X., Trischler, A.: A joint model for question answering and question generation. In: Learning to Generate Natural Language Workshop, ICML 2017 (2017)

Wang, Z., Lan, A.S., Nie, W., Waters, A.E., Grimaldi, P.J., Baraniuk, R.G.: QG-Net: a data-driven question generation model for educational content. In: Proceedings of the Fifth Annual ACM Conference on Learning at Scale, L@S 2018. Association for Computing Machinery, New York (2018)

Xu, J., Gan, Z., Cheng, Y., Liu, J.: Discourse-aware neural extractive text summarization. In: Proceedings of the 58th Annual Meeting of the Association for Computational Linguistics, pp. 5021–5031, July 2020. Association for Computational Linguistics (2020)

Xu, Y., Wang, D., Collins, P., Lee, H., Warschauer, M.: Same benefits, different communication patterns: comparing children’s reading with a conversational agent vs. a human partner. Comput. Educ. (2021)

Xu, Y., et al.: Fantastic questions and where to find them: FairytaleQA - an authentic dataset for narrative comprehension. Association for Computational Linguistics (2022)

Yang, Z., et al.: HotpotQA: a dataset for diverse, explainable multi-hop question answering. CoRR, abs/1809.09600 (2018)

Zhang, T., Kishore, V., Wu, F., Weinberger, K.Q., Artzi, Y.: BERTScore: evaluating text generation with BERT. CoRR, abs/1904.09675 (2019)

Zhao, Y., Ni, X., Ding, Y., Ke, Q.: Paragraph-level neural question generation with maxout pointer and gated self-attention networks. In: Proceedings of the 2018 Conference on Empirical Methods in Natural Language Processing, Brussels, Belgium, October–November 2018, pp. 3901–3910. Association for Computational Linguistics (2018)

Appendix

A BERT Score for Semantic Match

Besides standard evaluation metrics such as METEOR and ROUGE-L, we also employ BERTScore [50] to assess the semantic similarity between the questions generated by our QG model and the ground-truth questions, and report the average precision, recall, and F1 values. Table 12 outlines the computed BERTScore values for the top-k generated questions. The results show that our model can generate relevant questions for the given text.
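For readers unfamiliar with how these precision, recall, and F1 values arise, the sketch below illustrates the greedy-matching idea behind BERTScore: each token is matched to its most similar token on the other side by cosine similarity. It is a simplified illustration, not the evaluation code used in the paper. The token vectors are hypothetical toy inputs supplied by the caller instead of real BERT embeddings, and optional refinements of the metric such as idf weighting and baseline rescaling are omitted.

```python
import math

def cosine(u, v):
    """Cosine similarity between two token embedding vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)

def greedy_match_scores(candidate_vecs, reference_vecs):
    """Return (precision, recall, f1) from greedy token matching.

    precision: each candidate token matched to its best reference token.
    recall:    each reference token matched to its best candidate token.
    """
    precision = sum(
        max(cosine(c, r) for r in reference_vecs) for c in candidate_vecs
    ) / len(candidate_vecs)
    recall = sum(
        max(cosine(r, c) for c in candidate_vecs) for r in reference_vecs
    ) / len(reference_vecs)
    total = precision + recall
    f1 = 2 * precision * recall / total if total > 0 else 0.0
    return precision, recall, f1

# Toy usage: a generated question with one extra, unrelated token.
generated = [[1.0, 0.0], [0.0, 1.0]]
ground_truth = [[1.0, 0.0]]
p, r, f1 = greedy_match_scores(generated, ground_truth)
```

In this toy case recall is perfect because the single ground-truth token is covered, while precision drops because one generated token has no counterpart.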

B Few More Examples of Generated QAs for Text Document

We present two more sample input texts, together with the ground-truth QAs, the QAs generated by the CBQA model, and the QAs generated by our edu-QA generation model. Table 13 shows a sample text from the NCERT CCT eBook, the generated summary, and the QAs generated by our model and by the CBQA model [25]. Table 14 shows a sample input text from the FairytaleQA dataset, the associated ground-truth QAs, the generated summary, and the QAs generated by our model and by the CBQA model [25].

Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Kumar, S., Chauhan, A., Kumar C., P. (2022). Learning Enhancement Using Question-Answer Generation for e-Book Using Contrastive Fine-Tuned T5. In: Roy, P.P., Agarwal, A., Li, T., Krishna Reddy, P., Uday Kiran, R. (eds) Big Data Analytics. BDA 2022. Lecture Notes in Computer Science, vol 13773. Springer, Cham. https://doi.org/10.1007/978-3-031-24094-2_5

DOI: https://doi.org/10.1007/978-3-031-24094-2_5

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-24093-5

Online ISBN: 978-3-031-24094-2

eBook Packages: Computer Science (R0)