Abstract

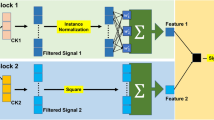

The attention mechanism has become one of the most widely used deep learning techniques in recent years, and it can arguably produce human-interpretable results. In this research, we developed a classification model that combines two self-attention modules with a convolutional neural network. The model matched or exceeded benchmark performance on two electroencephalography (EEG) recording datasets. Moreover, by visualizing the attention maps that the self-attention modules produced, we demonstrated that the modules captured signal features including the average voltage and the instantaneous voltage change of the EEG signals.
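To make the idea of an attention map concrete, the following is a minimal sketch of generic scaled dot-product self-attention applied across the time steps of a multichannel signal. It is not the architecture from the paper: the function name `self_attention`, the random projection matrices, the input dimensions, and the use of synthetic noise in place of EEG data are all assumptions chosen for illustration.

```python
import numpy as np


def self_attention(x, w_q, w_k, w_v):
    """Scaled dot-product self-attention over time steps.

    x: array of shape (T, C), T time steps by C channels.
    w_q, w_k, w_v: projection matrices of shape (C, d).
    Returns (output, attention_map) with shapes (T, d) and (T, T).
    """
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    # Similarity of every time step with every other time step.
    scores = q @ k.T / np.sqrt(k.shape[-1])
    # Subtract the row maximum before exponentiating for numerical stability.
    scores = scores - scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)
    # Row-wise softmax: each row becomes a distribution over time steps.
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ v, weights


# Hypothetical dimensions and synthetic data, for illustration only.
rng = np.random.default_rng(0)
T, C, d = 128, 8, 16
signal = rng.standard_normal((T, C))
w_q, w_k, w_v = (rng.standard_normal((C, d)) * 0.1 for _ in range(3))

out, attn = self_attention(signal, w_q, w_k, w_v)
print(out.shape, attn.shape)
```

Each row of `attn` is a probability distribution over time steps, so plotting it as a heat map shows which parts of the recording a given time step attends to. In a trained model the projection matrices would be learned rather than random.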




Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Yi, L., Qu, X. (2022). Attention-Based CNN Capturing EEG Recording’s Average Voltage and Local Change. In: Degen, H., Ntoa, S. (eds) Artificial Intelligence in HCI. HCII 2022. Lecture Notes in Computer Science, vol 13336. Springer, Cham. https://doi.org/10.1007/978-3-031-05643-7_29


DOI: https://doi.org/10.1007/978-3-031-05643-7_29


Publisher Name: Springer, Cham

Print ISBN: 978-3-031-05642-0

Online ISBN: 978-3-031-05643-7

eBook Packages: Computer Science (R0)