Abstract

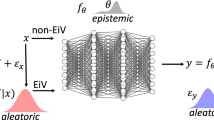

This work extends Bayesian regression into an adaptive model augmented by a deep neural network (the probabilistic encoder) that yields the posterior probability distributions of the regression coefficients. We use variational inference to obtain the conditional distribution over the regression coefficients, which form the latent space, given the observed data. As a result, our model can recognize the local conditional probability distribution of the regression coefficients for each observation and can measure the model's uncertainty locally. Experimental results on benchmark datasets show that our Bayesian Meta Regression (BMR) significantly outperforms baseline regression techniques and precisely measures the model uncertainty for each observation.
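To make the idea of per-observation coefficient posteriors concrete, the following is a minimal, hypothetical sketch and not the authors' BMR architecture. It assumes a one-hidden-layer tanh encoder that maps an input x to the mean and log-variance of a diagonal Gaussian over the coefficients β(x), a standard normal prior on β, and a Gaussian likelihood with a fixed noise level. Coefficients are drawn with the reparameterization trick, the prediction is the dot product x·β, and the spread of sampled predictions serves as a local uncertainty estimate. All function names and hyperparameters are illustrative assumptions; training (maximizing the ELBO by gradient ascent) is omitted.

```python
import math
import random


def matvec(W, v):
    # W is a list of rows; returns the matrix-vector product W @ v.
    return [sum(w * x for w, x in zip(row, v)) for row in W]


def init_encoder(d_in, d_hidden, rng):
    # Hypothetical encoder: x -> hidden layer -> (mu, log_var) for d_in coefficients.
    def rand_matrix(rows, cols):
        return [[rng.gauss(0.0, 0.1) for _ in range(cols)] for _ in range(rows)]
    return {
        "W_h": rand_matrix(d_hidden, d_in),
        "W_mu": rand_matrix(d_in, d_hidden),
        "W_lv": rand_matrix(d_in, d_hidden),
    }


def encode(params, x):
    # Parameters of q(beta | x): one mean and one log-variance per coefficient.
    h = [math.tanh(a) for a in matvec(params["W_h"], x)]
    return matvec(params["W_mu"], h), matvec(params["W_lv"], h)


def sample_beta(mu, log_var, rng):
    # Reparameterization: beta = mu + sigma * eps with eps ~ N(0, 1).
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mu, log_var)]


def kl_to_standard_normal(mu, log_var):
    # Closed-form KL(N(mu, diag(exp(log_var))) || N(0, I)).
    return 0.5 * sum(math.exp(lv) + m * m - 1.0 - lv for m, lv in zip(mu, log_var))


def local_prediction(params, x, n_samples, rng):
    # Monte Carlo mean and standard deviation of y_hat = x . beta for one observation.
    mu, log_var = encode(params, x)
    preds = []
    for _ in range(n_samples):
        beta = sample_beta(mu, log_var, rng)
        preds.append(sum(b * xi for b, xi in zip(beta, x)))
    mean = sum(preds) / n_samples
    var = sum((p - mean) ** 2 for p in preds) / (n_samples - 1)
    return mean, math.sqrt(var)


def elbo(params, x, y, noise_std, n_samples, rng):
    # Monte Carlo estimate of E_q[log p(y | x, beta)] - KL(q(beta | x) || p(beta)),
    # assuming a Gaussian likelihood with fixed noise_std and a N(0, I) prior.
    mu, log_var = encode(params, x)
    log_lik = 0.0
    for _ in range(n_samples):
        beta = sample_beta(mu, log_var, rng)
        y_hat = sum(b * xi for b, xi in zip(beta, x))
        log_lik += (-0.5 * math.log(2.0 * math.pi * noise_std ** 2)
                    - 0.5 * ((y - y_hat) / noise_std) ** 2)
    return log_lik / n_samples - kl_to_standard_normal(mu, log_var)


if __name__ == "__main__":
    rng = random.Random(0)
    params = init_encoder(d_in=3, d_hidden=8, rng=rng)
    x = [1.0, 0.5, -2.0]  # first entry acts as an intercept term
    mean, std = local_prediction(params, x, n_samples=200, rng=rng)
    print("prediction:", mean, "local std:", std)
    print("ELBO estimate:", elbo(params, x, y=0.3, noise_std=1.0, n_samples=50, rng=rng))
```

Because the encoder is conditioned on x, two observations generally receive different coefficient distributions, which is what allows uncertainty to be reported per observation rather than once for the whole model.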

Acknowledgements

This research was supported by the Basic Science Research Program through the National Research Foundation of Korea (NRF), funded by the Ministry of Science, ICT and Future Planning (No. 2019K2A9A2A06020672 and No. 2020R1A2B5B02001717).

Copyright information

© 2021 The Author(s), under exclusive license to Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Munkhdalai, L., Pham, V.H., Ryu, K.H. (2021). Bayesian Meta Regression. In: Pan, JS., Li, J., Ryu, K.H., Meng, Z., Klasnja-Milicevic, A. (eds) Advances in Intelligent Information Hiding and Multimedia Signal Processing. Smart Innovation, Systems and Technologies, vol 212. Springer, Singapore. https://doi.org/10.1007/978-981-33-6757-9_7

DOI: https://doi.org/10.1007/978-981-33-6757-9_7

Publisher Name: Springer, Singapore

Print ISBN: 978-981-33-6756-2

Online ISBN: 978-981-33-6757-9

eBook Packages: Intelligent Technologies and Robotics, Intelligent Technologies and Robotics (R0)