Abstract

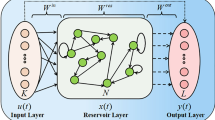

Reservoir Computing (RC) is a Recurrent Neural Network (RNN) approach designed to process the transient information generated by tasks on sequential data. As a typical realization of classical RC, the Echo State Network (ESN) is trained by linear optimization, needs only very small training datasets, and runs fairly fast. However, classical ESNs rely on a completely random reservoir matrix to define their basic recurrent operations, and they come with many hyper-parameters to optimize, such as input weights, reservoir weights, warm-up time steps, and activation functions. The recently proposed Nonlinear Vector Autoregressive (NVAR) algorithm, which requires neither random input weights nor random reservoir weights, is regarded as equivalent to the traditional ESN reservoir. As a promising direction for RC development, NVAR has only a few hyper-parameters to optimize. In this study, a multi-layer ESN model based on NVAR (NVAR-MLESN) is constructed and applied to time series prediction. Three sequential benchmarks, namely the synthetic Mackey-Glass chaotic sequence, the Sunspot sequence, and the real-world Santa Fe Laser dataset, are used to demonstrate the advantages of NVAR-MLESN. Extensive simulation results show that NVAR-MLESN improves on the performance of both the classical MLESN and the single-layer NVAR-ESN. In addition, compared with traditional deep ESNs or MLESNs, NVAR-MLESN has fewer parameters to optimize, computes faster, and performs better.
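The NVAR idea summarized above (no random weights, a linear readout trained by regularized least squares) can be illustrated with a minimal single-layer sketch. This is not the authors' NVAR-MLESN: it assumes a feature vector built from a constant, `k` delayed copies of the input, and their unique quadratic products, followed by a closed-form ridge readout. The function names (`nvar_features`, `ridge_fit`, `henon_series`), the parameter values, and the Hénon map used as a toy series are all illustrative assumptions and do not come from the paper, whose benchmarks are Mackey-Glass, Sunspot, and Santa Fe Laser.

```python
import numpy as np

def nvar_features(x, k=2, s=1):
    """Constant + k delayed copies of x (spaced s steps apart) + unique quadratic products."""
    x = np.asarray(x, dtype=float)
    start = (k - 1) * s  # first time index that has a full delay window
    # Linear part: columns are x[t], x[t-s], ..., x[t-(k-1)s] for t = start..len(x)-1
    lin = np.column_stack([x[start - j * s: len(x) - j * s] for j in range(k)])
    # Nonlinear part: unique pairwise products of the linear columns
    iu, ju = np.triu_indices(k)
    quad = lin[:, iu] * lin[:, ju]
    ones = np.ones((lin.shape[0], 1))
    return np.hstack([ones, lin, quad])

def ridge_fit(features, targets, alpha=1e-6):
    """Closed-form ridge (Tikhonov) regression for the linear readout."""
    gram = features.T @ features + alpha * np.eye(features.shape[1])
    return np.linalg.solve(gram, features.T @ targets)

def henon_series(n, a=1.4, b=0.3):
    """Toy chaotic series (assumption, not a benchmark from the paper)."""
    x = np.zeros(n)
    for t in range(1, n - 1):
        x[t + 1] = 1.0 - a * x[t] ** 2 + b * x[t - 1]
    return x

# One-step-ahead prediction: features at time t, target x[t+1].
k, s = 2, 1
series = henon_series(600)
start = (k - 1) * s
X = nvar_features(series, k=k, s=s)[:-1]  # drop the last row, which has no target
y = series[start + 1:]

split = 400  # train on the first 400 samples, test on the rest
w = ridge_fit(X[:split], y[:split])
pred = X[split:] @ w
rmse = float(np.sqrt(np.mean((pred - y[split:]) ** 2)))
print(f"test RMSE: {rmse:.2e}")
```

The only tunable quantities in this sketch are the number of delays `k`, the delay spacing `s`, and the ridge strength `alpha`, which is the sense in which NVAR has few hyper-parameters. The Hénon map is exactly quadratic in its two most recent values, so the features can represent it; real benchmarks would generally need more delays or higher-order terms.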

Acknowledgments

The authors gratefully acknowledge the support of the following foundations: National Natural Science Foundation of China (61603343 and 62173309), Key Research Projects of Henan Higher Education Institutions (22A413009).

Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Wang, H., Liu, Y., Wang, D., Luo, Y., Xin, J. (2022). Multi-layer Echo State Network with Nonlinear Vector Autoregression Reservoir for Time Series Prediction. In: Zhang, H., et al. Neural Computing for Advanced Applications. NCAA 2022. Communications in Computer and Information Science, vol 1637. Springer, Singapore. https://doi.org/10.1007/978-981-19-6142-7_37

DOI: https://doi.org/10.1007/978-981-19-6142-7_37

Publisher Name: Springer, Singapore

Print ISBN: 978-981-19-6141-0

Online ISBN: 978-981-19-6142-7

eBook Packages: Computer Science, Computer Science (R0)