Abstract

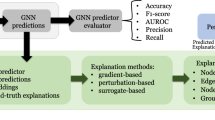

Interpretable machine learning, or explainable artificial intelligence, is developing rapidly in response to the opacity of deep learning techniques. In graph analysis, motivated by the effectiveness of deep learning, graph neural networks (GNNs) have become increasingly popular for modeling graph data. Recently, a growing number of approaches have been proposed to explain GNNs or to improve their interpretability. In this chapter, we survey these approaches. Specifically, the first section reviews the fundamental concepts of interpretability in deep learning. The second section introduces post-hoc explanation methods for understanding GNN predictions. The third section introduces advances in developing more interpretable models for graph data. The fourth section introduces the datasets and metrics for evaluating interpretation. Finally, we point out future directions for the topic.

Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Singapore Pte Ltd.

About this chapter

Cite this chapter

Liu, N., Feng, Q., Hu, X. (2022). Interpretability in Graph Neural Networks. In: Wu, L., Cui, P., Pei, J., Zhao, L. (eds) Graph Neural Networks: Foundations, Frontiers, and Applications. Springer, Singapore. https://doi.org/10.1007/978-981-16-6054-2_7

DOI: https://doi.org/10.1007/978-981-16-6054-2_7

Publisher Name: Springer, Singapore

Print ISBN: 978-981-16-6053-5

Online ISBN: 978-981-16-6054-2

eBook Packages: Computer Science, Computer Science (R0)