Abstract

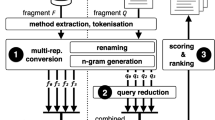

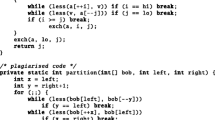

As code intelligence and collaborative computing advance, code representation models (CRMs) have demonstrated exceptional performance in tasks such as code prediction and collaborative code development by leveraging distributed computing resources and shared datasets. Nonetheless, CRMs are often considered unreliable because they are vulnerable to adversarial attacks: they fail to make correct predictions when their inputs contain perturbations. Several adversarial attack methods have been proposed to evaluate the robustness of CRMs and ensure their reliability in applications. However, these methods rely primarily on code’s textual features and do not fully exploit its crucial structural features. To address this limitation, we propose STRUCK, a novel adversarial attack method that thoroughly exploits code’s structural features. The key idea of STRUCK is to integrate multiple global and local perturbation methods and to select among them effectively, based on the structural features of the input code, when generating adversarial examples for CRMs. We conduct comprehensive evaluations of seven basic or advanced CRMs on two prevalent code classification tasks, demonstrating STRUCK’s effectiveness, efficiency, and imperceptibility. Finally, we show that STRUCK enables a more precise assessment of CRMs’ robustness and, through adversarial training, increases their resistance to structural attacks.


Acknowledgment

The research is supported by the National Key R&D Program of China under grant No. 2022YFF0902500 and the Guangdong Basic and Applied Basic Research Foundation, China (No. 2023A1515011050). Liang Chen is the corresponding author.

Copyright information

© 2024 ICST Institute for Computer Sciences, Social Informatics and Telecommunications Engineering

About this paper

Cite this paper

Zhang, Y., Wu, R., Liao, J., Chen, L. (2024). Structural Adversarial Attack for Code Representation Models. In: Gao, H., Wang, X., Voros, N. (eds) Collaborative Computing: Networking, Applications and Worksharing. CollaborateCom 2023. Lecture Notes of the Institute for Computer Sciences, Social Informatics and Telecommunications Engineering, vol 562. Springer, Cham. https://doi.org/10.1007/978-3-031-54528-3_22

DOI: https://doi.org/10.1007/978-3-031-54528-3_22

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-54527-6

Online ISBN: 978-3-031-54528-3

eBook Packages: Computer Science, Computer Science (R0)