Abstract

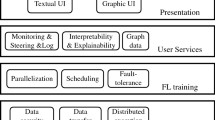

Since its debut in 2016, Federated Learning (FL) has been tied to the inner workings of Deep Neural Networks (DNNs). This coupling let FL develop as DNNs proliferated, but it neglected scenarios in which using DNNs is not possible or not advantageous. The fact that most current FL frameworks support only DNNs reinforces the problem. To address the lack of non-DNN-based FL solutions, we propose MAFL (Model-Agnostic Federated Learning). MAFL merges a model-agnostic FL algorithm, AdaBoost.F, with an open, industry-grade FL framework: Intel® OpenFL. MAFL is the first FL system not tied to any machine learning model, allowing exploration of FL beyond DNNs. We test MAFL from multiple points of view, assessing its correctness, flexibility, and scaling properties on up to 64 nodes of an HPC cluster. We also show how we optimised OpenFL, achieving a 5.5\(\times\) speedup over a standard FL scenario. MAFL is compatible with x86-64, ARM-v8, Power and RISC-V.
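
To make the idea of a model-agnostic FL algorithm concrete, the following is a minimal sketch of federated boosting in the spirit of AdaBoost. It is not the authors' AdaBoost.F implementation and does not use the OpenFL API. It assumes binary labels in {-1, +1}, runs all clients in a single process, and uses hypothetical names (`Stump`, `federated_boosting`, `predict`). The point it illustrates is that the aggregator only compares and combines weak learners through their weighted errors, so any model exposing `fit` and `predict` could replace the stump.

```python
import math


class Stump:
    """Hypothetical weak learner: a threshold rule on 1-D inputs."""

    def fit(self, X, y, w):
        best = (float("inf"), 0.0, 1)
        for t in sorted(set(X)):
            for sign in (1, -1):
                # Weighted error of the rule "predict sign if x >= t, else -sign".
                err = sum(wi for xi, yi, wi in zip(X, y, w)
                          if (sign if xi >= t else -sign) != yi)
                if err < best[0]:
                    best = (err, t, sign)
        _, self.t, self.sign = best
        return self

    def predict(self, X):
        return [self.sign if xi >= self.t else -self.sign for xi in X]


def federated_boosting(clients, rounds=5):
    """clients: list of (X, y) pairs, labels in {-1, +1}.

    Returns the ensemble as a list of (alpha, model) pairs.
    """
    weights = [[1.0] * len(X) for X, _ in clients]  # local sample weights
    ensemble = []
    for _ in range(rounds):
        # 1. Every client fits a weak learner on its own weighted data.
        candidates = [Stump().fit(X, y, w)
                      for (X, y), w in zip(clients, weights)]
        # 2. Every client scores every candidate locally; only the
        #    weighted error sums would need to leave the client.
        total = sum(sum(w) for w in weights)
        errors = []
        for h in candidates:
            miss = 0.0
            for (X, y), w in zip(clients, weights):
                miss += sum(wi for p, yi, wi in zip(h.predict(X), y, w)
                            if p != yi)
            errors.append(miss / total)
        # 3. The aggregator keeps the candidate with the lowest global error.
        k = min(range(len(candidates)), key=errors.__getitem__)
        eps = min(max(errors[k], 1e-10), 1 - 1e-10)  # avoid log(0)
        alpha = 0.5 * math.log((1 - eps) / eps)
        ensemble.append((alpha, candidates[k]))
        # 4. Clients re-weight their samples: mistakes gain weight.
        for (X, y), w in zip(clients, weights):
            for i, p in enumerate(candidates[k].predict(X)):
                w[i] *= math.exp(-alpha * y[i] * p)
    return ensemble


def predict(ensemble, X):
    """Sign of the alpha-weighted vote of all selected weak learners."""
    scores = [0.0] * len(X)
    for alpha, h in ensemble:
        for i, p in enumerate(h.predict(X)):
            scores[i] += alpha * p
    return [1 if s >= 0 else -1 for s in scores]


# Two toy clients whose local data are separable around x = 0.
toy_clients = [([-3, -2, -1, 1], [-1, -1, -1, 1]),
               ([-0.5, 0.5, 2, 3], [-1, 1, 1, 1])]
toy_ensemble = federated_boosting(toy_clients, rounds=3)
print(predict(toy_ensemble, [-2, 0.7, 2]))
```

In this sketch no gradients or model parameters are averaged. What is exchanged is a set of fitted weak learners and their error sums, which is why the scheme does not depend on the model being a neural network.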

Acknowledgments

This work has been supported by the Spoke "FutureHPC & BigData" of the ICSC - Centro Nazionale di Ricerca in "High Performance Computing, Big Data and Quantum Computing", funded by the European Union - NextGenerationEU, and by the EuPilot project, funded by EuroHPC JU under G.A. n. 101034126.

Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Mittone, G., Riviera, W., Colonnelli, I., Birke, R., Aldinucci, M. (2023). Model-Agnostic Federated Learning. In: Cano, J., Dikaiakos, M.D., Papadopoulos, G.A., Pericàs, M., Sakellariou, R. (eds) Euro-Par 2023: Parallel Processing. Euro-Par 2023. Lecture Notes in Computer Science, vol 14100. Springer, Cham. https://doi.org/10.1007/978-3-031-39698-4_26

DOI: https://doi.org/10.1007/978-3-031-39698-4_26

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-39697-7

Online ISBN: 978-3-031-39698-4

eBook Packages: Computer Science, Computer Science (R0)