Abstract

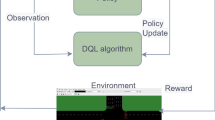

Recent developments in deep reinforcement learning have impacted many fields, especially decision-based control systems. Urban traffic signal control aims to minimize traffic congestion and overall traffic delay. In this work, we use a decentralized multi-agent reinforcement learning model with a novel state representation and reward function. Compared with single-agent models reported in the literature, this approach requires minimal data collection to control the traffic lights. We evaluate our model on synthetically generated traffic data. Additionally, we compare the results with those of existing models and use the Monaco SUMO Traffic (MoST) scenario to examine real-time traffic data.
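To make the decentralized setting concrete, here is a minimal sketch of independent Q-learning in which every intersection is its own agent and learns only from its local observations and reward. It is not the authors' model: a tabular Q-table stands in for the deep Q-network, and the state (capped queue lengths on two approaches), the reward (negative total queue length), the toy arrival dynamics (intersections are uncoupled here), and all names such as `IntersectionAgent`, `observe`, `step`, and `train` are hypothetical assumptions. The paper's novel state and reward function are not reproduced.

```python
import random
from collections import defaultdict


class IntersectionAgent:
    """Independent Q-learner for one intersection (tabular stand-in for a DQN)."""

    def __init__(self, n_actions=2, alpha=0.1, gamma=0.9, epsilon=0.1, seed=None):
        self.q = defaultdict(lambda: [0.0] * n_actions)  # state -> Q-value per action
        self.n_actions = n_actions
        self.alpha, self.gamma, self.epsilon = alpha, gamma, epsilon
        self.rng = random.Random(seed)

    def act(self, state):
        # Epsilon-greedy choice over this agent's own Q-values only.
        if self.rng.random() < self.epsilon:
            return self.rng.randrange(self.n_actions)
        values = self.q[state]
        return max(range(self.n_actions), key=values.__getitem__)

    def update(self, state, action, reward, next_state):
        # Watkins Q-learning: move Q(s, a) toward r + gamma * max_a' Q(s', a').
        target = reward + self.gamma * max(self.q[next_state])
        self.q[state][action] += self.alpha * (target - self.q[state][action])


def observe(queues, cap=5):
    # Hypothetical local state: queue lengths on the two approaches, capped at `cap`.
    return (min(queues[0], cap), min(queues[1], cap))


def step(queues, action, rng, arrival_p=(0.4, 0.3), discharge=2):
    # Hypothetical toy dynamics: random arrivals, then the green approach discharges.
    queues = list(queues)
    for i, p in enumerate(arrival_p):
        if rng.random() < p:
            queues[i] += 1
    queues[action] = max(0, queues[action] - discharge)
    reward = -sum(queues)  # assumed reward: negative total queue length
    return queues, reward


def train(n_intersections=4, steps=5000, seed=0):
    rng = random.Random(seed)
    agents = [IntersectionAgent(seed=seed + i) for i in range(n_intersections)]
    queues = [[0, 0] for _ in range(n_intersections)]
    total_reward = 0
    for _ in range(steps):
        for i, agent in enumerate(agents):
            s = observe(queues[i])           # each agent sees only its own queues
            a = agent.act(s)                 # 0 = green for approach 0, 1 = approach 1
            queues[i], r = step(queues[i], a, rng)
            agent.update(s, a, r, observe(queues[i]))
            total_reward += r
    return agents, total_reward / (steps * n_intersections)


if __name__ == "__main__":
    agents, avg_reward = train()
    print(f"average per-step reward: {avg_reward:.3f}")
```

Because each agent keeps its own table and observes only its own intersection, adding intersections does not enlarge any single agent's state space; in a real network the agents would also interact through the traffic flowing between them, which this toy deliberately omits.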

Finally, we use statistical model checking (specifically, the MultiVeStA tool) to check performance properties. Our model performs well on all the synthetically generated data and on the real-time data.
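Statistical model checking estimates a property from many independent simulation runs instead of exhaustively exploring the state space. The sketch below, which is not MultiVeStA's interface, illustrates the basic idea for an expected-value property: keep running a simulator until a (1 − alpha) confidence interval for the mean is no wider than `delta`. The function `toy_average_delay` is a hypothetical stand-in for one SUMO run, and the interval uses a normal approximation, an assumption that needs enough runs (hence `min_runs`).

```python
import math
import random
from statistics import NormalDist, fmean, stdev


def estimate_mean(simulate, alpha=0.05, delta=1.0, min_runs=30, max_runs=100_000):
    """Estimate E[simulate()] until the CI width is <= delta (or max_runs is hit).

    Returns (mean, number_of_runs, ci_width).
    """
    z = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided normal quantile
    min_runs = max(min_runs, 2)              # stdev needs at least two samples
    samples = []
    width = math.inf
    while len(samples) < max_runs:
        samples.append(simulate(len(samples)))  # one independent run, seeded by its index
        n = len(samples)
        if n >= min_runs:
            width = 2 * z * stdev(samples) / math.sqrt(n)
            if width <= delta:
                break
    return fmean(samples), len(samples), width


def toy_average_delay(run_id):
    # Hypothetical simulator: mean of 100 exponential waiting times (mean 5 s).
    rng = random.Random(run_id)
    return fmean(rng.expovariate(0.2) for _ in range(100))


if __name__ == "__main__":
    mean, runs, width = estimate_mean(toy_average_delay, alpha=0.05, delta=1.0)
    print(f"estimated average delay {mean:.2f} s from {runs} runs (CI width {width:.3f})")
```

Tightening `delta` or lowering `alpha` increases the number of runs required, which is the usual accuracy-versus-cost trade-off in statistical model checking.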

B. Thamilselvam is supported by the DST NM-ICPS Technology Innovation Hub on Autonomous Navigation and Data Acquisition Systems (TiHAN Foundation) at the Indian Institute of Technology Hyderabad.

S. Kalyanasundaram is supported by DST-SERB through the projects MTR/2020/000497 and CRG/2022/009400.

Copyright information

© 2023 IFIP International Federation for Information Processing

About this paper

Cite this paper

Thamilselvam, B., Kalyanasundaram, S., Panduranga Rao, M.V. (2023). Decentralized Multi Agent Deep Reinforcement Q-Learning for Intelligent Traffic Controller. In: Maglogiannis, I., Iliadis, L., MacIntyre, J., Dominguez, M. (eds) Artificial Intelligence Applications and Innovations. AIAI 2023. IFIP Advances in Information and Communication Technology, vol 675. Springer, Cham. https://doi.org/10.1007/978-3-031-34111-3_5

DOI: https://doi.org/10.1007/978-3-031-34111-3_5

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-34110-6

Online ISBN: 978-3-031-34111-3

eBook Packages: Computer Science, Computer Science (R0)