Abstract

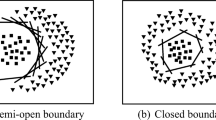

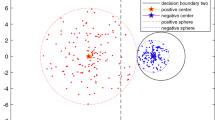

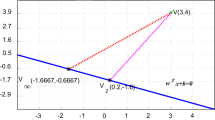

The classification loss functions used in deep neural network classifiers can be grouped into two categories, depending on whether they maximize the margin in Euclidean or in angular spaces. Methods that maximize the margin in Euclidean spaces use Euclidean distances between sample vectors during classification, whereas methods that maximize the margin in angular spaces use cosine similarity during the testing stage. This paper introduces a novel classification loss that maximizes the margin in both the Euclidean and angular spaces at the same time. This way, the Euclidean and cosine distances produce similar, consistent results and complement each other, which in turn improves accuracy. The proposed loss function forces the samples of each class to cluster around the center that represents that class. The class centers are chosen on the boundary of a hypersphere, and the pairwise distances between class centers are all equal. This restriction corresponds to choosing the centers from the vertices of a regular simplex. The proposed loss function has no hyperparameter that must be set by the user, so the method is very easy to use for classical classification problems. Moreover, since the class samples are compactly clustered around their corresponding means, the proposed classifier is also well suited to open set recognition problems, where test samples can come from unknown classes that are not seen in the training phase. Experimental studies show that the proposed method achieves state-of-the-art accuracies on open set recognition despite its simplicity.
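The two geometric claims in the abstract can be checked numerically. Class centers placed at the vertices of a regular simplex inscribed in a hypersphere are all equidistant from each other. Because every center also has the same norm, assigning a sample to its nearest center in Euclidean distance selects the same class as picking the largest cosine similarity. The Python sketch below illustrates this under stated assumptions. The simplex construction (centered standard basis vectors), the unit radius, the squared-distance attraction term `center_loss` and the distance-threshold rejection rule in `predict` are all hypothetical choices for illustration. They are not the authors' exact loss or implementation.

```python
import math
from typing import List, Optional, Sequence

Vector = List[float]


def simplex_centers(num_classes: int, dim: int, radius: float = 1.0) -> List[Vector]:
    """Return `num_classes` equidistant points on a hypersphere of `radius`.

    Assumed construction (one standard choice): subtract the centroid from the
    standard basis vectors e_1..e_C, rescale each to `radius`, then zero-pad to
    `dim`. The results are the vertices of a regular simplex centred at the origin.
    """
    if num_classes < 2:
        raise ValueError("need at least two classes")
    if dim < num_classes:
        raise ValueError("this construction needs dim >= num_classes")
    c = num_classes
    base_norm = math.sqrt((c - 1) / c)  # norm of e_i - (1/c) * ones
    centers = []
    for i in range(c):
        v = [((1.0 if j == i else 0.0) - 1.0 / c) * radius / base_norm for j in range(c)]
        centers.append(v + [0.0] * (dim - c))
    return centers


def sq_dist(a: Sequence[float], b: Sequence[float]) -> float:
    """Squared Euclidean distance."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity (assumes neither vector is zero)."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def center_loss(features: Sequence[Sequence[float]], labels: Sequence[int],
                centers: Sequence[Sequence[float]]) -> float:
    """Hypothetical attraction term: mean squared distance of each
    feature vector to the simplex vertex assigned to its class."""
    return sum(sq_dist(f, centers[y]) for f, y in zip(features, labels)) / len(features)


def predict(x: Sequence[float], centers: Sequence[Sequence[float]],
            reject_threshold: Optional[float] = None) -> int:
    """Nearest-center label; returns -1 ("unknown") when the nearest center is
    farther than `reject_threshold` (a hypothetical open-set rule)."""
    dists = [sq_dist(x, c) for c in centers]
    k = min(range(len(centers)), key=dists.__getitem__)
    if reject_threshold is not None and math.sqrt(dists[k]) > reject_threshold:
        return -1
    return k


if __name__ == "__main__":
    centers = simplex_centers(num_classes=4, dim=6)
    # Every center has norm 1, and every pair is sqrt(2 * C / (C - 1)) apart.
    print([round(math.sqrt(sq_dist(c, [0.0] * 6)), 6) for c in centers])
    print({round(sq_dist(centers[i], centers[j]), 6)
           for i in range(4) for j in range(i + 1, 4)})

    x = [0.9, -0.2, 0.1, -0.3, 0.05, 0.0]
    by_euclid = predict(x, centers)
    by_cosine = max(range(len(centers)), key=lambda k: cosine(x, centers[k]))
    print(by_euclid, by_cosine)  # both select class 0
    # A point far from every center is rejected as unknown (-1).
    print(predict([0.0, 0.0, 0.0, 0.0, 3.0, 3.0], centers, reject_threshold=0.5))
```

The agreement between `by_euclid` and `by_cosine` does not depend on the chosen point. Every vertex has the same norm r, so ‖x − s_k‖² = ‖x‖² + r² − 2xᵀs_k. The nearest center in Euclidean distance is therefore always the one with the largest cosine similarity. This particular construction uses C coordinates, although a regular simplex with C vertices fits in C − 1 dimensions. The paper's own choice of centers may differ.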



Acknowledgements

This work was supported by the Scientific and Technological Research Council of Turkey (TUBİTAK) under Grant number EEEAG-121E390.


Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Cevikalp, H., Saribas, H. (2023). Deep Simplex Classifier for Maximizing the Margin in Both Euclidean and Angular Spaces. In: Gade, R., Felsberg, M., Kämäräinen, JK. (eds) Image Analysis. SCIA 2023. Lecture Notes in Computer Science, vol 13886. Springer, Cham. https://doi.org/10.1007/978-3-031-31438-4_7


DOI: https://doi.org/10.1007/978-3-031-31438-4_7


Publisher Name: Springer, Cham

Print ISBN: 978-3-031-31437-7

Online ISBN: 978-3-031-31438-4

eBook Packages: Computer Science, Computer Science (R0)