Abstract

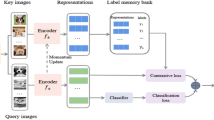

In this work, we consider supervised learning for image classification. Inspired by recent results in the field of supervised contrastive learning, we focus on the loss function for the feature encoder. We show that Softmax Cross Entropy (SCE) can be interpreted as a special kind of loss function in contrastive learning with prototypes. This insight provides a completely new perspective on cross entropy, allowing the derivation of a new generalized loss function, called Prototype Softmax Cross Entropy (PSCE), for use in supervised contrastive learning.
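The prototype reading of SCE can be illustrated with a small sketch. It assumes a bias-free linear classification layer and uses the plain dot product as similarity. The feature vector, weight values, and function names are hypothetical, and the code shows only the general equivalence, not the authors' PSCE definition, which this abstract does not give. Under these assumptions, each weight row of the classifier plays the role of a class prototype, and the usual cross entropy equals a contrastive loss with the true-class prototype as the positive and all other prototypes as negatives.

```python
import math


def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def softmax_cross_entropy(logits, label):
    """Standard softmax cross entropy for one sample, given class logits."""
    m = max(logits)  # subtract the max for numerical stability
    log_sum = m + math.log(sum(math.exp(v - m) for v in logits))
    return log_sum - logits[label]


def prototype_contrastive_loss(z, prototypes, label):
    """Contrast feature z against one prototype per class.

    The prototype of the true class is the positive; every other
    prototype is a negative. Similarity is the plain dot product.
    """
    sims = [dot(z, p) for p in prototypes]
    m = max(sims)
    log_sum = m + math.log(sum(math.exp(s - m) for s in sims))
    return -(sims[label] - log_sum)


# Hypothetical 2-D feature and a bias-free 3-class linear classifier.
z = [0.5, -1.0]
W = [[1.0, 0.0], [0.0, 1.0], [-1.0, -1.0]]  # one weight row per class

logits = [dot(w, z) for w in W]          # what the linear layer computes
sce = softmax_cross_entropy(logits, label=2)
proto = prototype_contrastive_loss(z, W, label=2)
```

Both values coincide because the logits of the linear layer are exactly the similarities between the feature and the weight rows.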

We show, both mathematically and experimentally, that PSCE outperforms other loss functions in supervised contrastive learning. Because it uses only fixed prototypes, it needs no self-organizing component of contrastive learning, which eliminates the memory bottleneck of previous supervised contrastive approaches. PSCE also works equally well on balanced and unbalanced data.
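Why fixed prototypes avoid a memory bottleneck can be sketched as follows. This is a minimal illustration under loud assumptions, not the PSCE loss itself: the one-hot prototypes, the cosine similarity, the temperature value, and all names are hypothetical choices made for this example. The point it shows is that each sample is compared only against a constant set of prototypes, so its loss does not depend on which other samples share the batch. Batch-based supervised contrastive losses instead draw positives and negatives from the other samples in the batch.

```python
import math

NUM_CLASSES = 3

# Assumption: one-hot vectors serve as fixed prototypes that are never updated.
PROTOTYPES = [
    [1.0 if i == c else 0.0 for i in range(NUM_CLASSES)]
    for c in range(NUM_CLASSES)
]


def normalize(v):
    """Scale a vector to unit length."""
    norm = math.sqrt(sum(a * a for a in v))
    return [a / norm for a in v]


def fixed_prototype_loss(feature, label, temperature=0.1):
    """Loss of a single sample against the fixed prototypes (cosine similarity)."""
    z = normalize(feature)
    sims = [sum(a * b for a, b in zip(z, p)) / temperature for p in PROTOTYPES]
    m = max(sims)  # subtract the max for numerical stability
    log_sum = m + math.log(sum(math.exp(s - m) for s in sims))
    return log_sum - sims[label]


def batch_loss(features, labels):
    """Mean of per-sample losses; no pairwise sample comparisons are needed."""
    losses = [fixed_prototype_loss(f, y) for f, y in zip(features, labels)]
    return sum(losses) / len(losses)


# Hypothetical features: the first is well aligned with class 0,
# the last lies halfway between classes 0 and 1.
features = [[2.0, 0.1, -0.3], [0.2, 1.5, 0.0], [-0.1, 0.3, 0.9], [1.0, 1.0, 0.0]]
labels = [0, 1, 2, 0]
per_sample = [fixed_prototype_loss(f, y) for f, y in zip(features, labels)]
```

In this sketch the loss of a sample is the same whether it is evaluated alone or inside a larger batch, so the batch size can be chosen freely without changing the objective.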

Acknowledgments

This work was partially supported by the “Research at Universities of Applied Sciences” program of the German Federal Ministry of Education and Research, funding code 13FH010IX6.

Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Bytyqi, Q., Wolpert, N., Schömer, E., Schwanecke, U. (2023). Prototype Softmax Cross Entropy: A New Perspective on Softmax Cross Entropy. In: Gade, R., Felsberg, M., Kämäräinen, JK. (eds) Image Analysis. SCIA 2023. Lecture Notes in Computer Science, vol 13886. Springer, Cham. https://doi.org/10.1007/978-3-031-31438-4_2

DOI: https://doi.org/10.1007/978-3-031-31438-4_2

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-31437-7

Online ISBN: 978-3-031-31438-4

eBook Packages: Computer Science (R0)