Abstract

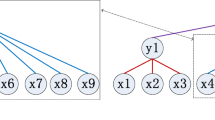

In traditional deep learning models, the downstream task receives latent features only from the terminal layer of the feature extractor. The intermediate layers of a feature extractor contain significant spatially salient information, which is lost when it passes through the interleaved pooling operations. When leveraged properly, these intermediate latent embeddings can improve overall performance on vision tasks. More complex combination schemes that feed the intermediate embeddings directly to the downstream task have recently been proposed, but they often require additional hyperparameters, which increases their computational cost, and they generalize poorly between datasets.

In this paper, we propose ConvMix, a novel, learned combination scheme for the intermediate latent features of a deep convolutional neural network (CNN) that can be trained without incurring additional training cost and can be readily transferred between datasets. ConvMix leverages features at multiple stages of a CNN to distill spatial information in images and create a richer embedding for the downstream task. Giving the network a ‘wider view’ by leveraging multi-level spatially pooled features of the image enables better regularization: it discourages the network from learning specific identifying features and encourages it to focus on the wider image instead. We visually confirm this ‘wider view’ via GradCAM and show that ConvMix ensures that spatially salient features are prioritized in the latent embeddings. In our experiments on the CIFAR10, CIFAR100, CINIC10, STL10, SVHN, and TinyImageNet datasets, we show that our approach not only achieves better performance than state-of-the-art approaches but, more importantly, that the percentage gain in performance scales with model and problem complexity due to the internal regularization effect of ConvMix.
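
The abstract does not spell out the combination rule, so the following is only an illustrative sketch of the general idea of combining spatially pooled features from several CNN stages. It assumes that each stage is global-average-pooled to a vector, scaled by a softmax-normalized learnable scalar, and concatenated. The function names (`global_avg_pool`, `combine_stages`) and the weighting scheme are hypothetical and should not be read as the authors' implementation.

```python
import math

def global_avg_pool(feature_map):
    """Average each channel of a [C][H][W] nested list down to one value."""
    pooled = []
    for channel in feature_map:
        values = [v for row in channel for v in row]
        pooled.append(sum(values) / len(values))
    return pooled

def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    shift = max(logits)
    exps = [math.exp(x - shift) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def combine_stages(stage_maps, stage_logits):
    """Pool every stage, scale it by its softmax weight, and concatenate.

    stage_logits stands in for learnable parameters (an assumption made
    for this sketch); in a real model they would be trained end to end.
    """
    weights = softmax(stage_logits)
    embedding = []
    for fmap, weight in zip(stage_maps, weights):
        embedding.extend(weight * v for v in global_avg_pool(fmap))
    return embedding

# Toy feature maps: an early stage (1 channel, 2x2) and a late stage (2 channels, 1x1).
early = [[[1.0, 3.0], [5.0, 7.0]]]
late = [[[2.0]], [[6.0]]]
embedding = combine_stages([early, late], [0.0, 0.0])
print(embedding)
```

With equal logits both stages receive weight 0.5, so the combined embedding has one entry per channel across all stages. The downstream classifier would consume this longer vector instead of the terminal-layer features alone.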

References

Achille, A., Soatto, S.: Information dropout: Learning optimal representations through noisy computation. IEEE Trans. Pattern Anal. Mach. Intell. 40(12), 2897–2905 (2018)

Bachlechner, T., Majumder, B.P., Mao, H., Cottrell, G., McAuley, J.: Rezero is all you need: Fast convergence at large depth. In: Uncertainty in Artificial Intelligence, pp. 1352–1361. PMLR (2021)

Belghazi, M.I., et al.: Mine: mutual information neural estimation. arXiv preprint arXiv:1801.04062 (2018)

Bengio, Y., Simard, P., Frasconi, P.: Learning long-term dependencies with gradient descent is difficult. IEEE Trans. Neural Netw. 5(2), 157–166 (1994)

Brown, T., et al.: Language models are few-shot learners. Adv. Neural Inform. Process. Syst. 33, 1877–1901 (2020)

Coates, A., Ng, A., Lee, H.: An analysis of single-layer networks in unsupervised feature learning. In: Proceedings of the Fourteenth International Conference on Artificial Intelligence and Statistics, pp. 215–223. JMLR Workshop and Conference Proceedings (2011)

Cubuk, E.D., Zoph, B., Mane, D., Vasudevan, V., Le, Q.V.: Autoaugment: Learning augmentation policies from data. arXiv preprint arXiv:1805.09501 (2018)

Cubuk, E.D., Zoph, B., Shlens, J., Le, Q.V.: Randaugment: Practical automated data augmentation with a reduced search space. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition Workshops, pp. 702–703 (2020)

Dabouei, A., Soleymani, S., Taherkhani, F., Nasrabadi, N.M.: Supermix: Supervising the mixing data augmentation. In: Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition, pp. 13794–13803 (2021)

Darlow, L.N., Crowley, E.J., Antoniou, A., Storkey, A.J.: Cinic-10 is not imagenet or cifar-10. arXiv preprint arXiv:1810.03505 (2018)

He, K., Zhang, X., Ren, S., Sun, J.: Delving deep into rectifiers: Surpassing human-level performance on imagenet classification. In: Proceedings of the IEEE international conference on computer vision, pp. 1026–1034 (2015)

He, K., Zhang, X., Ren, S., Sun, J.: Deep residual learning for image recognition. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 770–778 (2016)

Ioffe, S., Szegedy, C.: Batch normalization: Accelerating deep network training by reducing internal covariate shift. In: International Conference on Machine Learning, pp. 448–456. PMLR (2015)

Klambauer, G., Unterthiner, T., Mayr, A., Hochreiter, S.: Self-normalizing neural networks. In: Advances in Neural Information Processing Systems, vol. 30 (2017)

Krizhevsky, A., Hinton, G., et al.: Learning multiple layers of features from tiny images (2009)

Krizhevsky, A., Sutskever, I., Hinton, G.E.: Imagenet classification with deep convolutional neural networks. In: Advances in Neural Information Processing Systems, vol. 25 (2012)

Le, Y., Yang, X.: Tiny imagenet visual recognition challenge. CS 231N, 7(7), 3 (2015)

LeCun, Y., Bengio, Y., Hinton, G.: Deep learning. Nature 521(7553), 436–444 (2015)

LeCun, Y.A., Bottou, L., Orr, G.B., Müller, K.-R.: Efficient backprop. In: Neural networks: Tricks of the trade, pp. 9–48. Springer (2012). https://doi.org/10.1007/978-3-642-35289-8_3

Lin, M., Chen, Q., Yan, S.: Network in network. arXiv preprint arXiv:1312.4400 (2013)

Mishkin, D., Matas, J.: All you need is a good init. arXiv preprint arXiv:1511.06422 (2015)

Netzer, Y., Wang, T., Coates, A., Bissacco, A., Wu, B., Ng, A.Y.: Reading digits in natural images with unsupervised feature learning (2011)

Paszke, A., et al.: Pytorch: An imperative style, high-performance deep learning library. In: Wallach, H., Larochelle, H., Beygelzimer, A., d’Alché-Buc, F., Fox, E., Garnett, R. (eds.) Advances in Neural Information Processing Systems, vol. 32, pp. 8024–8035. Curran Associates Inc. (2019)

Poole, B., Lahiri, S., Raghu, M., Sohl-Dickstein, J., Ganguli, S.: Exponential expressivity in deep neural networks through transient chaos. In: Advances in Neural Information Processing Systems, vol. 29 (2016)

Radford, A., et al.: Language models are unsupervised multitask learners. OpenAI blog 1(8), 9 (2019)

Ramé, A., Sun, R., Cord, M.: Mixmo: Mixing multiple inputs for multiple outputs via deep subnetworks. In: Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 823–833 (2021)

Russakovsky, O., et al.: ImageNet large scale visual recognition challenge. Int. J. Comput. Vision 115(3), 211–252 (2015). https://doi.org/10.1007/s11263-015-0816-y

Selvaraju, R.R., Cogswell, M., Das, A., Vedantam, R., Parikh, D., Batra, D.: Grad-cam: Visual explanations from deep networks via gradient-based localization. In: Proceedings of the IEEE International Conference on Computer Vision, pp. 618–626 (2017)

Shwartz-Ziv, R., Tishby, N.: Opening the black box of deep neural networks via information. arXiv preprint arXiv:1703.00810 (2017)

Srivastava, R.K., Greff, K., Schmidhuber, J.: Highway networks. arXiv preprint arXiv:1505.00387 (2015)

Tan, M., Le, Q.: Efficientnet: Rethinking model scaling for convolutional neural networks. In: International Conference on Machine Learning, pp. 6105–6114. PMLR (2019)

Tishby, N., Zaslavsky, N.: Deep learning and the information bottleneck principle. In: 2015 IEEE Information Theory Workshop (ITW), pp. 1–5. IEEE (2015)

Arif, M.U.I., Jameel, M., Grabocka, J., Schmidt-Thieme, L.: Phantom embeddings: Using embeddings space for model regularization in deep neural networks. In: LWDA, pp. 47–58 (2020)

Van der Maaten, L., Hinton, G.: Visualizing data using t-sne. J. Mach. Learn. Res. 9(11) (2008)

Verma, V., et al.: Manifold mixup: learning better representations by interpolating hidden states (2018)

Xiao, L., Bahri, Y., Sohl-Dickstein, J., Schoenholz, S., Pennington, J.: Dynamical isometry and a mean field theory of cnns: How to train 10,000-layer vanilla convolutional neural networks. In: International Conference on Machine Learning, pp. 5393–5402. PMLR (2018)

Xie, S., Girshick, R., Dollár, P., Tu, Z., He, K.: Aggregated residual transformations for deep neural networks. In: Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, pp. 1492–1500 (2017)

Yun, S., Han, D., Oh, J., Chun, S., Choe, J., Yoo, Y.: Cutmix: Regularization strategy to train strong classifiers with localizable features. In: Proceedings of the IEEE/CVF International Conference on Computer Vision, pp. 6023–6032 (2019)

Zagoruyko, S., Komodakis, N.: Wide residual networks. arXiv preprint arXiv:1605.07146 (2016)

Zhang, H., Cisse, M., Dauphin, Y.N., Lopez-Paz, D.: mixup: Beyond empirical risk minimization. arXiv preprint arXiv:1710.09412 (2017)

Zhang, H., Dauphin, Y.N., Ma, T.: Fixup initialization: Residual learning without normalization. arXiv preprint arXiv:1901.09321 (2019)

Zhu, J., Shi, L., Yan, J., Zha, H.: Automix: Mixup networks for sample interpolation via cooperative barycenter learning. In: European Conference on Computer Vision, pp. 633–649. Springer (2020)

Appendices

A Appendix

B Richer Embeddings

Continuing our discussion of ConvMix generating better-separated embeddings, Fig. 7 presents t-SNE plots of the test-set embeddings of a ResNet18 trained on the CIFAR10 dataset. We have already quantified the inter-class separation in the main text (Table 1); here we present qualitative evidence for our claim that leveraging the latent features at multiple stages generates better embeddings. In Fig. 7 we compare methods that work on the latent space, namely Manifold MixUp and ReZero, against ConvMix.

Analysing Fig. 7, we can see that the class centers are better separated in our method than in the others, but a deeper inspection is needed to see how the different classes are represented by our method. The CIFAR10 dataset has some classes that are frequently confused with one another, namely Cat-Dog, Airplane-Ship, and the four-legged animals Cat-Dog-Deer. This effect can be seen in the t-SNE plots: looking at Manifold MixUp, it is clearly visible, with cats, dogs, and deer all clustered in relatively similar areas. This is an intuitive finding, since these classes are intrinsically close together; however, it also leads to misclassification. In our method, we see that while cats and dogs occupy nearby regions of the embedding space, the deer class is well separated. This effect is caused by the use of the earlier features of the CNN, since the earlier stages of a CNN still maintain spatial saliency in the image features.

Moving on to the other troublesome pair, Airplane-Ship, we can rationalize why Manifold MixUp and ReZero would place these classes together in the embedding space: both contain a lot of blue due to the sea and sky. In our method, we see a substantial separation between the two classes, indicating that using the latent features at multiple stages has enabled the model to resolve the shapes of the subjects in the pictures and therefore place them in well-separated regions of the embedding space.
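
The qualitative reading of the t-SNE plots above can be complemented by a simple numeric check. The sketch below assumes that class separation is measured as the Euclidean distance between class centroids in the embedding space; this is one common choice and is not necessarily the metric reported in Table 1. The helper names and the toy 2-D embeddings are hypothetical.

```python
import math
from collections import defaultdict

def class_centroids(embeddings, labels):
    """Return the mean embedding vector for every class label."""
    sums = {}
    counts = defaultdict(int)
    for vec, label in zip(embeddings, labels):
        if label not in sums:
            sums[label] = [0.0] * len(vec)
        sums[label] = [s + v for s, v in zip(sums[label], vec)]
        counts[label] += 1
    return {
        label: [s / counts[label] for s in total]
        for label, total in sums.items()
    }

def centroid_distance(centroids, a, b):
    """Euclidean distance between the centroids of classes a and b."""
    return math.dist(centroids[a], centroids[b])

# Toy 2-D embeddings standing in for real test-set embeddings.
embeddings = [
    [0.0, 0.0], [2.0, 0.0],   # cat
    [1.0, 1.0], [1.0, 3.0],   # dog
    [4.0, 4.0], [6.0, 4.0],   # deer
]
labels = ["cat", "cat", "dog", "dog", "deer", "deer"]

centroids = class_centroids(embeddings, labels)
cat_dog = centroid_distance(centroids, "cat", "dog")
cat_deer = centroid_distance(centroids, "cat", "deer")
print(cat_dog, cat_deer)
```

In this toy data the cat and dog centroids are close while the deer centroid lies further away, which mirrors the pattern described for ConvMix. On real embeddings the same functions would be applied to the high-dimensional vectors rather than to their t-SNE projections, since t-SNE does not preserve global distances.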

C GradCAM for Explainability

We argue in the main text of the paper that ConvMix has an internal regularization effect, enabling the model to generalize better than the baseline methods (Manifold MixUp and ReZero). We qualitatively demonstrate this effect in the final model by presenting GradCAMs on the CIFAR10 validation split, which gives a fair demonstration of our claim. In Fig. 6 we show that both Manifold MixUp and ReZero tend to have a very narrow focus and use key areas in the image to classify it. ConvMix, in contrast, makes the classification using a more holistic view of the input. This can be seen in the last row of Fig. 6: ConvMix enables the model to maintain a "wider view" of the input by spreading the focus over the subject as a whole rather than just key aspects of the input.

Fig. 6. GradCAM visualizations for a ResNet18 trained on the CIFAR10 dataset, comparing Manifold MixUp, ReZero, and ConvMix. The dark red regions indicate the salient features in the images learned by the network. GradCAMs for ConvMix embeddings (at layer4) indicate that our method learns embeddings that take into account a wider area of the input. (Color figure online)

The key benefit of this characteristic becomes apparent when we take what we learned from Fig. 6 and put it in the context of Fig. 7. The t-SNE plots show a peculiar situation in which cats, birds, and frogs are located close together in both Manifold MixUp and ReZero due to this focus on key areas. Using ConvMix, we can see that cats and birds still occupy similar but well-separated regions, whereas frogs have been moved further away in the embedding space. This shows a strength of ConvMix: it can use the features of frogs at multiple stages and rightly place them away from birds and cats.

Another example we would like to point out is the Automobile-Ship pair, whose classes lie closer together under Manifold MixUp and ReZero. We can understand what the model is trying to do here by comparing the GradCAMs for automobiles and ships. With the narrow view of Manifold MixUp and ReZero, the model sees similar features such as windows, doors, and frames. With the wider view offered by ConvMix, however, we can see the model making use of the entire image for classification. As a result, automobiles and ships are well separated in the embedding space.
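
To make the notion of a narrow versus a wide view more concrete, the sketch below computes a GradCAM map in the standard way: each channel weight is the spatial mean of the class-score gradient, and the map is the ReLU of the weighted sum of activations. It then reports the fraction of the map above a relative threshold. The `coverage` measure, its threshold, and the toy activations and gradients are assumptions introduced here for illustration; the paper's comparison is visual and this is not its evaluation protocol.

```python
def grad_cam(activations, gradients):
    """Compute a GradCAM map from [K][H][W] activations and gradients.

    The gradients are those of the class score with respect to the
    activations of one convolutional layer.
    """
    height = len(activations[0])
    width = len(activations[0][0])
    cam = [[0.0] * width for _ in range(height)]
    for act, grad in zip(activations, gradients):
        # Channel weight: spatial average of the gradient for this channel.
        alpha = sum(sum(row) for row in grad) / (height * width)
        for i in range(height):
            for j in range(width):
                cam[i][j] += alpha * act[i][j]
    # ReLU keeps only the regions that support the class positively.
    return [[max(0.0, v) for v in row] for row in cam]

def coverage(cam, rel_threshold=0.5):
    """Fraction of cells at or above rel_threshold times the map maximum."""
    peak = max(max(row) for row in cam)
    if peak == 0:
        return 0.0
    cells = [v for row in cam for v in row]
    return sum(v >= rel_threshold * peak for v in cells) / len(cells)

# Two toy channels on a 2x2 grid.
activations = [
    [[1.0, 0.0], [0.0, 0.0]],
    [[0.0, 1.0], [1.0, 1.0]],
]
# "Narrow" case: the second channel is weighted negatively and is suppressed.
narrow_grads = [
    [[1.0, 1.0], [1.0, 1.0]],
    [[-1.0, -1.0], [-1.0, -1.0]],
]
# "Wide" case: both channels contribute positively.
wide_grads = [
    [[1.0, 1.0], [1.0, 1.0]],
    [[1.0, 1.0], [1.0, 1.0]],
]

narrow_cam = grad_cam(activations, narrow_grads)
wide_cam = grad_cam(activations, wide_grads)
print(coverage(narrow_cam), coverage(wide_cam))
```

A higher coverage value corresponds to a heat map spread over more of the image, which is the behaviour attributed to ConvMix in Fig. 6. In practice the activations and gradients would come from hooks on the chosen layer of the trained network.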

Copyright information

© 2023 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Arif, M.u.I., Burchert, J., Schmidt-Thieme, L. (2023). ConvMix: Combining Intermediate Latent Features in Deep Convolutional Neural Networks. In: Gade, R., Felsberg, M., Kämäräinen, JK. (eds) Image Analysis. SCIA 2023. Lecture Notes in Computer Science, vol 13886. Springer, Cham. https://doi.org/10.1007/978-3-031-31438-4_11

DOI: https://doi.org/10.1007/978-3-031-31438-4_11

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-31437-7

Online ISBN: 978-3-031-31438-4

eBook Packages: Computer Science, Computer Science (R0)