Abstract

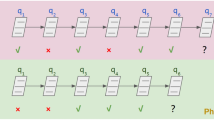

Knowledge tracing (KT) aims to predict a student's performance on the next question from their historical records. Recently, deep learning-based KT models that model student responses have achieved good prediction results. These models encode student responses as one-hot vectors. We find that one-hot encoding represents student responses to different items related to the same concept as completely different vectors. However, items related to the same concept are related in the real world, so a student should have similar representations on these items. In this paper, we propose a new method named Contrastive Deep Knowledge Tracing (CDKT) to provide a more reasonable representation of students. We evaluate our model on three public benchmark datasets, and the experimental results demonstrate improvements over state-of-the-art methods.
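The one-hot observation can be illustrated with a minimal sketch. It assumes the common convention in deep KT models of encoding an (item, correctness) pair as a vector of length 2 × (number of items); the abstract does not specify the exact encoding, and the function name `one_hot_response`, the toy item count, and the item-to-concept grouping are all hypothetical.

```python
def one_hot_response(item_id, correct, num_items):
    """Encode one (item, correctness) interaction as a one-hot vector.

    Assumed layout (not taken from the paper): the first num_items slots
    mark incorrect answers, the last num_items slots mark correct answers.
    """
    vec = [0] * (2 * num_items)
    vec[item_id + (num_items if correct else 0)] = 1
    return vec


def dot(u, v):
    """Plain dot product, used here as a simple overlap measure."""
    return sum(a * b for a, b in zip(u, v))


# Hypothetical toy setup: 4 items, where items 0 and 1 are assumed
# to be related to the same concept.
NUM_ITEMS = 4
a = one_hot_response(0, True, NUM_ITEMS)  # correct answer on item 0
b = one_hot_response(1, True, NUM_ITEMS)  # correct answer on item 1

overlap_same_concept = dot(a, b)  # distinct items never share a slot
overlap_identical = dot(a, a)     # a vector only overlaps with itself

print(overlap_same_concept, overlap_identical)
```

Under this encoding, two correct responses to items that share a concept are orthogonal, so the input carries no signal that the items are related. This is the gap that a learned representation would need to close.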

Acknowledgement

This study was funded in part by the National Natural Science Foundation of China (61802313, U1811262, 61772426), the Key Research and Development Program of China (2020AAA0108500), the Reformation Research on Education and Teaching at Northwestern Polytechnical University (2021JGY31), and the Education and Teaching Reform Research Project of Northwestern Polytechnical University (2022JGY62).

Copyright information

© 2022 Springer Nature Switzerland AG

About this paper

Cite this paper

Dai, H., Yun, Y., Zhang, Y., Zhang, W., Shang, X. (2022). Contrastive Deep Knowledge Tracing. In: Rodrigo, M.M., Matsuda, N., Cristea, A.I., Dimitrova, V. (eds) Artificial Intelligence in Education. Posters and Late Breaking Results, Workshops and Tutorials, Industry and Innovation Tracks, Practitioners’ and Doctoral Consortium. AIED 2022. Lecture Notes in Computer Science, vol 13356. Springer, Cham. https://doi.org/10.1007/978-3-031-11647-6_54

DOI: https://doi.org/10.1007/978-3-031-11647-6_54

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-11646-9

Online ISBN: 978-3-031-11647-6

eBook Packages: Computer Science, Computer Science (R0)