Abstract

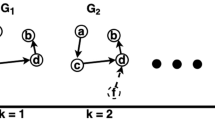

Graph neural networks (GNNs) have shown powerful learning and reasoning ability. However, real-world graphs are generally dynamic, i.e., their topological structure constantly evolves over time. On the one hand, the learning ability of existing GNNs declines because they cannot process graph streaming data. On the other hand, the cost of retraining GNNs from scratch becomes prohibitively high as the scale of graph streaming data increases. Therefore, in this paper we propose IncreGNN, an online incremental learning framework based on GNNs, which avoids the high computational cost of retraining from scratch and prevents catastrophic forgetting during incremental training. Specifically, we propose a sampling strategy based on node importance to reduce the amount of training data while preserving historical knowledge. We then present a regularization strategy to avoid the over-fitting caused by insufficient sampling. Experimental evaluations show the superiority of IncreGNN over existing GNNs on the link prediction task.
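The two ideas named in the abstract, importance-based node sampling and a regularizer that protects previously learned parameters, can be illustrated with a minimal sketch. The abstract does not say how node importance or the regularization term is computed, so everything below is an assumption made for illustration: node degree stands in for the importance score, the penalty is a generic importance-weighted quadratic term, and the names `sample_by_importance`, `regularized_loss`, and `lam` are hypothetical. This is not the IncreGNN implementation.

```python
import random


def sample_by_importance(importance, k, seed=0):
    """Draw up to k distinct nodes, with probability proportional to a score.

    `importance` maps node -> positive score. Using degree as the score
    (see below) is an assumption, not the paper's definition.
    """
    rng = random.Random(seed)
    nodes = list(importance)
    weights = [importance[n] for n in nodes]
    chosen = []
    for _ in range(min(k, len(nodes))):
        # Weighted draw without replacement: pick one index, then remove it.
        i = rng.choices(range(len(nodes)), weights=weights)[0]
        chosen.append(nodes.pop(i))
        weights.pop(i)
    return chosen


def regularized_loss(task_loss, params, old_params, param_importance, lam=1.0):
    """Task loss plus an importance-weighted quadratic penalty.

    The penalty discourages parameters that were important for earlier
    data from drifting. The quadratic form is an assumed, generic choice.
    """
    penalty = sum(
        w * (p - q) ** 2
        for p, q, w in zip(params, old_params, param_importance)
    )
    return task_loss + lam * penalty


# Toy graph snapshot; degree is used as a hypothetical importance score.
edges = [(0, 1), (0, 2), (0, 3), (1, 2), (3, 4)]
degree = {}
for u, v in edges:
    degree[u] = degree.get(u, 0) + 1
    degree[v] = degree.get(v, 0) + 1

# Historical nodes to replay alongside newly arrived data.
replay_nodes = sample_by_importance(degree, k=3)

# Second parameter moved by 0.5 and has importance 2.0, so it dominates the penalty.
loss = regularized_loss(0.5, [1.0, 2.0], [1.0, 1.5], [0.1, 2.0], lam=1.0)
```

In this sketch the sampled nodes would be trained together with the new part of the graph, while `lam` trades off fitting the new data against staying close to the old parameters.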

Acknowledgements

This work is supported by the National Natural Science Foundation of China (62072083 and U1811261).

Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Wei, D., Gu, Y., Song, Y., Song, Z., Li, F., Yu, G. (2022). IncreGNN: Incremental Graph Neural Network Learning by Considering Node and Parameter Importance. In: Bhattacharya, A., et al. Database Systems for Advanced Applications. DASFAA 2022. Lecture Notes in Computer Science, vol 13245. Springer, Cham. https://doi.org/10.1007/978-3-031-00123-9_59

DOI: https://doi.org/10.1007/978-3-031-00123-9_59

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-00122-2

Online ISBN: 978-3-031-00123-9

eBook Packages: Computer Science, Computer Science (R0)