Abstract

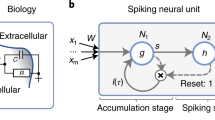

Spiking neural networks are being investigated both as biologically plausible models of neural computation and as a potentially more efficient type of neural network. Recurrent neural networks built from gated memory cells have been central to state-of-the-art solutions in problem domains that involve sequence recognition or generation. Here, we design an analog Long Short-Term Memory (LSTM) cell whose neurons can be substituted with efficient spiking neurons, and which uses subtractive gating (following the subLSTM in [1]) instead of multiplicative gating. Subtractive gating yields a less sensitive gating mechanism, which is critical when using spiking neurons. Using fast-adapting spiking neurons with a smoothed Rectified Linear Unit (ReLU)-like effective activation function, we show that an accurate conversion from an analog subLSTM to a continuous-time spiking subLSTM is possible. The resulting memory networks compute very efficiently, with low average firing rates comparable to those of biological neurons, while operating in continuous time.
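
To make the idea of subtractive gating concrete, the following is a minimal sketch of one analog subLSTM update in the general form proposed in [1], where the input and output gates are subtracted rather than multiplied. It is an illustration under stated assumptions, not the chapter's implementation: the function and parameter names (`sublstm_step`, `Wz`, `bz`, and so on) are hypothetical, a plain sigmoid stands in for the smoothed ReLU-like activation of the adapting spiking neurons, and the forget gate is kept multiplicative as in [1].

```python
import math


def sigmoid(x):
    """Logistic function, used here as a stand-in activation (assumption)."""
    return 1.0 / (1.0 + math.exp(-x))


def affine(W, b, v):
    """Return W @ v + b for a list-of-rows matrix W and lists b, v."""
    return [sum(w * x for w, x in zip(row, v)) + bi for row, bi in zip(W, b)]


def sublstm_step(x, h_prev, c_prev, params):
    """One step of a subtractive-gating LSTM cell (hypothetical helper).

    x, h_prev, c_prev are lists of floats; params maps the hypothetical
    keys "Wz", "Wi", "Wf", "Wo" (weights) and "bz", "bi", "bf", "bo"
    (biases) to nested lists sized for the concatenated input [x, h_prev].
    """
    v = list(x) + list(h_prev)
    z = [sigmoid(a) for a in affine(params["Wz"], params["bz"], v)]  # cell input
    i = [sigmoid(a) for a in affine(params["Wi"], params["bi"], v)]  # input gate
    f = [sigmoid(a) for a in affine(params["Wf"], params["bf"], v)]  # forget gate
    o = [sigmoid(a) for a in affine(params["Wo"], params["bo"], v)]  # output gate

    # Subtractive input gating: the gate is subtracted from the cell input
    # instead of multiplying it. The forget gate still scales the old state.
    c = [fk * ck + zk - ik for fk, ck, zk, ik in zip(f, c_prev, z, i)]
    # Subtractive output gating on the squashed cell state.
    h = [sigmoid(ck) - ok for ck, ok in zip(c, o)]
    return h, c
```

Because each gate enters the update as an additive offset, a small error in a gate's value shifts the result by the same small amount instead of rescaling the signal, which is one way to read the abstract's claim that subtractive gating is less sensitive.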

References

Costa, R., Assael, I.A., Shillingford, B., de Freitas, N., Vogels, T.: Cortical microcircuits as gated-recurrent neural networks. In: Advances in Neural Information Processing Systems, pp. 272–283 (2017)

Attwell, D., Laughlin, S.: An energy budget for signaling in the grey matter of the brain. J. Cereb. Blood Flow Metab. 21(10), 1133–1145 (2001)

Esser, S., et al.: Convolutional networks for fast, energy-efficient neuromorphic computing. In: PNAS, p. 201604850, September 2016

Neil, D., Pfeiffer, M., Liu, S.C.: Learning to be efficient: algorithms for training low-latency, low-compute deep spiking neural networks (2016)

Diehl, P., Neil, D., Binas, J., Cook, M., Liu, S.C., Pfeiffer, M.: Fast-classifying, high-accuracy spiking deep networks through weight and threshold balancing. In: IEEE IJCNN, pp. 1–8, July 2015

O’Connor, P., Neil, D., Liu, S.C., Delbruck, T., Pfeiffer, M.: Real-time classification and sensor fusion with a spiking deep belief network. Front. Neurosci. 7, 178 (2013)

Hunsberger, E., Eliasmith, C.: Spiking deep networks with LIF neurons. arXiv preprint arXiv:1510.08829 (2015)

Hochreiter, S., Schmidhuber, J.: Long short-term memory. Neural Comput. 9(8), 1735–1780 (1997)

Shrestha, A., et al.: A spike-based long short-term memory on a neurosynaptic processor (2017)

Davies, M., Srinivasa, N., Lin, T.H., Chinya, G., Cao, Y., Choday, S.H., Dimou, G., Joshi, P., Imam, N., Jain, S.: Loihi: a neuromorphic manycore processor with on-chip learning. IEEE Micro 38(1), 82–99 (2018)

Zambrano, D., Bohte, S.: Fast and efficient asynchronous neural computation with adapting spiking neural networks. arXiv preprint arXiv:1609.02053 (2016)

Bohte, S.: Efficient spike-coding with multiplicative adaptation in a spike response model. In: NIPS, vol. 25, pp. 1844–1852 (2012)

Gers, F.A., Schraudolph, N.N., Schmidhuber, J.: Learning precise timing with LSTM recurrent networks. J. Mach. Learn. Res. 3(Aug), 115–143 (2002)

Denève, S., Machens, C.K.: Efficient codes and balanced networks. Nature Neurosci. 19(3), 375–382 (2016)

Bakker, B.: Reinforcement learning with long short-term memory. In: NIPS, vol. 14, pp. 1475–1482 (2002)

Harmon, M., Baird III, L.: Multi-player residual advantage learning with general function approximation. Wright Laboratory, 45433–7308 (1996)

Rombouts, J., Bohte, S., Roelfsema, P.: Neurally plausible reinforcement learning of working memory tasks. In: NIPS, vol. 25, pp. 1871–1879 (2012)

Cho, K., et al.: Learning phrase representations using RNN encoder-decoder for statistical machine translation. arXiv preprint arXiv:1406.1078 (2014)

Greff, K., Srivastava, R.K., Koutník, J., Steunebrink, B.R., Schmidhuber, J.: LSTM: a search space odyssey. IEEE Trans. Neural Netw. Learn. Syst. 28(10), 2222–2232 (2017)

Jozefowicz, R., Zaremba, W., Sutskever, I.: An empirical exploration of recurrent network architectures. In: International Conference on Machine Learning, pp. 2342–2350 (2015)

Acknowledgments

DZ is supported by NWO NAI project 656.000.005.

Copyright information

© 2018 Springer Nature Switzerland AG

About this paper

Cite this paper

Pozzi, I., Nusselder, R., Zambrano, D., Bohté, S. (2018). Gating Sensory Noise in a Spiking Subtractive LSTM. In: Kůrková, V., Manolopoulos, Y., Hammer, B., Iliadis, L., Maglogiannis, I. (eds) Artificial Neural Networks and Machine Learning – ICANN 2018. ICANN 2018. Lecture Notes in Computer Science, vol 11139. Springer, Cham. https://doi.org/10.1007/978-3-030-01418-6_28

DOI: https://doi.org/10.1007/978-3-030-01418-6_28

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-01417-9

Online ISBN: 978-3-030-01418-6

eBook Packages: Computer Science, Computer Science (R0)