Abstract

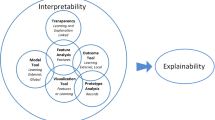

In the rapidly developing field of artificial intelligence, the explainability of proposed hypotheses and confidence in the resulting solutions remain important problem areas. The article discusses various approaches to explaining to users the recommendations they receive from computer systems. The differences between the concepts of transparency and explainability are pointed out. The formal interpretation of results is compared with meaningful explanation. Particular attention is paid to the need for explanations targeted at users at different levels of decision-making. The problem of trust in artificial intelligence systems is presented from several perspectives, which together should form users' integral trust in the solutions obtained. Promising areas of artificial intelligence development discussed at a Russian conference on artificial intelligence are briefly outlined.

Ethics declarations

The author declares that he has no conflicts of interest.

Additional information

Boris Arkad’evich Kobrinskii (born on November 28, 1944)—Head of the Department of Intellectual Decision Support Systems of the Artificial Intelligence Research Institute of the Federal Research Center “Computer Science and Control” of the Russian Academy of Sciences, Doctor of Science, Professor, Honored Scientist of the Russian Federation. From 2007 to present, Professor of the Department of Medical Cybernetics and Informatics of the Pirogov Russian National Research Medical University, where he has been teaching a course on artificial intelligence. Since 2022, co-head of the master’s program “Intellectual Technologies in Medicine” at the Faculty of Computational Mathematics and Cybernetics of the Lomonosov Moscow State University. Chairman of the Scientific Council of the Russian Association of Artificial Intelligence.

Author or coauthor of more than 500 scientific works, including ten monographs and three textbooks. In the field of artificial intelligence, he formulated the concept of engineering of shaped series, the paradigm of creating logical-linguistic-image intelligent systems, the concept of knowledge-controlled information systems, and a modified version of Shortliffe's expert confidence factors, and he developed more than 30 intelligent decision support systems for medicine.

Cite this article

Kobrinskii, B.A. Artificial Intelligence: Problems, Solutions, and Prospects. Pattern Recognit. Image Anal. 33, 217–220 (2023). https://doi.org/10.1134/S1054661823030203

DOI: https://doi.org/10.1134/S1054661823030203