Abstract

Clustering datasets with varied shapes, densities, and noise levels has recently attracted growing attention. However, most current clustering algorithms improve clustering performance at the expense of simplicity, and fail to balance clustering quality against ease of use. To address this problem, we propose a new algorithm called stratification clustering based on density, hierarchy and partition (SDHP), which integrates the advantages of density-based, hierarchical, and partition-based clustering. First, a new parameter-free local density estimation strategy based on the bidirectional natural neighbor relationship, named local density based on natural neighbor (NN-LD), is proposed to identify the core part of each sub-cluster. Then, a new stratification strategy based on NN-LD, called Stratification-NN-LD (S-NN-LD), is proposed to divide the entire dataset into two layers, the core layer and the edge layer, which simplifies the dataset structure and makes the algorithm robust to noise. Next, the hierarchical single-linkage algorithm is applied to the core layer to obtain the initial clustering result, since it is well suited to clustering datasets with various shapes and densities. Finally, to improve the clustering accuracy of samples in the edge layer, a new local inter-cluster distance measure based on the average of neighbor distances is combined with partitioning clustering to match these samples to the sub-clusters in the initial clustering result. Experiments on twenty datasets show that SDHP achieves better clustering accuracy and is practical to apply, compared with four popular hierarchical clustering algorithms, four recent density-based clustering algorithms, and a state-of-the-art partitioning clustering algorithm. The source code can be downloaded from https://github.com/qi111678/SDHP.
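
The four stages described above can be illustrated with a rough sketch. It is not the authors' SDHP implementation (that is in the linked repository), and every concrete choice in it is an assumption made for illustration: a count of mutual k-nearest neighbours stands in for the parameter-free NN-LD, a mean-density threshold stands in for S-NN-LD, and the mean distance to the `m` nearest core members of each cluster stands in for the proposed local inter-cluster distance. The function names and the parameters `k`, `m`, and `n_clusters` are hypothetical.

```python
import numpy as np


def mutual_knn_density(X, k):
    """Assumed stand-in for NN-LD: count each point's mutual k-nearest neighbours."""
    d = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2)
    np.fill_diagonal(d, np.inf)                  # a point is not its own neighbour
    knn = np.argsort(d, axis=1)[:, :k]
    is_nb = np.zeros(d.shape, dtype=bool)
    is_nb[np.arange(len(X))[:, None], knn] = True
    mutual = is_nb & is_nb.T                     # bidirectional neighbour relation
    return mutual.sum(axis=1), d


def single_linkage(d, n_clusters):
    """Single linkage via Kruskal: merge closest pairs until n_clusters remain."""
    n = len(d)
    parent = list(range(n))

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]        # path halving
            i = parent[i]
        return i

    rows, cols = np.triu_indices(n, k=1)
    remaining = n
    for e in np.argsort(d[rows, cols]):
        if remaining == n_clusters:
            break
        a, b = find(int(rows[e])), find(int(cols[e]))
        if a != b:
            parent[a] = b
            remaining -= 1
    roots = np.array([find(i) for i in range(n)])
    return np.unique(roots, return_inverse=True)[1]


def stratified_cluster(X, n_clusters, k=5, m=3):
    """Core/edge split, single linkage on the core, then edge assignment."""
    density, d = mutual_knn_density(X, k)
    threshold = density.mean()                   # assumed stand-in for S-NN-LD
    core = np.flatnonzero(density >= threshold)
    edge = np.flatnonzero(density < threshold)
    labels = np.full(len(X), -1)
    labels[core] = single_linkage(d[np.ix_(core, core)], n_clusters)
    core_labels = labels[core].copy()
    for i in edge:
        # mean distance from point i to its m nearest core members of each cluster
        scores = [np.sort(d[i, core[core_labels == c]])[:m].mean()
                  for c in range(n_clusters)]
        labels[i] = int(np.argmin(scores))
    return labels, core


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    X = np.vstack([rng.normal(0.0, 0.3, (40, 2)), rng.normal(5.0, 0.3, (40, 2))])
    labels, core = stratified_cluster(X, n_clusters=2)
    print("core points:", len(core), "cluster sizes:", np.bincount(labels))
```

Clustering only the dense core first keeps sparse bridge points and noise from chaining clusters together under single linkage; the edge points are attached afterwards without changing the core partition. The sketch assumes the core layer contains at least `n_clusters` points.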

Data availability

The Aggregation, Compound, Pathbased, Spiral, R15, Flame and D31 datasets are from the clustering basic benchmark (http://cs.uef.fi/sipu/datasets/). The Seeds, Iris, Yeast, Waveform, Wdbc, Breast, Pageblocks, Wine and Glass datasets are public and available in the UCI Machine Learning Repository (http://archive.ics.uci.edu/ml/). The Circles (noise = 0), Moons and Circles (noise = 0.1) datasets, the five illustrative datasets, and the Chinese wine market data are available on request from the corresponding author.

References

Arthur D, Vassilvitskii S (2007) k-Means plus plus: the advantages of careful seeding. In: Proceedings of the eighteenth annual ACM-SIAM symposium on discrete algorithms, pp 1027–1035

Ahmad A, Khan SS (2020) initKmix—a novel initial partition generation algorithm for clustering mixed data using k-means-based clustering. Expert Syst Appl 167(2):114149. https://doi.org/10.1016/j.eswa.2020.114149

Brunner TA, Siegrist M (2011) A consumer-oriented segmentation study in the Swiss wine market. Br Food J 113(3):353–373. https://doi.org/10.1108/00070701111116437

Bruwer J, Roediger B, Herbst F (2017) Domain-specific market segmentation: a wine-related lifestyle (WRL) approach. Asia Pac J Mark Logist 29(1):4–26. https://doi.org/10.1108/apjml-10-2015-0161

Bibi M, Abbasi WA, Aziz W, Khalil S, Uddin M, Iwendi C, Gadekallu TR (2022) A novel unsupervised ensemble framework using concept-based linguistic methods and machine learning for twitter sentiment analysis. Pattern Recogn Lett 158:80–86. https://doi.org/10.1016/j.patrec.2022.04.004

Crespi-Vallbona M, Dimitrovski D (2016) Food markets visitors: a typology proposal. Br Food J 118(4):840–857. https://doi.org/10.1108/bfj-11-2015-0420

Cheng D, Zhu Q, Huang J, Wu Q, Yang L (2019) A local cores-based hierarchical clustering algorithm for data sets with complex structures. Neural Comput Appl 31(11):8051–8068. https://doi.org/10.1007/s00521-018-3641-8

Cheng D, Zhu Q, Huang J, Wu Q, Yang L (2019) A hierarchical clustering algorithm based on noise removal. Int J Mach Learn Cybern 10(7):1591–1602. https://doi.org/10.1007/s13042-018-0836-3

Capo M, Perez A, Lozano J (2020) An efficient split-merge re-start for the K-means algorithm. IEEE Trans Knowl Data Eng. https://doi.org/10.1109/tkde.2020.3002926

Chen L, Chen F, Liu Z, Lv M, He T, Zhang S (2022) Parallel gravitational clustering based on grid partitioning for large-scale data. Appl Intell. https://doi.org/10.1007/s10489-022-03661-7

Du M, Ding S, Jia H (2016) Study on density peaks clustering based on k-nearest neighbors and principal component analysis. Knowl Based Syst 99:135–145. https://doi.org/10.1007/s10489-022-03661-7

Du G, Li X, Zhang L, Liu L, Zhao C (2021) Novel automated K-means++ algorithm for financial data sets. Math Probl Eng 2021:1–12. https://doi.org/10.1155/2021/5521119

Ester M, Kriegel HP, Sander S, Xu X (1996) A density-based algorithm for discovering clusters in large spatial databases with noise. In: KDD’96: Proceedings of the second international conference on knowledge discovery and data mining, pp 226–231

Emmendorfer LR, Canuto AMDP (2021) A generalized average linkage criterion for hierarchical agglomerative clustering. Appl Soft Comput 100:106990. https://doi.org/10.1016/j.asoc.2020.106990

Fan J (2019) OPE-HCA: an optimal probabilistic estimation approach for hierarchical clustering algorithm. Neural Comput Appl 31(7):2095–2105. https://doi.org/10.1007/s00521-015-1998-5

Güzel İ, Kaygun A (2020) A new non-Archimedan metric on persistent homology. Comput Stat. https://doi.org/10.1007/s00180-021-01187-z

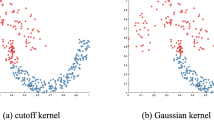

Huang T, Wang S, Zhu W (2020) An adaptive kernelized rank-order distance for clustering non-spherical data with high noise. Int J Mach Learn Cybern 11(8):1735–1747. https://doi.org/10.1007/s13042-020-01068-9

Hou H, Ding S, Xu X (2022) A deep clustering by multi-level feature fusion. Int J Mach Learn Cybern. https://doi.org/10.1007/s13042-022-01557-z

Jahan M, Hasan M (2021) A robust fuzzy approach for gene expression data clustering. Soft Comput 25(23):14583–14596. https://doi.org/10.1007/s00500-021-06397-7

Köse E, Hocaoğlu AK (2022) Clustering with density based initialization and Bhattacharyya based merging. Turk J Electr Eng Comput Sci 30(3):502–517. https://doi.org/10.55730/1300-0632.3794

Kaliji SA, Imami D, Canavari M, Gjonbalaj M, Gjokaj E (2022) Fruit-related lifestyles as a segmentation tool for fruit consumers. Br Food J 124(13):126–142. https://doi.org/10.1108/bfj-09-2021-1001

Liu Y, Ma Z, Yu F (2017) Adaptive density peak clustering based on K-nearest neighbors with aggregating strategy. Knowl Based Syst 133:208–220. https://doi.org/10.1016/j.knosys.2017.07.010

López-Rosas CA, Espinoza-Ortega A (2018) Understanding the motives of consumers of Mezcal in Mexico. Br Food J 120(7):1643–1656. https://doi.org/10.1108/bfj-07-2017-0381

Li Y, Chu X, Tian D, Feng J, Mu W (2021) Customer segmentation using K-means clustering and the adaptive particle swarm optimization algorithm. Appl Soft Comput. https://doi.org/10.1016/j.asoc.2021.107924

Li C, Wang H, Jiang F, Zhang Y, Peng Y (2022) A new clustering mining algorithm for multi-source imbalanced location data. Inf Sci 584:50–64. https://doi.org/10.1016/j.ins.2021.10.029

Mu W, Zhu H, Tian D, Feng J (2017) Profiling wine consumers by price segment: a case study in Bei**g, China. Ital J Food Sci 29(3):377–397

Maciejewski G, Mokrysz S, Wróblewski Ł (2019) Segmentation of coffee consumers using sustainable values: cluster analysis on the polish coffee market. Sustainability 11(3):613. https://doi.org/10.3390/su11030613

Naderipour M, Zarandi MHF, Bastani S (2022) A fuzzy cluster-validity index based on the topology structure and node attribute in complex networks. Expert Syst Appl 187:115913. https://doi.org/10.1016/j.eswa.2021.115913

Paschen J, Paschen U, Kietzmann JH (2016) À votre santé-conceptualizing the AO typology for luxury wine and spirits. Int J Wine Bus Res 28(2):170–186

Prabhagar MV, Punniyamoorthy M (2020) Development of new agglomerative and performance evaluation models for classification. Neural Comput Appl 32(7):2589–2600. https://doi.org/10.1007/s00521-019-04297-4

Qaddoura R, Faris H, Aljarah I (2020) An efficient clustering algorithm based on the k-nearest neighbors with an indexing ratio. Int J Mach Learn Cybern 11(3):675–714. https://doi.org/10.1007/s13042-019-01027-z

Qi J, Li Y, ** H, Feng J, Mu W (2022) User value identification based on an improved consumer value segmentation algorithm. Kybernetes. https://doi.org/10.1108/K-01-2022-0049

Rodriguez A, Laio A (2014) Clustering by fast search and find of density peaks. Science 344(6191):1492–1496. https://doi.org/10.1126/science.1242072

Ros F, Guillaume S (2018) Protras: a probabilistic traversing sampling algorithm. Expert Syst Appl 105:65–76. https://doi.org/10.1016/j.eswa.2018.03.052

Ros F, Guillaume S (2019) A hierarchical clustering algorithm and an improvement of the single linkage criterion to deal with noise. Expert Syst Appl 128:96–108. https://doi.org/10.1016/j.eswa.2019.03.031

Shi J, Ye L, Li Z, Zhan D (2022) Unsupervised binary protocol clustering based on maximum sequential patterns. CMES Comput Model Eng Sci 130(1):483–498. https://doi.org/10.32604/cmes.2022.017467

Turkoglu B, Uymaz SA, Kaya E (2022) Clustering analysis through artificial algae algorithm. Int J Mach Learn Cybern 13(4):1179–1196. https://doi.org/10.1007/s13042-022-01518-6

Tellaroli P (2022) SingleCross-clustering: an algorithm for finding elongated clusters with automatic estimation of outliers and number of clusters. Commun Stat Simul Comput 51(5):2412–2428. https://doi.org/10.1080/03610918.2019.1697449

Ventorimr IM, Luchi D, Rodrigues AL, Varejão FM (2021) BIRCHSCAN: a sampling method for applying DBSCAN to large datasets. Expert Syst Appl 184(1):115518. https://doi.org/10.1016/j.eswa.2021.115518

Wang G, Song Q (2016) Automatic clustering via outward statistical testing on density metrics. IEEE Trans Knowl Data Eng 28(8):1971–1985. https://doi.org/10.1109/tkde.2016.2535209

**e J, Gao H, **e W, Liu X, Grant PW (2016) Robust clustering by detecting density peaks and assigning points based on fuzzy weighted K-nearest neighbors. Inf Sci 354:19–40. https://doi.org/10.1016/j.ins.2016.03.011

Yuan X, Yu H, Liang J, Xu B (2021) A novel density peaks clustering algorithm based on K nearest neighbors with adaptive merging strategy. Int J Mach Learn Cybern 12(10):2825–2841. https://doi.org/10.1007/s13042-021-01369-7

Yan J, Chen J, Zhan J, Song S, Zhang Y, Zhao M, Liu Y, Xu W (2022) Automatic identification of rock discontinuity sets using modified agglomerative nesting algorithm. Bull Eng Geol Environ. https://doi.org/10.1007/s10064-022-02724-w

Yang Q, Gao W, Han G, Li Z, Tian M, Zhu S, Deng Y (2023) HCDC: a novel hierarchical clustering algorithm based on density-distance cores for data sets with varying density. Inf Syst 114:102159. https://doi.org/10.1016/j.is.2022.102159

Zhu Q, Feng J, Huang J (2016) Natural neighbor: A self-adaptive neighborhood method without parameter K. Pattern Recogn Lett 80:30–36. https://doi.org/10.1016/j.patrec.2016.05.007

Zhou S, Liu F (2020) A novel internal cluster validity index. J Intell Fuzzy Syst 38(4):4559–4571. https://doi.org/10.3233/jifs-191361

Zhou J, Zhai L, Pantelous AA (2020) Market segmentation using high-dimensional sparse consumers data. Expert Syst Appl 145:113136. https://doi.org/10.1016/j.eswa.2019.113136

Zhou Z, Si G, Sun H, Qu K, Hou W (2022) A robust clustering algorithm based on the identification of core points and KNN kernel density estimation. Expert Syst Appl 195:116573. https://doi.org/10.1016/j.eswa.2022.116573

Acknowledgements

This study was supported by the earmarked fund for CARS-29 and the open funds of the Key Laboratory of Viticulture and Enology, Ministry of Agriculture, PR China.

Ethics declarations

Conflict of interest

The authors declare that they have no conflict of interest.

About this article

Cite this article

Qi, J., Li, Y., **, H. et al. A novel stratification clustering algorithm based on a new local density estimation method and an improved local inter-cluster distance measure. Int. J. Mach. Learn. & Cyber. 14, 4251–4283 (2023). https://doi.org/10.1007/s13042-023-01893-8

DOI: https://doi.org/10.1007/s13042-023-01893-8