Abstract

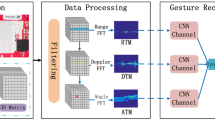

A lightweight human-robot interaction model with high real-time performance, high accuracy, and strong anti-interference capability is well suited to future lunar surface exploration and construction work. Using feature information from a monocular camera, the model acquires, processes, and fuses the astronaut's gesture and eye-movement interaction signals. Compared with a single mode, bimodal collaboration allows complex interactive commands to be issued more efficiently. The target detection model is optimized by inserting an attention mechanism into YOLOv4 and filtering image motion blur. A neural network identifies the central coordinates of the pupils to realize human-robot interaction in the eye-movement mode. The gesture signal and the eye-movement signal are fused at the end of the collaborative model, which achieves complex command interaction with a lightweight model. The dataset used for network training is augmented and extended to simulate a realistic lunar interaction environment. Complex-command interaction in each single mode is compared with complex-command interaction under bimodal collaboration. The experimental results show that, owing to its stronger feature-mining ability, the concatenated gesture and eye-movement interaction model extracts bimodal interaction signals more effectively, discriminates complex interaction commands more quickly, and resists signal interference more strongly. Compared with command interaction using only the gesture signal or only the eye-movement signal, the bimodal collaborative model requires about 79% to 91% of the single-mode interaction time.
Regardless of the image interference applied, the overall judgment accuracy of the proposed model remains at about 83% to 97%. These results verify the effectiveness of the proposed method.
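The abstract states that the gesture and eye-movement signals are fused at the end of the collaborative model. The following is a minimal sketch of such decision-level (late) fusion. It is not the authors' implementation. The gesture labels, gaze labels, command table (`COMMAND_TABLE`), confidence threshold, and the helper names `gaze_direction` and `fuse` are all hypothetical. The pupil centre, which the paper obtains from a neural network, is taken here as a given input.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical command vocabulary: (gesture label, gaze label) -> compound command.
# The paper's real gesture classes, gaze classes, and commands are not given here.
COMMAND_TABLE = {
    ("fist", "left"): "rover_turn_left",
    ("fist", "right"): "rover_turn_right",
    ("palm", "up"): "arm_raise",
    ("palm", "down"): "arm_lower",
    ("point", "center"): "grab_target",
}


@dataclass
class ModalResult:
    label: str         # class predicted for one modality
    confidence: float  # classifier confidence in [0, 1]


def gaze_direction(pupil_x: float, pupil_y: float,
                   eye_w: float, eye_h: float, margin: float = 0.2) -> str:
    """Map a pupil centre (pixels, relative to the eye ROI) to a coarse gaze class.

    Directions are in image coordinates (y grows downward), not the astronaut's frame.
    """
    dx = pupil_x / eye_w - 0.5  # horizontal offset from ROI centre, in [-0.5, 0.5]
    dy = pupil_y / eye_h - 0.5  # vertical offset from ROI centre, in [-0.5, 0.5]
    if abs(dx) <= margin and abs(dy) <= margin:
        return "center"
    if abs(dx) >= abs(dy):
        return "left" if dx < 0 else "right"
    return "up" if dy < 0 else "down"


def fuse(gesture: ModalResult, gaze: ModalResult,
         threshold: float = 0.5) -> Optional[str]:
    """Decision-level (late) fusion of the two modalities into one command.

    Returns None when either modality falls below the confidence threshold
    or when the label pair is not a defined command.
    """
    if min(gesture.confidence, gaze.confidence) < threshold:
        return None
    return COMMAND_TABLE.get((gesture.label, gaze.label))


if __name__ == "__main__":
    gaze = ModalResult(gaze_direction(20, 30, 100, 60), 0.9)
    print(gaze.label)                             # left
    print(fuse(ModalResult("fist", 0.95), gaze))  # rover_turn_left
    print(fuse(ModalResult("fist", 0.30), gaze))  # None
```

Late fusion leaves each single-modal recognizer unchanged. Pairing two labels multiplies the number of commands one camera frame can express. The confidence gate is one simple, assumed way to reject frames corrupted by blur or occlusion instead of issuing a wrong command.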

Additional information

This work was supported by the National Natural Science Foundation of China (Grant No. 51505335), the Industry-University Cooperation Collaborative Education Project of the Department of Higher Education of the Chinese Ministry of Education (Grant No. 202102517001), the Tianjin Postgraduate Scientific Research Innovation Project (Special Project of Intelligent Network Vehicle Connection) (Grant No. 2021YJSO2S33), the Tianjin Postgraduate Scientific Research Innovation Project (Grant No. 2021YJSS216), and the Doctor Startup Project of Tianjin University of Technology and Education (Grant No. KYQD 1806).

About this article

Cite this article

Zhuang, H., Xia, Y., Wang, N. et al. Interactive method research of dual mode information coordination integration for astronaut gesture and eye movement signals based on hybrid model. Sci. China Technol. Sci. 66, 1717–1733 (2023). https://doi.org/10.1007/s11431-022-2368-y

DOI: https://doi.org/10.1007/s11431-022-2368-y