Abstract

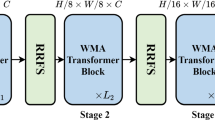

Breathtaking advances in face forgery techniques produce visually untraceable deepfake videos, and the potential malicious abuse of these techniques has sparked great concern. Existing deepfake detectors primarily focus on specific forgery patterns, using global features extracted by CNN backbones for forgery detection. Because they explore content and texture features inadequately, they often overfit to method-specific forged regions and thus generalize poorly to increasingly realistic forgeries. In this paper, we propose a Wavelet-guided Texture-Content HiErarchical Relation (WATCHER) learning framework to delve deeper into relation-aware texture-content features. Specifically, we propose a Wavelet-guided AutoEncoder scheme to retrieve a general visual representation that is aware of high-frequency details for understanding forgeries. To further excavate fine-grained counterfeit clues, a Texture-Content Attention Maps Learning module is presented to enrich the contextual information of content and texture features via multi-level attention maps in a hierarchical learning protocol. Finally, we propose a Progressive Multi-domain Feature Interaction module to perform semantic reasoning on relationship-enhanced texture-content forgery features. Extensive experiments on popular benchmark datasets substantiate the superiority of our WATCHER model, which consistently outperforms state-of-the-art methods by a significant margin.

Data Availability Statement

The datasets underlying the results of this research are accessible upon reasonable request from the corresponding author or the first author.

Acknowledgements

This work is supported by the National Key R&D Program of China (2021YFF0602101) and the National Natural Science Foundation of China (62172227).

Additional information

Communicated by Sergio Escalera.

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Wang, Y., Chen, C., Zhang, N. et al. WATCHER: Wavelet-Guided Texture-Content Hierarchical Relation Learning for Deepfake Detection. Int J Comput Vis (2024). https://doi.org/10.1007/s11263-024-02116-5