Abstract

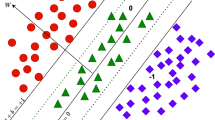

The traditional binary support vector machine (SVM) has been extended to a multi-class classifier, K-SVCR, which follows a ‘1-versus-1-versus-rest’ approach. K-SVCR produces ternary outputs \(\{-1, 0, +1\}\), so each of its classifiers separates the samples into three groups at once: two focus classes and the remaining classes. However, labelled data are scarce because labelling requires human effort, whereas unlabelled samples, which carry no explicit class labels, are much easier to obtain. This article exploits such unlabelled data by adding a graph Laplacian regularisation term to K-SVCR, which turns model training into a semi-supervised learning approach. The resulting model captures the distribution of the samples from the abundant unlabelled data, so that even a few labelled samples can improve the performance of the trained model on out-of-sample data. To illustrate the intuition behind the proposed model, two artificial datasets are considered. Further experiments on several real-world datasets from the UCI Machine Learning Repository and on the Flavia dataset for plant identification from leaf images evaluate the semi-supervised graph Laplacian K-SVCR (Lap-KSVCR) model in terms of classification accuracy, precision, recall, and F-score. On the non-linear three-class artificial datasets, the model achieved \(63\%\) accuracy when trained on a single labelled sample per class. On the UCI and Flavia datasets, improvements of up to \(17.5\%\), \(17.3\%\), \(30.1\%\), and \(17.2\%\) in accuracy, precision, recall, and F-score, respectively, were observed compared with K-SVCR.
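
The two ingredients named above, the graph Laplacian term and the ternary outputs, can be illustrated with a small sketch. It is not the authors' Lap-KSVCR implementation: the function names are hypothetical, the fully connected Gaussian graph and its width `sigma` are assumptions, and the voting rule used to turn 1-versus-1-versus-rest outputs into a label is an assumed simple scheme. The sketch builds the unnormalised Laplacian \(L = D - W\) over labelled and unlabelled samples and evaluates the standard manifold-regularisation penalty \(f^\top L f = \tfrac{1}{2}\sum_{ij} W_{ij}(f_i - f_j)^2\), which a Laplacian SVM adds, with a weight, to its training objective.

```python
import math

# Hypothetical helpers for illustration; not the authors' Lap-KSVCR code.

def gaussian_laplacian(X, sigma=1.0):
    """Unnormalised graph Laplacian L = D - W over all samples X (labelled and
    unlabelled), with assumed fully connected Gaussian weights
    W_ij = exp(-||x_i - x_j||^2 / (2 sigma^2)) and W_ii = 0."""
    n = len(X)
    W = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(n):
            if i != j:
                d2 = sum((a - b) ** 2 for a, b in zip(X[i], X[j]))
                W[i][j] = math.exp(-d2 / (2.0 * sigma ** 2))
    D = [sum(row) for row in W]  # degree of each node
    return [[(D[i] if i == j else 0.0) - W[i][j] for j in range(n)]
            for i in range(n)]


def manifold_penalty(f, L):
    """f^T L f, equal to 1/2 * sum_ij W_ij (f_i - f_j)^2 for symmetric W:
    small when strongly connected samples receive similar outputs."""
    n = len(f)
    return sum(f[i] * L[i][j] * f[j] for i in range(n) for j in range(n))


def ternary_vote(outputs, n_classes):
    """Combine 1-vs-1-vs-rest outputs into a class label by voting.
    outputs maps a class pair (i, j) to that classifier's output in {-1, 0, +1}.
    Assumed rule: +1 votes for class i, -1 votes for class j,
    0 ("rest") casts no vote. Ties go to the lowest class index."""
    votes = [0] * n_classes
    for (i, j), out in outputs.items():
        if out == 1:
            votes[i] += 1
        elif out == -1:
            votes[j] += 1
    return max(range(n_classes), key=lambda k: votes[k])


if __name__ == "__main__":
    # Two nearby points and one distant point.
    X = [[0.0, 0.0], [0.1, 0.0], [5.0, 5.0]]
    L = gaussian_laplacian(X, sigma=1.0)
    print(manifold_penalty([1.0, 1.0, -1.0], L))  # close to 0: neighbours agree
    print(manifold_penalty([1.0, -1.0, 1.0], L))  # about 4: neighbours disagree
    print(ternary_vote({(0, 1): 1, (0, 2): 1, (1, 2): 0}, 3))  # class 0
```

The penalty stays near zero when the two neighbouring points share an output and grows when they disagree, which is how unlabelled samples shape the decision function. A fully connected graph costs \(O(n^2)\) memory; sparser \(k\)-nearest-neighbour graphs are a common alternative.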

Availability of supporting data

No new raw data were generated in this work. The datasets analysed during the current study are publicly available: the UCI datasets from the UCI Machine Learning Repository (https://archive.ics.uci.edu) and the Flavia dataset from http://flavia.sourceforge.net.

Acknowledgements

The authors would like to thank the National Institute of Technology Kurukshetra, India for financially supporting the research work.

Funding

National Institute of Technology Kurukshetra, India, provided a fellowship to the first author, Mr. Vivek Prakash Srivastava, to support this research work.

Author information

Contributions

Kapil Gupta formulated the problem. Vivek Prakash Srivastava wrote the code and performed the experiments on the datasets.

Ethics declarations

Conflict of Interest

We declare that the authors have no competing interests as defined by Springer, or other interests that might be perceived to influence the results and/or discussion reported in this paper.

Ethical approval

Not applicable.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Srivastava, V.P., Kapil. Semi supervised K–SVCR for multi-class classification. Multimed Tools Appl (2024). https://doi.org/10.1007/s11042-024-19228-2

DOI: https://doi.org/10.1007/s11042-024-19228-2