Abstract

This study examines Human–Computer Intelligent Interaction (HCII), an emerging interdisciplinary field that builds on traditional Human–Computer Interaction (HCI) by integrating technologies such as Natural Language Processing (NLP) and Machine Learning (ML). We survey 5,781 HCII papers published between 2000 and 2023 and narrow our focus to the 803 most relevant articles, from which we construct co-citation and interdisciplinary networks using the CiteSpace software. Our findings show that the United States and China contribute relatively high numbers of publications, with 558 and 616 respectively. We also find that machine learning and deep learning have emerged as the prevalent methodologies in HCII, which currently emphasizes multimodal emotion recognition, facial expression recognition, and NLP. We predict that HCII will be integrated into advanced applications such as neural-based interactive games and multi-sensory environments. In sum, our analysis underscores HCII's role in advancing artificial intelligence and in facilitating more intuitive and efficient human–computer interaction, as well as its prospective societal impact. We hope that this review and analysis will guide researchers aiming to contribute to HCII and to develop more powerful and intelligent methods, tools, and applications.
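As a rough illustration of what a co-citation network records, the sketch below counts how often two references appear together in the same paper's reference list; each count is the weight of one edge in the network. It is a minimal sketch under stated assumptions, not a description of how CiteSpace works internally: the function name `cocitation_counts` and the reference labels are hypothetical, and the toy corpus is invented for the example.

```python
from collections import Counter
from itertools import combinations


def cocitation_counts(reference_lists):
    """Count how often each unordered pair of references is cited together.

    reference_lists: iterable of lists, one list of reference labels per
    citing paper (hypothetical input format, assumed for this sketch).
    """
    counts = Counter()
    for refs in reference_lists:
        # Deduplicate and sort so each unordered pair has one canonical key.
        for pair in combinations(sorted(set(refs)), 2):
            counts[pair] += 1
    return counts


# Hypothetical toy corpus: each inner list is one citing paper's references.
papers = [
    ["RefA", "RefB", "RefC"],
    ["RefA", "RefB"],
    ["RefB", "RefC"],
]

edges = cocitation_counts(papers)
for (left, right), weight in sorted(edges.items()):
    print(left, right, weight)
```

Pairs with higher counts are cited together more often, which is the signal a co-citation map clusters on; a real analysis would also apply time slicing and thresholds that this sketch omits.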

Data availability

The data for this manuscript are included as electronic supplementary material.

References

Hirschberg K, Manning CD (2023) Advances in natural language processing. Science 349:261–266

Hussien RM, Al-Jubouri KQ, Gburi MA et al (1973) (2021) computer vision and image processing the challenges and opportunities for new technologies approach: A paper review. J Phys: Conf Ser 1:012002

Pantic M, Pentland A, Nijholt A, Huang TS (2007) Human computing and machine understanding of human behavior: a survey. In: Huang TS, Nijholt A, Pantic M, Pentland A (eds) Artifical intelligence for human computing. Lecture notes in computer science, vol 4451. Springer, Berlin, Heidelberg. https://doi.org/10.1007/978-3-540-72348-6_3

Lv Z, Poiesi F, Deep DQ et al (2022) Learning for intelligent human-computer interaction. Appl Sci 12:11457

Wang G, Li L, **ng S, Ding H (2018) Intelligent HMI in orthopedic navigation. Adv Experiment Med Biol 1093:207–224

Miao Y, Jiang Y, Peng L et al (2018) Telesurgery robot based on 5G tactile internet. Mobile Netw Appl 23:1645–1654

Li R, Fu H, Lo W, Chi Z, Song Z, Wen D (2019) Skeleton-based action recognition with key-segment descriptor and temporal step matrix model. IEEE Access 7:169782–169795. https://doi.org/10.1109/ACCESS.2019.2954744

Li P, Hou X, Duan X, Yip HM, Song G, Liu Y (2019) Appearance-based gaze estimator for natural interaction control of surgical robots. IEEE Access 7:25095–25110. https://doi.org/10.1109/ACCESS.2019.2900424

Li P, Hou X, Wei L, Song G, Duan X (2018) Efficient and low-cost deep-learning based gaze estimator for surgical robot control. In: 2018 IEEE international conference on real-time computing and robotics (RCAR). IEEE, Kandima, Maldives, pp 58–63. https://doi.org/10.1109/RCAR.2018.8621810

Senle Z, Rencheng S, Juan C, Yunfei Z, Xun C (2019) A feasibility study of a video-based heart rate estimation method with convolutional neural networks. In: 2019 IEEE International Conference on Computational Intelligence and Virtual Environments for Measurement Systems and Applications (CIVEMSA), pp 1–5. https://doi.org/10.1109/CIVEMSA45640.2019.9071634

Singh A, Kabra R, Kumar R, Lokanath MB, Gupta R, Shekhar SK (2021) On-device system for device directed speech detection for improving human computer interaction. IEEE Access 9:131758–131766. https://doi.org/10.1109/ACCESS.2021.3114371

Ioanna M, Alexandris C (2009) Verb processing in spoken commands for household security and appliances. In: Constantine S (ed) Universal access in human-computer interaction. Intelligent and ubiquitous interaction environments. Springer, Berlin Heidelberg, pp 92–99. https://doi.org/10.1007/978-3-642-02710-9_11

Hu W, **ang L, Zehua L (2022) Research on auditory performance of vehicle voice interaction in different sound index. In: Kurosu M (ed) Human-computer interaction. User experience and behavior. Springer International Publishing, Cham, pp 61–69. https://doi.org/10.1007/978-3-031-05412-9_5

Waldron SM, Patrick J, Duggan GB, Banbury S, Howes A (2008) Designing information fusion for the encoding of visual–spatial information. Ergonomics 51(6):775–797. https://doi.org/10.1080/00140130701811933

Li Z, Li X, Zhang J et al (2021) Research on interactive experience design of peripheral visual interface of autonomous vehicle. In: Kurosu M (eds) Human-computer interaction. Design and user experience case studies. HCII 2021. Lecture notes in computer science, 12764. Springer

Hazoor A, Terrafino A, Di Stasi LL et al (2022) How to take speed decisions consistent with the available sight distance using an intelligent speed adaptation system. Accid Anal Prev Sep174:106758

Lee KM, Moon Y, Park I, Lee J-g (2023) Voice orientation of conversational interfaces in vehicles. Behav Inf Technol 1–12. https://doi.org/10.1080/0144929X.2023.2166870

Shanthi N, Sathishkumar VE, Upendra Babu K, Karthikeyan P, Rajendran S, Allayear SM (2022) Analysis on the bus arrival time prediction model for human-centric services using data mining techniques. Comput Intell Neurosci 2022:7094654. https://doi.org/10.1155/2022/7094654

Kim H, Kim W, Kim J et al (2022) Study on the take-over performance of level 3 autonomous vehicles based on subjective driving tendency questionnaires and machine learning methods. ETRI J 45:75–92

Zhu Z, Ye A, Wen F, Dong X, Yuan K, Zou W (2010) Visual servo control of intelligent wheelchair mounted robotic arm. In: 2010 8th World Congress on Intelligent Control and Automation, pp 6506–6511. https://doi.org/10.1109/WCICA.2010.5554200

Chen L, Haiwei Y, Liu P (2019) Intelligent robot arm: Vision-based dynamic measurement system for industrial applications. In: Haibin Y, **guo L, Liu Lianqing J, Zhaojie LY, Dalin Z (eds) Intelligent robotics and applications. Springer International Publishing, Cham, pp 120–130. https://doi.org/10.1007/978-3-030-27541-9_11

Chen L, **aochun Z, Dimitrios C, Hongji Y (2022) ToD4IR: A humanised task-oriented dialogue system for industrial robots. IEEE Access 10:91631–91649. https://doi.org/10.1109/ACCESS.2022.3202554

Chen L, **ha P, Hahyeon K, Dimitrios C (2021) How can I help you? In: An intelligent virtual assistant for industrial robots. Association for Computing Machinery, New York. https://doi.org/10.1145/3434074.3447163

Wojciech K, Maciej M, Zurada Jacek M (2010) Intelligent E-learning systems for evaluation of user's knowledge and skills with efficient information processing. In: Rutkowski L, Rafa S, Tadeusiewicz R, Zadeh Lotfi A, Zurada Jacek M (eds) Artifical intelligence and soft computing. Springer, Berlin Heidelberg, pp 508–515. https://doi.org/10.1007/978-3-642-13232-2_62

Cheong Michelle LF, Chen Jean Y-C, Tian DB (2019) An intelligent platform with automatic assessment and engagement features for active online discussions. In: Wotawa F, Friedrich G, Pill I, Koitz-Hristov R, Ali M (eds) Advances and trends in artificial intelligence. From theory to practice. Springer International Publishing, Cham, pp 730–743. https://doi.org/10.1007/978-3-030-22999-3_62

Choi Y, Jeon H, Lee S et al (2022) Seamless-walk: Novel natural virtual reality locomotion method with a high-resolution tactile sensor. 2022 IEEE conference on Virtual Reality and 3D User Interfaces Abstracts and Workshops (VRW), pp 696–697

Amin M, Tubaishat A, Al-Obeidat F, Shah B, Karamat M (2022) Leveraging brain–computer interface for implementation of a bio-sensor controlled game for attention deficit people. Comput Electr Eng 102:108277. https://doi.org/10.1016/j.compeleceng.2022.108277

Gao Y, Anqi C, Susan C, Guangtao Z, Aimin H (2022) Analysis of emotional tendency and syntactic properties of VR game reviews. In: 2022 IEEE Conference on Virtual Reality and 3D User Interfaces Abstracts and Workshops (VRW), pp 648–649. https://doi.org/10.1109/VRW55335.2022.00175

Hu Z, Andreas B, Li S, Wang G (2021) FixationNet: Forecasting eye fixations in task-oriented virtual environments. IEEE Trans Vis Comput Graph 27(5):2681–2690. https://doi.org/10.1109/TVCG.2021.3067779

Kim J (2020) VIVR: Presence of Immersive Interaction for Visual Impairment Virtual Reality. IEEE Access, pp 196151–196159

Krepki R, Blankertz B, Curio G et al (2007) The Berlin Brain-Computer Interface (BBCI) – towards a new communication channel for online control in gaming applications. Multimed Tools Appl 33:73–90

García-Méndez S, Arriba-Pérez D, Francisco G-C, Francisco J, Regueiro-Janeiro JA, Gil-Castiñeira F (2021) Entertainment Chatbot for the digital inclusion of elderly people without abstraction capabilities. IEEE Access 9:75878–75891. https://doi.org/10.1109/ACCESS.2021.3080837

Lee W, Son G (2023) Investigation of human state classification via EEG signals elicited by emotional audio-visual stimulation. Multimed Tools Appl. https://doi.org/10.1007/s11042-023-16294-w

Razzaq MA, Hussain J, Bang J (2023) Hybrid multimodal emotion recognition framework for UX evaluation using generalized mixture functions. Sensors 23:4373

Jo AH, Kwak KC (2023) Speech emotion recognition based on two-stream deep learning model using korean audio information. Appl Sci 13:2167

Aleisa HN, Alrowais FM, Negm N et al (2023) Henry gas solubility optimization with deep learning based facial emotion recognition for human computer Interface. IEEE Access 11:62233–62241

Gagliardi G, Alfeo AL, Catrambone V, Diego C-R, Cimino Mario GCA, Valenza G (2023) Improving emotion recognition systems by exploiting the spatial information of EEG sensors. IEEE Access 11:39544–39554. https://doi.org/10.1109/ACCESS.2023.3268233

Eswaran KCA, Akshat P, Gayathri M (2023) Hand gesture recognition for human-computer interaction using computer vision. In: Kottursamy K, Bashir AK, Kose U, Annie U (eds) Deep Sciences for Computing and Communications. Springer Nature Switzerland, Cham, pp 77–90

Ansar H, Mudawi NA, Alotaibi SS et al (2023) Hand gesture recognition for characters understanding using convex Hull landmarks and geometric features. IEEE Access 11:82065–82078

Kothadiya DR, Bhatt CM, Rehman A, Alamri FS, Tanzila S (2023) SignExplainer: An explainable ai-enabled framework for sign language recognition with ensemble learning. IEEE Access. 11:47410–47419. https://doi.org/10.1109/ACCESS.2023.3274851

Salman SA, Zakir A, Takahashi H (2023) Cascaded deep graphical convolutional neural network for 2D hand pose estimation. In: Salman SA, Zakir A, Takahashi H (eds) Other conferences. https://api.semanticscholar.org/CorpusID:257799908

Lyu Y, An P, **ao Y, Zhang Z, Zhang H, Katsuragawa K, Zhao J (2023) Eggly: Designing mobile augmented reality neurofeedback training games for children with autism spectrum disorder. Assoc Comput Machin 7(2):1–29. https://doi.org/10.1145/3596251

Van Mechelen M, Smith RC, Schaper M-M, Tamashiro M, Bilstrup K-E, Lunding M, Petersen MG, Iversen OS (2023) Emerging technologies in K–12 education: A future HCI research agenda. Assoc Comput Machine 30(3):1073–0516. https://doi.org/10.1145/3569897

Ometto M (2022) An innovative approach to plant and process supervision. Danieli Intelligent Plant. IFAC-PapersOnLine 55(40):313–318. https://doi.org/10.1016/j.ifacol.2023.01.091

Matheus N, Joaquim J, João V, Regis K, Anderson M (2023) Exploring affordances for AR in laparoscopy. In: 2023 IEEE Conference on Virtual Reality and 3D User Interfaces Abstracts and Workshops (VRW), pp 147–151. https://doi.org/10.1109/VRW58643.2023.00037

Rawat KS, Sood SK (2021) Knowledge map** of computer applications in education using CiteSpace. Comput Appl Eng Educ 29:1324–1339

Grigsby Scott S (2018) Artificial intelligence for advanced human-machine symbiosis. In: Schmorrow DD, Fidopiastis CM (eds) Augmented cognition: intelligent technologies. Springer International Publishing, Cham, pp 255–266. https://doi.org/10.1007/978-3-319-91470-1_22

Gomes CC, Preto S (2018) Artificial intelligence and interaction design for a positive emotional user experience. In: Karwowski W, Ahram T(eds) Intelligent Human Systems Integration. IHSI 2018. Advances in intelligent systems and computing, Springer

Zhang C, Lu Y (2021) Study on artificial intelligence: The state of the art and future prospects[J]. J Ind Inf Integr 2021(23-):23

Ahamed MM (2017) Analysis of human machine interaction design perspective-a comprehensive literature review. Int J Contemp Comput Res 1(1):31–42

Li X (2020) Human–robot interaction based on gesture and movement recognition. Signal Process: Image Commun 81:115686

Majaranta P, Räihä K-J, Aulikki H, Špakov O (2019) Eye movements and human-computer interaction. In: Klein C, Ettinger U (eds) Eye movement research: an introduction to its scientific foundations and applications. Springer International Publishing, Cham, pp 971–1015

Bi L, Pan C, Li J, Zhou J, Wang X, Cao S (2023) Discourse-based psychological intervention alleviates perioperative anxiety in patients with adolescent idiopathic scoliosis in China: A retrospective propensity score matching analysis. BMC Musculoskelet Disord 24(1):422. https://doi.org/10.1186/s12891-023-06438-2

Maybury M (1998) Intelligent user interfaces: An introduction. In: Proceedings of the 4th international conference on intelligent user interfaces. Association for Computing Machinery, New York, NY, pp 3–4. https://doi.org/10.1145/291080.291081

Jaimes A, Sebe N (2005) Multimodal human computer interaction: A survey. Lect Notes Comput Sci 3766:1

Qiu Y (2004) Evolution and trends of intelligent user interfaces. Comput Sci. https://api.semanticscholar.org/CorpusID:63801008

Zhao Y, Wen Z (2022) Interaction design system for artificial intelligence user interfaces based on UML extension mechanisms. IOS Press 2022. https://doi.org/10.1155/2022/3534167

Margienė A, Simona R (2019) Trends and challenges of multimodal user interfaces. In: 2019 Open Conference of Electrical, Electronic and Information Sciences (eStream), pp 1–5. https://doi.org/10.1109/eStream.2019.8732156

Maybury MT (1998) Intelligent user interfaces: an introduction. In: International conference on intelligent user interfaces. https://api.semanticscholar.org/CorpusID:12602078

Stavros M, Nikolaos B (2016) A survey on human machine dialogue systems. In: 2016 7th International conference on information, intelligence, systems & applications (IISA), pp 1–7. https://doi.org/10.1109/IISA.2016.7785371

Vinoj PG, Jacob S, Menon VG, Balasubramanian V, Piran J (2021) IoT-powered deep learning brain network for assisting quadriplegic people. Comput Electr Eng 92:107113. https://doi.org/10.1016/j.compeleceng.2021.107113

Peruzzini M, Grandi F, Pellicciari M (2017) Benchmarking of tools for user experience analysis in industry 4.0. Procedia Manuf 11:806–813

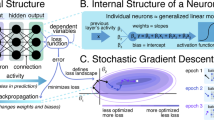

Mahesh B (2020) Machine learning algorithms-a review. Int J Sci Res (IJSR) 9:381–386

LeCun Y, Bengio Y, Hinton G (2015) Deep learning. Nature 521:436–444

Cantoni V, Cellario M, Porta M (2004) Perspectives and challenges in e-learning: towards natural interaction paradigms. J Vis Lang Comput 15(5):333–345

Changhoon O, Jungwoo S, **han C et al (2018) You help but only with enough details: Understanding user experience of co-creation with artificial intelligence. Assoc Comput Mach Pap 649:1–13

Turk M (2005) multimodal human-computer interaction. In: Kisačanin B, Pavlović V, Huang TS (eds) Real-time vision for human-computer interaction. Springer, Boston, MA, pp 269–283. https://doi.org/10.1007/0-387-27890-7_16

Singh SK, Chaturvedi A (2022) A reliable and efficient machine learning pipeline for American sign language gesture recognition using EMG sensors. Multimed Tools Appl 82(15):23833–23871. https://doi.org/10.1007/s11042-022-14117-y

Zhang J, Qiu X, Li X, Huang Z, Mingqiu W, Yumin D, Daniele B (2021) Support vector machine weather prediction technology based on the improved quantum optimization algorithm, vol 2021, Hindawi Limited, London, GBR. https://doi.org/10.1155/2021/6653659

Giatsintov A, Kirill M, Pavel B (2023) Architecture of the graphics system for embedded real-time operating systems. Tsinghua Sci Technol 28(3):541–551. https://doi.org/10.26599/TST.2022.9010028

Lee S, Jeeyun O, Moon W-K (2022) Adopting voice assistants in online shop**: examining the role of social presence, performance risk, and machine heuristic. Int J Hum–Comput Int 39:2978–2992. https://api.semanticscholar.org/CorpusID:250127863

Johannes P (2005) Spoken dialogue technology: toward the conversational user interface by Michael F. McTear. Comput Linguist 31(3):403–416. https://doi.org/10.1162/089120105774321136

Du Y, Qin J, Zhang S, et al (2018) Voice user interface interaction design research based on user mental model in autonomous vehicle. In: Kurosu M (eds) Human-computer interaction. Interaction technologies. HCI 2018. Lecture notes in computer science. Springer

Koni YJ, Al-Absi MA, Saparmammedovich SA, Jae LH (2020) AI-based voice assistants technology comparison in term of conversational and response time. Springer-Verlag, Berlin, Heidelberg, pp 370–379. https://doi.org/10.1007/978-3-030-68452-5_39

Li M, Li F, Pan J et al (2021) The MindGomoku: An online P300 BCI game based on bayesian deep learning. Sensors 21:1613

Alnuaim AA, Mohammed Z, Aseel A, Chitra S, Atef HW, Hussam T, Kumar SP, Rajnish R, Vijay K (2022) Human-computer interaction with detection of speaker emotions using convolution neural networks, vol 2022. Hindawi Limited, London, GBR. https://doi.org/10.1155/2022/7463091

Charissis V, Falah J, Lagoo R et al (2021) Employing emerging technologies to develop and evaluate in-vehicle intelligent systems for driver support: Infotainment AR HUD case study. Appl Sci 11:1397

Fan Y, Yang J, Chen J, et al (2021) A digital-twin visualized architecture for flexible manufacturing system. J Manuf Syst 2021(60-):60

Wang T, Li J, Kong Z, Liu X, Snoussi H, Lv H (2021) Digital twin improved via visual question answering for vision-language interactive mode in human–machine collaboration. J Manuf Syst 58:261–269. https://doi.org/10.1016/j.jmsy.2020.07.011

Tian W, Jiakun L, Yingjun D et al (2021) Digital twin for human-machine interaction with convolutional neural network. Int J Comput Integr Manuf 34(7–8):888–897

Zhang Q, Wei Y, Liu Z et al (2023) A framework for service-oriented digital twin systems for discrete workshops and its practical case study. Systems 11:156

El OI, Benouini R, Zenkouar K et al (2022) RGB-D feature extraction method for hand gesture recognition based on a new fast and accurate multi-channel cartesian Jacobi moment invariants. Multimed Tools Appl 81:12725–12757

Miah ASM, Shin J, Hasan MAM et al (2022) BenSignNet: Bengali sign language alphabet recognition using concatenated segmentation and convolutional neural network. Appl Sci 12:3933

Munea TL, Jembre YZ, Weldegebriel HT et al (2020) The progress of human pose estimation: A survey and taxonomy of models applied in 2D human pose estimation. IEEE Access, pp 133330–133348

**a H, Lei H, Yang J, Rahim K (2022) Human behavior recognition in outdoor sports based on the local error model and convolutional neural network, vol 2022. Hindawi Limited, London, GBR, pp 1687–5265. https://doi.org/10.1155/2022/6988525

Malibari AA, Alzahrani JS, Qahmash A (2022) Quantum water strider algorithm with hybrid-deep-learning-based activity recognition for human-computer interaction. Appl Sci 12:6848

Jia N, Zheng C, Sun W (2022) A multimodal emotion recognition model integrating speech, video and MoCAP. Multimed Tools Appl 81:32265–32286

Zhou Y, Feng Y, Zeng S, Pan B (2019) Facial expression recognition based on convolutional neural network. In: 2019 IEEE 10th International conference on software engineering and service science (ICSESS). IEEE, pp 410–413. https://doi.org/10.1109/ICSESS47205.2019.9040730

Yan T, Zhang ** WS, Haoxiang W (2021) Facial expression recognition using frequency neural network. IEEE Tran Image Process 30:444–457. https://doi.org/10.1109/TIP.2020.3037467

Bhavani R, Vijay MT, Kumar TR, Jonnadula N, Murali K, Harpreet K (2022) Deep learning techniques for speech emotion recognition. In: 2022 International conference on futuristic technologies (INCOFT), pp 1–5. https://doi.org/10.1109/INCOFT55651.2022.10094534

Lee CH, Yang HC, Su XQ (2022) A multimodal affective sensing model for constructing a personality-based financial advisor system. Appl Sci 12:10066

Haijuan D, Minglong L, Gengxin S (2022) Personalized smart clothing design based on multimodal visual data detection, vol 2022. Hindawi Limited, London, GBR. https://doi.org/10.1155/2022/4440652

Raptis GE, Kavvetsos G, Katsini C (2021) MuMIA: Multimodal interactions to better understand art contexts. Appl Sci 11:2695

Kumar PS, Singh SH, Shalendar B, Ravi J, Prasanna SRM (2022) Alzheimer's dementia recognition using multimodal fusion of speech and text embeddings. In: Kim J-H, Madhusudan S, Javed K, Shanker TU, Marigankar S, Dhananjay S (eds) Intelligent human computer interaction. Springer International Publishing, Cham, pp 718–728. https://doi.org/10.1007/978-3-030-98404-5_64

Šumak B, Brdnik S, Pusnik M (2021) Sensors and artificial intelligence methods and algorithms for human–computer intelligent interaction: A systematic map** study. Sensors 22. https://api.semanticscholar.org/CorpusID:245441300

Karpov AA, Yusupov RM (2018) Multimodal interfaces of human-computer interaction. Her Russ Acad Sci 88:67–74

Mobeen N, Muhammad Mansoor A, Eiad Y, Mazliham Mohd S (2021) A systematic review of human–computer interaction and explainable artificial intelligence in healthcare with artificial intelligence techniques. IEEE Access 9:153316–153348. https://doi.org/10.1109/ACCESS.2021.3127881

Diederich S, Brendel AB, Morana S et al (2022) (2022) on the design of and interaction with conversational agents: An organizing and assessing review of human-computer interaction research. J Assoc Inf Syst 1:23

Sheetal K, Shruti P, Jyoti C, Ketan K, Sashikala M, Ajith A (2022) AI-based conversational agents: a sco** review from technologies to future directions. IEEE Access 10:92337–92356. https://doi.org/10.1109/ACCESS.2022.3201144

Ren F, Bao Y (2020) A review on human-computer interaction and intelligent robots. Int J Inf Technol Decis Mak 19:5–47. https://api.semanticscholar.org/CorpusID:213516319

Vail EF III (1999) Knowledge map**: getting started with knowledge management. Inf Systs Manag 16(4):16–23. https://doi.org/10.1201/1078/43189.16.4.19990901/31199.3

Lin S, Shen T, Guo W (2021) Evolution and emerging trends of kansei engineering: A visual analysis based on CiteSpace. IEEE Access 9:111181–111202

Eck NJ, Waltman L (2010) Software survey: VOSviewer, a computer program for bibliometric map**. Scientometrics 84:523–538

Cobo MJ, López-Herrera AG, Herrera-Viedma EE et al (2012) SciMAT: A new science map** analysis software tool. J Am Soc Inform Sci Technol 63(8):1609–1630

Chaomei C (2018) visualizing and exploring scientific literature with citespace: an introduction. association for computing machinery. In: CHIIR '18, New York, NY, pp 369–370

Wei F, Grubesic TH, Bishop BW (2015) Exploring the gis knowledge domain using citespace. Prof Geogr 67:374–384

Zhong S, Chen R, Song F et al (2019) Knowledge map** of carbon footprint research in a LCA perspective: A visual analysis using CiteSpace. Processes 7:818

Jiaxi Y, Hong L (2022) Visualizing the knowledge domain in urban soundscape: A scientometric analysis based on CiteSpace. Int J Environ Res Public Health 19(21):13912. https://www.mdpi.com/1660-4601/19/21/13912

Chen Y, Wang Y, Zhou D (2021) Knowledge map of urban morphology and thermal comfort: A bibliometric analysis based on CiteSpace. Buildings 11:427

Chen C (2017) Science map**: A systematic review of the literature. J Data Inform Sci 2:1–40

Li K, ** Y, Akram MW et al (2020) Facial expression recognition with convolutional neural networks via a new face crop** and rotation strategy. Vis Comput 36:391–404

Wang J, **nyu S (2019) Human motion modeling based on single action context. In: 2019 4th International conference on communication and information systems (ICCIS), pp 89–94. https://doi.org/10.1109/ICCIS49662.2019.00022

**an Z, Zhan S, Jian G, Yanguo Z, Feng Z (2012) Real-time hand gesture detection and recognition by random forest. In: Maotai Z, Junpin S (eds) Communications and information processing. Springer, Berlin Heidelberg, pp 747–755. https://doi.org/10.1007/978-3-642-31968-6_89

Yong Z, Dong W, Hu B-G, Qiang J (2018) Weakly-supervised deep convolutional neural network learning for facial action unit intensity estimation. In: 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition. IEEE, pp 2314–2323. https://doi.org/10.1109/CVPR.2018.00246

Guo Y, Liu W, Wei D, Chen Q (2019) Emotional recognition based on EEG signals comparing long-term and short-term memory with gated recurrent unit using batch normalization. https://api.semanticscholar.org/CorpusID:181831183

Afsar MM, Saqib S, Ghadi YY, Alsuhibany SA, Jalal A, Park J (2022) Body worn sensors for health gaming and e-Learning in virtual reality. Comput Mater Contin. https://api.semanticscholar.org/CorpusID:251164186

Li X, Li Y (2022) Sports training strategies and interactive control methods based on neural network models. Comput Intell Neurosci 2022. https://api.semanticscholar.org/CorpusID:247332344

Ahn J, Nguyen TP, Kim Y-J, Kim T, Yoon J (2022) Automated analysis of three-dimensional CBCT images taken in natural head position that combines facial profile processing and multiple deep-learning models. Comput Methods Programs Biomed 226:107123. https://doi.org/10.1016/j.cmpb.2022.107123

** Y, Cho S, Fong S et al (2016) Gesture recognition method using sensing blocks. Wireless Pers Commun 91:1779–1797

Kaiming H, **angyu Z, Shaoqing R, Jian S (2016) Deep residual learning for image recognition. In: 2016 IEEE Conference on computer vision and pattern recognition (CVPR). IEEE, pp 770–778. https://doi.org/10.1109/CVPR.2016.90

Simonyan K, Zisserman A (2014) Very deep convolutional networks for large-scale image recognition. CoRR:abs/1409.1556. https://api.semanticscholar.org/CorpusID:14124313

Keselj A, Milicevic M, Zubrinic K et al The application of deep learning for the evaluation of user interfaces. Sensors 22:9336

Khalil RA, Jones E, Babar MI et al (2019) Speech emotion recognition using deep learning techniques: A Review. IEEE Access 7:117327–117345

Bai J (2009) Panel data models with interactive fixed effects. Econometrica 77(4):1229–1279

Wu F (2016) Study on composition and development of the database management system. In: Proceedings of the 2nd international conference on advances in mechanical engineering and industrial informatics (AMEII 2016). Atlantis Press, pp 159–163. https://doi.org/10.2991/ameii-16.2016.33

Ozcan T, Basturk A (2020) Static facial expression recognition using convolutional neural networks based on transfer learning and hyperparameter optimization. Multimed Tools Appl 79:26587–26604

Chen W, Yu C, Tu C et al (2020) A survey on hand pose estimation with wearable sensors and computer-vision-based methods. Sensors 20(4):1074

Lv T, **aojuan W, Lei J, Yabo X, Mei S (2020) A hybrid network based on dense connection and weighted feature aggregation for human activity recognition. IEEE Access 8:68320–68332. https://doi.org/10.1109/ACCESS.2020.2986246

Belhi A, Ahmed H, Alfaqheri T, Bouras A, Sadka AH, Foufou S (2023) An integrated framework for the interaction and 3D visualization of cultural heritage. Multimed Tools Appl 1–29. https://api.semanticscholar.org/CorpusID:255770349

Echeverry-Correa JD, Martínez González B, Hernández R, Córdoba Herralde R de, Ferreiros López J (2014) Dynamic topic-based adaptation of language models: a comparison between different approaches. https://api.semanticscholar.org/CorpusID:62300998

Souza KES, Seruffo MCR, De Mello Harold D, Da Souza Daniel S, MBR VM, (2019) User experience evaluation using mouse tracking and artificial intelligence. IEEE Access 7:96506–96515. https://doi.org/10.1109/ACCESS.2019.2927860

Shubhajit B, Peter C, Faisal K, Rachel M, Michael S (2021) Learning 3D head pose from synthetic data: A semi-supervised approach. IEEE Access 9:37557–37573. https://doi.org/10.1109/ACCESS.2021.3063884

Yu-Wei C, Soo-Chang P (2022) Domain adaptation for underwater image enhancement via content and style separation. IEEE Access 10:90523–90534. https://doi.org/10.1109/ACCESS.2022.3201555

Padfield N, Camilleri K, Camilleri T (2022) A comprehensive review of endogenous EEG-based BCIs for dynamic device control. Sensors 22:5802

Jacob S, Mukil A, Menon Varun G, Manoj KB, Jhanjhi NZ, Vasaki P, Shynu PG, Venki B (2020) An adaptive and flexible brain energized full body exoskeleton with IoT edge for assisting the paralyzed patients. IEEE Access 8:100721–100731. https://doi.org/10.1109/ACCESS.2020.2997727

Tang J, Liu Y, Jiang J et al (2019) Toward brain-actuated Mobile platform. Int J Hum-Comput Interact 35(10):846–858

** W (eds) Artificial intelligence. Springer Nature Switzerland, Cham, pp 548–553. https://doi.org/10.1007/978-3-031-20503-3_47

Choi DY, Deok-Hwan K, Cheol SB (2020) Multimodal attention network for continuous-time emotion recognition using video and EEG signals. IEEE Access 8:203814–203826. https://doi.org/10.1109/ACCESS.2020.3036877

Pérez FD, García-Méndez S, González-Castaño FJ et al (2021) Evaluation of abstraction capabilities and detection of discomfort with a newscaster chatbot for entertaining elderly users. Sensors 21:5515

**ong S, Wang R, Huang X (2022) Multidimensional latent semantic networks for text humor recognition. Sensors 22:5509

Wang H, Zhang Y, Yu X (2020) An overview of image caption generation methods. Comput Intell Neurosci 2020. https://api.semanticscholar.org/CorpusID:210956524

Casini L, Marchetti N, Montanucci A et al (2023) A human–AI collaboration workflow for archaeological sites detection. Sci Rep 13:8699

Narek M, Charles A-D, Alain P, Jean-Marc A, Didier S (2022) Human intelligent machine teaming in single pilot operation: a case study. In: Schmorrow DD, Fidopiastis CM (eds) Augmented cognition. Springer International Publishing, Cham, pp 348–360. https://doi.org/10.1007/978-3-031-05457-0_27

Foucher J, Anne-Claire C, Le GK, Thomas R, Valérie J, Thomas D, Jerémie L, Marielle P-R, François D, Grunwald Arthur J, Jean-Christophe S, Bardy Benoît G (2022) Simulation and classification of spatial disorientation in a flight use-case using vestibular stimulation. IEEE Access 10:104242–104269. https://doi.org/10.1109/ACCESS.2022.3210526

Funding

This research is funded by the State Key Laboratory of Mechanical Systems and Vibrations of China (Grant No. MSV202014); the Fundamental Research Funds for the Central Universities of China (HUST: 2020kfyXJJS014); and the Major Philosophy and Social Science Research Project for Hubei Province Colleges and Universities of China (Grant No. 20ZD002).

Ethics declarations

Conflicts of interest

The authors declare that they have no conflict of interest.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Ding, Z., Ji, Y., Gan, Y. et al. Current status and trends of technology, methods, and applications of Human–Computer Intelligent Interaction (HCII): A bibliometric research. Multimed Tools Appl (2024). https://doi.org/10.1007/s11042-023-18096-6

DOI: https://doi.org/10.1007/s11042-023-18096-6