Abstract

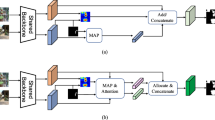

Few-shot semantic segmentation (FSS) aims to segment novel categories in a query image by referring to a support set of only a few annotated examples. Most existing FSS methods adopt the prototype learning paradigm and concentrate on optimizing the matching mechanism. However, these approaches tend to overlook the discrimination between foreground and background features. Consequently, the segmentation results are often imprecise for intricate structures such as boundaries and small objects. In this study, we introduce the Discriminative Foreground-and-Background feature learning Network (DFBNet) to make the foreground and background features more distinguishable. DFBNet comprises three major modules: a multi-level self-matching module (MSM), a feature separation module (FSM), and a semantic alignment module (SAM). The MSM generates separate prior masks for the foreground and background by applying a self-matching strategy across different feature levels. These prior masks are then used as scaling factors in the FSM, where the query’s foreground and background features are independently scaled up and then concatenated along the channel dimension. Finally, the SAM, built on a two-layer Transformer encoder, refines the features and further widens the distinction between foreground and background. We evaluate DFBNet on the PASCAL-\(5^i\) and COCO-\(20^i\) benchmarks, where it outperforms existing solutions and establishes new state-of-the-art results for few-shot semantic segmentation. The code will be released if this paper is accepted.
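To make the feature separation step concrete, the following is a minimal NumPy sketch of scaling query features by foreground and background prior masks and concatenating the results along the channel dimension. It is not the DFBNet implementation: the function name `separate_features`, the tensor layout `(C, H, W)`, and the scaling rule `1 + prior` are all assumptions chosen for illustration, and the prior masks are taken as given inputs rather than produced by a self-matching module.

```python
import numpy as np


def separate_features(query_feat, fg_prior, bg_prior):
    """Scale query features by two prior masks and concatenate on channels.

    query_feat: array of shape (C, H, W), the query feature map.
    fg_prior, bg_prior: arrays of shape (H, W) with values in [0, 1].
    Returns an array of shape (2 * C, H, W).
    """
    # Assumed scaling rule (hypothetical): multiply by (1 + prior), so
    # locations with a high prior are amplified and others are left as-is.
    fg_feat = query_feat * (1.0 + fg_prior[None, :, :])
    bg_feat = query_feat * (1.0 + bg_prior[None, :, :])
    # Concatenate the two scaled copies along the channel axis (axis 0).
    return np.concatenate([fg_feat, bg_feat], axis=0)


# Toy usage: a 2-channel, 2x2 feature map with a single foreground pixel.
feat = np.ones((2, 2, 2))
fg = np.array([[1.0, 0.0], [0.0, 0.0]])
bg = 1.0 - fg
out = separate_features(feat, fg, bg)
```

In this toy input, the first `C` output channels emphasize the single foreground location and the last `C` channels emphasize the remaining background locations, which is the kind of separation a later refinement stage could build on.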

Data availability statement

The images used in this paper all come from well-known image repositories that are publicly accessible. Intermediate data or results generated and/or analysed during the current study are available from the corresponding author on reasonable request.

Acknowledgements

This paper was supported by the 111 Project (B16009). The authors would like to thank the handling editor and anonymous reviewers for their constructive suggestions on improving the quality of this paper.

Ethics declarations

Conflicts of interests

The authors declare no known conflict of interests including funding and/or competing financial interests or personal relationships that could have appeared to influence the work reported in this paper.

About this article

Cite this article

Jiang, C., Zhou, Y., Liu, Z. et al. Learning discriminative foreground-and-background features for few-shot segmentation. Multimed Tools Appl 83, 55999–56019 (2024). https://doi.org/10.1007/s11042-023-17708-5

DOI: https://doi.org/10.1007/s11042-023-17708-5