Abstract

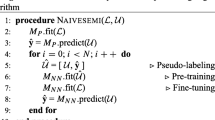

Semi-supervised regression aims to improve the performance of a learner with the help of unlabeled data. Popular approaches select a subset of unlabeled data with high-quality pseudo-labels to enrich the training set. In this paper, we propose a new approach that combines a semi-supervised regressor, a learner, and a corresponding loss function. First, an off-the-shelf semi-supervised regressor is trained to provide pseudo-labels for all unlabeled data. These labels are often reliable enough to guide the learning process. Second, we design a neural network with dropout that is trained on inputs perturbed with Gaussian noise, which enhances the robustness of the learner. Third, we design a loss that is a weighted sum of the supervised and unsupervised losses. The weight of the pseudo-label term ramps up over time, so the learner pays increasing attention to the pseudo-labels as training proceeds. Six state-of-the-art algorithms are employed as base regressors in our framework. Results on 15 real-world data sets show that our model significantly improves on the respective base regressor on most data sets.
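The loss described in the third step can be sketched in plain Python. This is a minimal, hypothetical illustration, not the authors' implementation. The abstract does not give the exact ramp-up schedule, so the sketch assumes the Gaussian-shaped curve exp(-5(1 - t)^2) popularised by temporal ensembling (Laine and Aila, 2017). It also assumes mean squared error for both loss terms and uses hypothetical names (`ramp_up_weight`, `combined_loss`, `add_gaussian_noise`, `ramp_epochs`, `w_max`). The pseudo-labels are taken as given, produced by the off-the-shelf regressor of the first step. Dropout lives inside the network and is not shown.

```python
import math
import random
from typing import Sequence


def ramp_up_weight(epoch: int, ramp_epochs: int, w_max: float = 1.0) -> float:
    """Weight of the unsupervised (pseudo-label) loss at a given epoch.

    Assumed schedule: w_max * exp(-5 * (1 - t)^2), with t = epoch / ramp_epochs
    clipped to [0, 1]. The exact BSRU schedule may differ.
    """
    if ramp_epochs <= 0:
        return w_max  # no ramp-up phase: use the full weight immediately
    t = min(max(epoch / ramp_epochs, 0.0), 1.0)
    return w_max * math.exp(-5.0 * (1.0 - t) ** 2)


def mse(pred: Sequence[float], target: Sequence[float]) -> float:
    """Mean squared error between two equal-length sequences."""
    return sum((p - y) ** 2 for p, y in zip(pred, target)) / len(pred)


def combined_loss(pred_labeled, y_labeled, pred_unlabeled, pseudo_labels,
                  epoch, ramp_epochs, w_max=1.0):
    """Supervised MSE on labeled data plus ramped-up MSE against pseudo-labels."""
    supervised = mse(pred_labeled, y_labeled)
    unsupervised = mse(pred_unlabeled, pseudo_labels)
    return supervised + ramp_up_weight(epoch, ramp_epochs, w_max) * unsupervised


def add_gaussian_noise(x: Sequence[float], sigma: float, rng: random.Random):
    """Perturb one input vector with i.i.d. N(0, sigma^2) noise before it reaches the learner."""
    return [xi + rng.gauss(0.0, sigma) for xi in x]


if __name__ == "__main__":
    # Show how the pseudo-label weight grows over a 10-epoch ramp-up.
    for epoch in (0, 5, 10, 20):
        print(epoch, round(ramp_up_weight(epoch, ramp_epochs=10), 4))
```

Early in training the pseudo-label term is almost switched off (its weight starts at about exp(-5), roughly 0.0067 of `w_max`), so the labeled data dominate. Once `epoch` reaches `ramp_epochs`, both terms count with full weight.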

Acknowledgements

This work was supported by the National Social Science Foundation of China under Grant No. 22FZXB092. We thank Yan-Xue Wu for his valuable suggestions.

Author information

Contributions

CRediT authorship contribution statement: Liyan Liu contributed methodology, software, and writing (original draft); Haimin Zuo contributed formal analysis and writing (review and editing); Fan Min contributed conceptualization, supervision, funding acquisition, and writing (review and editing).

Ethics declarations

Conflict of interest

The authors declare that they have no known competing financial interests or personal relationships that could have appeared to influence the work reported in this paper.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Liu, L., Zuo, H. & Min, F. BSRU: boosting semi-supervised regressor through ramp-up unsupervised loss. Knowl Inf Syst 66, 2769–2797 (2024). https://doi.org/10.1007/s10115-023-02044-9

DOI: https://doi.org/10.1007/s10115-023-02044-9