Abstract

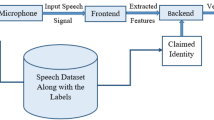

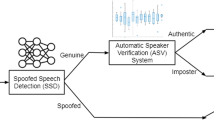

Automatic speaker verification (ASV) is the task of authenticating the claimed identity of a speaker from their voice characteristics. Despite the improved performance of deep neural network (DNN)-based ASV systems, recent investigations have exposed their vulnerability to adversarial attacks. Although the literature suggests a few defense strategies to mitigate this threat, most works do not explain the characteristics of adversarial noise or its effect on speech signals. Understanding how adversarial noise alters signal characteristics helps in devising effective defenses. A closer analysis of adversarial noise reveals that the adversary predominantly manipulates the low-energy regions in the time–frequency representation of the test speech signal to overturn the ASV system's decision. Inspired by this observation, we employ spectral masking techniques to block the information flow from the low-energy regions of the magnitude spectrogram. We observe that an ASV system trained with masked spectral features is more robust to adversarial examples than one trained on raw features. In addition, the proposed spectral masking strategy is compared with adversarial training, the most widely used defense. The proposed method offers relative improvements of 17.6% and 23.7% over the adversarial training defense for attacks at 48 dB and 33 dB SNR, respectively. Finally, a feature sensitivity analysis is performed to demonstrate the robustness of the proposed approach against adversarial attacks.
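The abstract does not state the exact masking rule, so what follows is only a minimal NumPy sketch of the general idea under stated assumptions. The STFT settings (512-point Hann window, 160-sample hop, i.e., 32 ms / 10 ms at 16 kHz), the fixed -40 dB threshold relative to the loudest bin of the utterance, the `log1p` compression, and all helper names (`low_energy_mask`, `masked_log_spectrogram`, `scale_to_snr`) are hypothetical illustrations, not the authors' implementation. The sketch zeroes time–frequency bins that sit far below the peak energy, so a perturbation confined to those bins does not reach the features, and adds an SNR helper showing what a 48 dB or 33 dB perturbation budget means as a signal-to-perturbation energy ratio.

```python
import numpy as np


def magnitude_spectrogram(x, n_fft=512, hop=160):
    """Hann-windowed magnitude STFT, shape (frames, n_fft // 2 + 1).
    Assumes len(x) >= n_fft."""
    window = np.hanning(n_fft)
    n_frames = 1 + (len(x) - n_fft) // hop
    frames = np.stack([x[i * hop:i * hop + n_fft] * window for i in range(n_frames)])
    return np.abs(np.fft.rfft(frames, axis=1))


def low_energy_mask(mag, threshold_db=-40.0):
    """Hypothetical rule: keep bins within |threshold_db| dB of the loudest bin
    in the utterance and zero out the remaining low-energy bins."""
    eps = 1e-10
    rel_db = 20.0 * np.log10(mag + eps) - 20.0 * np.log10(mag.max() + eps)
    return (rel_db >= threshold_db).astype(mag.dtype)


def masked_log_spectrogram(x, threshold_db=-40.0, n_fft=512, hop=160):
    """Apply the mask before log compression; log1p keeps masked bins at exactly 0."""
    mag = magnitude_spectrogram(x, n_fft, hop)
    mask = low_energy_mask(mag, threshold_db)
    return np.log1p(mag * mask), mask


def snr_db(clean, perturbation):
    """Signal-to-perturbation ratio in dB: 10 * log10(E_signal / E_perturbation)."""
    return 10.0 * np.log10(np.sum(clean ** 2) / np.sum(perturbation ** 2))


def scale_to_snr(clean, perturbation, target_snr_db):
    """Rescale a perturbation so that snr_db(clean, result) equals target_snr_db."""
    gain_db = snr_db(clean, perturbation) - target_snr_db
    return perturbation * 10.0 ** (gain_db / 20.0)


# Demo on a synthetic 1 s, 16 kHz signal: a 440 Hz tone plus faint noise.
rng = np.random.default_rng(0)
sr = 16000
t = np.arange(sr) / sr
x = 0.5 * np.sin(2 * np.pi * 440 * t) + 0.001 * rng.standard_normal(sr)

feats, mask = masked_log_spectrogram(x)
delta = scale_to_snr(x, rng.standard_normal(sr), target_snr_db=48.0)

print(feats.shape)                 # (97, 257)
print(round(snr_db(x, delta), 6))  # 48.0
print(f"fraction of bins kept: {mask.mean():.3f}")
```

Because masked bins are set to exactly zero before compression, energy added to them is discarded unless the perturbation lifts a bin above the threshold. A per-frame threshold or a percentile rule would be equally plausible variants of this sketch.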

Data availability

The datasets generated and/or analyzed during the current study are available in the VoxCeleb1 repository, https://www.robots.ox.ac.uk/~vgg/data/voxceleb. The noises used for data augmentation are available at https://www.openslr.org/17 and https://www.openslr.org/28.

Notes

Python WebRTC VAD interface: https://github.com/wiseman/py-webrtcvad.

Acknowledgements

This work was supported by DST National Mission Interdisciplinary Cyber-Physical Systems (NM-ICPS), Technology Innovation Hub on Autonomous Navigation and Data Acquisition Systems: TiHAN Foundations at Indian Institute of Technology (IIT) Hyderabad.

Ethics declarations

Conflict of interest

We declare that we have no known competing financial interests or personal relationships that could have appeared to influence the work reported in this paper.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Sreekanth, S., Sri Rama Murty, K. Defending Adversarial Attacks Against ASV Systems Using Spectral Masking. Circuits Syst Signal Process (2024). https://doi.org/10.1007/s00034-024-02665-7

DOI: https://doi.org/10.1007/s00034-024-02665-7