Abstract

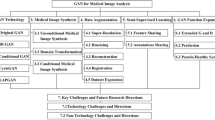

Medical imaging, a cornerstone of disease diagnosis and treatment planning, is hampered by subjective interpretation and a reliance on specialized expertise. Deep learning algorithms have shown promise in automating medical image analysis, reducing radiologists' workload and potentially improving patient outcomes. However, these algorithms require large quantities of high-quality labelled data for effective training and refinement. This paper proposes an approach that combines few-shot learning (FSL) and generative adversarial networks (GANs) to overcome the limitations of conventional methods in medical image classification. FSL, which can learn from a limited number of labelled examples, holds promise in scenarios where labelled data are scarce. However, the lack of interpretability in existing FSL models impedes their clinical adoption. To address this, the paper proposes an explainable FSL network, "MTUNet++," which integrates an attention mechanism to emphasize the relevant regions in medical images. Furthermore, an integrated GAN enhances the performance of MTUNet++ by generating synthetic medical images, and systematically eliminating misleading synthetic images improves the reliability and accuracy of medical image classification. Empirical evaluation on benchmark datasets demonstrates the effectiveness of the approach, which achieves accuracies of 85.19% on the HAM10000 dataset and 69.28% on the Kvasir dataset. This paper contributes to advancing AI-driven solutions in clinical practice, supporting improved patient care and streamlined workflows in real-world healthcare settings.
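
The abstract combines two ideas: learning from a handful of labelled examples, and discarding misleading synthetic images. The Python sketch below illustrates both using a generic nearest-prototype few-shot classifier in the style of prototypical networks. It is not MTUNet++ itself, whose attention mechanism is not reproduced here. Several details are hypothetical and are not taken from the paper:

- the function names `prototypes`, `classify`, and `filter_synthetic`;
- the toy 2-D embeddings and the class labels;
- the 0.8 confidence threshold.

In practice the embeddings would come from a trained backbone. The abstract does not specify the paper's actual criterion for eliminating synthetic images.

```python
import math
from typing import Dict, List, Sequence, Tuple

Vector = Sequence[float]


def prototypes(support: Dict[str, List[Vector]]) -> Dict[str, List[float]]:
    """Average the few labelled support embeddings of each class into one prototype."""
    protos: Dict[str, List[float]] = {}
    for label, vecs in support.items():
        dim = len(vecs[0])
        protos[label] = [sum(v[i] for v in vecs) / len(vecs) for i in range(dim)]
    return protos


def classify(x: Vector, protos: Dict[str, List[float]]) -> Tuple[str, float]:
    """Predict the nearest prototype; confidence is a softmax over negative squared distances."""
    logits = {
        label: -sum((a - b) ** 2 for a, b in zip(x, p)) for label, p in protos.items()
    }
    peak = max(logits.values())  # subtract the max for numerical stability
    exps = {label: math.exp(v - peak) for label, v in logits.items()}
    best = max(exps, key=exps.get)
    return best, exps[best] / sum(exps.values())


def filter_synthetic(
    synthetic: List[Tuple[Vector, str]],
    support: Dict[str, List[Vector]],
    min_conf: float = 0.8,  # hypothetical threshold, not taken from the paper
) -> List[Tuple[Vector, str]]:
    """Hypothetical filter: keep a synthetic sample only if prototypes built from
    real support images assign it to its intended class with enough confidence."""
    protos = prototypes(support)
    kept = []
    for x, intended in synthetic:
        pred, conf = classify(x, protos)
        if pred == intended and conf >= min_conf:
            kept.append((x, intended))
    return kept


if __name__ == "__main__":
    # Toy 2-D embeddings standing in for backbone features of real images.
    support = {"nevus": [[0.0, 0.0], [0.2, 0.0]], "melanoma": [[3.0, 3.0], [3.2, 3.0]]}
    # The second synthetic "nevus" sits next to the melanoma prototype, so it is dropped.
    synthetic = [([0.1, 0.1], "nevus"), ([3.0, 2.9], "nevus")]
    print(len(filter_synthetic(synthetic, support)))
```

The prototypes are built only from real support images. A synthetic sample therefore survives only if the real data agree with its intended label. GAN outputs that drift toward another class are discarded instead of being added as noisy training examples.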

Data Availability

Not applicable.

Funding

Ankit Kumar Titoriya receives a Ph.D. scholarship from the National Institute of Technology Patna.

Author information

Contributions

Ankit Kumar Titoriya contributed to conceptualization, methodology, and writing; Prof. Maheshwari Prasad Singh and Dr. Amit Kumar Singh contributed to validation, editing, and supervision. All authors read and approved the final manuscript.

Ethics declarations

Competing Interests

The authors declare no competing interests regarding this manuscript.

Ethics Approval

Not applicable.

Consent to Participate

Informed consent was not required for this study.

Consent to Publish

Not applicable.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Springer Nature or its licensor (e.g. a society or other partner) holds exclusive rights to this article under a publishing agreement with the author(s) or other rightsholder(s); author self-archiving of the accepted manuscript version of this article is solely governed by the terms of such publishing agreement and applicable law.

About this article

Cite this article

Titoriya, A.K., Singh, M.P. & Singh, A.K. MTUNet++: explainable few-shot medical image classification with generative adversarial network. Multimed Tools Appl (2024). https://doi.org/10.1007/s11042-024-19316-3

DOI: https://doi.org/10.1007/s11042-024-19316-3