Abstract

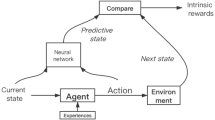

Sparse rewards and sample efficiency are open areas of research in reinforcement learning. These problems are especially important when applying reinforcement learning to robotics and other cyber-physical systems, because in these domains many tasks are goal-based and naturally expressed as binary successes and failures, action spaces are large and continuous, and real interactions with the environment are limited. In this work, we propose Deep Value-and-Predictive-Model Control (DVPMC), a model-based predictive reinforcement learning algorithm for continuous control that uses system identification, value function approximation and sampling-based optimization to select actions. The algorithm is evaluated on a dense reward task and a sparse reward task. We show that it can match the performance of a predictive control approach on the dense reward problem, and that it outperforms model-free and model-based learning algorithms on the sparse reward task in both sample efficiency and performance. We also verify the performance of an agent trained in simulation with DVPMC on a real robot playing the reach-avoid game. A video of the experiment is available at https://youtu.be/0Q274kcfn4c.
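The abstract names three ingredients for action selection: a learned model, a value function, and sampling-based optimization. The sketch below shows one common way such pieces can fit together, random-shooting model predictive control with a discounted terminal value, in the spirit of that description. It is not the authors' DVPMC implementation, and the paper's sampling-based optimizer may differ from random shooting. The 1-D point-mass model, the sparse reward band, the value function, and every parameter value (`horizon`, `num_samples`, `gamma`, `GOAL`) are hypothetical stand-ins chosen for illustration. In this toy setup a 5-step horizon starting from `s = 0` can move at most 0.5, so it never reaches the goal band and every sampled sequence earns zero reward; only the terminal value term ranks the candidates, which illustrates why a value estimate can help when rewards are sparse.

```python
import random
from typing import Callable, Optional

Model = Callable[[float, float], float]   # (state, action) -> next state
Reward = Callable[[float], float]         # next state -> reward
Value = Callable[[float], float]          # state -> estimated return


def plan_action(
    state: float,
    model: Model,
    reward: Reward,
    value: Value,
    horizon: int = 5,
    num_samples: int = 200,
    gamma: float = 0.99,
    action_bound: float = 1.0,
    rng: Optional[random.Random] = None,
) -> float:
    """Random-shooting MPC: roll sampled action sequences through the model,
    score each as sum_t gamma^t * r_{t+1} + gamma^H * V(s_H),
    and return the first action of the best-scoring sequence."""
    rng = rng or random.Random(0)
    best_score = float("-inf")
    best_first = 0.0
    for _ in range(num_samples):
        actions = [rng.uniform(-action_bound, action_bound) for _ in range(horizon)]
        s, score, discount = state, 0.0, 1.0
        for a in actions:
            s = model(s, a)                 # predicted next state
            score += discount * reward(s)   # discounted predicted reward
            discount *= gamma
        score += discount * value(s)        # bootstrap beyond the horizon
        if score > best_score:
            best_score, best_first = score, actions[0]
    return best_first


GOAL = 1.0  # hypothetical goal position


def toy_model(s: float, a: float) -> float:
    # Hypothetical stand-in for an identified dynamics model:
    # a 1-D point that moves 0.1 * a per step.
    return s + 0.1 * a


def sparse_reward(s: float) -> float:
    # Binary success signal: 1 only inside a small band around the goal.
    return 1.0 if abs(s - GOAL) < 0.05 else 0.0


def toy_value(s: float) -> float:
    # Hypothetical stand-in for a learned value function:
    # larger (less negative) closer to the goal.
    return -abs(s - GOAL)


def run_closed_loop(steps: int = 30, seed: int = 0) -> float:
    """Replan at every step and apply only the first planned action."""
    rng = random.Random(seed)
    s = 0.0
    for _ in range(steps):
        a = plan_action(s, toy_model, sparse_reward, toy_value, rng=rng)
        s = toy_model(s, a)  # on a real system this would be the true plant
    return s


if __name__ == "__main__":
    print(f"final state: {run_closed_loop():.3f} (goal {GOAL})")
```

Running the file prints the state reached after 30 closed-loop steps. In a real system, `toy_model` would be replaced by a model fitted to interaction data and `toy_value` by a trained function approximator; replanning at every step and executing only the first action is what makes the loop receding-horizon.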

Code Availability

Code for the DVPMC algorithm, the simulations, and the robotic experiments is available from the authors upon request at the given email addresses.

Acknowledgements

This work was partially funded by the Natural Sciences and Engineering Research Council of Canada and is based in part on a Master’s Thesis at Queen’s University.

Funding

This work was supported in part by the Natural Sciences and Engineering Research Council (NSERC) of Canada through a grant held by Dr. Sidney Givigi under the Discovery Grant Program, Grant 2022-04277.

Author information

Contributions

Luka Antonyshyn performed this study and wrote this manuscript during his Master of Science studies at Queen’s University under the supervision of Sidney Givigi. All authors discussed and approved the final manuscript.

Ethics declarations

Conflicts of Interest

The authors have no relevant conflicts of interest to declare.

Financial interests

The authors have no relevant financial or non-financial interests to disclose.

Ethics approval

Not applicable.

Consent to Participate

Not applicable.

Consent for Publication

Not applicable.

Additional information

Publisher's Note

Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

Rights and permissions

Open Access This article is licensed under a Creative Commons Attribution 4.0 International License, which permits use, sharing, adaptation, distribution and reproduction in any medium or format, as long as you give appropriate credit to the original author(s) and the source, provide a link to the Creative Commons licence, and indicate if changes were made. The images or other third party material in this article are included in the article's Creative Commons licence, unless indicated otherwise in a credit line to the material. If material is not included in the article's Creative Commons licence and your intended use is not permitted by statutory regulation or exceeds the permitted use, you will need to obtain permission directly from the copyright holder. To view a copy of this licence, visit http://creativecommons.org/licenses/by/4.0/.

About this article

Cite this article

Antonyshyn, L., Givigi, S. Deep Model-Based Reinforcement Learning for Predictive Control of Robotic Systems with Dense and Sparse Rewards. J Intell Robot Syst 110, 100 (2024). https://doi.org/10.1007/s10846-024-02118-y

DOI: https://doi.org/10.1007/s10846-024-02118-y