Abstract

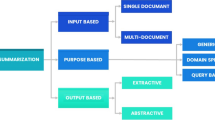

Transformer models have advanced natural language processing in machine learning and set a new standard for the state of the art. Thanks to the self-attention component, these models have achieved significant improvements in text generation tasks (such as extractive and abstractive text summarization). However, research on text summarization in the legal domain is still in its infancy, so benchmarks and comparative analyses of these state-of-the-art models are important for the future of this highly specialized task. To contribute to this research, the researchers propose a comparative analysis of different fine-tuned Transformer models and datasets, in order to provide a better understanding of the task at hand and the challenges ahead. The results show that Transformer models have improved upon the text summarization task; however, consistent and generalized learning remains a challenge when training the models on long input texts. Finally, after analyzing the correlation between objective results and human opinion, the team concludes that the Recall-Oriented Understudy for Gisting Evaluation (ROUGE) [13] metrics used in the current state of the art are limited and do not precisely reflect the quality of a generated summary.
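To make the ROUGE limitation concrete, the following is a minimal sketch of ROUGE-N computed from clipped n-gram overlap. It is not the evaluation code used in the paper: the function names, the whitespace tokenization, and the two example sentences are assumptions chosen only for illustration.

```python
from collections import Counter


def ngrams(tokens, n):
    """Return a multiset (Counter) of the n-grams in a token list."""
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def rouge_n(reference, candidate, n=1):
    """Compute ROUGE-N recall, precision, and F1 for one reference/candidate pair.

    Tokenization is lowercase whitespace splitting, an assumption of this
    sketch; real ROUGE packages add stemming and other normalization.
    """
    ref_counts = ngrams(reference.lower().split(), n)
    cand_counts = ngrams(candidate.lower().split(), n)
    # Clipped overlap: an n-gram counts only as often as it occurs in both texts.
    overlap = sum((ref_counts & cand_counts).values())
    ref_total = sum(ref_counts.values())
    cand_total = sum(cand_counts.values())
    recall = overlap / ref_total if ref_total else 0.0
    precision = overlap / cand_total if cand_total else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return {"recall": recall, "precision": precision, "f1": f1}


# Hypothetical sentences: the second candidate reverses the legal outcome.
reference = "the court dismissed the appeal"
faithful = "the court dismissed the appeal"
misleading = "the court granted the appeal"

print(rouge_n(reference, faithful, n=1))
print(rouge_n(reference, misleading, n=1))
print(rouge_n(reference, misleading, n=2))
```

In this toy case the misleading candidate shares four of the five reference unigrams, so its ROUGE-1 recall is 0.8 even though it states the opposite outcome. This is one way lexical-overlap scores can diverge from human judgments of summary quality.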

Notes

- 1.

- 2.

- 3. Spreadsheet with the benchmark: https://bit.ly/3HxYuPT.

References

Beltagy, I., Peters, M.E., Cohan, A.: Longformer: The long-document transformer. CoRR abs/2004.05150 (2020)

Burga-Gutierrez, E., Vasquez-Chauca, B., Ugarte, W.: Comparative analysis of question answering models for HRI tasks with NAO in Spanish. In: SIMBig (2020)

Chancolla-Neira, S.W., Salinas-Lozano, C.E., Ugarte, W.: Static summarization using Pearson’s coefficient and transfer learning for anomaly detection for surveillance videos. In: SIMBig (2020)

Chavez-Chavez, E., Zuta-Vidal, E.I.: El Acceso a La Justicia de Los Sectores Pobres a Propósito de Los Consultorios Jurídicos Gratuitos Pucp y la Recoleta de Prosode. Master’s thesis, Pontificia Universidad Católica del Perú (2015)

Devlin, J., Chang, M., Lee, K., Toutanova, K.: BERT: pre-training of deep bidirectional transformers for language understanding. In: NAACL-HLT (2019)

El-Kassas, W.S., Salama, C.R., Rafea, A.A., Mohamed, H.K.: Automatic text summarization: a comprehensive survey. Expert Syst. Appl. 165 (2021)

Huang, L., Cao, S., Parulian, N.N., Ji, H., Wang, L.: Efficient attentions for long document summarization. In: NAACL-HLT (2021)

Jain, D., Borah, M.D., Biswas, A.: Summarization of legal documents: where are we now and the way forward. Comput. Sci. Rev. 40, 100388 (2021)

Kanapala, A., Pal, S., Pamula, R.: Text summarization from legal documents: a survey. Artif. Intell. Rev. 51(3), 371–402 (2019)

Kornilova, A., Eidelman, V.: Billsum: A corpus for automatic summarization of US legislation. CoRR abs/1910.00523 (2019)

Lewis, M., et al.: BART: denoising sequence-to-sequence pre-training for natural language generation, translation, and comprehension. In: ACL (2020)

Liang, X., et al.: R-drop: Regularized dropout for neural networks. In: NeurIPS (2021)

Lin, C.Y.: ROUGE: a package for automatic evaluation of summaries. In: Proceedings of the ACL Workshop: Text Summarization Branches Out 2004 (2004)

Liu, L., Lu, Y., Yang, M., Qu, Q., Zhu, J., Li, H.: Generative adversarial network for abstractive text summarization. In: AAAI (2018)

Raffel, C., et al.: Exploring the limits of transfer learning with a unified text-to-text transformer. J. Mach. Learn. Res. 21, 1–67 (2020)

de Rivero, M., Tirado, C., Ugarte, W.: Formalstyler: GPT based model for formal style transfer based on formality and meaning preservation. In: KDIR (2021)

Shleifer, S., Rush, A.M.: Pre-trained summarization distillation. CoRR abs/2010.13002 (2020)

Vaswani, A., et al.: Attention is all you need. In: NIPS (2017)

Zhang, J., Zhao, Y., Saleh, M., Liu, P.J.: PEGASUS: pre-training with extracted gap-sentences for abstractive summarization. In: ICML (2020)

Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Núñez-Robinson, D., Talavera-Montalto, J., Ugarte, W. (2022). A Comparative Analysis on the Summarization of Legal Texts Using Transformer Models. In: Guarda, T., Portela, F., Augusto, M.F. (eds) Advanced Research in Technologies, Information, Innovation and Sustainability. ARTIIS 2022. Communications in Computer and Information Science, vol 1675. Springer, Cham. https://doi.org/10.1007/978-3-031-20319-0_28

DOI: https://doi.org/10.1007/978-3-031-20319-0_28

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-20318-3

Online ISBN: 978-3-031-20319-0

eBook Packages: Computer Science, Computer Science (R0)