Abstract

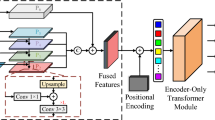

Vision transformers (ViTs) are changing the landscape of object detection approaches. A natural usage of ViTs in detection is to replace the CNN-based backbone with a transformer-based backbone, which is straightforward and effective, at the price of a considerable computation burden for inference. A more subtle usage is the DETR family, which eliminates the need for many hand-designed components in object detection but introduces a decoder that takes an extra-long time to converge. As a result, transformer-based object detection cannot prevail in large-scale applications. To overcome these issues, we propose a novel decoder-free fully transformer-based (DFFT) object detector that, for the first time, achieves high efficiency in both training and inference stages. We simplify object detection into an encoder-only single-level anchor-based dense prediction problem by centering around two entry points: 1) eliminate the training-inefficient decoder and leverage two strong encoders to preserve the accuracy of single-level feature map prediction; 2) explore low-level semantic features for the detection task with limited computational resources. In particular, we design a novel lightweight detection-oriented transformer backbone that efficiently captures low-level features with rich semantics based on a well-conceived ablation study. Extensive experiments on the MS COCO benchmark demonstrate that \(\text{DFFT}_{\text{SMALL}}\) outperforms DETR by \(2.5\%\) AP with \(28\%\) computation cost reduction and more than \(10\times \) fewer training epochs. Compared with the cutting-edge anchor-based detector RetinaNet, \(\text{DFFT}_{\text{SMALL}}\) obtains over \(5.5\%\) AP gain while cutting down \(70\%\) computation cost. The code is available at https://github.com/peixianchen/DFFT.

P. Chen and M. Zhang—Equal Contribution.

© 2022 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Chen, P. et al. (2022). Efficient Decoder-Free Object Detection with Transformers. In: Avidan, S., Brostow, G., Cissé, M., Farinella, G.M., Hassner, T. (eds) Computer Vision – ECCV 2022. ECCV 2022. Lecture Notes in Computer Science, vol 13670. Springer, Cham. https://doi.org/10.1007/978-3-031-20080-9_5

DOI: https://doi.org/10.1007/978-3-031-20080-9_5

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-20079-3

Online ISBN: 978-3-031-20080-9

eBook Packages: Computer Science (R0)