Abstract

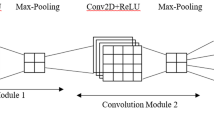

Rising living standards have sharply increased the amount of household garbage produced each day, to the point where waste separation can no longer be ignored. Two problems stand out: manual waste classification is time-consuming and laborious, and people make classification errors when they lack the relevant knowledge. To address these issues, we propose YOLO-CG, a waste classification network for campus scenes that is optimized from the YOLOv5 network structure. First, drawing on the optimization ideas behind Transformer performance improvements, YOLO-CG uses a backbone built by stacking ConvNeXt blocks in a 3:3:9:3 ratio, together with large convolution kernels and other adjustments, which raises the mean average precision (mAP) of the network model by 5%. Second, to maintain the original accuracy while reducing the number of parameters, we introduce a cheap operation with reduced computational cost: a simple 3 × 3 convolution that generates redundant feature maps at low cost. This reduces the parameter count by 12% while also increasing the mAP. Both theoretical analysis and experiments demonstrate the effectiveness of the improved network model.
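The parameter saving from a cheap operation can be illustrated with simple arithmetic. The sketch below is not the YOLO-CG implementation. It assumes a Ghost-style module in which a primary convolution produces only a fraction of the output channels and a depthwise 3 × 3 convolution derives the remaining (redundant) feature maps from them. The function names, the `ratio` parameter, and the channel sizes are hypothetical choices made for illustration.

```python
def conv_params(c_in: int, c_out: int, k: int) -> int:
    """Weight count of a standard k x k convolution (bias omitted)."""
    return c_in * c_out * k * k


def ghost_params(c_in: int, c_out: int, k: int, ratio: int = 2, cheap_k: int = 3) -> int:
    """Weight count of an assumed Ghost-style module (bias omitted).

    A primary k x k convolution produces c_out // ratio "intrinsic" maps.
    Each intrinsic map then yields (ratio - 1) extra maps through a
    depthwise cheap_k x cheap_k convolution, the "cheap operation".
    """
    if c_out % ratio != 0:
        raise ValueError("c_out must be divisible by ratio")
    intrinsic = c_out // ratio
    primary = conv_params(c_in, intrinsic, k)
    # Depthwise filters see one input channel each, so no c_in factor here.
    cheap = intrinsic * (ratio - 1) * cheap_k * cheap_k
    return primary + cheap


if __name__ == "__main__":
    # Hypothetical layer: 128 input channels, 256 output channels, 3 x 3 kernel.
    standard = conv_params(128, 256, 3)
    ghost = ghost_params(128, 256, 3)
    print(f"standard: {standard}, ghost-style: {ghost}, "
          f"fraction kept: {ghost / standard:.3f}")
```

For this hypothetical layer the Ghost-style module keeps roughly half of the weights. The saving across a whole network is smaller than the per-layer figure whenever only some layers are replaced, which is consistent with a network-level reduction such as the 12% reported above.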

Copyright information

© 2022 The Author(s), under exclusive license to Springer Nature Switzerland AG

About this paper

Cite this paper

Hu, F., Qian, P., Jiang, Y., Yao, J. (2022). An Improved Waste Detection and Classification Model Based on YOLOV5. In: Huang, DS., Jo, KH., Jing, J., Premaratne, P., Bevilacqua, V., Hussain, A. (eds) Intelligent Computing Methodologies. ICIC 2022. Lecture Notes in Computer Science, vol 13395. Springer, Cham. https://doi.org/10.1007/978-3-031-13832-4_61

DOI: https://doi.org/10.1007/978-3-031-13832-4_61

Published:

Publisher Name: Springer, Cham

Print ISBN: 978-3-031-13831-7

Online ISBN: 978-3-031-13832-4

eBook Packages: Computer Science, Computer Science (R0)
