Abstract

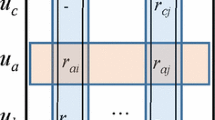

Collaborative Filtering (CF) is an important building block of recommendation systems. Alternating Least Squares (ALS) is the most popular algorithm used in CF models to compute the latent-factor matrix factorization. Parallel ALS on Hadoop is widely used in the era of big data. However, existing work on the computational efficiency of parallel ALS on Hadoop has two defects: the data distribution is imbalanced, and fine-grained parallel processing of the rating data is lacking. To address these issues, we propose an integrated optimized solution. The solution first optimizes the rating data partition by considering both the number of data records involved and the size of the partitioned data. Then, multithread-based fine-grained parallelism is introduced to process the rating data records within a map task concurrently. Experimental results demonstrate that our solution can reduce the overall runtime of Hadoop ALS by up to 82.17%.
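The abstract does not give the details of either optimization, so the following is only a minimal Python sketch of the two ideas under stated assumptions, not the authors' implementation. It assumes rating data arrives as blocks described by a hypothetical tuple `(block_id, num_records, num_bytes)`, balances them with a simple greedy heuristic whose cost mixes record count and byte size (the `size_weight` parameter is invented for illustration), and then processes the records of one simulated map task with a thread pool. The names `balanced_partition` and `process_partition` are hypothetical.

```python
import heapq
from concurrent.futures import ThreadPoolExecutor


def balanced_partition(blocks, num_partitions, size_weight=0.5):
    """Greedily assign blocks to partitions so that loads stay even.

    blocks: list of (block_id, num_records, num_bytes) tuples (assumed layout).
    The cost of a block blends its share of all records with its share of
    all bytes; size_weight is an illustrative knob, not taken from the paper.
    """
    total_records = sum(b[1] for b in blocks) or 1
    total_bytes = sum(b[2] for b in blocks) or 1

    def cost(block):
        _, n_records, n_bytes = block
        return ((1 - size_weight) * n_records / total_records
                + size_weight * n_bytes / total_bytes)

    # Min-heap of (current_load, partition_index): the lightest partition
    # is always popped first.
    heap = [(0.0, p) for p in range(num_partitions)]
    heapq.heapify(heap)
    assignment = [[] for _ in range(num_partitions)]

    # Place the most expensive blocks first, each on the lightest partition.
    for block in sorted(blocks, key=cost, reverse=True):
        load, p = heapq.heappop(heap)
        assignment[p].append(block[0])
        heapq.heappush(heap, (load + cost(block), p))
    return assignment


def process_partition(records, handle_record, num_threads=4):
    """Process the records of one (simulated) map task concurrently.

    Results are returned in the same order as the input records.
    """
    with ThreadPoolExecutor(max_workers=num_threads) as pool:
        return list(pool.map(handle_record, records))


if __name__ == "__main__":
    blocks = [("a", 100, 1000), ("b", 100, 1000), ("c", 50, 500), ("d", 50, 500)]
    print(balanced_partition(blocks, num_partitions=2))
    print(process_partition([1, 2, 3], lambda r: r * 2, num_threads=2))
```

In CPython the thread pool mainly illustrates the control flow, since the global interpreter lock limits CPU-bound speedup; in a JVM-based Hadoop map task, threads can run on separate cores. Hadoop also provides a `MultithreadedMapper` class, but the abstract does not say whether the authors rely on it.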

Supported by the 2019 BenchCouncil AI System and Algorithm Challenge.

Copyright information

© 2020 Springer Nature Switzerland AG

About this paper

Cite this paper

Liang, Y., Zeng, S., Liang, Y., Chen, K. (2020). Accelerating Parallel ALS for Collaborative Filtering on Hadoop. In: Gao, W., Zhan, J., Fox, G., Lu, X., Stanzione, D. (eds) Benchmarking, Measuring, and Optimizing. Bench 2019. Lecture Notes in Computer Science, vol 12093. Springer, Cham. https://doi.org/10.1007/978-3-030-49556-5_13

DOI: https://doi.org/10.1007/978-3-030-49556-5_13

Publisher Name: Springer, Cham

Print ISBN: 978-3-030-49555-8

Online ISBN: 978-3-030-49556-5

eBook Packages: Computer Science (R0)