Abstract

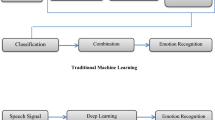

This chapter reviews the literature on the databases, features, and pattern classifiers used for emotion recognition from speech. The three types of emotional speech databases (simulated, elicited, and natural) are critically reviewed from a research point of view. Existing emotion recognition systems developed using excitation source, vocal tract system, and prosodic features are briefly reviewed, and the basic pattern classification models used for discriminating emotions are discussed. The chapter concludes with the motivation for and scope of the work presented in this book.

Copyright information

© 2013 Springer Science+Business Media New York

About this chapter

Cite this chapter

Krothapalli, S.R., Koolagudi, S.G. (2013). Speech Emotion Recognition: A Review. In: Emotion Recognition using Speech Features. SpringerBriefs in Electrical and Computer Engineering. Springer, New York, NY. https://doi.org/10.1007/978-1-4614-5143-3_2

DOI: https://doi.org/10.1007/978-1-4614-5143-3_2

Publisher Name: Springer, New York, NY

Print ISBN: 978-1-4614-5142-6

Online ISBN: 978-1-4614-5143-3

eBook Packages: Engineering, Engineering (R0)