Abstract

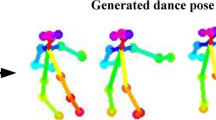

In this paper, we introduce MIDGET, a MusIc conditioned 3D Dance GEneraTion model built on a dance-motion Vector Quantised Variational AutoEncoder (VQ-VAE) and a Motion Generative Pre-Training (GPT) model, to generate vibrant, high-quality dances that match the music rhythm. To tackle challenges in the field, we introduce three new components: 1) a pre-trained memory codebook, based on the Motion VQ-VAE model, that stores different human pose codes; 2) a Motion GPT model that generates pose codes using music and motion encoders; and 3) a simple framework for music feature extraction. We compare against existing state-of-the-art models and perform ablation experiments on AIST++, the largest publicly available music-dance dataset. Experiments demonstrate that the proposed framework achieves state-of-the-art performance in motion quality and in its alignment with the music.
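To make the idea of a codebook of pose codes concrete, the following is a minimal sketch of nearest-neighbour vector quantisation, the lookup step common to VQ-VAE models. It is not the authors' implementation: the function names, the 2-D toy vectors, and the three-entry codebook are all hypothetical and chosen only for illustration.

```python
from typing import List, Sequence


def squared_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def quantise(features: Sequence[Sequence[float]],
             codebook: Sequence[Sequence[float]]) -> List[int]:
    """Map each feature vector to the index of its nearest codebook entry."""
    return [
        min(range(len(codebook)),
            key=lambda k: squared_distance(f, codebook[k]))
        for f in features
    ]


def decode(codes: Sequence[int],
           codebook: Sequence[Sequence[float]]) -> List[List[float]]:
    """Replace each discrete code with its codebook vector."""
    return [list(codebook[k]) for k in codes]


# Toy codebook of three 2-D "pose codes" (hypothetical values).
codebook = [[0.0, 0.0], [1.0, 1.0], [2.0, 0.0]]

# Hypothetical encoder outputs for four motion frames.
features = [[0.1, -0.1], [0.9, 1.2], [1.8, 0.1], [0.4, 0.4]]

codes = quantise(features, codebook)       # discrete pose-code indices
reconstruction = decode(codes, codebook)   # quantised vectors fed to a decoder
print(codes)
```

In a setup of this kind, a sequence model such as a GPT would predict the discrete indices in `codes` conditioned on music features, and a decoder would turn the looked-up codebook vectors back into motion.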

© 2024 The Author(s), under exclusive license to Springer Nature Singapore Pte Ltd.

About this paper

Cite this paper

Wang, J., Mao, W., Liu, M. (2024). MIDGET: Music Conditioned 3D Dance Generation. In: Liu, T., Webb, G., Yue, L., Wang, D. (eds) AI 2023: Advances in Artificial Intelligence. AI 2023. Lecture Notes in Computer Science, vol 14471. Springer, Singapore. https://doi.org/10.1007/978-981-99-8388-9_23

DOI: https://doi.org/10.1007/978-981-99-8388-9_23

Published:

Publisher Name: Springer, Singapore

Print ISBN: 978-981-99-8387-2

Online ISBN: 978-981-99-8388-9

eBook Packages: Computer Science, Computer Science (R0)